"Problems faced while collecting log data on EC2 manually, and finding a solution for easily querying database logs in AWS". I looked for solutions from a security and cost perspective. I installed and configured the Kinesis Agent to stream logs, and used Amazon Athena to query the log data. I created a delivery stream with Direct PUT as the source and Amazon S3 as the destination. From a security perspective, Amazon Kinesis Data Firehose provides an encryption option, and the S3 bucket also provides encryption. From a cost perspective, you pay for the logs ingested into Kinesis Data Firehose and for S3 bucket charges. With Athena, you pay only for the queries you run (that is, you are charged for the amount of data scanned).

Amazon Kinesis Data Firehose is a fully managed service for delivering real-time streaming data to destinations such as Amazon Simple Storage Service (Amazon S3), Amazon Redshift, Amazon OpenSearch Service, Splunk, and any custom HTTP endpoint or HTTP endpoints owned by supported third-party service providers, including Datadog, Dynatrace, LogicMonitor, MongoDB, New Relic, and Sumo Logic. Kinesis Data Firehose is part of the Kinesis streaming data platform, along with Kinesis Data Streams, Kinesis Video Streams, and Amazon Kinesis Data Analytics. With Kinesis Data Firehose, you don’t need to write applications or manage resources. You configure your data producers to send data to Kinesis Data Firehose, and it automatically delivers the data to the destination that you specified. You can also configure Kinesis Data Firehose to transform your data before delivering it.

Amazon Athena is an interactive query service that makes it easy to analyze data directly in Amazon Simple Storage Service (Amazon S3) using standard SQL. With a few actions in the AWS Management Console, you can point Athena at your data stored in Amazon S3 and begin using standard SQL to run ad-hoc queries and get results in seconds. Athena is serverless, so there is no infrastructure to set up or manage, and you pay only for the queries you run. Athena scales automatically - running queries in parallel - so results are fast, even with large datasets and complex queries.

In this post, you will learn how to collect log data using the Kinesis Agent and query it using Athena. I use an EC2 server to collect the streaming log data with the Kinesis Agent, and the Athena service to query the logs.

Prerequisites

You’ll need an Amazon EC2 server for this post. Getting started with Amazon EC2 provides instructions on how to launch an EC2 server.

Architecture Overview

The architecture diagram shows the overall deployment architecture with data flow, application server, Amazon Kinesis Data Firehose, Amazon Simple Storage Service and Amazon Athena.

Solution overview

The blog post consists of the following phases:

- Create a delivery stream using Amazon Kinesis Data Firehose

- Installing and configuring the Kinesis Agent Application

- Querying the log data using Amazon Athena

I have an EC2 server, as shown below:

Phase 1: Create a delivery stream using Amazon Kinesis Data Firehose

- Open the Amazon Kinesis console and select Kinesis Data Firehose.

- Create a delivery stream, selecting Direct PUT as the source and Amazon S3 as the destination.

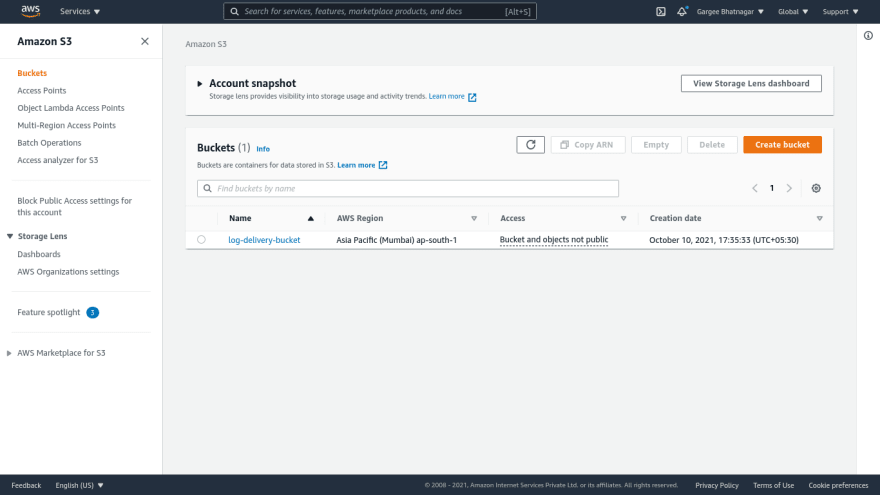

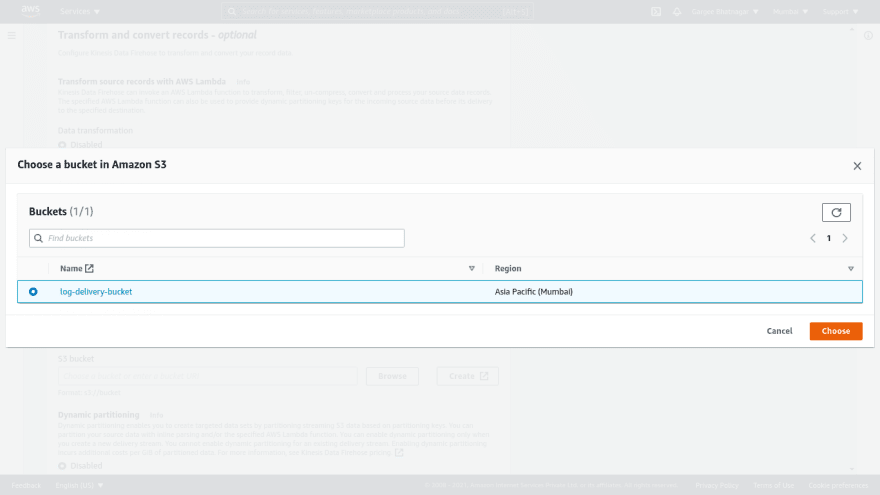

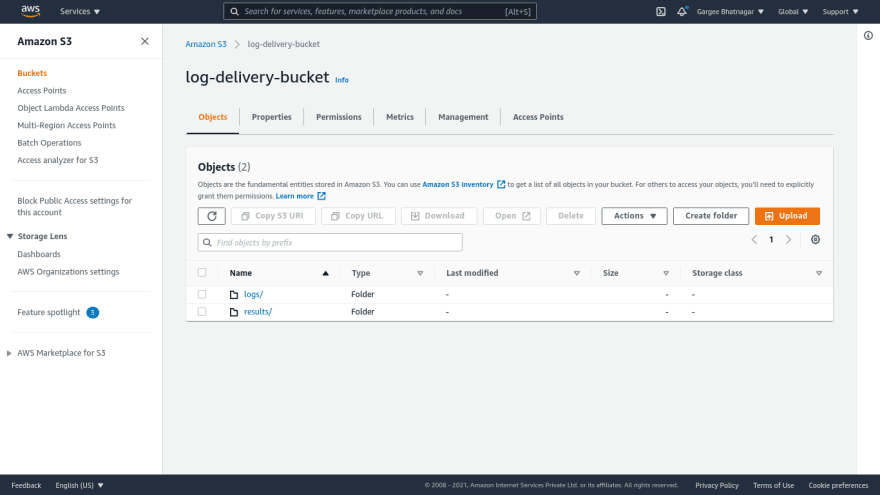

- Name the delivery stream “log-delivery-stream”. Also create an S3 bucket named “log-delivery-bucket” with the default options. In the destination settings, choose the S3 bucket you created, and set the S3 bucket prefix to “logs/” and the S3 bucket error output prefix to “errors/”.

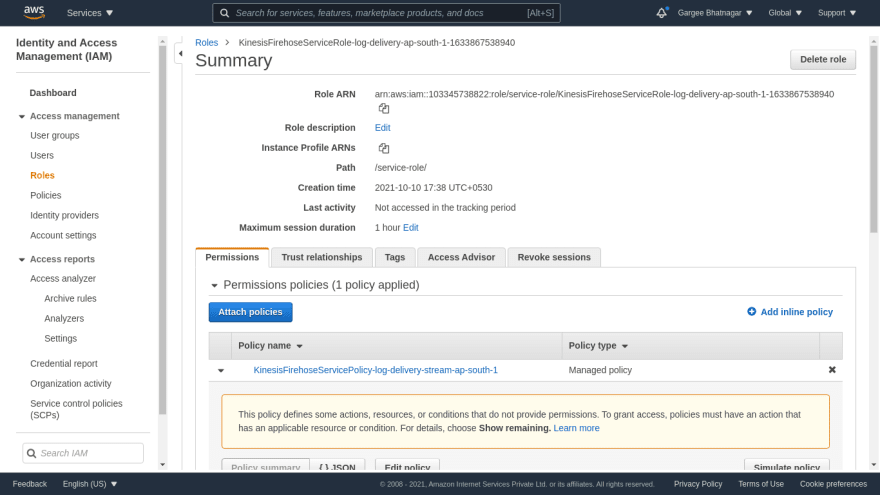

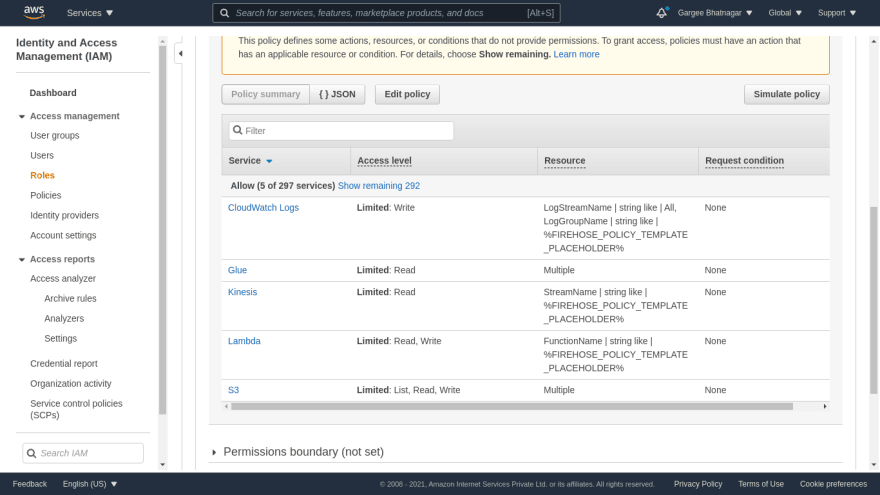

- Set the buffer interval to “60 seconds”, and keep the default option to create or update the IAM role. Leave all other settings at their defaults, or change them as required. Then choose the create delivery stream option. Once the stream is created, its status changes to active.

- You can view the IAM role policy permissions defined for the creation of the delivery stream.
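The console steps above could also be scripted. The following is a minimal sketch, not the method used in this post: it builds the request for the Firehose `create_delivery_stream` API with the same stream name, bucket, prefixes, and buffer interval. The role ARN and region are assumptions; unlike the console, this call does not create the IAM role for you, so the role must already exist. The helper names are hypothetical.

```python
def build_delivery_stream_request(role_arn: str) -> dict:
    """Build keyword arguments mirroring the console settings used in this post."""
    return {
        "DeliveryStreamName": "log-delivery-stream",
        "DeliveryStreamType": "DirectPut",  # source: Direct PUT
        "ExtendedS3DestinationConfiguration": {
            "RoleARN": role_arn,  # assumption: an existing role Firehose can assume
            "BucketARN": "arn:aws:s3:::log-delivery-bucket",
            "Prefix": "logs/",
            "ErrorOutputPrefix": "errors/",
            "BufferingHints": {"IntervalInSeconds": 60},
        },
    }


def create_delivery_stream(role_arn: str, region: str = "us-east-1") -> dict:
    """Send the request with boto3 (needs boto3 installed and AWS credentials)."""
    import boto3  # imported here so the builder above works without boto3

    client = boto3.client("firehose", region_name=region)  # region is an assumption
    return client.create_delivery_stream(**build_delivery_stream_request(role_arn))
```

Calling `create_delivery_stream("<your-role-arn>")` would submit the request; the builder alone can be used to review the settings before anything is created.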

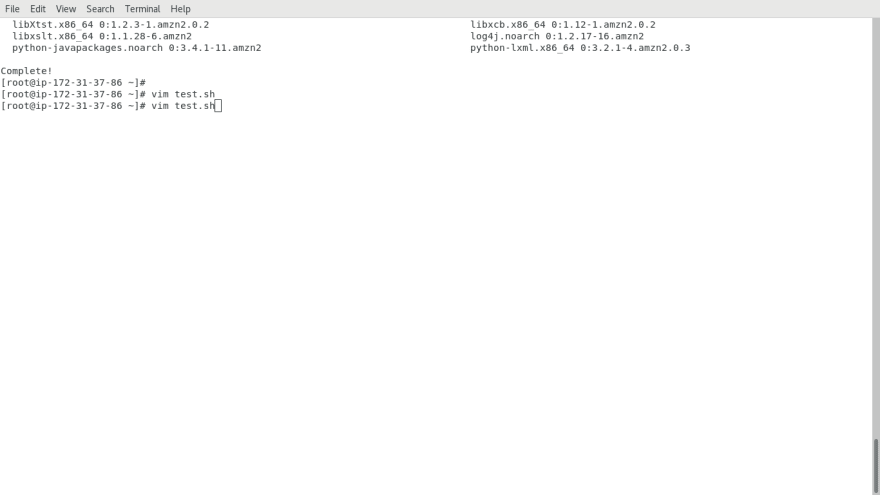

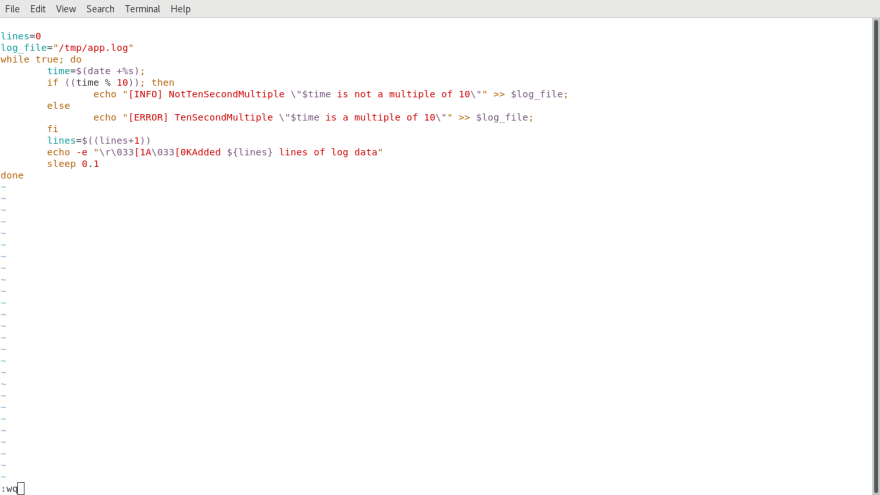

Phase 2: Installing and configuring the Kinesis Agent Application

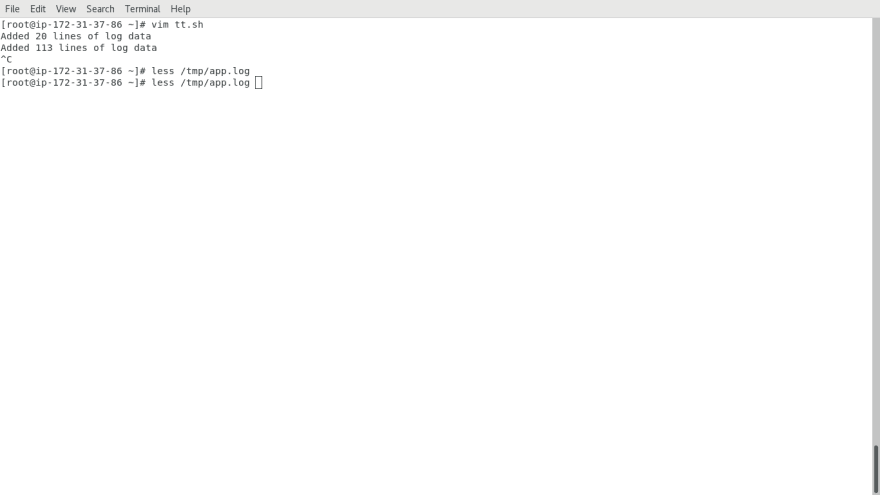

- Log in to the EC2 server and run the command to install the streaming data agent. Create a script that configures CloudWatch metrics, the Firehose endpoint, and a flow with the file pattern and delivery stream. Run the script and start the aws-kinesis-agent service.

- Create another script that appends log lines to a file named /tmp/app.log, and check that the lines were added to the log file. You can check the Kinesis Agent's logs using the tail command.
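As a rough illustration of the agent configuration described above, the sketch below generates the JSON that the Kinesis Agent reads (per the AWS documentation, from `/etc/aws-kinesis/agent.json`). The keys `cloudwatch.emitMetrics`, `firehose.endpoint`, `flows`, `filePattern`, and `deliveryStream` are the agent's documented settings; the region and the trailing `*` in the file pattern are assumptions, and the function names are hypothetical.

```python
import json


def build_agent_config(region: str = "us-east-1") -> dict:
    """Return a Kinesis Agent configuration with a single Firehose flow."""
    return {
        "cloudwatch.emitMetrics": True,
        # Assumption: the delivery stream lives in this region.
        "firehose.endpoint": f"firehose.{region}.amazonaws.com",
        "flows": [
            {
                # Assumption: also match rotated files such as /tmp/app.log.1
                "filePattern": "/tmp/app.log*",
                "deliveryStream": "log-delivery-stream",
            }
        ],
    }


def render_agent_config(region: str = "us-east-1") -> str:
    """Serialize the configuration as indented JSON text."""
    return json.dumps(build_agent_config(region), indent=2)
```

On Amazon Linux, the agent is typically installed with `sudo yum install -y aws-kinesis-agent` and started with `sudo service aws-kinesis-agent start`; its own log is usually found at `/var/log/aws-kinesis-agent/aws-kinesis-agent.log`. Check these against your distribution before relying on them.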

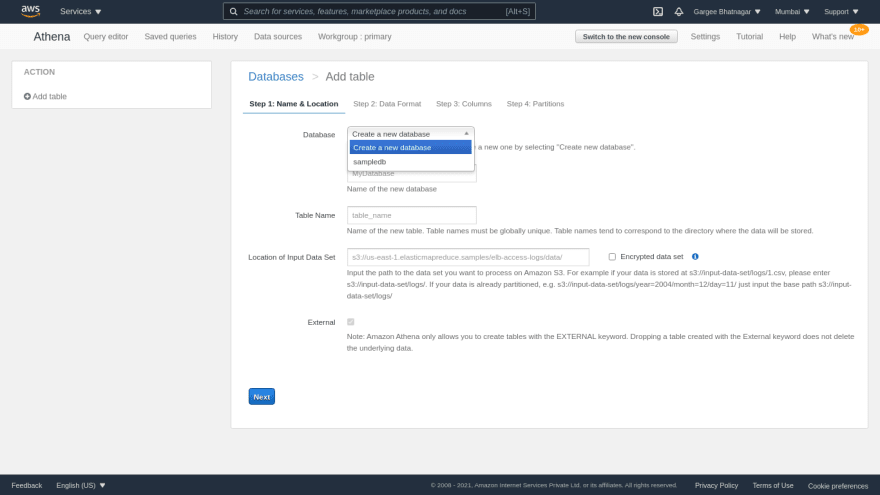

Phase 3: Querying the log data using Amazon Athena

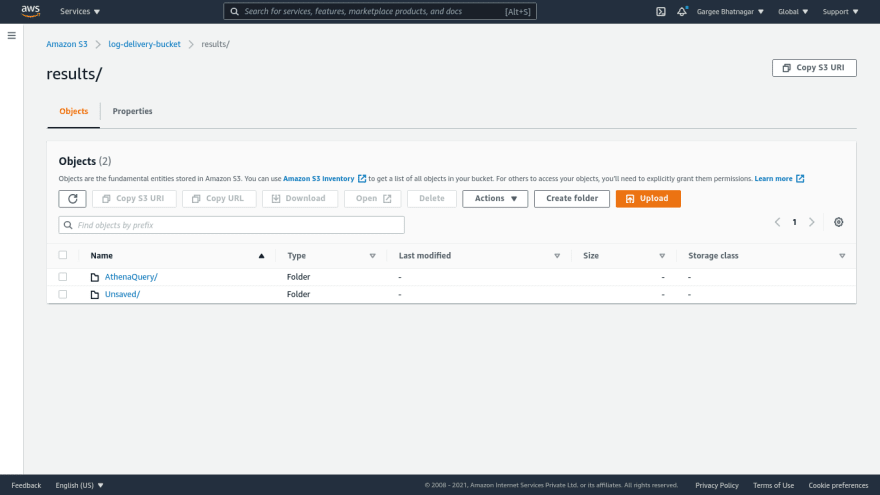

- Open the Amazon Athena console, and create a folder named “results” in the S3 bucket. Go to the settings option in the Athena console, select the S3 location of the folder you created, and save it.

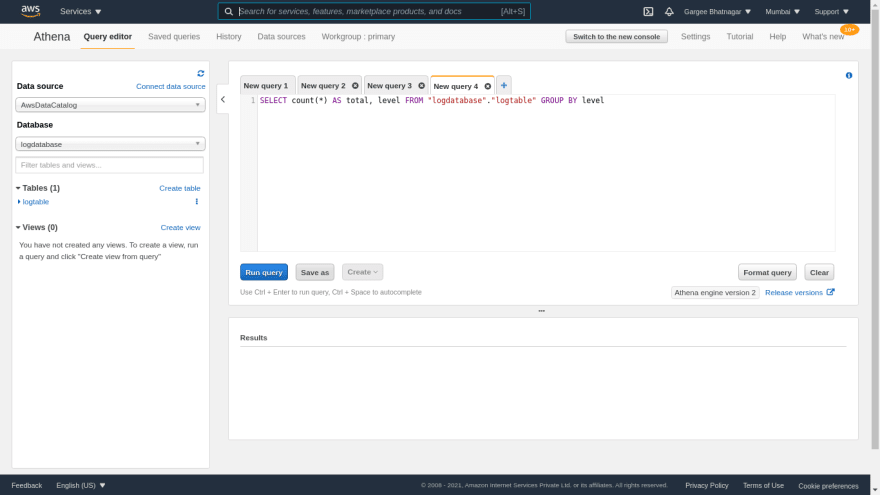

- Click on connect data source, then choose “Query data in Amazon S3” as the data location and “AWS Glue Data Catalog” as the metadata catalog. On the next page, choose “AWS Glue Data Catalog in this account” and “create a table using the Athena table wizard”. Click on continue to add table...

- Choose the option to create a new database, and give the database name and table name. Create a logs folder in the S3 bucket and select that bucket location as the input data set. Then add the data format and regex. Finally, add the column names and column types, and create the table.

- The query results show the database and tables created in Athena. You can save a query in the Athena console with the “save as” option. You can also view the saved queries and the history (query submitted time, query engine version, and so on). You can create a workgroup based on users, teams, workloads, and applications. In the S3 bucket, you can view the output of the queries you ran.
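The table wizard ultimately produces a `CREATE EXTERNAL TABLE` statement. The sketch below shows roughly what an equivalent statement could look like when the data format is a regex, using Hive's `RegexSerDe`. The post does not state the actual regex, columns, database name, or table name, so everything here (`LOG_REGEX`, `COLUMNS`, `logs_db`, `app_logs`) is a hypothetical example for a "timestamp level message" line. With `RegexSerDe`, each capture group maps to one column, so the two counts must match.

```python
import re

# Hypothetical layout: "<timestamp> <level> <free-text message>"
LOG_REGEX = r"^(\S+) (\S+) (.*)$"
COLUMNS = [("event_time", "string"), ("level", "string"), ("message", "string")]


def build_create_table_ddl(database: str, table: str, location: str) -> str:
    """Build an Athena DDL statement that parses log lines with RegexSerDe."""
    cols = ",\n  ".join(f"`{name}` {col_type}" for name, col_type in COLUMNS)
    # Backslashes are doubled because the regex sits inside a SQL string literal.
    serde_regex = LOG_REGEX.replace("\\", "\\\\")
    return (
        f"CREATE EXTERNAL TABLE IF NOT EXISTS `{database}`.`{table}` (\n  {cols}\n)\n"
        "ROW FORMAT SERDE 'org.apache.hadoop.hive.serde2.RegexSerDe'\n"
        f"WITH SERDEPROPERTIES ('input.regex' = '{serde_regex}')\n"
        f"LOCATION '{location}'"
    )


def parse_line(line: str):
    """Apply the same regex locally to check it before creating the table."""
    match = re.match(LOG_REGEX, line)
    return match.groups() if match else None
```

Once such a table exists, a query like `SELECT * FROM logs_db.app_logs LIMIT 10` can be run from the Athena query editor. Testing the regex locally with `parse_line` first is cheaper than debugging empty columns in Athena.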

Clean-up

Terminate EC2 Server.

Delete Amazon Kinesis Data Firehose - Delivery Stream.

Delete the S3 bucket.

Pricing

Here is the pricing and estimated cost of this example.

Cost of EC2 = $0.0124 per hour = $0.01.

Cost of Amazon Kinesis Data Firehose = $0.034 per GB of data ingested = $0.31 (9.210 GB).

Cost of S3 bucket = $0.0

Cost of Athena = $0.0

Total Cost = $(0.01+0.31+0.0+0.0) = $0.32
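The arithmetic above can be reproduced with a short sketch. The rates and the 9.210 GB volume come from the figures listed; the one hour of EC2 usage is an assumption inferred from the $0.01 result, and the function names are hypothetical. `Decimal` is used so that rounding to cents behaves predictably.

```python
from decimal import Decimal, ROUND_HALF_UP

CENT = Decimal("0.01")


def to_cents(amount: Decimal) -> Decimal:
    """Round a dollar amount to two decimal places."""
    return amount.quantize(CENT, rounding=ROUND_HALF_UP)


def estimate_cost(ec2_hours: Decimal, firehose_gb: Decimal) -> dict:
    """Estimate the cost of this example from the rates quoted in the post."""
    costs = {
        "ec2": to_cents(Decimal("0.0124") * ec2_hours),         # $ per hour
        "firehose": to_cents(Decimal("0.034") * firehose_gb),   # $ per GB ingested
        "s3": Decimal("0.00"),       # as reported in the post
        "athena": Decimal("0.00"),   # as reported in the post
    }
    costs["total"] = sum(costs.values(), Decimal("0.00"))
    return costs


# Assumption: the EC2 server ran for one hour.
costs = estimate_cost(Decimal("1"), Decimal("9.210"))
print(costs["total"])
```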

Summary

In this post, I showed you how to collect log data using the Kinesis Agent and query it using Athena.

For more details on Amazon Kinesis Data Firehose, check out Get started with Amazon Kinesis Data Firehose, or open the Amazon Kinesis Data Firehose console. To learn more, read the Amazon Kinesis Data Firehose documentation. For more details on Amazon Athena, check out Get started with Amazon Athena, or open the Amazon Athena console. To learn more, read the Amazon Athena documentation.

Thanks for reading!

Connect with me: Linkedin
