A platform-agnostic way of accessing credentials in Python.


Even though AWS enables fine-grained access control via IAM roles, sometimes in our scripts we need to use credentials to external resources, not related to AWS, such as API keys, database credentials, or passwords of any kind. There are a myriad of ways of handling such sensitive data. In this article, I'll show you an incredibly simple and effective way to manage that using AWS and Python.

Popular ways of managing credentials

Depending on your execution platform (Kubernetes, on-prem servers, distributed cloud clusters) or version control hosting platform (GitHub, Bitbucket, GitLab, Gitea, SVN, ...), you may use a different method to manage confidential access data. Here is a list of the most common ways to handle credentials that I've heard about so far:

- environment variables,

- ingesting credentials as part of CI/CD deployment,

- leveraging specific plugins from a development tool, e.g. the Serverless Credentials Plugin, or ingesting environment variables in a Run/Debug configuration in PyCharm,

- storing credentials within the workflow orchestration solution, e.g. Airflow Connections or Prefect Secrets,

- storing credentials as Kubernetes or Docker secrets objects,

- leveraging tools such as HashiCorp Vault.

All of the above solutions are perfectly feasible, but in this article, I want to demonstrate an alternative solution by leveraging AWS Secrets Manager. This method will be secure (encrypted using AWS KMS) and will work the same way regardless of whether you run your Python script locally, in AWS Lambda, or on a standalone server provided that your execution platform is authorized to access AWS Secrets Manager.

Our use case

We will perform the following steps:

- create an API key on the Alpha Vantage platform so that we can get stock market data from this API,

- store the API key inside of AWS Secrets Manager,

- retrieve this API key within our script by using just two lines of Python code,

- use the key to get the most recent Apple stock market data,

- build an AWS Lambda function and test the same functionality there.

Implementation --- PoC showing this method

Create the API Key

If you want to follow along, go to https://www.alphavantage.co/ and get your API key.

AWS Secrets Manager

First, make sure that you have configured the AWS CLI with an IAM user that is allowed to interact with AWS Secrets Manager. Then, you can store the secret using the following simple command in your terminal:
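
On the CLI side, this step corresponds to the `aws secretsmanager create-secret` subcommand. If you prefer to stay in Python, the same operation can be sketched with boto3. Note the assumptions: the secret name `alphavantage` and the JSON field `ALPHAVANTAGE_API_KEY` are placeholders I picked for illustration, and the client is passed in as an argument so that the function can be tried out without AWS access.

```python
import json


def store_secret(client, name, payload):
    """Create a new secret whose value is `payload` serialized as JSON.

    `client` is expected to behave like boto3.client("secretsmanager").
    Returns the ARN reported by the service.
    """
    response = client.create_secret(Name=name, SecretString=json.dumps(payload))
    return response["ARN"]


# Usage (needs boto3 and configured AWS credentials):
#   import boto3
#   store_secret(
#       boto3.client("secretsmanager"),
#       "alphavantage",                             # hypothetical secret name
#       {"ALPHAVANTAGE_API_KEY": "your-api-key"},   # hypothetical field name
#   )
```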

To see whether it worked, you can list all secrets that you have in your account using:
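
The CLI counterpart here is `aws secretsmanager list-secrets`. As a rough Python equivalent, the sketch below collects the names of all secrets through boto3's `list_secrets` call and follows the `NextToken` pagination marker. The helper name `list_secret_names` is my own, and the client is again injected rather than created inside the function.

```python
def list_secret_names(client):
    """Return the names of all secrets visible to `client`.

    `client` is expected to behave like boto3.client("secretsmanager").
    Results are paginated, so we keep requesting pages until the
    response no longer carries a NextToken.
    """
    names = []
    kwargs = {}
    while True:
        page = client.list_secrets(**kwargs)
        names.extend(entry["Name"] for entry in page.get("SecretList", []))
        token = page.get("NextToken")
        if not token:
            return names
        kwargs["NextToken"] = token


# Usage (needs boto3 and configured AWS credentials):
#   import boto3
#   print(list_secret_names(boto3.client("secretsmanager")))
```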

If your credentials change later (e.g. if you changed your password), updating them is as simple as the following command:
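
On the CLI, `aws secretsmanager put-secret-value` stores a new version of an existing secret. A minimal boto3 sketch of the same idea follows; the helper name `update_secret` and the example secret and field names are assumptions for illustration.

```python
import json


def update_secret(client, name, payload):
    """Store a new version of an existing secret and return its version ID.

    `client` is expected to behave like boto3.client("secretsmanager").
    """
    response = client.put_secret_value(
        SecretId=name,
        SecretString=json.dumps(payload),
    )
    return response["VersionId"]


# Usage (needs boto3 and configured AWS credentials):
#   import boto3
#   update_secret(
#       boto3.client("secretsmanager"),
#       "alphavantage",                            # hypothetical secret name
#       {"ALPHAVANTAGE_API_KEY": "your-new-key"},  # hypothetical field name
#   )
```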

Retrieve the credentials using awswrangler

AWS Secrets Manager allows storing credentials in a JSON string. This means that a single secret could hold your entire database connection string, i.e., your user name, password, hostname, port, database name, etc.

The awswrangler package offers a method that deserializes this data into a Python dictionary. When combined with **kwargs, you could unpack all the credentials from a dictionary straight into your Python function performing authentication.
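
To make the `**kwargs` idea concrete, here is a small self-contained sketch. The `connect` function is only a stand-in for a real database driver, and the host, user, and password values are made up; the point is that the keys of the JSON secret line up with the function's parameter names.

```python
import json


def connect(host, port, dbname, user, password):
    """Stand-in for a real driver's connect(); returns a DSN-like string.

    The password is accepted but deliberately left out of the result.
    """
    return f"{user}@{host}:{port}/{dbname}"


# What a JSON secret might hold (hypothetical values)
secret_string = (
    '{"host": "db.example.com", "port": 5432, "dbname": "sales", '
    '"user": "etl", "password": "s3cr3t"}'
)

credentials = json.loads(secret_string)  # JSON string -> Python dict
dsn = connect(**credentials)             # dict keys become keyword arguments
print(dsn)  # etl@db.example.com:5432/sales
```

If the secret contains a key that the function does not accept, Python raises a `TypeError`, so it pays off to keep the secret's field names in sync with the function signature.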

My requirements.txt looks as follows (using Python 3.8):

Then, to retrieve the secret stored using the AWS CLI, you just need these two lines:
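
In essence, the two lines are the awswrangler import and a call to `wr.secretsmanager.get_secret_json`. The sketch below wraps them in a small helper (the name `load_secret` is mine) and imports awswrangler inside the function, so the snippet can be loaded even where the package is not installed. The secret name and field name in the usage comment are assumptions.

```python
def load_secret(secret_name):
    """Fetch a JSON secret from AWS Secrets Manager as a Python dict."""
    # Imported lazily: needs the awswrangler package and AWS credentials.
    import awswrangler as wr

    return wr.secretsmanager.get_secret_json(secret_name)


# Usage (hypothetical secret and field names):
#   api_key = load_secret("alphavantage")["ALPHAVANTAGE_API_KEY"]
```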

Using the retrieved credentials to get stock market data

There is a handy Python package called pandas_datareader that lets you easily retrieve data from various sources and store it as a Pandas dataframe. In the example below, we're retrieving Apple stock market data (intraday) for the last two days. Note that we are passing the API key from AWS Secrets Manager to authenticate with the Alpha Vantage data source.
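
A minimal sketch of such a request, assuming the `av-intraday` data source of pandas_datareader and a two-day window counted back from the current time. The helper names `two_day_window` and `fetch_intraday` are my own, and pandas_datareader is imported inside the function so the date logic can be tried without the package.

```python
from datetime import datetime, timedelta


def two_day_window(now=None):
    """Return (start, end) covering the two days up to `now`."""
    end = now or datetime.now()
    return end - timedelta(days=2), end


def fetch_intraday(symbol, api_key, now=None):
    """Retrieve intraday quotes for `symbol` from Alpha Vantage as a dataframe."""
    # Imported lazily: needs pandas_datareader and network access.
    from pandas_datareader import data as web

    start, end = two_day_window(now)
    return web.DataReader(symbol, "av-intraday", start=start, end=end, api_key=api_key)


# Usage, with the API key retrieved from AWS Secrets Manager beforehand:
#   df = fetch_intraday("AAPL", api_key)
#   print(df.tail())
```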

Here is a dataframe that I got:

AWS Lambda function using the credentials

Since we were able to access the credentials on a local machine, the next step is to do the same in AWS Lambda to demonstrate that this method is platform agnostic and works in any environment that can run Python.

Side note: I'm using the new alternative way of packaging an AWS Lambda function as a Docker container image. If you want to learn more about that, have a look at my previous article discussing it in more detail.

I use the following Dockerfile as a basis for my AWS Lambda function:

The script lambda.py inside the src directory looks as follows:
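
A minimal sketch of what such a handler could look like, under several assumptions: the function name `handler`, the secret name `alphavantage`, the field `ALPHAVANTAGE_API_KEY`, and the shape of the returned payload are all placeholders. The third-party imports sit inside the handler only so that the sketch can be loaded without the dependencies; in a deployed function you would normally place them at the top of the module.

```python
import json
from datetime import datetime, timedelta

SECRET_NAME = "alphavantage"            # hypothetical secret name
API_KEY_FIELD = "ALPHAVANTAGE_API_KEY"  # hypothetical field inside the secret


def handler(event, context):
    """Lambda entry point: read the API key, fetch AAPL data, report the row count."""
    import awswrangler as wr
    from pandas_datareader import data as web

    # Same retrieval as on the local machine: no environment-specific code
    api_key = wr.secretsmanager.get_secret_json(SECRET_NAME)[API_KEY_FIELD]

    end = datetime.now()
    start = end - timedelta(days=2)
    df = web.DataReader("AAPL", "av-intraday", start=start, end=end, api_key=api_key)

    # Return only a small summary rather than the full dataframe
    return {
        "statusCode": 200,
        "body": json.dumps({"symbol": "AAPL", "rows": len(df)}),
    }
```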

To build and package the code into a Docker container image, we use the commands:

Finally, we create an ECR repository and push the image to ECR:

Note: Replace 123456789 with your AWS account ID. Also, adjust the AWS region accordingly; I'm using eu-central-1.

We are now ready to build and test our AWS Lambda function in the AWS management console.

Getting insights about our Lambda function

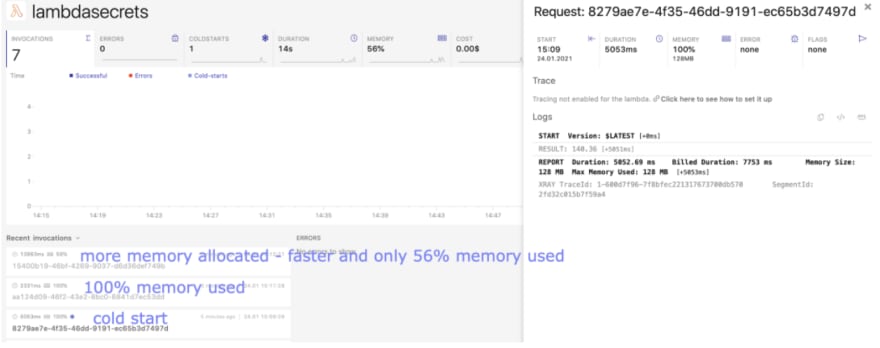

If you are running multiple Lambda function workloads, it's beneficial to consider using an observability platform that will help you keep an overview of all your serverless components. In the example below, I'm using Dashbird to obtain additional information about the Lambda function executed above, such as:

- the execution duration per specific function invocation,

- the cold starts,

- memory utilization,

- number of invocations and percentage of errors across them,

- ...and much more.

Using Dashbird to debug and gain insights about the AWS Lambda function invocations --- image by the author.

You can see in the image above that the first function execution had a cold start. The second one used 100% of memory. Those insights helped me to optimize the resources by increasing the allocated memory. In the subsequent invocation, my function ran faster and didn't max out the total memory capacity.

The benefits of AWS Secrets Manager

Hopefully, you could see how easy it is to store and retrieve your sensitive data using this AWS service. Here is a list of benefits that this method gives you:

- Security --- credentials are encrypted using AWS KMS

- One central place for all credentials --- if you decide to store all your credentials there, you get a single place where all credentials are located. When authorized, you can also view and update the secrets in the AWS management console.

- Accessibility & platform-independent credentials store --- we can access the secrets from a local machine, from serverless functions or containers, or even from on-prem servers, provided that those compute platforms or processes are authorized to access AWS Secrets Manager resources.

- Portability across programming languages --- AWS provides SDKs for various languages so that you can access the same credentials using Java, Node.js, JavaScript, Go, C++, .NET, and more.

- AWS CloudTrail integration --- when CloudTrail logging is enabled, you can keep track of who accessed specific credentials and when, which gives you an audit trail of how your resources are used.

- Granularity in access control --- we can easily grant permissions only for specific credentials to particular users, making it much easier to have an overview of who has access to what.
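
To illustrate the point about granular access control, here is a sketch of an IAM policy document that allows reading exactly one secret and nothing else, built as a Python dict. The helper name `read_only_policy` is mine, and the ARN is a made-up example that reuses the placeholder account ID 123456789 and the eu-central-1 region; substitute the ARN of your own secret.

```python
import json


def read_only_policy(secret_arn):
    """Build an IAM policy document that only permits reading one secret."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Action": ["secretsmanager:GetSecretValue"],
                "Resource": secret_arn,
            }
        ],
    }


# Hypothetical ARN, for illustration only
policy = read_only_policy(
    "arn:aws:secretsmanager:eu-central-1:123456789:secret:alphavantage-AbCdEf"
)
print(json.dumps(policy, indent=2))
```

Attaching a policy like this to a user or role means that a leaked set of credentials exposes a single secret rather than the whole store.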

The potential downsides with AWS Secrets Manager

I have a policy to always provide pros and cons to any technology without sugar-coating anything. These are the risks or downsides I see so far when using this service to manage credentials at enterprise scale:

- If you store all credentials in a single place and you don't give access according to the least-privilege principle, i.e., some super-user has access to all credentials, then when credentials of such super-user get compromised, you risk exposing all secrets. This is only true if you use the service inappropriately, but I still wanted to include it for the sake of completeness.

- Costs --- since you pay for each secret per month, you must be aware that the price can add up if you use the service to store a large number of credentials.

- Trust --- it's still hard to convince some IT managers that cloud services, when configured correctly, can be more secure than on-prem resources. Many of them still don't trust any cloud vendor enough to literally confide their secrets to it.

- Your execution platform must have access to the Secrets Manager itself. This means that you need to either configure an IAM role, or you need to store this single secret some other way. It's not really a downside or risk, but simply, you need to be aware that access to the Secrets Manager needs to be additionally managed in some way, too.
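
To get a feeling for the cost point above, a back-of-the-envelope calculation helps. The per-secret price used here (0.40 USD per month) is an assumed figure for illustration only, so check the current AWS pricing page for your region. Charges for API calls are not included.

```python
from decimal import Decimal


def monthly_storage_cost(num_secrets, price_per_secret):
    """Monthly storage cost for `num_secrets` at `price_per_secret` each.

    Decimal avoids floating-point rounding surprises with money amounts.
    """
    return Decimal(num_secrets) * Decimal(price_per_secret)


# Assumed price per secret per month; verify against current AWS pricing
ASSUMED_PRICE_USD = "0.40"

for count in (10, 500, 5000):
    print(count, "secrets:", monthly_storage_cost(count, ASSUMED_PRICE_USD), "USD/month")
```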

Wrapping up

In this article, we looked at AWS Secrets Manager as a way of managing credentials in Python scripts. We saw how easy it is to put, update, or list secrets using the AWS CLI. We then looked at how we can access those credentials in Python using just two lines of code thanks to the package awswrangler. Additionally, we deployed the script to AWS Lambda to demonstrate that this method is platform-agnostic. As a bonus section, we looked at how we can add observability to our Lambda functions using Dashbird. Finally, we discussed the pros and cons of AWS Secrets Manager as a way of managing credentials at enterprise scale.

Further reading:

Python error handling in AWS Lambda
