![Cover image for Assigning [ ] performs better than Array(n) - Reports attached.](https://media2.dev.to/dynamic/image/width=1000,height=420,fit=cover,gravity=auto,format=auto/https%3A%2F%2Fdev-to-uploads.s3.amazonaws.com%2Fi%2Fyeci3mlipnrhaodeaga4.png)

Here is a small snippet that was measured using the jsperf site.

That means `Array(n)` is a lot slower than `[]`.

What might be the reason?

I...


Hey, there's a problem with your jsperf code. The first example ends up with an array containing 3999 items, not 2000, so everything after that is skewed. In fact, the best performance is the other way around:

jsperf.com/test-assign-vs-push/1
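The original snippet isn't reproduced here, so the loop below is a hypothetical reconstruction of how a 3999-element result can arise; its bounds are assumed. `push` always appends at index `length`. Calling it on an array created with `new Array(2000)` therefore adds after the 2000 empty slots instead of filling them.

```javascript
// Hypothetical reconstruction: preallocate, then push (loop bounds are assumed).
const preallocated = new Array(2000);
for (let i = 1; i < 2000; i++) {
  // push writes at index preallocated.length, i.e. after the 2000 empty slots
  preallocated.push(i);
}
console.log(preallocated.length); // 3999

// Filling a preallocated array means assigning by index instead.
const filled = new Array(2000);
for (let i = 0; i < 2000; i++) {
  filled[i] = i;
}
console.log(filled.length); // 2000
```

Mixing preallocation with `push` roughly doubles the length. Assigning by index, as `filled` does, keeps it at 2000.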

That's right; I'm sorry I didn't notice that. But according to the explanation, a holey array should be slower, unless there's a trade-off in repeated memory allocation. I'll get back to you on this. Thanks for correcting me!

Update

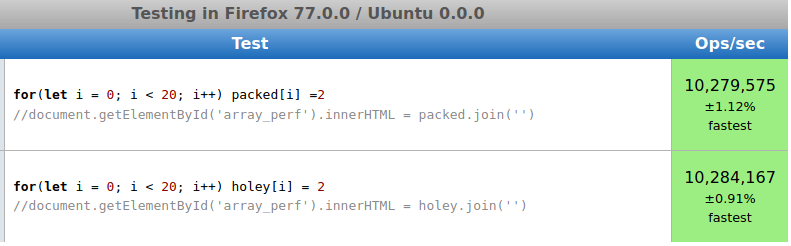

I have updated the image with the latest example. Please check it.

You may argue that the array's length is 2000 in the other case. But in reality, there aren't 2000 elements in memory.
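At the language level this is observable. An array made with `new Array(2000)` reports a length of 2000 but has no stored indices. How much memory an engine actually reserves up front is an implementation detail that plain JavaScript can't show, so this sketch only covers the semantics.

```javascript
const holey = new Array(2000);
console.log(holey.length);              // 2000
console.log(Object.keys(holey).length); // 0: no index has been stored yet
console.log(0 in holey);                // false: index 0 is a hole, not an undefined value

const withUndefined = [undefined];
console.log(0 in withUndefined);        // true: the slot exists and holds undefined
```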

Also, if we do allocate space for 2000 elements, then the initial 20 operations we perform are slower. But yes, there's a trade-off in memory-allocation performance.

Well, you are doing an `array.join` on 2000 elements in the second example and only 20 in the first. Those elements are `undefined` and therefore add up to very little output, but `join` still has to process every one of them. It's your `innerHTML` that is taking the time now. Remove that inconsistency (and make sure there's enough data to really compare): jsperf.com/assign-vs-push-d2/1
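Here is a small sketch of why the `join` comparison isn't fair. It uses two hypothetical arrays, `sparse` and `dense`, that hold the same 20 strings. `join` walks every index up to `length` and converts each hole to an empty string, so its output (and its work) grows with the array's length rather than with the number of stored values.

```javascript
// 20 values stored in an array of length 2000 (1980 trailing holes)
const sparse = new Array(2000);
for (let i = 0; i < 20; i++) sparse[i] = "test";

// the same 20 values in an array of length 20
const dense = [];
for (let i = 0; i < 20; i++) dense.push("test");

// join treats every hole as an empty string but still emits a separator per slot
const sparseText = sparse.join(" ");
const denseText = dense.join(" ");
console.log(sparseText.length); // 2079 = 20 * 4 characters + 1999 separators
console.log(denseText.length);  // 99   = 20 * 4 characters + 19 separators
```

A benchmark that calls `join` on arrays of different lengths is therefore measuring different amounts of work.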

I guess if you're saying that you have to account for empty elements in a bigger array, then I get that. Over-allocating an array would certainly cause problems, since everything that runs across the whole array has to deal with the empty space. The point I'm making is that preallocating is more performant for allocation than pushing on demand, and individual item lookup performance remains optimal. But I agree that whole-array traversals are slower if the empty space is meaningless.
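To make the allocation side of this trade-off concrete, here is a toy model (not any engine's real code) of a push-only array. Whenever its backing store fills up, it grows by an assumed policy of 1.5× plus 16 slots. Real engines use their own heuristics, so the exact numbers are illustrative only. The point is that pushing 2000 items needs a handful of grow-and-copy steps that a single up-front allocation would avoid.

```javascript
// Assumed growth policy: new capacity = old + old/2 + 16 (illustrative only).
const grow = (capacity) => capacity + (capacity >> 1) + 16;

// Count how many times a push-only array would need a new backing store.
function simulatePushes(n) {
  let capacity = 0;
  let reallocations = 0;
  for (let length = 0; length < n; length++) {
    if (length === capacity) {
      // Backing store is full: allocate a bigger one (and copy `length` elements).
      capacity = grow(capacity);
      reallocations++;
    }
  }
  return { capacity, reallocations };
}

console.log(simulatePushes(2000)); // { capacity: 2731, reallocations: 11 }
```

Under this model, each reallocation copies everything stored so far. That copying is the cost preallocating with `new Array(2000)` is meant to skip, at the price of starting with holes.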

Please refer to:

dev.to/svaani/comment/12443

If you scroll down the comments, you will see the same discussion. It looks like the Chrome team has recently optimized holey arrays. Firefox gives different results, even with the link you provided.

I noticed you updated your example for accuracy. While the statements you are making are true, your example is still not entirely representative of them. Initializing the array is one of the more costly steps in your example, especially for an array of size 2000.

It might make sense to use jsperf's setup function to create the arrays outside the timed section.
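The same idea can be sketched outside jsperf with a hypothetical `timeIt` helper. A `setup` callback builds fresh state before each run and is not timed; only `body` falls inside the timed window. The sketch assumes a global `performance.now()`, which browsers and Node 16+ provide. Like any micro-benchmark, the numbers it prints vary between runs and engines.

```javascript
// Hypothetical mini-harness mirroring jsperf's split between setup and the timed test.
function timeIt(setup, body, runs) {
  let total = 0;
  for (let r = 0; r < runs; r++) {
    const state = setup();            // not timed: allocate/initialise here
    const start = performance.now();
    body(state);                      // timed: only the operation under test
    total += performance.now() - start;
  }
  return total / runs;                // mean milliseconds per run
}

const holeyAssignMs = timeIt(
  () => new Array(2000),
  (arr) => { for (let i = 0; i < 2000; i++) arr[i] = i; },
  100
);
const packedPushMs = timeIt(
  () => [],
  (arr) => { for (let i = 0; i < 2000; i++) arr.push(i); },
  100
);
console.log({ holeyAssignMs, packedPushMs });
```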

Notice how this shows the holey array as faster? That's because it still isn't a fair test: the packed array needs to grow to accommodate insertions made with `push`. We should be testing with a packed array of the same size as the holey array.
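Here is a sketch of that fairer setup, using hypothetical helpers `makeHoley` and `makePacked`. Both arrays reach length 2000 before the timed part, so the operation under test (overwriting by index) never has to grow either array. The sketch assumes that building an array with `push` from `[]` keeps it packed. Elements kinds are engine-internal (V8 exposes them only through debug flags), so that assumption can't be checked from plain JavaScript.

```javascript
const SIZE = 2000;

// Holey: length 2000 from the start, every slot begins as a hole.
function makeHoley() {
  return new Array(SIZE);
}

// Packed: grown to the same length with push, so no slot is ever a hole.
function makePacked() {
  const arr = [];
  for (let i = 0; i < SIZE; i++) arr.push(0);
  return arr;
}

// The operation under test is identical for both: overwrite by index,
// with no growth needed in either case.
function overwrite(arr) {
  for (let i = 0; i < arr.length; i++) arr[i] = i;
  return arr;
}

const holey = overwrite(makeHoley());
const packed = overwrite(makePacked());
console.log(holey.length === packed.length); // true
```

In a benchmark, `makeHoley` and `makePacked` belong in the setup and only `overwrite` in the timed section.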

I'm not seeing much of a difference between a packed array and a holey array created with the constructor, so I tried a different way of making a holey array and saw a major drop in performance.

This last example shows the effects of holey arrays. I'm unable to test this in a way that shows a performance benefit to avoiding the array constructor. I could be wrong about this in some way, but the benefits seem marginal at best. Nonetheless, holey arrays created with the other method do seem to behave differently from ones created with the constructor.
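The comment doesn't say which other method was used to make the holey array. The snippet below just lists a few common ways holes appear besides `new Array(n)`; which of these, if any, was meant is an open question. In each case the `in` operator reports the skipped index as missing.

```javascript
// 1. Writing past the end leaves every skipped index as a hole.
const skipped = [];
skipped[1999] = "last";
console.log(skipped.length, 0 in skipped); // 2000 false

// 2. delete removes the element but keeps the length.
const deleted = [1, 2, 3];
delete deleted[1];
console.log(deleted.length, 1 in deleted); // 3 false

// 3. Increasing length directly appends holes.
const lengthened = [1, 2, 3];
lengthened.length = 10;
console.log(lengthened.length, 5 in lengthened); // 10 false

// 4. Array literals with elisions.
const literal = [1, , 3];
console.log(1 in literal); // false
```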

In most of the examples you have quoted, you are checking only the assignment part, not the retrieval part.

Consider this example, where `join` also triggers the retrieval part. You will get different results otherwise.

Only assignment case:

The same example you gave in the first snippet also gives equal results if we don't use `push`.

`push` tends to take longer, but I still need to get better clarity on how `push` takes longer.

I'm guessing you ran this test: jsperf.com/test-assign-vs-push/12

The `join` method is taking so long because it runs on an array of length 2000 instead of 20, so it's not a fair comparison. Even though those slots are "empty", `join` doesn't ignore them.

Try running:

It still prints `test test test test test test test test test` despite the empty entries. If you change `new Array(2000)` to `new Array(20)`, that difference becomes negligible.

In that case, let's put this code in the setup:

And this is the result:

Now both arrays are of size 2000, but `packed` is not a holey array. That exposes another drawback of holey arrays.

In a holey array, even though we have not assigned any values, the JavaScript engine still tries to visit those elements just because they were allocated.
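One concrete reason reads from holes cost more than reads from packed slots is the prototype chain. The language says a read of a missing index has to continue up that chain, so an engine can't simply return `undefined` for a hole without first ruling out an inherited value. The sketch below makes that lookup visible by temporarily defining index 5 on `Array.prototype`. Only do this in a throwaway script, since it affects every array.

```javascript
const arr = new Array(10);
const before = arr[5];                 // undefined: nothing on the array or its prototypes

// A read of a hole falls through to the prototype chain, so this is observable:
Array.prototype[5] = "from prototype";
const after = arr[5];                  // "from prototype"
const inOperator = 5 in arr;           // true: `in` also checks prototypes
const own = Object.prototype.hasOwnProperty.call(arr, 5); // false: still a hole

delete Array.prototype[5];             // undo the demo's global change
console.log(before, after, inOperator, own);
```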

When we use an array created with `new Array(2000)`, wouldn't we tend to use `join`, `reduce`, `map` and more?

jsperf.com/test-assign-vs-push/23 looks to be the same as what you are using; however, when I run it I get very different performance results:

A very negligible difference.

Also, regarding your note on JavaScript trying to hit those empty spots: this is actually very inconsistent across JavaScript's built-in methods. Some methods skip the holes entirely; others visit them.

For example, `map`:

Interestingly the console output is only:

meaning the callback was only run for the one index that had a value.
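The original `map` snippet and its console output aren't reproduced here, so this is a stand-in using a hypothetical array `arr` that holds one value at index 2. ECMAScript specifies that `map`, `forEach`, `filter`, `reduce` and similar methods skip holes: they check whether each index exists before calling the callback. By contrast, `join` and `for...of` visit every index up to `length`.

```javascript
const arr = new Array(5);
arr[2] = "only value";

let mapCalls = 0;
const mapped = arr.map((value) => {
  mapCalls++;
  return value.toUpperCase();
});
console.log(mapCalls);                   // 1: map skips holes
console.log(mapped.length, 0 in mapped); // 5 false: holes are preserved in the result

let forEachCalls = 0;
arr.forEach(() => forEachCalls++);
console.log(forEachCalls);               // 1: forEach skips holes too

let forOfCalls = 0;
for (const _ of arr) forOfCalls++;
console.log(forOfCalls);                 // 5: the iterator yields undefined for holes

const joined = arr.join("-");
console.log(joined);                     // --only value--: join visits every index
```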

I think that's because the Chrome team is trying to optimize holey arrays too.

@Vani, thoughts on the comments below?

If they are correct, please consider editing your post for accuracy.

We want accurate metrics on dev.to so all can benefit.

💯❤️

This is an updated example

Dope! ❤️💯

What about `Array.prototype.fill`?

Seems the issue is the way the array was instantiated. What if you did `Array(0)` or something?
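Here is a quick check of what `Array.prototype.fill` changes, using a hypothetical `countHoles` helper. After `fill`, every index of `new Array(2000)` holds a value, so at the language level no holes are left. One caveat: V8's write-up on elements kinds describes transitions as one-way, meaning an array marked holey stays holey even after its holes are filled. So `fill` may not give the same engine-level behaviour as a push-built array; that part would need benchmarking.

```javascript
// Hypothetical helper: count indices below length that hold no element.
function countHoles(arr) {
  let holes = 0;
  for (let i = 0; i < arr.length; i++) {
    if (!(i in arr)) holes++; // `in` is false for a hole
  }
  return holes;
}

const unfilled = new Array(2000);
const filled = new Array(2000).fill(0);
const fromLength = Array.from({ length: 2000 }, () => 0);

console.log(countHoles(unfilled));   // 2000
console.log(countHoles(filled));     // 0
console.log(countHoles(fromLength)); // 0
```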