Using DJL.AI For Deep Learning BERT Q&A in NiFi DataFlows

Introduction:

I will be talking about this processor at ApacheCon @Home 2020 in my "Apache Deep Learning 301" talk with Dr. Ian Brooks.

Sometimes you want your deep learning easy and in Java, so let's do that with DJL in a custom Apache NiFi processor running in CDP Data Hubs. This processor does BERT question answering (QA).

To use the processor, feed in a paragraph to analyze via the paragraph parameter in the NiFi processor. Also feed in a question, either something general like "Why?" or something very specific, such as asking about a date or an event.

The pretrained model is a BERT QA model running on PyTorch. The NiFi processor source:

https://github.com/tspannhw/nifi-djlqa-processor

Grab the recent release NAR and install it into your NiFi lib directories:

https://github.com/tspannhw/nifi-djlqa-processor/releases/tag/1.2

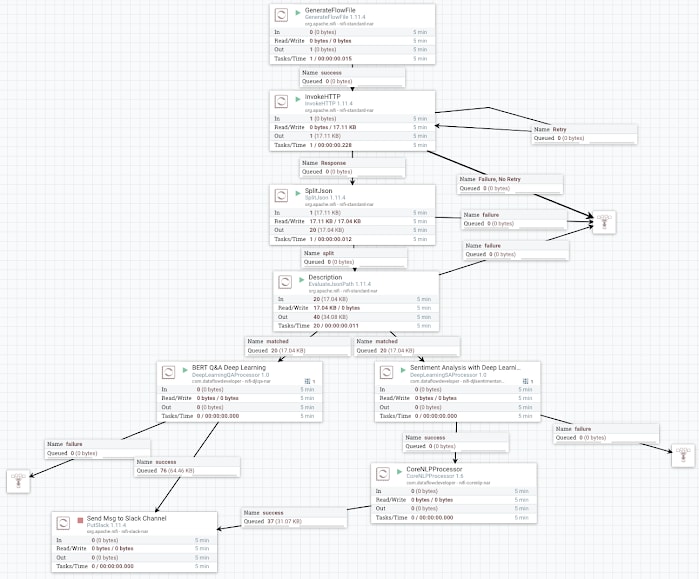

Example Run

Demo Data Source

https://newsapi.org/v2/everything?q=cloudera&apiKey=REGISTERFORAKEY
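If you want to try the demo data source outside of NiFi first, the request URL can be built with a few lines of plain Java. This is only a minimal sketch: the class name `NewsApiUrl` and the `NEWSAPI_KEY` environment variable are hypothetical names chosen for illustration, and the endpoint and the `q` and `apiKey` parameters are the ones from the URL above.

```java
import java.net.URI;
import java.net.URLEncoder;
import java.nio.charset.StandardCharsets;

// Hypothetical helper for illustration; not part of the NiFi processor.
final class NewsApiUrl {
    // Endpoint taken from the demo data source URL above.
    private static final String ENDPOINT = "https://newsapi.org/v2/everything";

    // Builds the search URI, URL-encoding both the query and the API key.
    static URI build(String query, String apiKey) {
        String q = URLEncoder.encode(query, StandardCharsets.UTF_8);
        String key = URLEncoder.encode(apiKey, StandardCharsets.UTF_8);
        return URI.create(ENDPOINT + "?q=" + q + "&apiKey=" + key);
    }

    public static void main(String[] args) {
        // NEWSAPI_KEY is an assumed variable name; fall back to the placeholder key.
        String apiKey = System.getenv().getOrDefault("NEWSAPI_KEY", "REGISTERFORAKEY");
        System.out.println(build("cloudera", apiKey));
    }
}
```

Keeping the key in an environment variable rather than in the URL string avoids committing it to a flow definition or source repository.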

Reference:

- Deep Learning Sentiment Analysis with DJL.ai

- https://github.com/awslabs/djl/blob/master/mxnet/mxnet-engine/README.md

- https://github.com/aws-samples/djl-demo/tree/master/flink/sentiment-analysis

- https://github.com/awslabs/djl/releases

- https://www.datainmotion.dev/2020/09/using-djlai-for-deep-learning-based.html

- http://docs.djl.ai/jupyter/BERTQA.html

Deep Learning Note:

BERT QA Model

Tip

Make sure your NiFi instance has an extra 1-2 GB of RAM for each DJL processor it runs. If you have a lot of text, add more nodes and/or more RAM. Make sure you have at least 8 cores per deep learning process. I prefer JDK 11 for this.
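The sizing advice in the tip can be turned into a quick sanity check. The sketch below is an assumption-laden illustration, not part of the processor: `DjlResourceCheck` and its method names are hypothetical, the 8-core threshold and per-processor gigabytes come straight from the tip, and the base heap of 4 GB with two DJL processors is just an example figure.

```java
// Hypothetical helper for illustration; not part of the NiFi processor.
final class DjlResourceCheck {
    static final int MIN_CORES = 8;                        // from the tip above
    static final long BYTES_PER_GB = 1024L * 1024L * 1024L;

    // Base heap plus extraGbPerProcessor for every DJL processor in the flow.
    static long suggestedHeapBytes(long baseHeapBytes, int djlProcessors, int extraGbPerProcessor) {
        return baseHeapBytes + (long) djlProcessors * extraGbPerProcessor * BYTES_PER_GB;
    }

    // True when the machine meets the tip's core count for one deep learning process.
    static boolean hasEnoughCores(int availableCores) {
        return availableCores >= MIN_CORES;
    }

    public static void main(String[] args) {
        Runtime rt = Runtime.getRuntime();
        // Example figures only: 4 GB base heap, 2 DJL processors, 2 GB extra each.
        long suggested = suggestedHeapBytes(4 * BYTES_PER_GB, 2, 2);
        System.out.println("Cores available: " + rt.availableProcessors()
                + " (enough: " + hasEnoughCores(rt.availableProcessors()) + ")");
        System.out.println("Max heap (GB): " + rt.maxMemory() / BYTES_PER_GB);
        System.out.println("Suggested heap (GB): " + suggested / BYTES_PER_GB);
    }
}
```

Run it with the same JVM settings as your NiFi node to compare the reported maximum heap against the suggested figure; the NiFi heap itself is normally adjusted in `conf/bootstrap.conf`.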

See Also: https://www.datainmotion.dev/2019/12/easy-deep-learning-in-apache-nifi-with.html
