What I built/am building

I am working on a small web application that integrates Twilio with Slack, letting a company use Slack as the front end and message router for Twilio SMS. Someone can quickly stand up a process (for only the cost of the text messages and a small VM) and leverage Slack's desktop/mobile/web GUI, along with the alerts, logging, search, and integrations that come with it. It also let me avoid much of the boilerplate front-end development that I despise, do not know how to do, and would not have had time to complete.
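To make the routing idea concrete, here is a minimal sketch of the inbound half. It assumes each contact's phone number maps to the Slack channel for their group. Twilio posts an incoming SMS to your webhook as form fields that include `From` and `Body`. The `CONTACTS` table, the `route_inbound_sms` name, and the catch-all channel are hypothetical. Actually posting to Slack is left out so the logic stands alone.

```python
from typing import Dict, Tuple

# Hypothetical lookup: phone number (E.164) -> (contact name, Slack channel).
CONTACTS: Dict[str, Tuple[str, str]] = {
    "+15555550101": ("Alice", "#store-1"),
    "+15555550102": ("Bob", "#store-2"),
}

# Hypothetical catch-all channel for numbers that are not in CONTACTS.
UNKNOWN_CHANNEL = "#unknown-senders"


def route_inbound_sms(form: Dict[str, str]) -> Tuple[str, str]:
    """Map a Twilio inbound-SMS webhook payload to (channel, message text)."""
    sender = form.get("From", "")
    body = form.get("Body", "").strip()
    # Unknown senders are labelled with their number and routed to the catch-all.
    name, channel = CONTACTS.get(sender, (sender, UNKNOWN_CHANNEL))
    return channel, f"*{name}* ({sender}): {body}"
```

In the app itself, this would sit behind the Flask route that Twilio calls. The returned pair would then be handed to something like slackclient's `chat_postMessage(channel=..., text=...)`.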

Category Submission:

COVID-19 Communications

Link to Code / Screenshots / Setup

https://github.com/mikejagmin/wellness_check

How I built it

Python

Flask web framework

Twilio Python helper library

Slack client/Events API

DataTables.js

MySQL/SQLite

Redis

python-rq (lightweight job queue)

How I got this to run somewhere besides my local machine (and with HTTPS!):

https://www.digitalocean.com/community/tutorials/how-to-serve-flask-applications-with-gunicorn-and-nginx-on-ubuntu-18-04

Additional Resources/Info

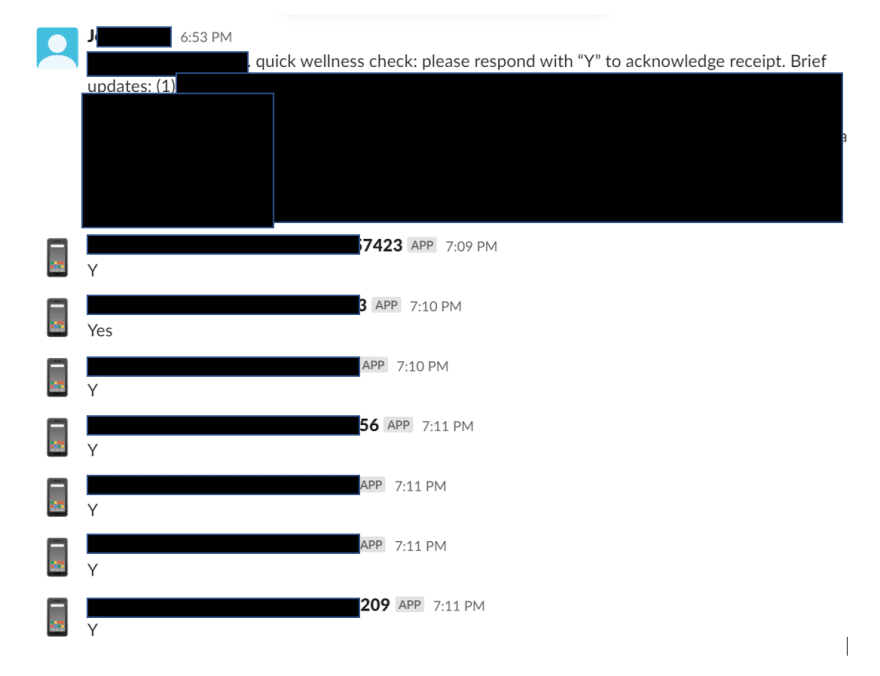

A friend came to me needing to conduct wellness checks for ~200 employees across 5-6 locations/groups. They were hoping to use text messages to streamline checking in while the offices are closed, but needed a way to log responses. I came up with this idea based on some Slack integrations I had built in the past. The project has been evolving for approximately 2 weeks.

I chose Slack because its API is easy to use. Channels work well for organizing the communication, and desktop/mobile notifications let management see responses in real time if someone reaches out for assistance. There was a concern that if responses only went to a database that had to be queried, help might not be provided in a timely fashion. Building something with desktop/push notifications, or a dedicated app, is a huge undertaking, but the Slack API let me leverage Slack's great UX easily.

Learning/Improvements so far:

Introducing a worker queue to offload request processing from the web-server

I was concerned that if many people responded at once, the web server might not be able to accept requests fast enough. During testing, there were a few cases where Twilio resent a message because my web server was slow to respond. I considered:

Increasing instance size or adding web-servers

I quickly realized this would also require a load balancer and a hosted database (I was using SQLite for development/testing), which would drive up cost and complexity very quickly. My use case has no load 98% of the time. Then comes a large batch of outgoing messages, followed by a large burst of responses and then a slow trickle, plus a few data requests for the response audit. That is burst processing, which would likely mean a lot of idle capacity coming out of my piggybank.

Using a server-less architecture

I was quickly brought back to reality when I remembered that most of my app depends on inbound requests from Slack/Twilio and on web-based reporting, both of which need something running full time. The added cost of a standalone SQL database, paired with refactoring for a server-less architecture, seemed like unnecessary complexity.

I ended up taking somewhat of a hybrid approach by trying Python's concurrent.futures module (new to me), which turned out to be much easier to implement than I expected. It let my web server respond to requests immediately while the 'heavy lifting' continued in the background. This hybrid approach has kept my costs under $20/month (not including SMS fees), which my friend was quite pleased with.
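Here is a minimal sketch of that pattern, assuming one module-level `ThreadPoolExecutor`. The request handler submits the slow work and returns immediately, so Twilio gets its HTTP response before any database or Slack calls run. `handle_inbound` and `process_reply` are hypothetical names, and `time.sleep` stands in for the real work.

```python
import time
from concurrent.futures import Future, ThreadPoolExecutor

# One shared pool for the whole process; a few workers is plenty on a small VM.
executor = ThreadPoolExecutor(max_workers=4)


def process_reply(sender: str, body: str) -> str:
    """Hypothetical stand-in for the slow part: DB writes, Slack API calls, etc."""
    time.sleep(0.5)
    return f"logged reply from {sender}: {body}"


def _log_failure(future: Future) -> None:
    # Runs when the job finishes; report exceptions that would otherwise be lost.
    exc = future.exception()
    if exc is not None:
        print(f"background job failed: {exc!r}")


def handle_inbound(sender: str, body: str) -> Future:
    """What the web route would do: hand off the work and return right away."""
    future = executor.submit(process_reply, sender, body)
    future.add_done_callback(_log_failure)
    return future
```

In a Flask route, you would call `handle_inbound(...)`, ignore the returned future, and immediately send back an empty TwiML `<Response></Response>`. The future is returned here only so the behaviour can be checked.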

I was still concerned about running the futures inside my Flask application, so I settled on queuing incoming messages locally with python-rq and processing them with a worker process running inside a tmux session. Since a small delay in recording replies is acceptable, I suspect there is a way to give the web-server process priority on resources and let the worker catch up when they are idle. I kept concurrent.futures as a fail-over, using a plain old try/except in case the queue does not respond. I did something similar when I moved from SQLite to MySQL: I kept the local SQLite databases and continue to sync the user and channel data to both of them in case of a failure. It seemed like cheap insurance.
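The queue-first, futures-as-fallback dispatch might look roughly like this. It assumes the enqueue function is python-rq's `Queue.enqueue` (for example `Queue(connection=Redis()).enqueue`). It takes that function as a parameter so the sketch runs without Redis. `dispatch` and `record_reply` are hypothetical names.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Any, Callable

# In-process pool used only when the Redis-backed queue is unreachable.
fallback_pool = ThreadPoolExecutor(max_workers=2)


def record_reply(sender: str, body: str) -> str:
    """Hypothetical job; with rq it must be importable by the worker process."""
    return f"{sender}: {body}"


def dispatch(enqueue: Callable[..., Any], job: Callable[..., Any], *args: Any) -> str:
    """Try the queue first; fall back to running the job in a local thread."""
    try:
        enqueue(job, *args)  # e.g. rq.Queue(connection=redis.Redis()).enqueue
        return "queued"
    except Exception as exc:  # Redis down, connection refused, etc.
        print(f"queue unavailable ({exc!r}); running in-process")
        fallback_pool.submit(job, *args)
        return "fallback"
```

With rq, the worker in the tmux session is just `rq worker` pointed at the same Redis instance. The fallback means a Redis outage degrades to the earlier concurrent.futures behaviour instead of dropping replies.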

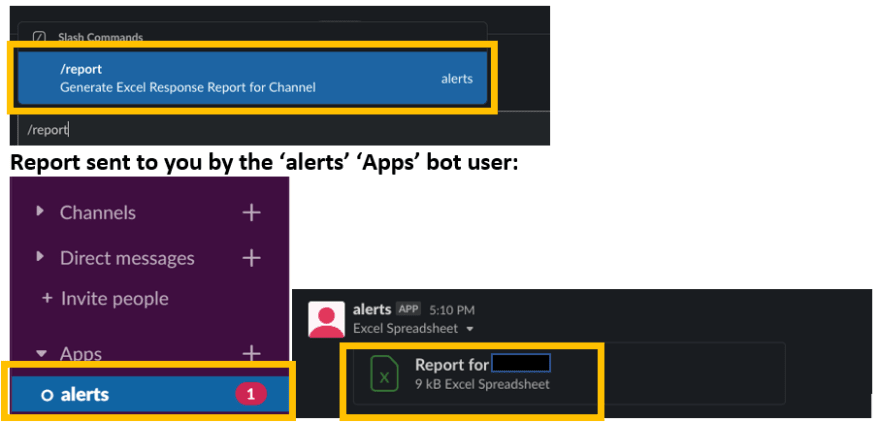

Creating slash commands to reduce the need for the cumbersome web GUI reporting/export

Today I moved the common audit tasks into Slack slash commands, so users no longer need to visit the web-based reporting to check who has not responded. Typing /noreply 24 in a channel makes Slack reply in a DM with a list of contacts who have not replied within the last 24 hours. This is much easier than logging into the site, searching, and then sorting by the reply timestamp.
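The core of a command like /noreply is a single query. Here is a minimal sketch with SQLite, assuming a hypothetical `contacts` table with a `last_reply` column. That column holds UTC ISO-8601 text in the same format `isoformat()` produces, or NULL if the person never replied. Slack sends whatever follows the command (here `24`) in the slash command's `text` field.

```python
import sqlite3
from datetime import datetime, timedelta, timezone
from typing import List, Optional


def parse_hours(text: str, default: int = 24) -> int:
    """Turn the slash command's text (e.g. '24') into a positive hour count."""
    try:
        hours = int(text.strip())
    except ValueError:
        return default
    return hours if hours > 0 else default


def no_reply(conn: sqlite3.Connection, hours: int,
             now: Optional[datetime] = None) -> List[str]:
    """Names of contacts with no reply within the last `hours` hours."""
    now = now or datetime.now(timezone.utc)
    # ISO-8601 strings in one consistent format compare correctly as text.
    cutoff = (now - timedelta(hours=hours)).isoformat()
    rows = conn.execute(
        "SELECT name FROM contacts"
        " WHERE last_reply IS NULL OR last_reply < ?"
        " ORDER BY name",
        (cutoff,),
    )
    return [name for (name,) in rows]
```

The handler would call `no_reply(conn, parse_hours(form["text"]))` and post the names to the requesting user. Against MySQL, the same idea works with a native DATETIME column and a parameterized cutoff.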

I also added a /report command using openpyxl, a package I started using recently for reading and writing Excel files. The command runs the same SQL query as the front-end report, exports the data to an .xlsx file, and then uses Slack's well-written Python slackclient (2.0) to post the file to a direct message.
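The export step can be sketched in a few lines of openpyxl, assuming the query results arrive as a header list plus row tuples. `rows_to_xlsx` and the sheet title are hypothetical. The returned path is what would then be handed to `files_upload`.

```python
from typing import Any, Iterable, Sequence

from openpyxl import Workbook


def rows_to_xlsx(headers: Sequence[str], rows: Iterable[Sequence[Any]], path: str) -> str:
    """Write query results to an .xlsx file and return its path."""
    wb = Workbook()
    ws = wb.active
    ws.title = "Responses"  # hypothetical sheet name
    ws.append(list(headers))  # header row first
    for row in rows:
        ws.append(list(row))  # None values become empty cells
    wb.save(path)
    return path
```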

I was impressed by 1.0, but 2.0 fixed all of my previous gripes. Uploading a file is a piece of cake:

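```python
from slack import WebClient  # slackclient 2.x

client = WebClient(token=slack_token)  # bot token, e.g. read from config
# files.upload takes the message as initial_comment (it has no text/username args)
response = client.files_upload(
    channels=channel,
    file=file_location, filename=file_name, title=title,
    initial_comment=body)
```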

The rest of the Slack API is just as user-friendly, and the documentation and its built-in method tester were a huge help while learning: https://api.slack.com/methods/files.upload. I highly recommend Slack for fast error reporting and notifications. I started using it to notify me when a long-running ETL/analysis job broke or completed. Too often I would move on to something else and come back hours later to find it had failed on a bad row >:(. With a one-line Slack webhook call wrapped in a try/except, I get a ping when the job stops or completes.
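A minimal sketch of that ping, using only the standard library. It assumes a Slack incoming-webhook URL, which accepts a JSON POST with a `text` field. `slack_webhook_notify` and `run_with_ping` are hypothetical helper names. The notifier is passed in so the wrapper can be exercised without network access.

```python
import json
import urllib.request
from typing import Any, Callable


def slack_webhook_notify(url: str) -> Callable[[str], None]:
    """Return a notifier that posts `text` to a Slack incoming webhook URL."""
    def notify(text: str) -> None:
        req = urllib.request.Request(
            url,
            data=json.dumps({"text": text}).encode("utf-8"),  # data => POST
            headers={"Content-Type": "application/json"},
        )
        with urllib.request.urlopen(req, timeout=10):
            pass
    return notify


def run_with_ping(job: Callable[[], Any], name: str,
                  notify: Callable[[str], None]) -> Any:
    """Run a long job and ping Slack whether it completes or fails."""
    try:
        result = job()
    except Exception as exc:
        notify(f":x: {name} failed: {exc!r}")
        raise  # keep the original traceback for debugging
    notify(f":white_check_mark: {name} completed")
    return result
```

Usage would be something like `run_with_ping(run_etl, "nightly ETL", slack_webhook_notify(WEBHOOK_URL))`, where `run_etl` and `WEBHOOK_URL` stand for your own job and webhook.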

Today was a very educational day: I learned how to use git filter-branch to remove an old boo-boo from my history, and I finally forced myself to write some markup.

Next, I need to write some unit tests (I have only been saying that for 5 years...) and learn how to mock Twilio, which would make testing a lot easier. I'm also thinking about an elegant way to handle the CUD parts of CRUD for managing the SMS contacts through Slack, so I can remove the front end from basic usage. As I write this, it just occurred to me that dropping a correctly formatted xls file directly into a channel to bulk-import updates would be pretty nifty...
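One common way to mock Twilio is to pass the client into your code rather than constructing it inside, then substitute a `unittest.mock.MagicMock` in tests. In production you would pass a real `twilio.rest.Client(account_sid, auth_token)`. This sketch assumes the outgoing side uses the helper library's `client.messages.create(to=..., from_=..., body=...)`. `send_wellness_check` is a hypothetical name.

```python
from typing import Any, Iterable, List
from unittest import mock


def send_wellness_check(client: Any, numbers: Iterable[str],
                        from_number: str, body: str) -> List[str]:
    """Text every number and return the Twilio message SIDs."""
    sids = []
    for number in numbers:
        message = client.messages.create(to=number, from_=from_number, body=body)
        sids.append(message.sid)
    return sids


def test_send_wellness_check() -> None:
    # MagicMock auto-creates client.messages.create; every call returns the same object.
    fake_client = mock.MagicMock()
    fake_client.messages.create.return_value.sid = "SM123"
    sids = send_wellness_check(
        fake_client, ["+15555550101", "+15555550102"], "+15555550100", "Are you OK?")
    assert sids == ["SM123", "SM123"]
    assert fake_client.messages.create.call_count == 2
    fake_client.messages.create.assert_any_call(
        to="+15555550102", from_="+15555550100", body="Are you OK?")
```

Because nothing reaches Twilio, tests like this cost nothing in SMS fees. They also run offline, for example under pytest, which will pick up `test_send_wellness_check` automatically.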

The online stream at https://www.roomservicefest.com that kept me going all day is winding down, but it is back tomorrow, so if anyone reads this before the 27th, check it out.
