If you’re looking for work as a software developer, it won’t be long until you run into the term ‘AWS’, or ‘Amazon Web Services’. It shows up in developer job descriptions across every industry. If you’re anything like me, you’ll be curious about what AWS actually does.

Here’s the very short version: AWS lets users run their applications on Amazon’s hardware.

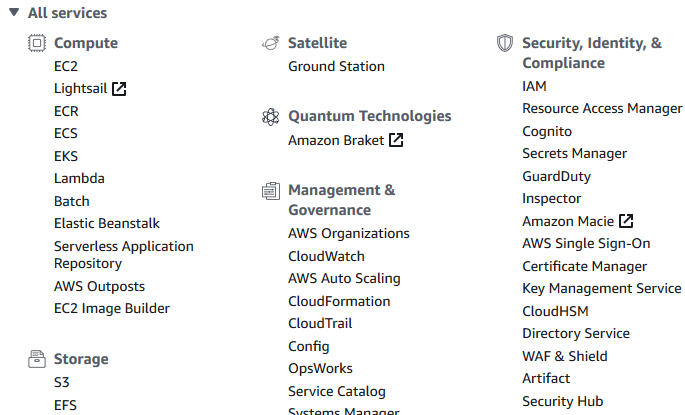

Here’s the longer version: Amazon Web Services is a massive set of services that handle hosting, managing, supporting, and protecting your applications. Below is a screenshot of the AWS console which shows only about a quarter of the offered services.

Clearly, that’s too many to explore in a reasonable time, and I would be lying if I said I knew what half of them do. So I’ll stick to the basics of AWS: why it’s important and which services are the most fundamental.

Why use AWS?

So what makes AWS so useful? Why do so many companies choose to let Amazon handle their hardware needs?

Simple: hardware is expensive. Owning servers is costly and inflexible. First, you need a place to put them, which means that if you want your app to have low latency in both California and Taiwan, you’d better be willing to pay for two pieces of real estate. Second, you need to judge the size of your user base very accurately. Too few servers and you won’t be able to handle the excess requests; too many and you’ve wasted money on idle servers. Finally, servers wear out and need to be replaced over time.

Fortunately, Amazon is a globe-spanning, multi-billion-dollar corporation. It already runs large data centers in regions such as Oregon, Hong Kong, and London, and it’s willing to lease all of that computing power. The best part is that you only pay for the resources you use. If your app is having a slow traffic day, you can save money by running fewer instances. If your app soars in popularity, it can quickly request additional resources to handle the load.

This adaptability and low entry cost have made AWS incredibly popular with companies and start-ups alike, and have lowered the barrier to entry for many applications. Airbnb, IMDb, and Pinterest are all hosted on AWS. Even large companies like Netflix and McDonald’s have decided it’s cheaper to use AWS than to run their own infrastructure.

Speaking of low barriers to entry, you can create an AWS account right now and start using the Free Tier, which at the time of writing gave new accounts 12 months of limited free usage. That means there’s no cost to creating an account and learning how to use this powerful tool. Let’s look at some of the services you should try first.

Virtual Private Cloud

The first thing you’ll need to do when working with AWS is create a virtual network for your projects to live in. Virtual Private Cloud, or VPC, lets you isolate your applications and resources from the outside world. A VPC can be divided into multiple subnets, each covering a slice of the VPC’s IP address range, and each subnet’s route table and access rules determine how its contents can be reached.
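To make the idea of a VPC holding subnets concrete, here is a small address-planning sketch using Python’s standard `ipaddress` module. It does not talk to AWS at all; the `10.0.0.0/16` block, the `/24` subnet size, and the helper names are assumptions chosen for illustration. It shows that each subnet is a non-overlapping slice of the VPC’s range, and it accounts for the five addresses AWS reserves in every subnet. The "public" and "private" names are only labels here: in AWS, a subnet is public because its route table sends traffic to an internet gateway.

```python
import ipaddress
from itertools import islice

# AWS reserves 5 addresses in every subnet (the first four and the last one).
AWS_RESERVED_PER_SUBNET = 5


def plan_subnets(vpc_cidr: str, new_prefix: int, count: int) -> list:
    """Split a VPC's CIDR block into `count` equal, non-overlapping subnets."""
    vpc = ipaddress.ip_network(vpc_cidr)
    return list(islice(vpc.subnets(new_prefix=new_prefix), count))


def usable_addresses(subnet: ipaddress.IPv4Network) -> int:
    """Addresses left for your own resources after AWS takes its reserved ones."""
    return subnet.num_addresses - AWS_RESERVED_PER_SUBNET


# Hypothetical plan: a 10.0.0.0/16 VPC split into one public and one private /24.
public, private = plan_subnets("10.0.0.0/16", new_prefix=24, count=2)
print(public, private)           # 10.0.0.0/24 10.0.1.0/24
print(usable_addresses(public))  # 251
```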

Splitting a VPC into subnets opens up some powerful options. You might have two subnets in a VPC: a public one that the outside world can reach, and a private one that only its sibling subnet can reach. This lets you build very secure infrastructure for your applications. Another option is to create identical subnets in different locations (Availability Zones), which provides redundancy should one location fail. A third option is to put a load balancer in front of a set of subnets to direct users to the least-used one, keeping latency and load manageable.
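The "send users to the least-used subnet" idea can be sketched as a least-connections policy that also skips unhealthy targets, which is where the redundancy comes from. This is a toy model under stated assumptions: the `Target` class, its connection counts, and the subnet names are hypothetical, and AWS’s real load balancers have their own configurable routing algorithms that this code does not reproduce.

```python
from dataclasses import dataclass


@dataclass
class Target:
    """A hypothetical group of servers (for example, one subnet's) behind the load balancer."""
    name: str
    active_connections: int = 0
    healthy: bool = True


def route(targets: list) -> str:
    """Send one new request to the healthy target with the fewest active connections."""
    candidates = [t for t in targets if t.healthy]
    if not candidates:
        raise RuntimeError("no healthy targets available")
    # min() returns the first target on ties, so earlier targets win a tie.
    chosen = min(candidates, key=lambda t: t.active_connections)
    chosen.active_connections += 1
    return chosen.name


pool = [
    Target("subnet-a", active_connections=3),
    Target("subnet-b", active_connections=1),
    Target("subnet-c", healthy=False),  # e.g. its location has failed
]
print(route(pool))  # subnet-b
```

Even though `subnet-c` has no connections, it is never chosen while it is marked unhealthy, so traffic keeps flowing to the surviving locations.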

VPCs and subnets are necessary to host the real meat and potatoes of your app: EC2 instances.

Elastic Compute Cloud

Most AWS applications are built on the Elastic Compute Cloud service, or ‘EC2’ for short. EC2 lets you set up virtual servers, called instances, in the Amazon cloud. You can do anything with an instance that you could with a physical computer: run applications, connect to other machines, or even use it as a desktop.

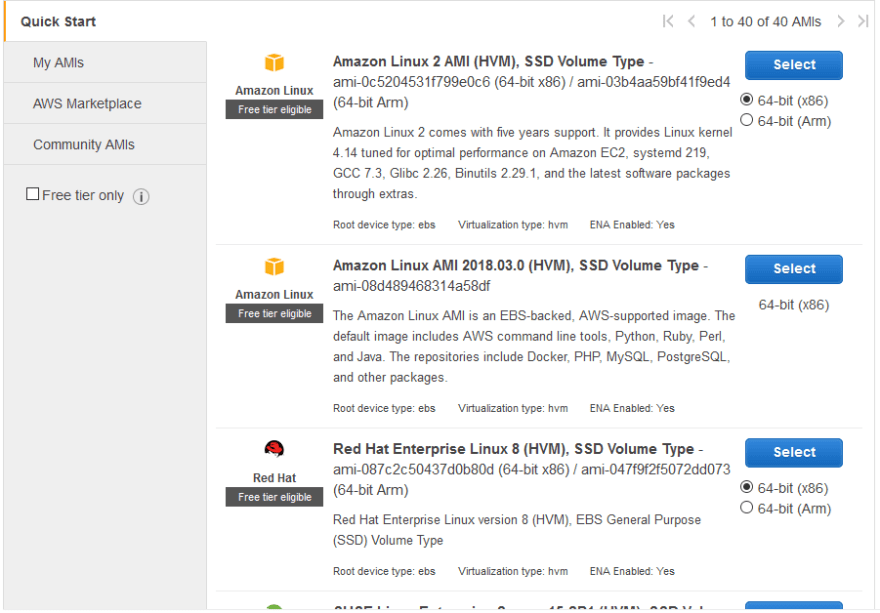

EC2 supports many operating systems, so you can create each instance with whichever OS best fits your needs. Every instance is launched from an Amazon Machine Image (AMI), which defines the OS as well as any preinstalled software. You can start with a basic AMI that runs plain Linux or Windows, or choose an image optimized for a specific task, such as the AWS Deep Learning AMI.

You can also define your own images. After installing your application and its dependencies on one instance, you can save that instance as an image. New instances launched from that image are ready to run your application immediately, which makes it easy to scale your application up or down as your number of users changes. You can even automate this process with other AWS features, such as EC2 Auto Scaling!
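The arithmetic behind scaling from an image is simple: work out how many identical instances the current load needs, keep that number within some bounds, and launch that many copies of the same image. The sketch below assumes each instance handles about 100 requests per second; `MachineImage`, `desired_instances`, and `launch` are hypothetical stand-ins, not AWS APIs. Real automation (for example EC2 Auto Scaling) uses its own policies and metrics.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class MachineImage:
    """Hypothetical stand-in for a custom AMI: an OS plus preinstalled software."""
    name: str
    os: str
    packages: tuple


def desired_instances(requests_per_sec: float, capacity_per_instance: float,
                      min_size: int = 1, max_size: int = 10) -> int:
    """Instances needed for the current load, clamped to [min_size, max_size]."""
    needed = math.ceil(requests_per_sec / capacity_per_instance)
    return max(min_size, min(max_size, needed))


def launch(image: MachineImage, count: int) -> list:
    """Pretend-launch `count` instances; each starts from the same image, ready to serve."""
    return [f"{image.name}-{i}" for i in range(count)]


app_image = MachineImage("my-app-image", os="Linux", packages=("python3", "my-app"))
# Assumed figure: each instance handles about 100 requests per second.
print(desired_instances(450, 100))  # 5
print(launch(app_image, desired_instances(450, 100)))
```

The `min_size` floor keeps at least one instance running on a quiet day, and `max_size` caps spending if traffic spikes.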

Simple Storage Service

Simple Storage Service, or S3, is the primary service for storing files on AWS. Much as EC2 has instances, S3 has its own building blocks, called buckets and objects, and the relationship between them is pretty self-explanatory: objects are stored in buckets. An object can be any type of file, and carries metadata such as its content type and last-modified date. Each bucket has its own DNS name, so you can access the contents of your bucket through a URL. You can also control who has access: by default, a bucket is accessible only to the account that created it, but you can make it readable or writable by other AWS accounts, or by the public at large.
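Here is a toy model of buckets and objects, plus the virtual-hosted-style URL format (`https://<bucket>.s3.<region>.amazonaws.com/<key>`) that S3 uses to address an object. Everything runs locally: the bucket name, region, account names, and the `Bucket`/`S3Object` classes are hypothetical. Real S3 access control is configured through bucket policies, ACLs, and Block Public Access settings; this sketch only mirrors the "private to its owner until you open it up" behavior.

```python
import mimetypes
from dataclasses import dataclass, field
from datetime import datetime, timezone
from urllib.parse import quote


def object_url(bucket: str, region: str, key: str) -> str:
    """Virtual-hosted-style URL for an object; the key is percent-encoded except for '/'."""
    return f"https://{bucket}.s3.{region}.amazonaws.com/{quote(key, safe='/')}"


@dataclass
class S3Object:
    key: str
    data: bytes
    content_type: str
    last_modified: datetime


@dataclass
class Bucket:
    """Toy bucket: private to its owner unless shared or made public."""
    name: str
    owner: str
    shared_with: set = field(default_factory=set)
    public: bool = False
    objects: dict = field(default_factory=dict)

    def can_read(self, account: str) -> bool:
        return self.public or account == self.owner or account in self.shared_with

    def put(self, key: str, data: bytes) -> S3Object:
        # Attach simple metadata: a guessed content type and a last-modified timestamp.
        content_type = mimetypes.guess_type(key)[0] or "application/octet-stream"
        obj = S3Object(key, data, content_type, datetime.now(timezone.utc))
        self.objects[key] = obj
        return obj


bucket = Bucket("my-example-bucket", owner="alice")
photo = bucket.put("photos/cat 1.png", b"fake image bytes")
print(photo.content_type)  # image/png
print(object_url(bucket.name, "us-west-2", photo.key))
# https://my-example-bucket.s3.us-west-2.amazonaws.com/photos/cat%201.png
print(bucket.can_read("bob"))  # False: buckets start out private
bucket.shared_with.add("bob")
print(bucket.can_read("bob"))  # True
```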

One thing to note for aspiring AWS users is that S3’s free allowance is small (historically 5 GB of standard storage for a new account’s first 12 months), so beyond that you will be paying to use it. Don’t worry too much about it, though, because the prices are low: standard storage costs a few cents per gigabyte per month, so leaving my 20 GB of content in a bucket costs well under a dollar a month.
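A quick way to estimate a storage bill is to multiply the gigabytes you store by the per-gigabyte monthly rate. The rate below is an assumption for illustration (roughly what S3 Standard has charged in us-east-1); prices vary by region and storage class, and requests and data transfer are billed separately, so check the current pricing page before relying on the number.

```python
# Assumed rate for illustration only: check current S3 pricing for your region and class.
ASSUMED_PRICE_PER_GB_MONTH = 0.023  # USD per GB-month


def monthly_storage_cost(gigabytes: float,
                         price_per_gb_month: float = ASSUMED_PRICE_PER_GB_MONTH) -> float:
    """Storage-only estimate; request and data-transfer charges are not included."""
    return gigabytes * price_per_gb_month


cost = monthly_storage_cost(20)
print(f"20 GB: ${cost:.2f}/month")  # 20 GB: $0.46/month
```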

Finally, S3 works best for static files such as documents, media, or program files. Data that changes constantly, such as a frequently updated database, is better served by other AWS services.

Relational Database Service

RDS (Relational Database Service) is AWS’s managed service for SQL databases. Its building block is called an instance because it is essentially a specialized EC2 instance running a database engine, with additional features for reliability and data protection. RDS takes automated backups of your databases daily and can retain them for up to 35 days, depending on your settings. This lets you recover your data should it become lost or corrupted.
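The retention setting decides how far back you can recover. The sketch below models only the daily backups and asks which ones are still inside a chosen retention window; the dates, the seven-day setting, and `restorable_backups` are hypothetical. In practice RDS also captures transaction logs, which lets you restore to a point in time within the window, and that part is not modeled here.

```python
from datetime import date, timedelta

# RDS keeps automated backups for at most 35 days (a period of 0 turns them off).
MAX_RETENTION_DAYS = 35


def restorable_backups(backup_dates: list, today: date, retention_days: int) -> list:
    """Return the daily backups still inside the retention window (simplified model)."""
    if not 1 <= retention_days <= MAX_RETENTION_DAYS:
        raise ValueError(f"retention must be between 1 and {MAX_RETENTION_DAYS} days")
    cutoff = today - timedelta(days=retention_days)
    return [d for d in backup_dates if cutoff < d <= today]


today = date(2024, 3, 31)
daily_backups = [today - timedelta(days=i) for i in range(40)]  # hypothetical history
kept = restorable_backups(daily_backups, today, retention_days=7)
print(len(kept), min(kept))  # 7 2024-03-25
```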

Earlier, when describing subnets, I mentioned that you could run identical subnets in different locations in case one fails. RDS applies the same idea through Multi-AZ deployments, which keep copies of a database in separate Availability Zones. Should one location fail, RDS automatically fails over, and requests for the database are routed to a surviving copy.
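Failover can be pictured as a single endpoint with a primary and a standby behind it: callers keep using the same endpoint, and when the primary’s location goes down, the standby takes over. RDS does this for you by repointing the database’s endpoint at the standby; the classes and Availability Zone names below are a hypothetical toy model of that behavior, not RDS code.

```python
from dataclasses import dataclass


@dataclass
class DatabaseCopy:
    availability_zone: str
    healthy: bool = True


class MultiAZDatabase:
    """Toy model: callers always use one endpoint; behind it sit a primary and a standby."""

    def __init__(self, primary: DatabaseCopy, standby: DatabaseCopy):
        self.primary = primary
        self.standby = standby

    def serving_copy(self) -> DatabaseCopy:
        """Return the copy that should handle requests, failing over if the primary is down."""
        if not self.primary.healthy and self.standby.healthy:
            # Failover: the standby becomes the new primary.
            self.primary, self.standby = self.standby, self.primary
        if not self.primary.healthy:
            raise RuntimeError("no healthy copy of the database")
        return self.primary


db = MultiAZDatabase(DatabaseCopy("us-west-2a"), DatabaseCopy("us-west-2b"))
print(db.serving_copy().availability_zone)  # us-west-2a
db.primary.healthy = False  # simulate losing the primary's location
print(db.serving_copy().availability_zone)  # us-west-2b
```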

Finally, RDS can create Read Replicas of a database. These replicas are copies of the database that are updated asynchronously from the primary instance, so they can lag slightly behind it. RDS does not automatically fail over to a read replica, even when a location fails (that is what Multi-AZ is for), although you can manually promote one to a standalone database. Replicas let read-heavy work, such as reporting, query the data without impacting the customer-facing primary instance.
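On the application side, using read replicas usually means splitting traffic: writes go to the primary, and reads are spread across the replicas. The router below is a minimal sketch of that pattern; the endpoint names are hypothetical, and treating every `SELECT` as replica-safe is a simplifying assumption.

```python
from itertools import cycle


class ReadWriteRouter:
    """Send writes to the primary and spread plain reads across read replicas in turn."""

    def __init__(self, primary: str, replicas: list):
        self.primary = primary
        self._replicas = cycle(replicas) if replicas else None

    def endpoint_for(self, sql: str) -> str:
        is_read = sql.lstrip().upper().startswith("SELECT")
        if is_read and self._replicas is not None:
            return next(self._replicas)
        # Writes (and anything that is not a plain read) go to the primary.
        return self.primary


router = ReadWriteRouter(
    "mydb-primary.example.internal",  # hypothetical endpoint names
    ["mydb-replica-1.example.internal", "mydb-replica-2.example.internal"],
)
print(router.endpoint_for("INSERT INTO orders VALUES (1)"))  # mydb-primary.example.internal
print(router.endpoint_for("SELECT * FROM orders"))           # mydb-replica-1.example.internal
print(router.endpoint_for("select count(*) from orders"))    # mydb-replica-2.example.internal
```

Because replicas can lag, a read that must see a write the user just made is safer on the primary; a real router would need some way to flag those queries.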
