Suppose we have several vehicles, some of them cars and some of them trucks. We measure the weight of each vehicle and count its wheels. For now, we also ignore the fact that cars and trucks look different. How can we get an algorithm to tell them apart?

If you haven't already, check out this article for the intuition of machine learning before we continue: https://rhurbans.com/machine-learning-intuition/

Almost all cars have exactly four wheels, and many large trucks have more than four wheels. Trucks are usually heavier than cars, but a large sport-utility vehicle may be as heavy as a small truck. We could find relationships between the weight and number of wheels of vehicles to predict whether a vehicle is a car or a truck. A decision tree can be useful here.

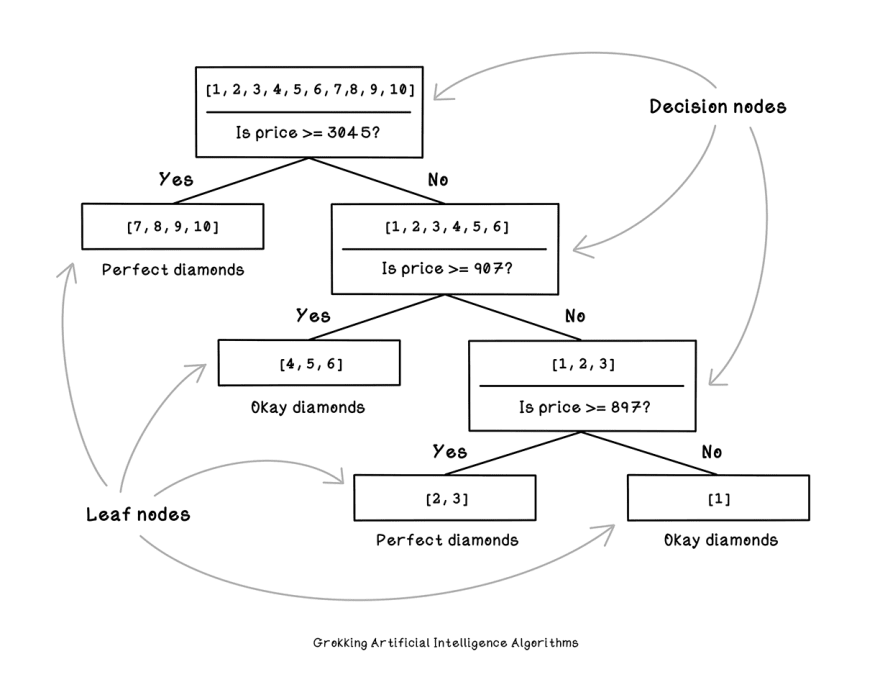
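To make the car-versus-truck reasoning above concrete, here is a minimal hand-written sketch of such a decision process. The function name `classify_vehicle` and the 3500 kg weight threshold are assumptions chosen purely for illustration; a trained decision tree would learn its own questions and thresholds from data.

```python
def classify_vehicle(weight_kg: float, wheels: int) -> str:
    """Classify a vehicle as 'car' or 'truck' using two hand-picked questions."""
    # Question 1: more than four wheels? Almost all cars have exactly four.
    if wheels > 4:
        return "truck"
    # Question 2: is it heavy? The 3500 kg cut-off is an illustrative assumption.
    if weight_kg >= 3500:
        return "truck"
    return "car"


print(classify_vehicle(1400, 4))    # a typical car
print(classify_vehicle(12000, 6))   # a large truck with six wheels
print(classify_vehicle(4200, 4))    # a heavy four-wheeled vehicle
```

Each `if` plays the role of one decision in the tree, and each `return` plays the role of a leaf.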

Decision trees are structures that describe a series of decisions made to reach a solution to a problem, so a decision tree can capture the logic above. Next, let's think about how we might train a model to categorize diamonds.

A decision tree consists of decision nodes and leaf nodes. A decision node contains the question being asked, the positive examples (those for which the answer is yes), and the negative examples (those for which the answer is no).

A leaf node contains only a list of examples. These are all examples that have been categorized correctly.
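The two node types described above could be represented roughly as follows. This is only a sketch: the class names `DecisionNode` and `LeafNode`, the helper `ask`, and the sample vehicle data are all hypothetical and chosen for illustration.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class LeafNode:
    # A leaf holds nothing but the examples that ended up here.
    examples: list


@dataclass
class DecisionNode:
    question: str             # human-readable question being asked
    positive_examples: list   # examples for which the answer is yes
    negative_examples: list   # examples for which the answer is no


def ask(question: str, test: Callable[[dict], bool], examples: list) -> DecisionNode:
    """Split the examples by a yes/no test and record both groups."""
    positive = [e for e in examples if test(e)]
    negative = [e for e in examples if not test(e)]
    return DecisionNode(question, positive, negative)


# Made-up sample data.
vehicles = [
    {"weight_kg": 1400, "wheels": 4, "label": "car"},
    {"weight_kg": 2100, "wheels": 4, "label": "car"},
    {"weight_kg": 12000, "wheels": 6, "label": "truck"},
    {"weight_kg": 18000, "wheels": 10, "label": "truck"},
]

node = ask("More than four wheels?", lambda v: v["wheels"] > 4, vehicles)
leaf = LeafNode(node.positive_examples)  # every example here is a truck
print(node.question, len(node.positive_examples), len(node.negative_examples))
```

In a full tree, each group of examples would either become a leaf or be split again by another decision node.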

When building a decision tree, we test every candidate question to determine which is the best one to ask at a given point in the tree. To score a question, we use entropy, a measure of the uncertainty in a dataset.
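One common way to test questions with entropy is to split the examples by each candidate question and keep the question whose two groups have the lowest weighted entropy. The sketch below assumes questions of the form "is feature > threshold?", and the names `entropy`, `split_entropy`, `best_question`, and the sample data are hypothetical.

```python
import math
from collections import Counter


def entropy(labels):
    """Shannon entropy in bits of a list of class labels."""
    total = len(labels)
    if total == 0:
        return 0.0
    return sum((n / total) * math.log2(total / n) for n in Counter(labels).values())


def split_entropy(examples, feature, threshold):
    """Weighted entropy of the two groups produced by 'feature > threshold?'."""
    positive = [e["label"] for e in examples if e[feature] > threshold]
    negative = [e["label"] for e in examples if e[feature] <= threshold]
    total = len(examples)
    return (len(positive) / total) * entropy(positive) + \
           (len(negative) / total) * entropy(negative)


def best_question(examples, features):
    """Try every observed value of every feature as a threshold; keep the best."""
    candidates = [(f, e[f]) for f in features for e in examples]
    return min(candidates, key=lambda q: split_entropy(examples, q[0], q[1]))


# Made-up sample data, including a heavy SUV and a light four-wheeled truck.
vehicles = [
    {"weight_kg": 1400, "wheels": 4, "label": "car"},
    {"weight_kg": 1900, "wheels": 4, "label": "car"},
    {"weight_kg": 2600, "wheels": 4, "label": "car"},
    {"weight_kg": 3000, "wheels": 4, "label": "truck"},
    {"weight_kg": 12000, "wheels": 6, "label": "truck"},
    {"weight_kg": 18000, "wheels": 10, "label": "truck"},
]

print(best_question(vehicles, ["weight_kg", "wheels"]))  # ('weight_kg', 2600)
```

On this small dataset, asking whether the weight exceeds 2600 kg separates cars from trucks perfectly, so both groups have zero entropy and that question wins.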

If we had 5 Perfect diamonds and 5 Okay diamonds and picked one at random from the 10, what are the chances that it would be Perfect? The answer is 5 in 10, or 50%, which is as uncertain as a dataset with two categories can be.
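The diamond question can be turned into a number. With 5 Perfect and 5 Okay diamonds, each category has probability 0.5, and the Shannon entropy comes out to exactly 1 bit, the maximum for two categories. The helper name `entropy_from_counts` below is an assumption for illustration, and the 9/1 and 10/0 mixes are made-up comparisons.

```python
import math


def entropy_from_counts(counts):
    """Shannon entropy in bits, given how many examples fall in each category."""
    total = sum(counts)
    # Empty categories contribute nothing, so they are skipped.
    return sum((n / total) * math.log2(total / n) for n in counts if n > 0)


print(entropy_from_counts([5, 5]))    # 1.0: an even mix is maximally uncertain
print(entropy_from_counts([9, 1]))    # lower: one category dominates
print(entropy_from_counts([10, 0]))   # 0.0: no uncertainty at all
```

The closer a group of examples gets to a single category, the closer its entropy gets to zero, which is what a good question in a decision tree tries to achieve.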

Decision trees are a simple but powerful ML algorithm. To learn more, see Grokking AI Algorithms with Manning Publications: http://bit.ly/gaia-book, consider following me - @RishalHurbans, or join my mailing list for infrequent knowledge drops: https://rhurbans.com/subscribe.
