This is a second article on Argo CD. This time, we are going to see how to use it with Istio to handle canary deployments.

A canary deployment releases a new version of an application to only a small subset of servers at first. If the new release introduces an error, only a small share of users is affected. This type of deployment usually consists of multiple steps that send more and more traffic to the canary release until it receives all the traffic and becomes the stable version. Between steps, we can run tests to make sure everything is fine. If a test detects an error, we roll back to the previous working version. This makes it possible to validate a new version without impacting all users.

In this demo, we will deploy an application provided by Argo CD. More information about this application and the code here.

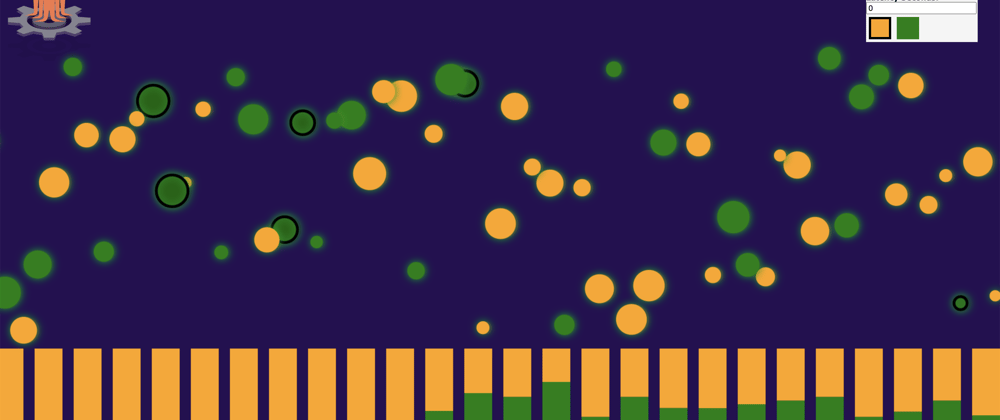

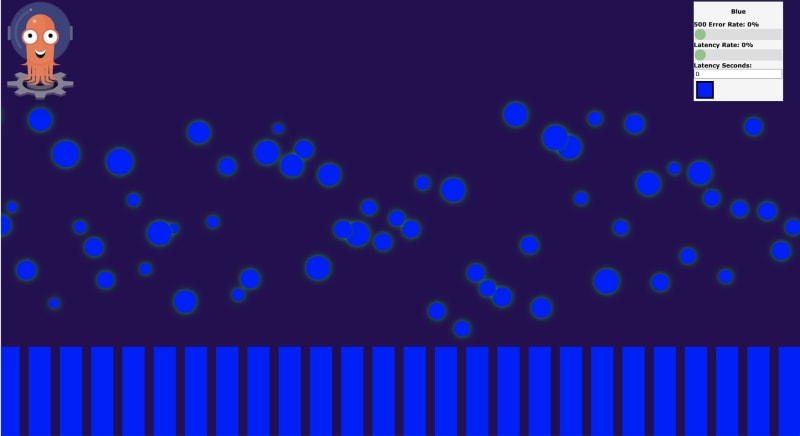

It is perfect for our demo. It provides a Web UI which makes multiple POST requests every second. Each POST request is represented by a bubble. In our example, we deploy our application with the blue version, then we update it with the orange version using canary deployment. In the picture below, you see how the traffic is routed between our current version (blue) and the new canary release (orange).

Requirements

Kubernetes cluster

We will use GKE in the demo. If you have a Google Cloud account, you can create a cluster with the command below. If you already have a cluster, you can skip to the next step.

gcloud beta container clusters create gke-argocd-canary \
  --release-channel regular

Istio

You can install Istio with istioctl.

istioctl install --set profile=demo

kubectl label namespace default istio-injection=enabled

Argo CD

kubectl create namespace argocd

kubectl apply -n argocd -f https://raw.githubusercontent.com/argoproj/argo-cd/stable/manifests/install.yaml

Argo Rollouts

Argo Rollouts is a Kubernetes controller and set of CRDs which provide advanced deployment capabilities such as blue-green, canary, canary analysis, experimentation, and progressive delivery features to Kubernetes.

https://argoproj.github.io/argo-cd/

With Kubernetes, we normally use a Deployment resource to manage our applications. With Argo Rollouts, we don't use a Deployment; it is replaced by a Rollout resource. Like a Deployment, a Rollout creates ReplicaSets, and the controller itself adds selectors to the services to identify the pods of each version.

kubectl create namespace argo-rollouts

kubectl apply -n argo-rollouts -f https://raw.githubusercontent.com/argoproj/argo-rollouts/stable/manifests/install.yaml

Prepare Istio YAML

First, we have to create Istio resources: the gateway and the virtual service.

apiVersion: networking.istio.io/v1alpha3
kind: Gateway
metadata:
  name: istio-gateway
  labels:
    app: istio-gateway
spec:
  selector:
    istio: ingressgateway
  servers:
  - port:
      number: 80
      name: http
      protocol: HTTP
    hosts:
    - "*"
---
apiVersion: networking.istio.io/v1alpha3
kind: VirtualService
metadata:
  name: istio-vs
  labels:
    app: istio-vs
spec:
  hosts:
  - "*"
  gateways:
  - istio-gateway
  http:
  - name: primary
    route:
    - destination:
        host: demo
      weight: 100
    - destination:
        host: demo-canary
      weight: 0

For now, 100% of the traffic goes to the main service (called demo here). We'll see later that Argo Rollouts changes these weights to send some traffic to the canary service.
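To get a feel for what these weights mean, here is a minimal Python sketch that simulates weighted routing. It is only an illustration, not how Istio is implemented: the `route_requests` helper, the request count, and the 80/20 split are assumptions made for the example, while the 100/0 split is the one from the VirtualService above.

```python
import random
from collections import Counter

def route_requests(weights, n_requests, seed=0):
    """Pick a destination for each request in proportion to its weight.

    `weights` maps a destination host to its VirtualService weight (0-100).
    """
    rng = random.Random(seed)  # seeded so the simulation is repeatable
    hosts = list(weights)
    picks = rng.choices(hosts, weights=[weights[h] for h in hosts], k=n_requests)
    return Counter(picks)

# Initial state from the VirtualService: all traffic goes to the stable service.
initial = route_requests({"demo": 100, "demo-canary": 0}, 1000)
print(initial)

# Hypothetical later state, once the canary weight has been raised to 20.
shifted = route_requests({"demo": 80, "demo-canary": 20}, 1000)
print(shifted)
```

With a weight of 0, the canary service receives no requests at all; with 80/20, roughly one request in five lands on it.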

Create our Rollout resource

The rollout resource is like a deployment in Kubernetes with some new fields.

Let's focus on them:

strategy:
  # which strategy to use (blueGreen, canary...)
  canary:
    # this part lists all the steps performed during an update.
    steps:
    # at the start of a new release, we send 20% of the traffic to the canary release.
    # To do that, Argo Rollouts updates the weights in Istio's VirtualService.
    - setWeight: 20
    # Then we wait 1 minute to generate some traffic on the canary release.
    - pause:
        duration: "1m"
    # After 1 minute, we send 50% of the traffic to the canary release.
    - setWeight: 50
    # Then we wait again, for 2 minutes this time.
    - pause:
        duration: "2m"
    # At the end, if no more steps are present,
    # Argo Rollouts sends 100% of the traffic to the canary release.
    canaryService: demo-canary # our canary ClusterIP service.
    stableService: demo # our main ClusterIP service.
    trafficRouting:
      istio:
        virtualService:
          name: istio-vs
          routes:
          - primary
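To make the sequence of steps concrete, here is a small Python sketch that walks through the same step list and prints the elapsed time and the traffic split after each step. It is not part of Argo Rollouts: `parse_duration` and `canary_timeline` are hypothetical helpers, and the duration parser is deliberately simplified to handle only values such as "30s", "1m", or "2h".

```python
def parse_duration(text):
    """Convert a duration such as "30s", "1m" or "2h" to seconds (simplified parser)."""
    units = {"s": 1, "m": 60, "h": 3600}
    return int(text[:-1]) * units[text[-1]]

def canary_timeline(steps):
    """Return (elapsed_seconds, canary_weight, stable_weight) after each step."""
    elapsed, canary = 0, 0
    timeline = []
    for step in steps:
        if "setWeight" in step:
            canary = step["setWeight"]
        elif "pause" in step:
            elapsed += parse_duration(step["pause"]["duration"])
        timeline.append((elapsed, canary, 100 - canary))
    # With no steps left, the new release receives all the traffic.
    timeline.append((elapsed, 100, 0))
    return timeline

# Same steps as in the Rollout strategy.
steps = [
    {"setWeight": 20},
    {"pause": {"duration": "1m"}},
    {"setWeight": 50},
    {"pause": {"duration": "2m"}},
]

for elapsed, canary, stable in canary_timeline(steps):
    print(f"t={elapsed:>3}s  canary={canary:>3}%  stable={stable:>3}%")
```

The whole update therefore takes about three minutes of pauses before the new release receives all the traffic.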

The full YAML of our Rollout resource with its ClusterIP services:

---
apiVersion: argoproj.io/v1alpha1
kind: Rollout
metadata:
  name: demo
  labels:
    app: demo
spec:
  strategy:
    canary:
      steps:
      - setWeight: 20
      - pause:
          duration: "1m"
      - setWeight: 50
      - pause:
          duration: "2m"
      canaryService: demo-canary
      stableService: demo
      trafficRouting:
        istio:
          virtualService:
            name: istio-vs
            routes:
            - primary
  replicas: 4
  revisionHistoryLimit: 2
  selector:
    matchLabels:
      app: demo
      version: blue
  template:
    metadata:
      labels:
        app: demo
        version: blue
    spec:
      containers:
      - name: demo
        image: argoproj/rollouts-demo:blue
        imagePullPolicy: Always
        ports:
        - name: web
          containerPort: 8080
        resources:
          requests:
            memory: "64Mi"
            cpu: "100m"
          limits:
            memory: "128Mi"
            cpu: "140m"
---
apiVersion: v1
kind: Service
metadata:
  name: demo
spec:
  ports:
  - port: 80
    targetPort: 8080
  selector:
    app: demo
  type: ClusterIP
---
apiVersion: v1
kind: Service
metadata:
  name: demo-canary
spec:
  ports:
  - port: 80
    targetPort: 8080
  selector:
    app: demo
  type: ClusterIP

Deploy the application

We now have the two files needed to deploy our application with Argo CD. Connect to your Argo CD instance and deploy the application.

A quick reminder of how to do that:

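- Get the initial admin password of your running Argo CD. In Argo CD versions before 1.9, it is the name of the argocd-server pod; newer versions store it in the argocd-initial-admin-secret secret instead:

kubectl get pods \
  -n argocd \
  -l app.kubernetes.io/name=argocd-server \
  -o "jsonpath={.items[0].metadata.name}"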

- Then use port forwarding to access it:

kubectl port-forward svc/argocd-server -n argocd 8080:443

Information to add when you create the application in Argo CD :

When it is deployed, we can access the application. We just need the load balancer IP used by Istio:

kubectl -n istio-system get services istio-ingressgateway \
  -o "jsonpath={.status.loadBalancer.ingress[0].ip}"

Open this IP in your browser and you will see something like this:

Remember, each bubble represents a POST request made to your load balancer IP.

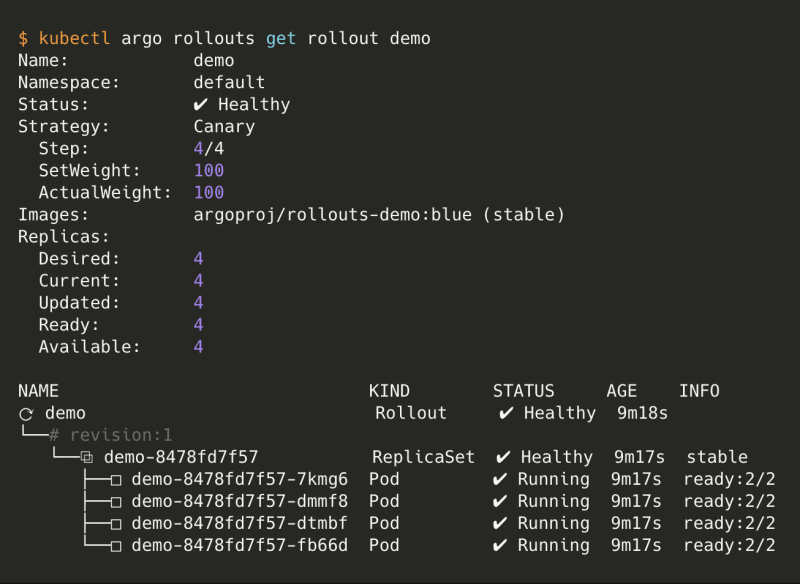

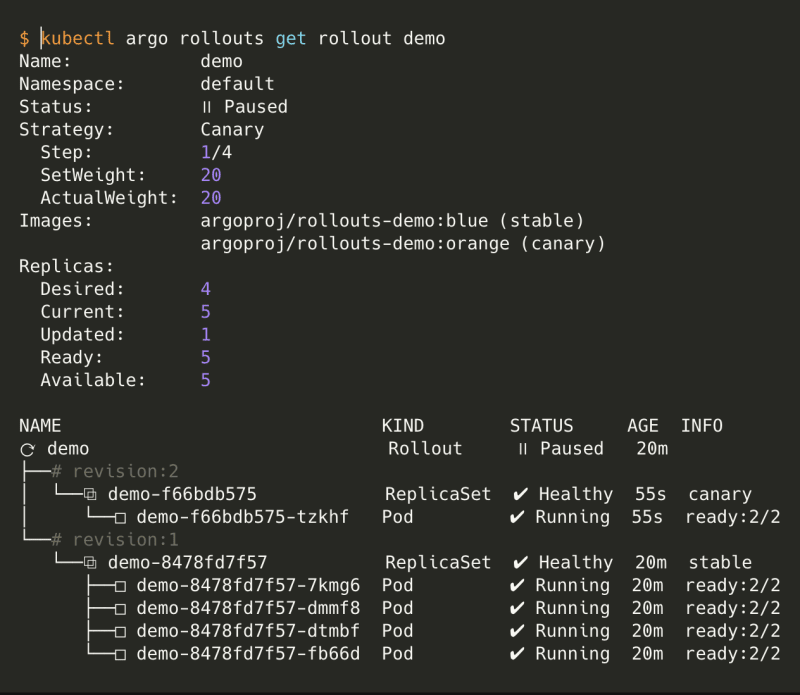

Before moving forward, let's see a new kubectl command.

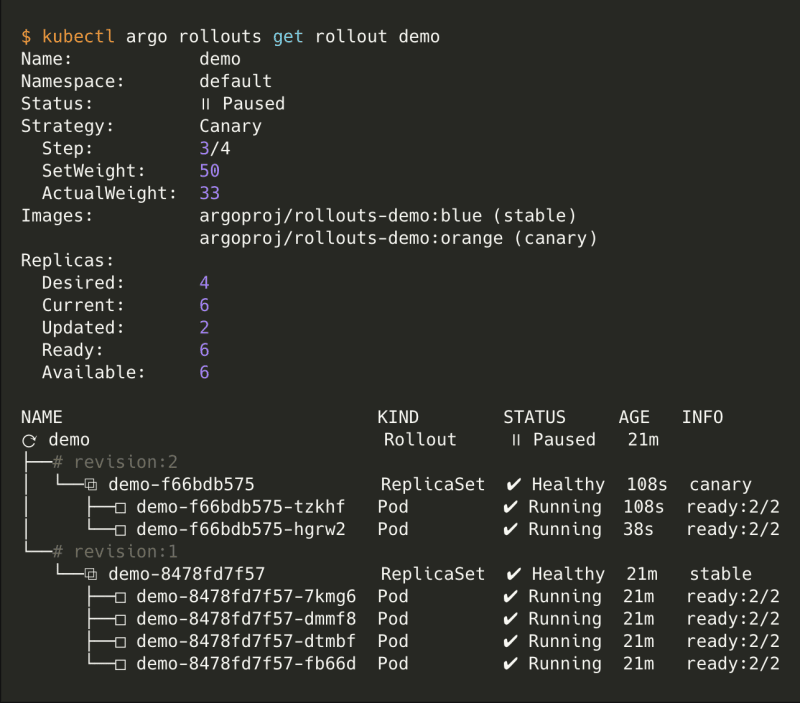

kubectl argo rollouts get rollout demo is useful to see the status of your rollout. During a canary deployment, all the steps will be displayed.

Deploy a new release

OK, blue bubbles are great, but we want to try orange ones. There are two ways to change the image of our rollout: commit an update, or use the kubectl CLI. We are going to use the second option, which is simpler for the demo.

Like kubectl set image, there is an equivalent for rollouts:

kubectl argo rollouts set image demo "*=argoproj/rollouts-demo:orange"

You should have something like this:

You can see the progress of the canary deployment and its current step.

So if you return to your application from your browser, you'll see something like this:

After a minute or so, the traffic is split evenly between the two versions.

Once it's done, you can display the state of your rollout again. The rollout should be healthy and have the orange tag as stable.

Rollout summary:

- The Rollout resource comes from Argo Rollouts, a separate controller from the Argo project that works alongside Argo CD

- You create only a Rollout resource, not a Deployment

- You can use a canary or blue-green strategy

- For traffic shifting, you need a supported ingress or service mesh: Istio, AWS ALB, Nginx, SMI

- Argo Rollouts itself updates the service weights in the Istio VirtualService

Validation

We can add some tests during the canary deployment to ensure there is no problem with the new version. It can be a simple test like executing a command or a complex one like querying Prometheus. That's what we are going to do.

Back in our YAML files, we need to create a new resource of kind "AnalysisTemplate":

---
apiVersion: argoproj.io/v1alpha1
kind: AnalysisTemplate
metadata:
  name: success-rate
spec:
  args:
  - name: service-name
  metrics:
  - name: success-rate
    successCondition: result[0] >= 0.95
    interval: 30s
    failureLimit: 1
    provider:
      prometheus:
        address: http://prometheus.istio-system.svc.cluster.local:9090
        query: |
          sum(irate(
            istio_requests_total{reporter="source",destination_service=~"{{args.service-name}}",response_code!~"5.*"}[1m]
          )) /
          sum(irate(
            istio_requests_total{reporter="source",destination_service=~"{{args.service-name}}"}[1m]
          ))

In this test, we query Prometheus which is installed by Istio.
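Conceptually, the PromQL query divides the rate of requests that did not return a 5xx code by the rate of all requests. The Python sketch below reproduces that ratio on plain request counts. It is a simplification (Prometheus works on per-second rates, not raw counts), and the `success_rate` helper and the sample counts are assumptions made for illustration.

```python
def success_rate(requests_by_code):
    """Share of requests whose response code does not start with "5".

    `requests_by_code` maps an HTTP status code (as a string) to a request count.
    """
    total = sum(requests_by_code.values())
    if total == 0:
        return None  # no traffic yet, so there is nothing to measure
    ok = sum(n for code, n in requests_by_code.items() if not code.startswith("5"))
    return ok / total

# Hypothetical counts for one measurement window.
sample = {"200": 90, "404": 5, "500": 5}
rate = success_rate(sample)
print(rate, rate >= 0.95)
```

Note that a 404 counts as a success here, exactly as in the query: only 5xx responses are treated as errors.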

We query Prometheus every 30 seconds during the canary deployment to check the share of HTTP requests that succeeded over the last minute. The success condition is result[0] >= 0.95, so if more than 5% of the requests return a 5xx error, the measurement is considered failed.

So for a 5-minute deployment, the test runs multiple times. With failureLimit: 1, a single failed measurement is tolerated; if a second one fails, the deployment stops and rolls back to the previous healthy version.
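The interplay between the success condition and the failure limit can be sketched in a few lines of Python. This is an illustrative model, not the Argo Rollouts implementation: `run_analysis` is a hypothetical function, and it assumes the run fails once the number of failed measurements exceeds the failure limit. The last list uses the two success rates reported later in this article (77% and 87%).

```python
def run_analysis(measurements, threshold=0.95, failure_limit=1):
    """Evaluate measurements in order and return "Failed" or "Successful".

    A measurement fails when it is below `threshold`. The run fails as soon as
    the number of failed measurements exceeds `failure_limit`.
    """
    failures = 0
    for value in measurements:
        if value < threshold:
            failures += 1
            if failures > failure_limit:
                return "Failed"
    return "Successful"

print(run_analysis([0.99, 0.97, 1.0]))   # every measurement passes
print(run_analysis([0.99, 0.90, 0.98]))  # one failure, still within the limit
print(run_analysis([0.77, 0.87]))        # two failures, limit exceeded
```

Allowing one failure makes the analysis less sensitive to a single noisy measurement, at the cost of reacting 30 seconds later to a genuinely bad release.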

Our test is ready; now we need to add it to our workflow. Let's see what is new in the rollout YAML.

---
apiVersion: argoproj.io/v1alpha1
kind: Rollout
metadata:
  name: demo
  labels:
    app: demo
spec:
  strategy:
    canary:
      # New part
      analysis:
        templates:
        # name of AnalysisTemplate
        - templateName: success-rate
        # When it begins (after the 1-minute pause)
        startingStep: 2
        args:
        - name: service-name
          # name of the canary service (used in prometheus to find the correct metrics)
          value: demo-canary.default.svc.cluster.local
      steps:
      - setWeight: 20
      - pause:
          duration: "1m"
      - setWeight: 50
      - pause:
          duration: "2m"
      canaryService: demo-canary
      stableService: demo
[....]

The test is executed every 30 seconds once the 1-minute pause is over. We start it after the pause because some traffic must be generated first; otherwise, there would be no data to query in Prometheus.
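Steps are counted from zero, so startingStep: 2 points at the second setWeight. The short Python sketch below lists the steps with their index and shows from which one the analysis is active; `analysis_active` is a hypothetical helper written for this illustration.

```python
def analysis_active(steps, starting_step):
    """Return (index, step, active) tuples; the analysis is active from `starting_step` on."""
    return [(i, step, i >= starting_step) for i, step in enumerate(steps)]

# The four steps of our Rollout, in order.
steps = ["setWeight: 20", "pause: 1m", "setWeight: 50", "pause: 2m"]

for index, step, active in analysis_active(steps, starting_step=2):
    state = "analysis running" if active else "no analysis yet"
    print(f"step {index}: {step:<14} {state}")
```

Steps 0 and 1 (the first weight change and the 1-minute pause) run without analysis, which leaves time for the canary to receive traffic.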

Let's try it! We need to deploy a new tag of the image and generate some HTTP requests with a 500 status code. There are two ways to generate bad traffic: use the deployed application directly and choose the error rate with the slider, or simply deploy a "bad" version.

We will deploy a bad version:

kubectl argo rollouts set image demo "*=argoproj/rollouts-demo:bad-green"

We can see two new types of bubbles:

- Green = successful HTTP request

- Green with black circle = failed HTTP request

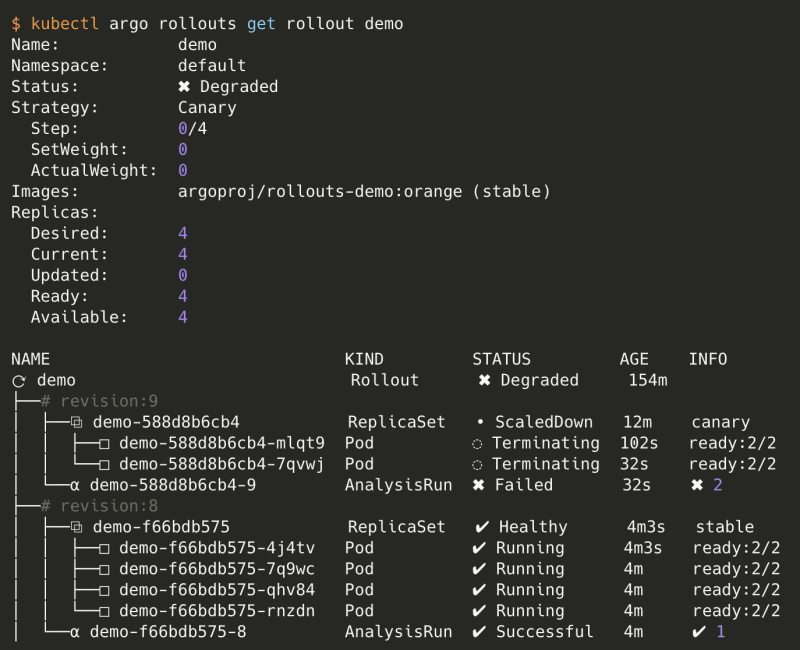

Let's see what happens if we describe the rollout.

The test fails twice and the status of the rollout is degraded. Argo Rollouts has rolled back to the previous healthy version but keeps a degraded state. To return to a healthy state, we must manually change the version back to the correct image.

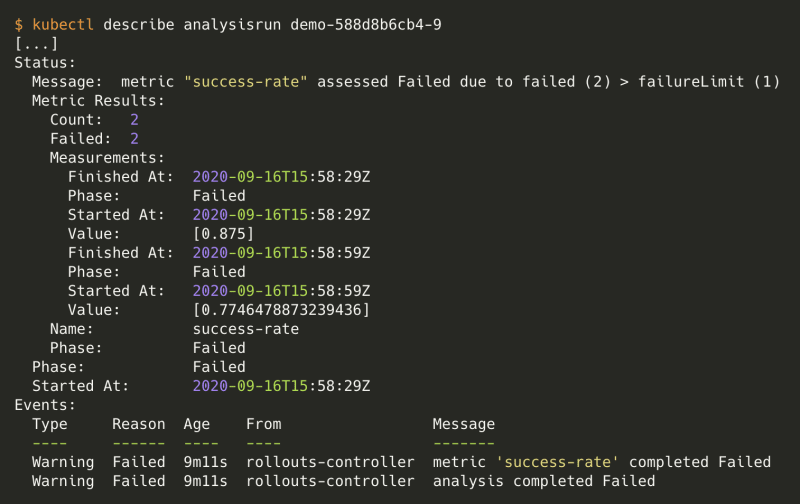

If you want to have details about failing tests, simply execute:

$ kubectl get analysisrun
NAME                STATUS
demo-588d8b6cb4-9   Failed

Only 77% and 87% of the HTTP requests succeeded. That was not enough: we wanted at least 95%.

Validation summary:

- Analysis template is used for testing

- You can link an analysis template to multiple rollouts

- You can have multiple analysis templates, each starting at a different step in a rollout

Full code here: https://github.com/pabardina/argocd-canary
