The AWS Virtual Private Cloud (VPC) is a foundational feature of AWS. Many services and resources in your AWS configuration depend on the VPC and how it is configured. Hence, it’s important to have a fundamental understanding of how the VPC works, how it is configured, and how other core network services integrate with it.

This blog will cover some of the essential steps to creating a VPC and configuring core network services. The topics to be covered are:

- The Default VPC

- Creating a new VPC

- Creating and configuring subnets

- Network security and security groups

- Route tables, the internet gateway and NAT gateways

The Default VPC

After you create an AWS account, you will have a default VPC available in each region.

In my account I have set a Name tag called “default” on the default VPC, because default VPCs do not come with a Name tag set. The purpose of the default VPC is to get you up and running quickly. It includes core default resources: a default route table, a default internet gateway attached to the default VPC, a default security group, a default network ACL and a default subnet in each availability zone.

This allows you to immediately begin deploying compute resources into the default VPC and to use additional services like the Elastic Load Balancer, Amazon RDS, and Amazon EMR. You are able to modify the components of your default VPC to meet your needs, but when learning or setting up a new environment you will want to create a new VPC with all its related components.

Create a new VPC

The very first thing one needs to do when creating a new VPC, after giving it a name, is to select an IPv4 CIDR block. In other words, choose an IP address range for your VPC.

There are three main recommendations when choosing a range for your VPC. They are:

- Ensure the range is one of the RFC1918 ranges

  - 10.0.0.0 - 10.255.255.255

  - 172.16.0.0 - 172.31.255.255

  - 192.168.0.0 - 192.168.255.255

- Choose a /16 address range

  - This will allow for 65,536 addresses and should be enough for most initial use cases

  - The allowed block size is between /16 and /28

- Avoid ranges that overlap with other networks

  - If unsure, start with a smaller address range

  - Then add additional noncontiguous ranges to your VPC later on
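The three recommendations above can be checked mechanically. The following is a minimal sketch using Python's standard `ipaddress` module; the helper name `check_vpc_cidr` and the example networks are made up for illustration and are not part of any AWS tooling.

```python
import ipaddress

# The three RFC 1918 private ranges listed above, written as CIDR blocks.
RFC1918_RANGES = [
    ipaddress.ip_network("10.0.0.0/8"),
    ipaddress.ip_network("172.16.0.0/12"),
    ipaddress.ip_network("192.168.0.0/16"),
]


def check_vpc_cidr(cidr, existing_networks=()):
    """Return a list of problems with a proposed VPC CIDR block (empty if none)."""
    network = ipaddress.ip_network(cidr)
    problems = []
    if not any(network.subnet_of(private) for private in RFC1918_RANGES):
        problems.append("not inside an RFC 1918 range")
    if not 16 <= network.prefixlen <= 28:
        problems.append("block size must be between /16 and /28")
    for other in existing_networks:
        if network.overlaps(ipaddress.ip_network(other)):
            problems.append(f"overlaps with {other}")
    return problems


print(check_vpc_cidr("10.0.0.0/16"))                        # no problems
print(ipaddress.ip_network("10.0.0.0/16").num_addresses)    # size of a /16
print(check_vpc_cidr("172.31.0.0/16", ["172.31.4.0/24"]))   # overlap reported
```

This only validates a range on paper. It does not know about networks you have not listed, so the `existing_networks` argument is only as good as your own inventory.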

If you wish, you may also include an IPv6 CIDR block, but this blog will focus only on IPv4 configurations. It should be noted that in each subnet you create, the first four IP addresses and the last IP address cannot be used, as AWS reserves them for its own internal purposes.

Unless you have strict requirements, such as regulatory compliance or licensing restrictions, that need compute resources to run on dedicated hardware, choose the default tenancy. To learn more about AWS tenancy, check out Understanding AWS Tenancy.

On the creation of a new VPC, the following will also be automatically created and associated to the new VPC:

- A single main route table

- A single default security group

- A single network access control list

Creating and Configuring Subnets

An AWS region is divided into multiple availability zones. Each subnet resides within a single availability zone and is a sub range of the IP space created for the VPC. Subnets are essentially how you get your resources into availability zones, so you create subnets in multiple availability zones in order to deploy highly available applications.

When creating a subnet, after assigning a name, you select the VPC that the subnet is associated with. Then select an availability zone (if you do not select one, AWS will choose one for you). Next, type a sub range of the VPC's IP space as a CIDR block for the subnet. In this example we are creating three public subnets and assigning the ranges 172.31.0.0/24, 172.31.1.0/24 and 172.31.2.0/24 respectively. The first public subnet will reside in availability zone af-south-1a, the second in af-south-1b and the third in af-south-1c. The intention is to deploy compute resources across all three availability zones to ensure high availability.

The CIDR block of /24 will allow each subnet to have 256 addresses, of which AWS reserves five, leaving 251 usable addresses per subnet.
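The subnet arithmetic can be reproduced with Python's standard `ipaddress` module. This is a small sketch rather than anything AWS-specific: the helper names are illustrative, and the only AWS fact it encodes is the one stated above, that the first four addresses and the last address of each subnet are reserved.

```python
import ipaddress

AWS_RESERVED_PER_SUBNET = 5  # first four addresses plus the last one

vpc = ipaddress.ip_network("172.31.0.0/16")
zones = ["af-south-1a", "af-south-1b", "af-south-1c"]

# Pair each availability zone with the next /24 block carved out of the VPC range.
public_subnets = dict(zip(zones, vpc.subnets(new_prefix=24)))


def usable_addresses(subnet):
    """Addresses left for your own resources after the AWS reservation."""
    return subnet.num_addresses - AWS_RESERVED_PER_SUBNET


def reserved_addresses(subnet):
    """The five addresses in the subnet that cannot be assigned to resources."""
    return [subnet[0], subnet[1], subnet[2], subnet[3], subnet[-1]]


for zone, subnet in public_subnets.items():
    print(zone, subnet, usable_addresses(subnet))
```

For the first subnet, `reserved_addresses` returns 172.31.0.0 through 172.31.0.3 plus 172.31.0.255.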

The above diagram introduces the concept of public and private subnets. We will address this concept later on in more detail when we talk about security groups and NAT gateways.

In the diagram, the public subnets are represented in green and the private subnets are represented in blue.

It should be noted that for simplicity, this example does not include a load balancer for instances in the public subnet. Load balancers are out of the scope of this blog.

Above we created what we called three public subnets, one in each availability zone. You would do the same for the private subnets: one private subnet in each availability zone.

The concept is simple: compute resources that reside in the public subnets will be accessible from the internet and have both public and private IP addresses. The resources that reside in the private subnets will not be accessible from the internet and only have private IP addresses. When launching compute resources like EC2 instances, you can define whether the instance will have a public IP address, and if so, it can be assumed it will reside in a public subnet. Strictly speaking, what makes a subnet public is a route to an internet gateway, which we configure later on. Access to the resources in these subnets is controlled by security groups.

Network Security with Security Groups

Security groups are simple yet powerful tools that help you implement network security within your VPC. They are akin to a firewall in a traditional data center. Security groups can be considered the first layer of defense protecting your VPC resources. The second layer of defense is the Network Access Control List (NACL).

There are two core differences between them. First, security groups are stateful, meaning a request coming from one direction automatically sets up permissions for the response to that request coming from the other direction. NACLs are stateless, meaning that inbound and outbound rules need to be explicitly set. Second, security groups are associated with specific compute instances, whereas NACLs apply at the subnet level and cover all compute resources in the subnet.

Configuring NACLs is optional for a VPC. They are useful when a large number of instances within a subnet need fine-grained decisions on network traffic. NACLs are not in the scope of this blog, and for our use case the default NACL configuration for the created VPC is used.

In our example we have two sets of compute resources: web servers and application servers. These servers will reside in the public and private subnets respectively. The web servers in the public subnets will accept traffic from the internet, and in the course of handling this traffic they will offload requests to backend application servers in the private subnets.
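To make the stateful versus stateless distinction concrete, here is a deliberately simplified toy model in Python. The class names are invented for this sketch and it ignores most of what real security groups and NACLs do (rule numbers, protocols, explicit deny rules, ephemeral ports); it only shows where the reply traffic is decided.

```python
class StatefulFilter:
    """Toy security group: replies to allowed inbound requests are let out automatically."""

    def __init__(self, allowed_inbound_ports):
        self.allowed_inbound_ports = set(allowed_inbound_ports)
        self.tracked_connections = set()

    def allow_inbound(self, client_ip, port):
        if port in self.allowed_inbound_ports:
            # Remember the connection so that the response is permitted later.
            self.tracked_connections.add((client_ip, port))
            return True
        return False

    def allow_reply(self, client_ip, port):
        return (client_ip, port) in self.tracked_connections


class StatelessFilter:
    """Toy NACL: inbound and outbound traffic are evaluated independently."""

    def __init__(self, allowed_inbound_ports, outbound_allowed):
        self.allowed_inbound_ports = set(allowed_inbound_ports)
        self.outbound_allowed = outbound_allowed

    def allow_inbound(self, client_ip, port):
        return port in self.allowed_inbound_ports

    def allow_reply(self, client_ip, port):
        # Nothing is remembered: the reply passes only if an outbound rule says so.
        return self.outbound_allowed


security_group = StatefulFilter(allowed_inbound_ports=[80])
nacl_without_outbound_rule = StatelessFilter(allowed_inbound_ports=[80], outbound_allowed=False)
```

With these two objects, an inbound request on port 80 is accepted by both, but only the stateful filter lets the response back out without an extra rule.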

Each group of instances shares a common purpose, which means different rules will apply to each group and they will reside within different security groups. For example, the web servers will have a security group rule that allows web traffic from everywhere on the internet. For the backend application servers, however, the security group rule will state that only TCP port 5100 traffic from the web servers will be accepted, creating a secure flow of traffic to these application servers. This is illustrated in the accompanying diagram. (A third availability zone is assumed)

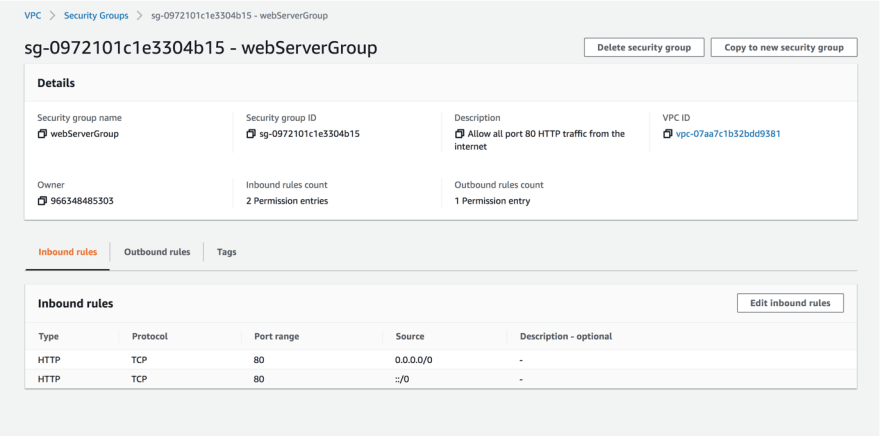

To configure this on the console you would start by creating the first security group for the web servers. Give the security group a name and a description, and select the appropriate VPC. Then edit the inbound rules for this particular security group.

Security group rules are quite flexible, allowing for many combinations of possible rules. In this case, what we want to do is allow all HTTP (web) traffic from the internet, using the TCP protocol on port 80. In a live scenario, this will probably be encrypted HTTPS traffic on port 443. All other traffic will be blocked by default. Once you have created the rule, the security group configuration for the web servers will look like this:

For the backend application servers, we do not want traffic sourced from the internet, or any other source except for the web servers. So, we will create a security group for the backend servers and edit the inbound rules to look like this:

Note that, in this case, the custom TCP rule’s source is configured as the web servers’ security group. This only allows traffic to port 5100 on the backend servers from instances within the web servers’ security group, making for a very flexible and almost zero maintenance configuration while still applying the principle of least privilege.
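The two inbound rule sets described above can be modelled in a few lines of Python. This is a sketch of the matching logic only, under the assumption that a rule source is either a CIDR block or the name of another security group; the class and function names and the group names `web-servers` and `app-servers` are invented for the example.

```python
import ipaddress
from dataclasses import dataclass, field


@dataclass(frozen=True)
class InboundRule:
    protocol: str
    port: int
    source: str  # either a CIDR block or the name of another security group


@dataclass
class SecurityGroup:
    name: str
    inbound: list = field(default_factory=list)


def is_allowed(group, protocol, port, source_ip, source_groups=()):
    """Return True if any inbound rule matches; everything else is denied."""
    for rule in group.inbound:
        if rule.protocol != protocol or rule.port != port:
            continue
        if rule.source in source_groups:
            return True  # sender belongs to the referenced security group
        try:
            network = ipaddress.ip_network(rule.source)
        except ValueError:
            continue  # source names a security group the sender is not in
        if ipaddress.ip_address(source_ip) in network:
            return True
    return False


web_sg = SecurityGroup("web-servers", [InboundRule("tcp", 80, "0.0.0.0/0")])
app_sg = SecurityGroup("app-servers", [InboundRule("tcp", 5100, "web-servers")])

# Anyone on the internet may reach the web servers on port 80.
print(is_allowed(web_sg, "tcp", 80, "203.0.113.10"))
# Only members of the web servers' group may reach the backend on port 5100.
print(is_allowed(app_sg, "tcp", 5100, "172.31.0.15", source_groups=["web-servers"]))
print(is_allowed(app_sg, "tcp", 5100, "203.0.113.10"))
```

Referencing a group instead of IP addresses is what keeps the backend rule maintenance free: web servers can be added or replaced without touching it.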

Route tables, the Internet Gateway and the NAT Gateway

Compute resources within the subnets of a VPC need to send traffic within the VPC as well as to destinations outside of it. This is managed by route tables. A route table is a list of rules stating that traffic for a certain destination should go to the appropriate target gateway.

When the VPC is first created, a default route table is assigned. It has a single route with a target of “local”, which applies to traffic within the VPC only. All other route tables you create will also start with the same single default route. Initially, the default route table is also the main route table. Subsequent route tables can be set as the main route table, but there can only be one main route table at a time.

The main route table for a VPC differs slightly from the other route tables that may exist: it cannot be deleted, and all subnets are implicitly associated with the main route table unless they are explicitly associated with another route table. You can also explicitly associate subnets with the main route table. This is useful in case you want to change your main route table for any reason.

There are a number of ways of managing routes and route tables. You could create a route table for each subnet and associate each subnet with its allotted route table. You could create one private and one public route table and associate your private and public subnets with each respectively. The route table strategy you select will largely depend on how you decide to design your VPC infrastructure.

In our example, I’ll create a single public route table for all three public subnets and, for the private subnets, one route table each. The three public subnets will be associated with the single public route table and each private subnet will be associated with its own private route table. The reason for one public route table and three private route tables will become clearer when we add NAT gateways for the private subnets.
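The association rules described above fit in a small lookup. In this sketch the subnet and route table names are hypothetical placeholders (real identifiers look different and are assigned by AWS); the point is the fallback to the main route table when no explicit association exists.

```python
MAIN_ROUTE_TABLE = "rtb-main"  # hypothetical name for the VPC's main route table

# Explicit associations for the layout in this example:
# one shared public route table, one private route table per private subnet.
explicit_associations = {
    "public-a": "rtb-public",
    "public-b": "rtb-public",
    "public-c": "rtb-public",
    "private-a": "rtb-private-a",
    "private-b": "rtb-private-b",
    "private-c": "rtb-private-c",
}


def effective_route_table(subnet_name, associations=explicit_associations,
                          main=MAIN_ROUTE_TABLE):
    """Subnets without an explicit association implicitly use the main route table."""
    return associations.get(subnet_name, main)


print(effective_route_table("public-b"))
print(effective_route_table("private-c"))
print(effective_route_table("brand-new-subnet"))  # falls back to the main table
```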

Let’s see this in action and how we create the gateways, routes and subnet associations for both our private and public subnets. To send traffic out to the internet from the public subnets, an internet gateway needs to be created and attached to the VPC.

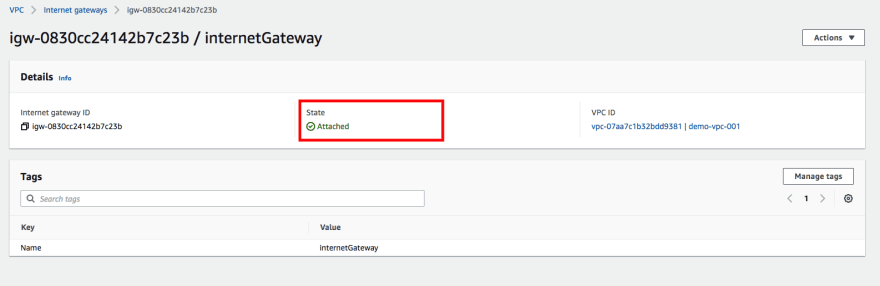

Once the internet gateway is created, with a name tag, one then attaches it to a VPC.

The internet gateway will now be in an attached state and ready to pass traffic to the internet.

Now we will create our public route table.

At this point you will need to assign a new route to the public route table. The destination will be 0.0.0.0/0. The new route will allow all traffic that is not destined for the VPC to go out to the internet. The route should be configured to point to the internet gateway as the target, allowing traffic to be routed out to the internet for the public subnets.

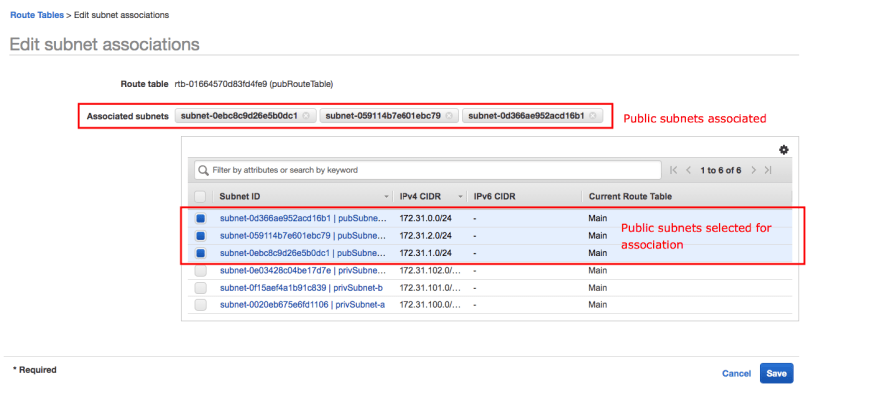

There are two things to be aware of here. First, the order of the routes does not matter, because the most specific route that matches the destination is used. Second, the internet gateway is not a single point of failure: it is an abstraction backed by a highly available service. Let’s now associate the public subnets with the public route table.
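Before moving on, the most-specific-route behaviour can be sketched in Python with the standard `ipaddress` module. The function name `select_target` and the target label `igw-example` are placeholders for illustration; the route entries mirror the public route table in this example (the local route for the VPC range plus the 0.0.0.0/0 route).

```python
import ipaddress


def select_target(routes, destination_ip):
    """Pick the target of the most specific (longest prefix) matching route."""
    address = ipaddress.ip_address(destination_ip)
    matches = []
    for cidr, target in routes:
        network = ipaddress.ip_network(cidr)
        if address in network:
            matches.append((network.prefixlen, target))
    if not matches:
        return None  # no route covers this destination
    return max(matches)[1]


# Listed with the catch-all route first to show that order does not matter.
public_routes = [
    ("0.0.0.0/0", "igw-example"),
    ("172.31.0.0/16", "local"),
]

print(select_target(public_routes, "172.31.1.20"))    # inside the VPC range
print(select_target(public_routes, "93.184.216.34"))  # anywhere else
```

An address inside the VPC matches both routes, and the /16 wins over the /0 because it is more specific.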

The public subnets are now explicitly associated to the public route table. This means that compute instances that are deployed to the public subnet with a public IP address will be able to get access out to the internet via the internet gateway.

I’ve also created three private route tables and associated each private subnet with its own route table.

The web servers in the public subnet gain internet access out via routes to the internet gateway. What about the backend application servers in the private subnet? They may need to do software updates or other actions that require access to the internet. This is where the NAT gateway comes in.

A NAT gateway allows resources in a private subnet to access the internet. When you create a NAT gateway, you choose a public subnet and allocate an elastic IP to the NAT gateway. In our example I will create three NAT gateways, one in each public subnet, and add a route to each private route table that points to the relevant NAT gateway as its target. The NAT gateways must exist in a public subnet as they will have public IP addresses allocated and will need access to the internet via the internet gateway.

Create a NAT gateway in each public subnet and allocate an elastic IP.

Create a new route in each private route table and point it to its corresponding NAT gateway target.

Once each NAT gateway is created in each public subnet, the private route tables will all have a route out to the internet via their corresponding target.

This has achieved the following: any compute instances deployed to the public subnets will have access out to the internet via the internet gateway and their public IP address. Any compute instances deployed to the private subnets will have access out to the internet via the NAT gateway, which has a public IP address associated with it. The below graphic describes the configuration. (A third availability zone is assumed)

The reason for creating three NAT gateways in the three public subnets, and three route tables for the private subnets, is high availability. If services are degraded in any one availability zone, the private compute instances in the remaining working availability zones will still have access to a NAT gateway. For the public subnets, one route table is sufficient because, as previously stated, the internet gateway is highly available by default.
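The availability argument can be illustrated with a toy simulation. The sketch below compares the per-zone layout used in this example with a hypothetical alternative in which all private subnets share one NAT gateway. The function names and the failure model (a zone is either fully healthy or fully failed) are simplifying assumptions.

```python
ZONES = ["af-south-1a", "af-south-1b", "af-south-1c"]


def internet_reachable(private_zone, nat_zone_by_private_zone, failed_zones):
    """A private subnet has a way out if its own zone and its NAT gateway's zone are healthy."""
    if private_zone in failed_zones:
        return False
    return nat_zone_by_private_zone[private_zone] not in failed_zones


def surviving_zones(layout, failed_zones):
    """Zones whose private subnets can still reach the internet."""
    return [zone for zone in ZONES if internet_reachable(zone, layout, failed_zones)]


# Layout from this example: each private subnet routes to the NAT gateway in its own zone.
per_zone_nat = {zone: zone for zone in ZONES}

# Hypothetical alternative: every private subnet routes to one NAT gateway in af-south-1a.
single_nat = {zone: "af-south-1a" for zone in ZONES}

print(surviving_zones(per_zone_nat, failed_zones={"af-south-1a"}))
print(surviving_zones(single_nat, failed_zones={"af-south-1a"}))
```

With one NAT gateway per zone, losing af-south-1a leaves the other two zones with outbound access; with a single shared NAT gateway in the failed zone, none of them has it.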

In an upcoming blog post, I will be discussing compute instances in more detail and how they are deployed to the VPC.

Conclusion

Understanding the AWS VPC and its components is vital to becoming more familiar with AWS’s extended services. This has been a VPC fundamentals crash course, and the reader is encouraged to continue exploring the AWS VPC on their own to become comfortable with the fundamental networking and configuration concepts. Once again, critical feedback is encouraged.
