Git automation, whether in the form of Gitlab pipelines or Github actions, is amazing. It lets you automate many software maintenance tasks (testing, monitoring, mirroring repositories, generating documentation, building and distributing packages etc.) that until a couple of years ago took a lot of development time. These forms of automation have democratized CI/CD, bringing to the open-source world benefits that until recently either belonged mostly to the enterprise world (such as TeamCity) or came with a steep learning curve in terms of configuration (such as Jenkins).

I have been using Github actions myself for a long time on the Platypush codebase, with a Travis-CI integration to run integration tests online and a ReadTheDocs integration to automatically generate online documentation.

You and whose code?

However, a few things have changed lately, and I don't feel like I should rely much on the tools mentioned above for my CI/CD pipelines.

Github has too often taken the wrong side in DMCA disputes since it's been acquired by Microsoft. The CEO of Github has in the meantime tried to redeem himself, but the damage in the eyes of many developers, myself included, was done, all in spite of the friendly olive branch to the community handed over IRC. Most of all, that doesn't change the fact that Github has taken down more than 2000 other repos in 2020 alone, often without any appeal or legal support - the CEO bowed down in the case of youtube-dl only because of the massive publicity that the takedown attracted. Moreover, Github has yet to overcome its biggest contradiction: it advertises itself like the home for open-source software, but its own source code is not open-source, so you can't spin up your own instance on your own server. There's also increasing evidence in support of my initial suspicion that the Github acquisition was nothing but another old-school Microsoft triple-E operation. When you want to clone a Github repo you won't be prompted anymore with the HTTPS/SSH link by default, you'll be prompted with the Github CLI command, which extends the standard git command, but it introduces a couple of naming inconsistencies here and there. They could have contributed to improve the git tool for everyone's benefit instead of providing their new tool as the new default, but they have opted not to do so. I'm old enough to have seen quite a few of these examples in the past, and it never ended well for the extended party. As a consequence of these actions, I have moved the Platypush repos to a self-hosted Gitlab instance - which comes with much more freedom, but also no more Github actions.

And, after the announcement of the planned migration from travis-ci.org to travis-ci.com, with greater focus on enterprise, a limited credit system for open-source projects and a migration process that is largely manual, I have also realized that Travis-CI is another service that can't be relied upon anymore when it comes to open-source software. And, again, Travis-CI is plagued by the same contradiction as Github - it claims to be open-source friendly, but it's not open-source itself, and you can't install it on your own machine.

ReadTheDocs, luckily, seems to be still coherent with its mission of supporting open-source developers, but I'm also keeping an eye on them just in case :)

Building a self-hosted CI/CD pipeline

Even though abandoning closed-source and unreliable cloud development tools is probably the right thing to do, that leaves a hole behind: how do we bring the simplicity of the automation provided by those tools to our new home - and, preferably, in such a format that it can be hosted and moved anywhere?

Github and Travis-CI provide a very easy way of setting up CI/CD pipelines. You read the documentation, upload a YAML file to your repo, and all the magic happens. I wanted to build something that was that easy to configure, but that could run anywhere, not only in someone else's cloud.

Building a self-hosted pipeline, however, also brings its advantages. Besides freeing yourself from the concern of handing your hard-earned code to someone else who can either change their mind about their mission or take it down overnight, you have the freedom to set up and customize the build and test environment however you please. And you can easily set up integrations such as automated notifications over whichever channel you like, without the headache of installing and configuring all the dependencies to run on someone else's cloud.

In this article we'll see how to use Platypush to set up a pipeline that:

Reacts to push and tag events on a Gitlab or Github repository and runs custom Platypush actions in YAML format or Python code.

Automatically mirrors the new commits and tags to another repo - in my case, from Gitlab to Github.

Runs a suite of tests.

If the tests succeed, then it proceeds with packaging the new version of the codebase - in my case, I run the automation to automatically create the new platypush-git package for Arch Linux on new pushes, and the new platypush Arch package and the pip package on new tags.

If the tests fail, it sends a notification (over email, Telegram, Pushbullet or whichever plugin is supported by Platypush). It also sends a notification if the latest run of tests has succeeded and the previous one was failing.

Note: since I have moved my projects to a self-hosted Gitlab server, I could have also relied on the native Gitlab CI/CD pipelines, but I have eventually opted not to do so for two reasons:

Setting up the whole Docker+Kubernetes automation required for the CI/CD pipeline proved to be quite a cumbersome process. Additionally, it may require a fairly beefy machine in order to run smoothly, while ideally I wanted something that could run even on a RaspberryPi, provided that the building and testing processes aren't too resource-heavy themselves.

The alternative provided by Gitlab to setting up your Kubernetes instance and configuring the Gitlab integration is to get a bucket on the cloud to spin up a container that runs all you have to run. But if I have gone so far as to set up my own self-hosted infrastructure for hosting my code, I certainly don't want to give up on the last mile in exchange for a small discount on the Google Cloud services :)

However, if either you have enough hardware resources and time to set up your own Kubernetes infrastructure to integrate with Gitlab, or you don't mind running your CI/CD logic on the Google cloud, Gitlab CI/CD pipelines are something that you may consider - if you don't have the constraints above then they are very powerful, flexible and easy to set up.

Installing Platypush

Let's start by installing Platypush with the required integrations.

If you want to set up an automation that reacts on Gitlab events then you'll only need the http integration, since we'll use Gitlab webhooks to trigger the automation:

$ [sudo] pip install 'platypush[http]'

If you want to set up the automation on a Github repo you'll only have one or two additional dependencies, installed through the github integration:

$ [sudo] pip install 'platypush[http,github]'

If you want to be notified of the status of your builds then you may want to install the integration required by the communication channel that you want to use. We'll use Pushbullet in this example because it's easy to set up and it natively supports notifications both on mobile and desktop:

$ [sudo] pip install 'platypush[pushbullet]'

Feel free however to pick anything else - for instance, you can refer to this article for a Telegram set up or this article for a mail set up, or take a look at the Twilio integration if you want automated notifications over SMS or Whatsapp.

Once installed, create a ~/.config/platypush/config.yaml file that contains the service configuration - for now we'll just enable the web server:

# The backend listens on port 8008 by default
backend.http:
    enabled: True

Setting up a Gitlab hook

Gitlab webhooks are a very simple and powerful way of triggering things when something happens on a Gitlab repo. All you have to do is set up a URL that should be called upon a repository event (push, tag, new issue, merge request etc.), and set up an automation on the endpoint that reacts to the event.

The only requirement for this mechanism to work is that the endpoint must be reachable from the Gitlab host - it means that the host running the Platypush web service must either be publicly accessible, on the same network or VPN as the Gitlab host, or the Platypush web port must be tunneled/proxied to the Gitlab host.

Platypush offers a very easy way to expose custom endpoints through the WebhookEvent. All you have to do is set up an event hook that reacts to a WebhookEvent at a specific endpoint. For example, create a new event hook under ~/.config/platypush/scripts/gitlab.py:

from platypush.event.hook import hook
from platypush.message.event.http.hook import WebhookEvent

# Token to be used to authenticate the calls from Gitlab
gitlab_token = 'YOUR_TOKEN_HERE'


@hook(WebhookEvent, hook='repo-push')
def on_repo_push(event, **context):
    # Check that the token provided over the
    # X-Gitlab-Token header is valid
    assert event.headers.get('X-Gitlab-Token') == gitlab_token, \
        'Invalid Gitlab token'

    print('Add your logic here')

This hook will react when an HTTP request is received on http://your-host:8008/hook/repo-push. Note that, unlike most of the other Platypush endpoints, custom hooks are not authenticated - that's because they may be called from any context, and you don't necessarily want to share your Platypush instance credentials or token with 3rd-parties. Instead, it's up to you to implement whichever authentication policy you like over the requests.
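Since the token check is the only thing standing between the endpoint and the rest of the world, it may be worth making it a bit stricter. What follows is a minimal sketch, assuming the token arrives in the X-Gitlab-Token header as in the hook above; the helper name is_valid_token is hypothetical and not part of Platypush. It uses a constant-time comparison and returns a boolean instead of relying on assert, because assert statements are skipped when Python runs with the -O flag.

```python
import hmac


def is_valid_token(headers, expected_token):
    """Return True if the X-Gitlab-Token header matches the expected token."""
    # A missing header is treated as an empty token
    provided = headers.get('X-Gitlab-Token') or ''
    # compare_digest takes the same time regardless of where the strings differ
    return hmac.compare_digest(provided.encode(), expected_token.encode())
```

In the hook you could then start with something like `if not is_valid_token(event.headers, gitlab_token): return` before running any other logic.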

After adding your endpoint, start Platypush:

$ platypush

Now, in order to set up a new webhook, navigate to your Gitlab project -> Settings -> Webhooks.

Enter the URL to your webhook and the secret token and select the events you want to react to - in this example, we'll select new push events.

You can now test the endpoint through the Gitlab interface itself. If it all went well, you should see a Received event line with a content like this on the standard output or log file of Platypush:

{

"type": "event",

"target": "platypush-host",

"origin": "gitlab-host",

"args": {

"type": "platypush.message.event.http.hook.WebhookEvent",

"hook": "repo-push",

"method": "POST",

"data": {

"object_kind": "push",

"event_name": "push",

"before": "previous-commit-id",

"after": "current-commit-id",

"ref": "refs/heads/master",

"checkout_sha": "current-commit-id",

"message": null,

"user_id": 1,

"user_name": "Your User",

"user_username": "youruser",

"user_email": "you@email.com",

"user_avatar": "path to your avatar",

"project_id": 1,

"project": {

"id": 1,

"name": "My project",

"description": "Project description",

"web_url": "https://git.platypush.tech/platypush/platypush",

"avatar_url": "https://git.platypush.tech/uploads/-/system/project/avatar/3/icon-256.png",

"git_ssh_url": "git@git.platypush.tech:platypush/platypush.git",

"git_http_url": "https://git.platypush.tech/platypush/platypush.git",

"namespace": "My project",

"visibility_level": 20,

"path_with_namespace": "platypush/platypush",

"default_branch": "master",

"ci_config_path": null,

"homepage": "https://git.platypush.tech/platypush/platypush",

"url": "git@git.platypush.tech:platypush/platypush.git",

"ssh_url": "git@git.platypush.tech:platypush/platypush.git",

"http_url": "https://git.platypush.tech/platypush/platypush.git"

},

"commits": [

{

"id": "current-commit-id",

"message": "This is a commit",

"title": "This is a commit",

"timestamp": "2021-03-06T20:02:25+01:00",

"url": "https://git.platypush.tech/platypush/platypush/-/commit/current-commit-id",

"author": {

"name": "Your Name",

"email": "you@email.com"

},

"added": [],

"modified": [

"tests/my_test.py"

],

"removed": []

}

],

"total_commits_count": 1,

"push_options": {},

"repository": {

"name": "My project",

"url": "git@git.platypush.tech:platypush/platypush.git",

"description": "Project description",

"homepage": "https://git.platypush.tech/platypush/platypush",

"git_http_url": "https://git.platypush.tech/platypush/platypush.git",

"git_ssh_url": "git@git.platypush.tech:platypush/platypush.git",

"visibility_level": 20

}

},

"args": {},

"headers": {

"Content-Type": "application/json",

"User-Agent": "GitLab/version",

"X-Gitlab-Event": "Push Hook",

"X-Gitlab-Token": "YOUR GITLAB TOKEN",

"Connection": "close",

"Host": "platypush-host:8008",

"Content-Length": "lenght"

}

}

}

These are all fields provided on the event object that you can use in your hook to build your custom logic.
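As an illustration of how these fields can be used, here is a small sketch that pulls the branch name, the head commit and the list of touched files out of the data section of a push payload shaped like the one above. The function name summarize_push is made up for this example, and the sketch assumes that branch pushes carry a ref starting with refs/heads/.

```python
def summarize_push(data):
    """Extract the branch, head commit and touched files from a push payload."""
    ref = data.get('ref', '')
    prefix = 'refs/heads/'
    # Refs that are not branches (e.g. tags) yield no branch name
    branch = ref[len(prefix):] if ref.startswith(prefix) else None

    changed = set()
    for commit in data.get('commits', []):
        for key in ('added', 'modified', 'removed'):
            changed.update(commit.get(key, []))

    return {
        'branch': branch,
        'commit': data.get('after'),
        'changed_files': sorted(changed),
    }
```

Inside the hook this would be called as `summarize_push(event.data)`, which makes it easy, for instance, to skip the test run when a push only touches documentation files.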

Setting up a Github integration

If you want to keep using Github but run the CI/CD pipelines on another host with no dependencies on the Github actions, you can leverage the Github backend to monitor your repos and fire Github events that you can build your hooks on when something happens.

First, head to your Github profile to create a new API access token. Then add the configuration to ~/.config/platypush/config.yaml under the backend.github section:

backend.github:
    user: your_user
    user_token: your_token
    # Optional list of repos to monitor (default: all user repos)
    repos:
        - https://github.com/you/myrepo1.git
        - https://github.com/you/myrepo2.git
    # How often the backend should poll for updates (default: 60 seconds)
    poll_seconds: 60
    # Maximum events that will be triggered if a high number of events has
    # been triggered since the last poll (default: 10)
    max_events_per_scan: 10

Start the service, and on e.g. the first repository push event you should see a Received event log line like this:

{

"type": "event",

"target": "your-host",

"origin": "your-host",

"args": {

"type": "platypush.message.event.github.GithubPushEvent",

"actor": {

"id": 1234,

"login": "you",

"display_login": "You",

"url": "https://api.github.com/users/you",

"avatar_url": "https://avatars.githubusercontent.com/u/1234?"

},

"event_type": "PushEvent",

"repo": {

"id": 12345,

"name": "you/myrepo1",

"url": "https://api.github.com/repos/you/myrepo1"

},

"created_at": "2021-03-03T18:20:27+00:00",

"payload": {

"push_id": 123456,

"size": 1,

"distinct_size": 1,

"ref": "refs/heads/master",

"head": "current-commit-id",

"before": "previous-commit-id",

"commits": [

{

"sha": "current-commit-id",

"author": {

"email": "you@email.com",

"name": "You"

},

"message": "This is a commit",

"distinct": true,

"url": "https://api.github.com/repos/you/myrepo1/commits/current-commit-id"

}

]

}

}

}

You can easily create an event hook that reacts to such events to run your automation - e.g. under ~/.config/platypush/scripts/github.py:

from platypush.event.hook import hook
from platypush.message.event.github import GithubPushEvent


@hook(GithubPushEvent)
def on_repo_push(event, **context):
    # Run this action only for a specific repo
    if event.repo['name'] != 'you/myrepo1':
        return

    print('Add your logic here')

And here you go - you should now be ready to create your automation routines on Github events.
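If the backend monitors several repositories, the single if check on the repo name can grow into a chain of conditions. One possible way around it, sketched below, is a small dispatch table that maps repository names to handler functions. The names dispatch_push and handlers are hypothetical, and the sketch assumes that the pushed ref is available on the event payload as shown in the log line above.

```python
def dispatch_push(repo_name, ref, handlers):
    """Call the handler registered for repo_name, if any, with the pushed ref.

    Returns True if a handler was found and called, False otherwise.
    """
    handler = handlers.get(repo_name)
    if handler is None:
        return False
    handler(ref)
    return True
```

From the hook, a call could look like `dispatch_push(event.repo['name'], event.payload['ref'], handlers)`, with handlers being a plain dictionary such as `{'you/myrepo1': build_myrepo1}`.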

Automated repository mirroring

Even though I have moved the Platypush repos to a self-hosted domain, I still keep a mirror of them on Github. That's because lots of people have already cloned the repos over the years and may lose updates if they haven't seen the announcement about the transfer. Also, registering to a new domain is often a barrier for users who want to create issues. So, even though me and Github are no longer friends, I still need a way to easily mirror each new commit on my domain to Github - but you might as well have another compelling case for backing up/mirroring your repos. The way I'm currently achieving this is by cloning the main instance of the repo on the machine that runs the Platypush service:

$ git clone git@git.you.com:you/myrepo.git /opt/repo

Then add a new remote that points to your mirror repo:

$ cd /opt/repo

$ git remote add mirror git@github.com:/you/myrepo.git

$ git fetch

Then try a first git push --mirror to make sure that the repos are aligned and all conflicts are solved:

$ git push --mirror -v mirror

Then add a new sync_to_mirror function in your Platypush script file that looks like this:

import logging
import os
import subprocess

repo_path = '/opt/repo'

# ...

def sync_to_mirror():
    logging.info('Synchronizing commits to mirror')
    os.chdir(repo_path)

    # Pull the updates from the main repo
    subprocess.run(['git', 'pull', '--rebase', 'origin', 'master'])

    # Sync the updates to the mirror repo
    subprocess.run(['git', 'push', '--mirror', '-v', 'mirror'])
    logging.info('Synchronizing commits to mirror: DONE')

And just call it from the previously defined on_repo_push hook, either the Gitlab or Github variant:

# ...

def on_repo_push(event, **_):
    # ...
    sync_to_mirror()
    # ...

Now on each push the repository clone stored under /opt/repo will be updated and any new commits and tags will be mirrored to the mirror repository.
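One thing to keep in mind is that subprocess.run does not raise an error by default if git exits with a non-zero code, so a failed pull or push would go unnoticed. A possible variant, shown here only as a sketch, passes check=True so that failures raise an exception, and uses cwd instead of os.chdir so that the working directory of the Platypush process is left untouched. The run parameter is an addition of this example to make the function easy to try out without touching a real repository.

```python
import subprocess


def sync_to_mirror(repo_path, branch='master', run=subprocess.run):
    """Pull from origin and push to the 'mirror' remote, failing loudly."""
    commands = [
        ['git', 'pull', '--rebase', 'origin', branch],
        ['git', 'push', '--mirror', '-v', 'mirror'],
    ]

    for cmd in commands:
        # check=True raises CalledProcessError on a non-zero exit code;
        # cwd runs git inside the clone without changing the process directory
        run(cmd, cwd=repo_path, check=True)

    return commands
```

With this version the hook would call `sync_to_mirror('/opt/repo')`, and a failing git command would stop the rest of the pipeline instead of being silently ignored.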

Running tests

If our project is properly set up, then it probably has a suite of unit/integration tests that is supposed to be run on each change to verify that nothing is broken. It's quite easy to configure the previously created hook so that it runs the tests on each push. For instance, if your tests are stored under the tests folder of your project and you use pytest:

import datetime
import os
import pathlib
import shutil
import subprocess

from platypush.event.hook import hook
from platypush.message.event.http.hook import WebhookEvent

# Path where the latest version of the repo will be cloned
tmp_path = '/tmp/repo'

# Path where the results of the tests will be stored
logs_path = '/var/log/tests'

# ...

def run_tests():
    # Clone the repo in /tmp
    shutil.rmtree(tmp_path, ignore_errors=True)
    subprocess.run(['git', 'clone', 'git@git.you.com:you/myrepo.git', tmp_path])
    os.chdir(os.path.join(tmp_path, 'tests'))
    passed = False

    try:
        # Run the tests
        tests = subprocess.Popen(['pytest'],
                                 stdout=subprocess.PIPE,
                                 stderr=subprocess.PIPE)

        stdout = tests.communicate()[0].decode()
        passed = tests.returncode == 0

        # Write the stdout to a logfile
        pathlib.Path(logs_path).mkdir(parents=True, exist_ok=True)
        logfile = os.path.join(logs_path,
                               f'{datetime.datetime.now().isoformat()}_'
                               f'{"PASSED" if passed else "FAILED"}.log')

        with open(logfile, 'w') as f:
            f.write(stdout)
    finally:
        shutil.rmtree(tmp_path, ignore_errors=True)

    # Return True if the tests passed, False otherwise
    return passed

# ...

@hook(WebhookEvent, hook='repo-push')
# or
# @hook(GithubPushEvent)
def on_repo_push(event, **_):
    # ...
    passed = run_tests()
    # ...

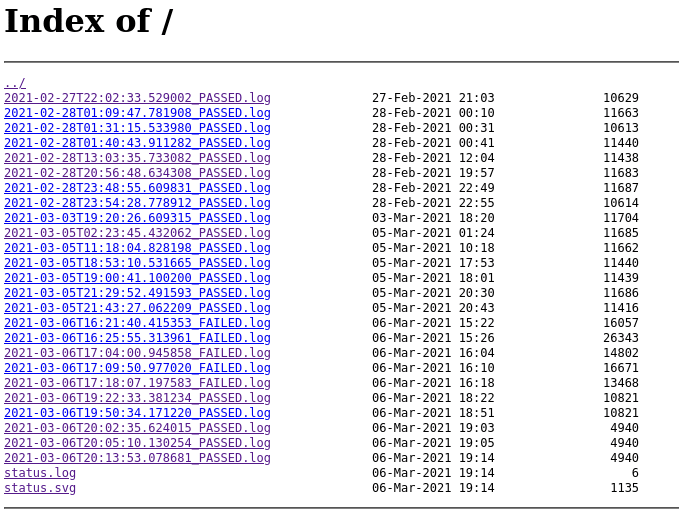

On each push event, the latest version of the repo will be cloned under /tmp/repo and the suite of tests will be run. The output of each session will be stored under /var/log/tests in a file named like <ISO timestamp>_<PASSED|FAILED>.log. To make things even more robust, you can create a new virtual environment under the temporary directory, install your repo with all of its dependencies in the new virtual environment and run the tests from there, or spin up a Docker instance with the required configuration, to make sure that the tests would also pass on a fresh installation and prevent the "but it works on my box" issue.
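The virtual environment variant could look roughly like the sketch below. It relies on the venv module from the standard library and assumes a POSIX layout (the interpreter lives under bin/python inside the environment) and a project that can be installed with pip from its root folder. The function names venv_test_commands and run_tests_in_venv are made up for this example.

```python
import os
import subprocess
import venv


def venv_test_commands(repo_dir, venv_dir):
    """Build the commands that install the repo in a venv and run pytest."""
    # Assumption: POSIX layout, the interpreter is under <venv>/bin/python
    python = os.path.join(venv_dir, 'bin', 'python')
    return [
        [python, '-m', 'pip', 'install', 'pytest', repo_dir],
        [python, '-m', 'pytest', os.path.join(repo_dir, 'tests')],
    ]


def run_tests_in_venv(repo_dir, venv_dir):
    """Create a fresh virtual environment and run the tests inside it."""
    # clear=True wipes any environment left over from a previous run
    venv.create(venv_dir, with_pip=True, clear=True)

    for cmd in venv_test_commands(repo_dir, venv_dir):
        if subprocess.run(cmd).returncode != 0:
            return False

    return True
```

A call such as `run_tests_in_venv('/tmp/repo', '/tmp/repo-venv')` would then return True only if both the installation and the tests succeed on a clean environment.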

Serve the test results over HTTP

Now you can simply serve /var/log/tests over an HTTP server and the logs can be accessed from your browser. Simple case:

$ cd /var/log/tests

$ python -m http.server 8000

The logs will be served on http://host:8000. You can also serve the directory through a proper web server like nginx or Apache.

It doesn't come with all the bells and whistles of the Jenkins or Travis-CI UI, but it's simple and good enough for its job - and it's not hard to extend it with a fancier UI if you like.
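As a starting point for such a UI, the file naming scheme already carries everything needed to tell when a run happened and how it went. The sketch below parses the <ISO timestamp>_<PASSED|FAILED>.log names and picks the most recent run; the function names parse_log_name and latest_result are hypothetical.

```python
import os


def parse_log_name(filename):
    """Split '<ISO timestamp>_<PASSED|FAILED>.log' into (timestamp, passed)."""
    stem, ext = os.path.splitext(filename)
    timestamp, sep, status = stem.rpartition('_')
    if ext != '.log' or not sep or status not in ('PASSED', 'FAILED'):
        # Not a file produced by the test runner
        return None
    return timestamp, status == 'PASSED'


def latest_result(filenames):
    """Return (timestamp, passed) for the most recent run, or None."""
    results = [r for r in map(parse_log_name, filenames) if r]
    # ISO timestamps written by the same host sort chronologically as strings
    return max(results, default=None)
```

Feeding it with `os.listdir('/var/log/tests')` gives the status of the last run, which could then be rendered in a simple HTML index page.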

Another nice addition is to download some of those nice passed/failed badge images that you find on many Github repositories to your Platypush box. When a test run completes, just edit your hook to copy the associated banner image (e.g. passed.svg or failed.svg) to e.g. /var/log/tests/status.svg:

import os
import shutil

# ...

def run_tests():
    # ...
    passed = tests.returncode == 0
    badge_path = '/path/to/passed.svg' if passed else '/path/to/failed.svg'
    shutil.copy(badge_path, os.path.join(logs_path, 'status.svg'))
    # ...

Then embed the status in your README.md:

[![Tests Status](http://your-host:8000/status.svg)](http://your-host:8000)

And there you go - you can now show off a dynamically generated and self-hosted status badge on your README without relying on any cloud runner.

Automatic build and test notifications

Another useful feature of the popular cloud services is the ability to send a notification when a build status changes. This is quite easy to set up with Platypush, as the application provides several plugins for messaging. Let's look at an example where a change in the status of our tests triggers a notification to our Pushbullet account, which can be delivered both to our desktop and mobile devices. Download the Pushbullet app if you want the notifications to be delivered to your mobile, get an API token and then install the dependencies for the Pushbullet integration for Platypush:

$ [sudo] pip install 'platypush[pushbullet]'

Then configure the Pushbullet plugin and backend in ~/.config/platypush/config.yaml:

backend.pushbullet:
    token: YOUR_PUSHBULLET_TOKEN
    device: platypush

pushbullet:
    enabled: True

Now simply modify your push hook to send a notification when the status of the build changes. We will also use the variable plugin to retrieve and store the latest status, so that notifications are triggered only when the status changes:

from platypush.context import get_plugin
from platypush.event.hook import hook
from platypush.message.event.http.hook import WebhookEvent

# Name of the variable that holds the latest run status
last_tests_passed_var = 'LAST_TESTS_PASSED'

# ...

def run_tests():
    # ...
    passed = tests.returncode == 0
    # ...
    return passed

# ...

@hook(WebhookEvent, hook='repo-push')
# or
# @hook(GithubPushEvent)
def on_repo_push(event, **_):
    variable = get_plugin('variable')
    pushbullet = get_plugin('pushbullet')

    # Get the status of the last run
    # (a missing or empty value counts as "not passed")
    response = variable.get(last_tests_passed_var).output
    last_tests_passed = int(response.get(last_tests_passed_var) or 0)

    # ...

    passed = run_tests()
    if passed and not last_tests_passed:
        pushbullet.send_note(body='The tests are now PASSING',
                             # If device is not set then the notification will
                             # be sent to all the devices connected to the account
                             device='my-mobile-name')
    elif not passed and last_tests_passed:
        pushbullet.send_note(body='The tests are now FAILING',
                             device='my-mobile-name')

    # Update the last_tests_passed variable
    variable.set(**{last_tests_passed_var: int(passed)})

    # ...

The nice addition of this approach is that any other Platypush device with the Pushbullet backend enabled and connected to the same account will receive a PushbulletEvent when a Pushbullet note is sent, and you can easily leverage this to build some downstream logic with hooks that react to these events.
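The decision about whether to notify is independent from the channel used to deliver the message, so it can be isolated in a small function that is easy to reuse with other plugins. This is just a sketch of the same logic used in the hook above, with a hypothetical function name:

```python
def status_change_message(passed, last_passed):
    """Return a notification body if the test status changed, else None."""
    if passed and not last_passed:
        return 'The tests are now PASSING'
    if not passed and last_passed:
        return 'The tests are now FAILING'
    # Same status as the previous run: nothing to report
    return None
```

Note that, since a missing variable is read as 0, the very first run will only produce a notification if the tests pass.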

Continuous delivery

Once we have a logic in place that automatically mirrors and tests our code and notifies us about status changes, we can take things a step further and set up our pipeline to also build a package for our applications if the tests are successful.

Let's consider in this article the example of a Python application whose new releases are tagged through git tags, and each time a new version is released we want to create a pip package and upload it to the online PyPI registry. However, you can easily adapt this example to work with any build and release process.

Twine is a quite popular option when it comes to uploading packages to the PyPI registry. Let's install it:

$ [sudo] pip install twine

Then create a Gitlab webhook that reacts to tag events, or react to a GithubCreateEvent if you are using Github, and create a Platypush hook that reacts to tag events by running the logic of on_repo_push, and additionally make a package build and upload it with Twine if the tests are successful:

import importlib
import os
import subprocess

from platypush.event.hook import hook
from platypush.message.event.http.hook import WebhookEvent

# Path where the latest version of the repo has been cloned
tmp_path = '/tmp/repo'

# Initialize these variables with your PyPI credentials
os.environ['TWINE_USERNAME'] = 'your-pypi-user'
os.environ['TWINE_PASSWORD'] = 'your-pypi-pass'

# ...

def upload_pip_package():
    os.chdir(tmp_path)

    # Build the package
    subprocess.run(['python', 'setup.py', 'sdist', 'bdist_wheel'])

    # Check the version of your app - for example from the
    # yourapp/__init__.py __version__ field
    app = importlib.import_module('yourapp')
    version = app.__version__

    # Check that the archive file has been created
    archive_file = os.path.join('.', 'dist', f'yourapp-{version}.tar.gz')
    assert os.path.isfile(archive_file), \
        f'The target file {archive_file} was not created'

    # Upload the archive file to PyPI
    subprocess.run(['twine', 'upload', archive_file])


@hook(WebhookEvent, hook='repo-tag')
# or
# @hook(GithubCreateEvent)
def on_repo_tag(event, **_):
    # ...

    passed = run_tests()
    if passed:
        upload_pip_package()

    # ...

And here you go - you now have an automated way of building and releasing your application!
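A cheap safety net before uploading is to verify that the pushed tag matches the version declared by the package, so that a forgotten version bump doesn't end up on PyPI under the wrong number. The sketch below assumes that tags are named either 1.2.3 or v1.2.3 and that the tag is delivered as a refs/tags/... ref, as is the case for Gitlab tag push events; both function names are made up for this example.

```python
def version_from_tag_ref(ref):
    """Return the version encoded in a tag ref, or None for non-tag refs."""
    prefix = 'refs/tags/'
    if not ref.startswith(prefix):
        return None

    tag = ref[len(prefix):]
    # Assumption: tags look like '1.2.3' or 'v1.2.3'
    if tag[:1] == 'v' and tag[1:2].isdigit():
        return tag[1:]
    return tag


def tag_matches_version(ref, package_version):
    """Check that the pushed tag corresponds to the package version."""
    return version_from_tag_ref(ref) == package_version
```

In the Gitlab variant of the tag hook, the upload step could then be guarded by a condition like `tag_matches_version(event.data['ref'], version)`.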

Continuous delivery of web applications

We have seen in this article some examples of CI/CD for stand-alone applications with a complete test+build+release pipeline. The same concept also applies to web services and applications. If your repository stores the source code of a website, then you can easily create automations that react to push events and pull the changes on the web server and restart the web service if required. This is in fact the way I'm currently managing updates on the Platypush blog and homepage. Let's see a small example where we have a Platypush instance running on the same machine as the web server, and suppose that our website is served under /srv/http/myapp (and, of course, that the user that runs the Platypush service has write permissions on this location). It's quite easy to tweak the previous hook example so that it reacts to push events on this repo by pulling the latest changes, runs e.g. npm run build to build the new dist files and then copies the dist folder to our web server directory:

import os
import shutil
import subprocess

from platypush.event.hook import hook
from platypush.message.event.http.hook import WebhookEvent

# Path where the latest version of the repo has been cloned
tmp_path = '/tmp/repo'

# Path of the web application
webapp_path = '/srv/http/myapp'

# Backup path of the web application
backup_webapp_path = '/srv/http/myapp-backup'

# ...

def update_webapp():
    os.chdir(tmp_path)

    # Build the app
    subprocess.run(['npm', 'install'])
    subprocess.run(['npm', 'run', 'build'])

    # Verify that the dist folder has been created
    dist_path = os.path.join('.', 'dist')
    assert os.path.isdir(dist_path), 'dist path not created'

    # Remove the previous app backup folder if present
    shutil.rmtree(backup_webapp_path, ignore_errors=True)

    # Backup the old web app folder
    shutil.move(webapp_path, backup_webapp_path)

    # Move the dist folder to the web app folder
    shutil.move(dist_path, webapp_path)


@hook(WebhookEvent, hook='repo-push')
# or
# @hook(GithubPushEvent)
def on_repo_push(event, **_):
    # ...

    passed = run_tests()
    if passed:
        update_webapp()

    # ...
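If the second move fails halfway through (for instance because of a permission issue), the web server would be left without an application folder. A possible refinement, sketched below with a hypothetical function name, restores the backup when the swap fails and then propagates the error:

```python
import os
import shutil


def deploy_with_rollback(dist_path, webapp_path, backup_path):
    """Replace webapp_path with dist_path, restoring the old copy on failure."""
    # Remove the previous backup, then move the live app out of the way
    shutil.rmtree(backup_path, ignore_errors=True)
    had_previous = os.path.isdir(webapp_path)
    if had_previous:
        shutil.move(webapp_path, backup_path)

    try:
        shutil.move(dist_path, webapp_path)
    except Exception:
        # Put the previous version back before propagating the error
        shutil.rmtree(webapp_path, ignore_errors=True)
        if had_previous:
            shutil.move(backup_path, webapp_path)
        raise
```

In update_webapp, the last three steps could then be replaced by a single call to `deploy_with_rollback(dist_path, webapp_path, backup_webapp_path)`.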
