Today I will run a machine-learning-backed code analysis against the Python-based deepracer-utils project.

As AWS re:Invent continues, many new products have been announced. I was especially impressed with Andy Jassy's Keynote on Tuesday Dec 1st. It was pretty fast-paced and packed with announcements. Blink once, you'll miss two new products. Interestingly, machine learning was largely omitted since there is a separate Machine Learning Keynote with Swami Sivasubramanian on Dec 8th at 2:45 pm UTC.

I'm especially curious about the device announcements. DeepRacer is still holding up pretty damn well, with this year's final in progress (virtually) and multiple companies using it for mass machine learning and cloud immersion (DBS - 3K+ employees, Accenture - 2K+, DNP - 1K+, and there's more that I cannot mention :) ). And while last year's AWS DeepComposer did not make many jaws drop, it was still pretty fun for learning. I'm wondering if there will be any additions to this group.

AWS CodeGuru Reviewer

CodeGuru Reviewer is a code analysis utility provided by AWS. It initially provided analysis for Java only; this year Python was added. I haven't used it before, mainly because all of my Java code is work code and I haven't had much to run it against. This year, however, I have published deepracer-utils to support various methods of training that little rascal.

I will attach it to a GitHub repository and see what analysis results I get.

Deepracer-utils

Deepracer-utils is a Python-based project, the first one that I published on PyPI, prepared with my most complete (at that time) understanding of what a Python project should look like.

My expectations

I am expecting to get some proper hints about what I have flipped up, and hopefully no critical-level issues. I have 10 years of professional experience, but since Java ways don't always translate well into Python, I'm hoping to learn a thing or two today.

Getting started

The main project page has quick access to a setup page:

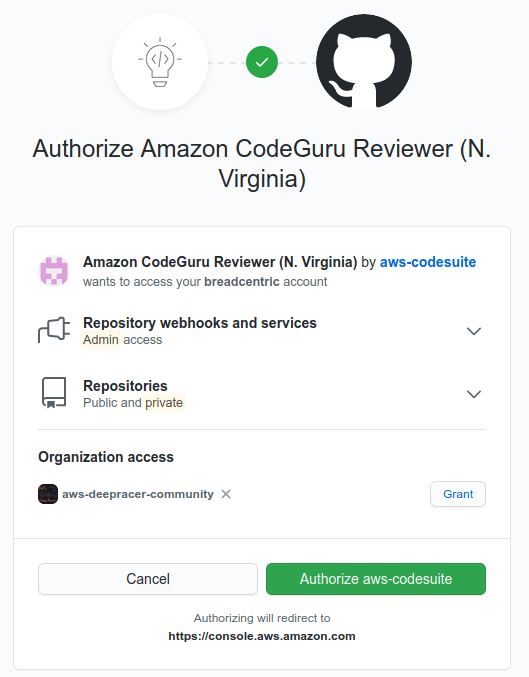

Then you need to grant access to your repositories. The tool asks for admin access to webhooks (to integrate with selected projects) and gives you the option to do it for selected organisations (you need to click the "Grant" button next to the org for the permission to be granted). Another permission is read access to both public and private repositories.

If you would like to revoke the authorization, you will find it under Authorized OAuth Apps on your Applications settings page.

I granted the permissions, but the aws-deepracer-community projects were nowhere to be found. I decided to revoke the authorization and redo it, since I could not edit an already granted authorization (guess it makes sense). That did work. I selected the right repo and presto:

In the repository itself I can see a new webhook created, listening for Pull requests, Pull request reviews and Pull request review comments.
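If you prefer to check this outside the GitHub UI, the repository's hooks can be listed through the GitHub REST API (`GET /repos/{owner}/{repo}/hooks`, which needs admin rights on the repo). Below is a minimal sketch that filters such a response for hooks subscribed to the three pull request events. The function name and the sample data are my own illustration, and the URLs in the sample are made up.

```python
# Event names GitHub uses for the three pull request subscriptions.
PR_EVENTS = {"pull_request", "pull_request_review", "pull_request_review_comment"}


def hooks_listening_for_prs(hooks):
    """Return the hooks whose subscribed events cover all three PR events.

    `hooks` is the parsed JSON list from GET /repos/{owner}/{repo}/hooks.
    """
    return [hook for hook in hooks if PR_EVENTS.issubset(hook.get("events", []))]


# Hypothetical sample shaped like the API response; ids and URLs are made up.
sample_hooks = [
    {"id": 1, "events": ["push"], "config": {"url": "https://example.com/ci"}},
    {
        "id": 2,
        "events": ["pull_request", "pull_request_review", "pull_request_review_comment"],
        "config": {"url": "https://example.com/reviewer"},
    },
]

matching = hooks_listening_for_prs(sample_hooks)
print([hook["id"] for hook in matching])
```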

The associated repositories section doesn't give you many options: you can associate a repository, disassociate a repository or manage a repository's tags. I go over to the Code review page and there are two tabs, one for pull requests (empty since I made no PRs today) and one for Repository analysis. There I can request a full repository analysis:

I requested a repository analysis and was asked whether I wanted code recommendations only or code and security recommendations. I can see that the code and security option involves creating an S3 bucket in which the code and build artifacts are stored for the analysis. I did some googling around and it looks like that part is still Java only (but don't quote me on that), so let's just run the code recommendations:

The suggested name is consistent with my lack of talent for consistent naming, so I will leave it as it is.

Results

My analysis run returned three issues around resource closing:

The header is a link to the line in the GitHub repository, the description of each issue is pretty detailed, and the links lead to the Python docs.

The resources that the analysis tool points at are not production code. They come from a utility called Versioneer, which lets you use git tags as the source of the project version, just like Gradle's Axion release plugin which I'm using, or the universal jgitver. I prefer this solution to editing text files with numbers. As it appears, the code that I was supposed to add to the project for Versioneer to work has some flaws in it.
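To illustrate what a resource closing finding is about, here is a minimal sketch of that kind of pattern and the usual fix. This is not the actual Versioneer code, and the function and file names are hypothetical. It only shows the general idea: a file opened with a bare `open()` stays open if something raises before `close()`, while a `with` block closes it either way.

```python
import os
import tempfile


def read_version_leaky(path):
    # The kind of pattern that gets flagged: if read() raises,
    # close() is never reached and the file handle leaks.
    f = open(path, "r")
    contents = f.read()
    f.close()
    return contents.strip()


def read_version_safe(path):
    # The context manager closes the file even when read() raises.
    with open(path, "r") as f:
        return f.read().strip()


# Small demonstration using a temporary file with made-up content.
with tempfile.TemporaryDirectory() as tmp:
    version_file = os.path.join(tmp, "_version.txt")
    with open(version_file, "w") as f:
        f.write("0.1.0\n")
    print(read_version_safe(version_file))
```

Both functions return the same value on the happy path. The difference only shows when an exception is thrown between opening and closing.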

I've decided to make appropriate corrections: https://github.com/aws-deepracer-community/deepracer-utils/pull/7

The issues have been reviewed and so I have accepted the pull request. No production changes so no release.

Telemetrics

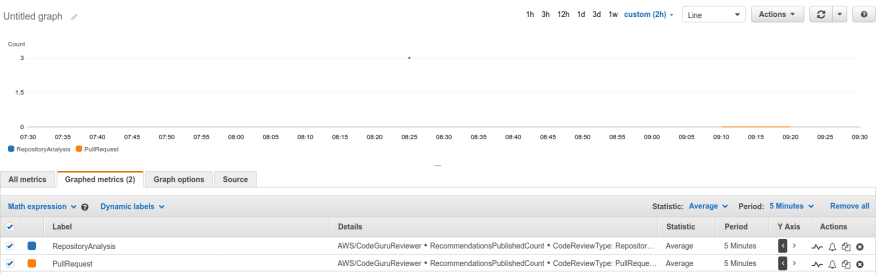

A nice feature that come out of the box is access to telemetrics in CloudWatch:

(The blue dot shows the number of recommendations found in the repository analysis, and the orange line at zero shows the number of issues found in pull requests.)

Feedback is one of the substantial elements of a reliable DevOps infrastructure and that applies not only to business, application or system monitoring, but also to developers and quality of their deliverables.

Profiler

A second new feature that I have not looked into is CodeGuru Profiler - it allows you to profile your Python Lambda function or an application deployed on EC2. You can have a look at it in the AWS CodeGuru Python support announcement article.

Resources

Apart from the AWS CodeGuru Python support announcement article, I recommend that you have a look at the AWS CodeGuru Reviewer documentation.

As usual, I recommend checking out the pricing page for CodeGuru.

Summary

I'd say the tool looks promising and integrates quite easily with your code. It would be awesome if the tool went a step further and enforced no new issues in pull requests, and allowed feedback to be provided in the pull request or maybe even in the build flow in CodeBuild. One step at a time, I guess.

Like any other first-class citizen in the AWS offering (except for DeepRacer, ahem), AWS CodeGuru offers integration which allows you (among other things) to fetch the recommendations for code reviews, so I guess a feedback integration could be scripted. That might be some Fix it Friday project.
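A rough sketch of what such a script could start from, assuming the boto3 `codeguru-reviewer` client and its `list_recommendations` call. The helper names are mine, and I have not run this against a real review. The client is passed in as a parameter so the logic can be tried with a stub, without AWS credentials.

```python
def fetch_recommendations(client, code_review_arn):
    """Collect all recommendation summaries for one code review, following NextToken."""
    summaries = []
    kwargs = {"CodeReviewArn": code_review_arn}
    while True:
        page = client.list_recommendations(**kwargs)
        summaries.extend(page.get("RecommendationSummaries", []))
        token = page.get("NextToken")
        if not token:
            return summaries
        kwargs["NextToken"] = token


def format_feedback(summaries):
    """Turn summaries into one line per finding, e.g. for a PR comment or a build log."""
    return [
        f"{s['FilePath']}:{s['StartLine']}-{s['EndLine']}: {s['Description']}"
        for s in summaries
    ]


# Usage sketch (needs boto3 and AWS credentials; the ARN is a placeholder):
#   import boto3
#   client = boto3.client("codeguru-reviewer")
#   findings = fetch_recommendations(client, "<code-review-arn>")
#   if findings:
#       raise SystemExit("\n".join(format_feedback(findings)))
```

Exiting with a non-zero status when there are findings would be one way to fail a build step, which gets close to the "no new issues" enforcement I was wishing for above.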
