Def: synchrony : simultaneous action, occurrence, or development

In seventh grade math class, we took a detour from the textbook one trimester and were given instead the infamous “Yellow Book”. It was homemade and spiral-bound, a series of photocopied worksheets (whose origin, I’d now guess, was probably a typewriter, given the old-school CourierNew-esque font), handwritten explanations, problem sets pulled from a hodge-podge of sources -- a most bizarre collection indeed. And the topics covered were far from your run-of-the-mill seventh grade math class standards. We had units on modulo arithmetic, logical operators, geometric proofs by construction, networks and matrices… But what I remember being a total nightmare at the time was a brief foray into the world of circuits. Circuits in series -- connecting end-to-end, a simple sum-of-its-parts pattern -- made sense enough; parallel circuits, on the other hand: I could not wrap my head around them.

When I first started learning about synchronous and asynchronous programming, I had a flashback to my Yellow Book. In my head, I connected synchronous programming with the series circuit model: In synchronous programming, things happen one at a time, one after another. It’s all very linear and sensible. If you call a function that performs a lengthy action, that function will run to completion -- and too bad for the rest of your program, which is put on pause. Only once that original function has finished and returned its result will the program move on. Okay, so perhaps not-so-sensible. After all, we live in a society that is not known for its patience.

But, as with circuits, there is an alternative. In case you’re interested in a brief review: Series circuits are basically long daisy-chains. There is a single path along which the current can flow, and the circuit’s total voltage is equivalent to the sum of the voltage drops through each component. (Duh. No wonder my 7th grade brain liked this. But also, and more relevantly: This is similar to time in a synchronous programming model. The total time taken to run a program will equal at least the sum of the time required to complete each task in sequence.) A parallel circuit, on the other hand, is one in which the current is not restricted to a single path. In the words of a Boston University physics professor, “the current in a parallel circuit breaks up, with some flowing along each parallel branch and re-combining when the branches meet again.” Which, it turns out, is actually not a bad analogy for asynchronous programming.

In an asynchronous programming model, many actions can be taken at the same time. When a function is executed, it does not necessarily halt the entire program in its tracks. Rather, your program will move on to the next functions, and when the initial action completes, the results will be revisited and/or incorporated as necessary.

So conclusion number one: Asynchronous programming has huge advantages when it comes to speed.

Conclusion number two, which is far less obvious: Sometimes, asynchronous programming can prove rather frustrating, since we don’t actually know exactly how long a process may take. For example, if I want to fetch some data from an API, I can make that request -- but I cannot determine the precise moment that the request will complete -- and in the meantime, my program will keep running. Will the results come in 5 lines later in my code? 10? How many functions will have executed in the meantime? What actions will occur without any of the information from that request having come in yet?

What a glory it was to learn about callback functions last week. To be perfectly honest, I’m not a fan of the name “callback.” There are other names that seem far more intuitive to me. And the definition that is often put forward as the initial one seems, actually, not to be informative of a callback’s primary purpose. But regardless, here’s the important thing: A callback function is one that is told to wait its turn. It is, in fact, explicitly not executed until another function has completed its action.

There are three big truths about JavaScript that help explain this:

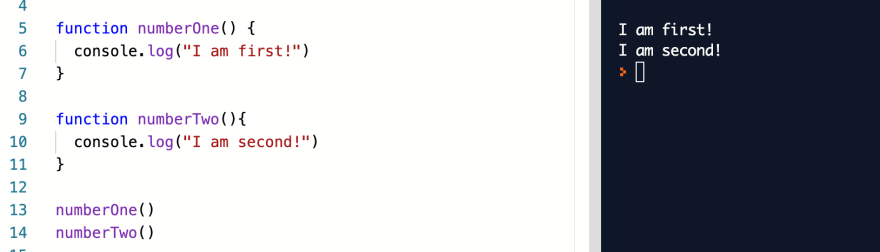

JavaScript is an “event-driven language.” Ordinary function calls run one after another, but operations that take time, like timers and network requests, are handed off and finish asynchronously: JavaScript does not wait for their results before moving on to its next task. Take this classic example: When you call these two functions one after another, all goes as you might expect.
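Here is a minimal sketch of that well-behaved case. Only the name numberOne and its “I am first!” message come from this post; numberTwo and its message are assumed for illustration.

```javascript
// Two ordinary (synchronous) functions.
function numberOne() {
  console.log("I am first!");
}

// numberTwo and its message are assumed names for this sketch.
function numberTwo() {
  console.log("I am second!");
}

numberOne();
numberTwo();
// Logs "I am first!" and then "I am second!"
```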

But make a slight change to function numberOne (here, setTimeout is used to kinda imitate what would happen if, for instance, you made a GET request to an API that took .5 seconds to complete), and your results are a bit weirder.
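A sketch of what that change might look like, assuming the same two functions. The 500 millisecond delay stands in for the .5 second request; numberTwo and its message are still assumed names.

```javascript
// numberOne now "waits" half a second, the way a slow GET request might.
function numberOne() {
  setTimeout(() => {
    console.log("I am first!");
  }, 500);
}

// numberTwo (an assumed name) is unchanged and still synchronous.
function numberTwo() {
  console.log("I am second!");
}

numberOne();
numberTwo();
// Logs "I am second!" right away, then "I am first!" about 500 ms later.
```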

Even though function numberOne is called first, its results (console logging “I am first!”) show up second. This is because JavaScript mimics the impatience of humans and likes to rush forward, finishing all the things it can even while impatiently waiting for something in the background.

JavaScript can be impatient, but it’s trying to help us out by delivering things efficiently. And sometimes, it must wait. For instance: Functions, at the end of their execution, return things. Those things, whatever they may be, are accessible only at the completion of the function. Need to make something wait until function DoingSomething is over? Great! You can make it part of what DoingSomething returns.

JavaScript likes to treat everything as an object. That means functions, too, are objects. Because of their object-ness, functions can become arguments to other (outer) functions. Thus, they can also be returned by other functions. Voila.
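To make that concrete, here is a small sketch of a function being handed to another function, and of a function being returned from one. Every name in it (greet, runWith, makeGreeter) is made up for illustration.

```javascript
// Hypothetical names throughout; this only illustrates the idea.
function greet(name) {
  return `Hello, ${name}!`;
}

// A function received as an argument...
function runWith(fn, value) {
  return fn(value);
}

// ...and a function returned from another function.
function makeGreeter(greeting) {
  return function (name) {
    return `${greeting}, ${name}!`;
  };
}

console.log(runWith(greet, "world")); // "Hello, world!"

const sayHowdy = makeGreeter("Howdy");
console.log(sayHowdy("partner")); // "Howdy, partner!"
```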

Let’s look at another, perhaps slightly more realistic, example. As a student at Flatiron for the past 10 weeks, I’ve been doing a lot of building websites where we’re grabbing existing data or getting inputs from users, rendering said data to the page in various configurations, editing and updating with mouse/keyboard events… A large number of these mini projects involve fetching data from an API. These fetch requests are a great example of when callbacks are useful.

In the example below, a fetch request is made to a Random Recipes API. We’ll get back one recipe, and that will be what we make for our next meal.

When we call hungerAlert followed by yummy, you might expect to see their console.logs rendered in that order. But no! “Ahh, dinner complete!” is printed to the console first, despite the fact that we clearly called yummy second. While JavaScript was waiting for the API fetch to complete in order to take the steps that depend on its promise, it went on to execute yummy without any delay.
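A rough sketch of how those two functions might be written. The names hungerAlert and yummy and the “Ahh, dinner complete!” message are from this post; the URL is a placeholder rather than a real endpoint, the title field on the response is an assumed shape, and a global fetch (a browser, or a recent Node) is assumed too.

```javascript
// RECIPE_URL is a placeholder, not a real endpoint.
const RECIPE_URL = "https://example.com/api/recipes/random";

function hungerAlert() {
  fetch(RECIPE_URL)
    .then((response) => response.json())
    .then((recipe) => {
      // Runs only once the response has arrived and been parsed.
      // `recipe.title` is an assumed field name.
      console.log(`Tonight we're making: ${recipe.title}`);
    })
    .catch((error) => console.error("Could not fetch a recipe:", error));
}

function yummy() {
  console.log("Ahh, dinner complete!");
}
```

Calling `hungerAlert()` and then `yummy()` logs yummy’s message first. The functions passed to `.then` only run after the request has come back, and by then yummy has long since finished.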

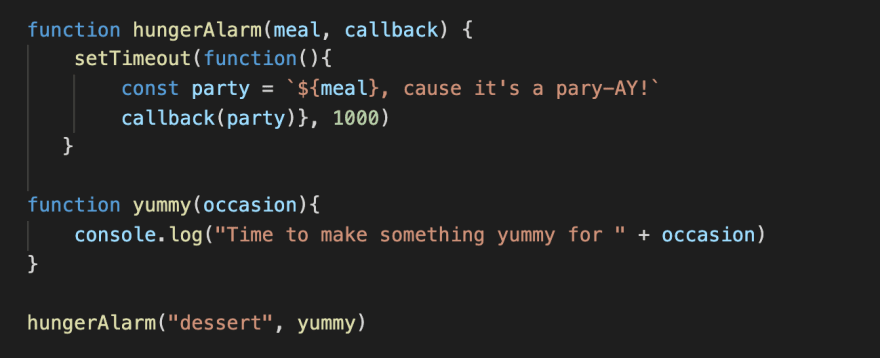

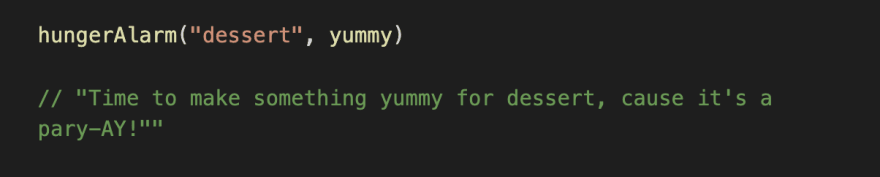

Below, we use a setTimeout function to simulate the delay of an API fetch. We also change our two functions slightly, so that hungerAlert now accepts a callback function as an argument. Even though hungerAlert is held on pause for 1 second, yummy does not execute this time. Because it is called inside hungerAlert as a callback function, it waits, and only due to this wait does it even know what “occasion” is.
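One way that callback version could look, as a sketch. The 1 second setTimeout, the callback argument, and the “occasion” value are described above; the other log messages and the exact shape of the functions are guesses.

```javascript
// yummy now takes the occasion as a parameter.
function yummy(occasion) {
  console.log(`Ahh, ${occasion} complete!`);
}

// hungerAlert accepts a callback and calls it only when the "fetch" is done.
function hungerAlert(callback) {
  console.log("So hungry! Looking for a recipe..."); // assumed message
  setTimeout(() => {
    const occasion = "dinner"; // stands in for data from the API
    console.log(`Found a recipe for ${occasion}.`); // assumed message
    callback(occasion); // yummy runs here, and not before
  }, 1000);
}

// Pass yummy itself, without parentheses.
hungerAlert(yummy);
// After about 1 second: "Found a recipe for dinner."
// and then "Ahh, dinner complete!"
```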

With the advent of promises, callback functions are not the only tool in our toolbox for dealing with ordering of functions. But still, they are a useful thing to have in the arsenal.

Other fun facts about callback functions:

They should not actually be invoked when being passed as an argument

They can themselves have parameters

They act as closures

Callbacks can be named or anonymous
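A short sketch that touches each of those facts. The helper forEachItem and the callback shout are made-up names for illustration.

```javascript
// Hypothetical helper: calls `callback` once for each item.
function forEachItem(items, callback) {
  for (const item of items) {
    callback(item); // callbacks can have parameters
  }
}

// A named callback, passed by reference: no parentheses after `shout`.
function shout(word) {
  console.log(word.toUpperCase());
}
forEachItem(["salt", "pepper"], shout); // logs "SALT", then "PEPPER"
// Writing shout() here instead would invoke it right away
// rather than handing the function over.

// An anonymous callback that closes over `total`.
let total = 0;
forEachItem([1, 2, 3], (n) => {
  total += n;
});
console.log(total); // 6
```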
