Anyone who's done cross-platform development on Windows knows that getting things to compile can be a huge pain in the ass. Sometimes it's not even possible. The whole situation kinda sucked, until now.

Enter Linux on Windows

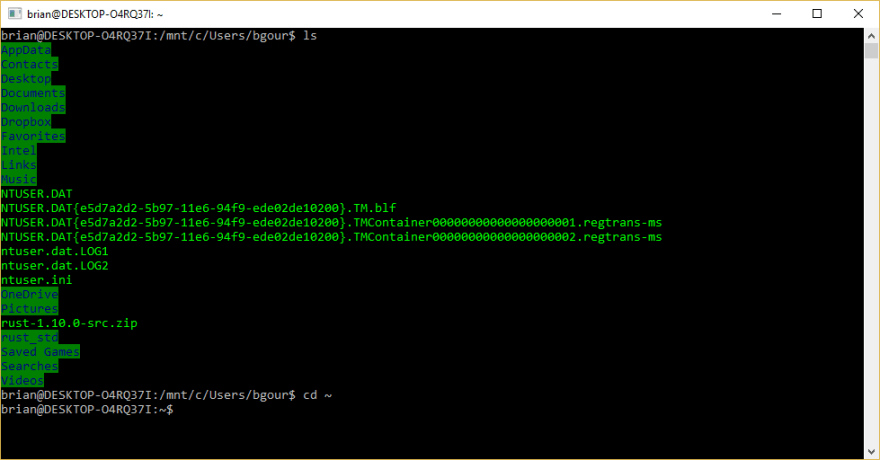

There's this new thing called the Windows Subsystem for Linux (WSL). Without going into too much detail, WSL provides a bash shell that exposes a Linux environment. It's native Linux, too: these are ELF binaries running inside Windows. The Windows file system is fully accessible, with each drive having its own mount point. Linux processes can bind to the Windows loopback address and it all just works. You can read the technical details here, but the practical implications are simple enough: Windows is finally a good OS for doing cross-platform development.
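To make the mount-point idea concrete, here is a minimal sketch of how a Windows path maps onto the Linux side. It assumes the default WSL layout, where each drive is mounted under `/mnt/<lowercase drive letter>` (so `C:` becomes `/mnt/c`). The function name `win_to_wsl_path` is made up for illustration and is not part of WSL.

```shell
# Hypothetical helper: convert a Windows path such as C:\Users\brian\project
# into the path it would have inside WSL, assuming the default /mnt/<drive>
# mount points.
win_to_wsl_path() (
    win_path=$1
    # Everything before the first ':' is the drive letter; lowercase it.
    drive=$(printf '%s' "${win_path%%:*}" | tr '[:upper:]' '[:lower:]')
    # Everything after the first ':' is the rest; turn backslashes into slashes.
    rest=$(printf '%s' "${win_path#*:}" | tr '\\' '/')
    printf '/mnt/%s%s\n' "$drive" "$rest"
)
```

For example, `win_to_wsl_path 'C:\Users\brian\project'` prints `/mnt/c/Users/brian/project`. The single quotes matter, since they stop the shell from eating the backslashes.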

WSL isn't installed by default, so you'll need to follow these instructions to get started.

A practical example

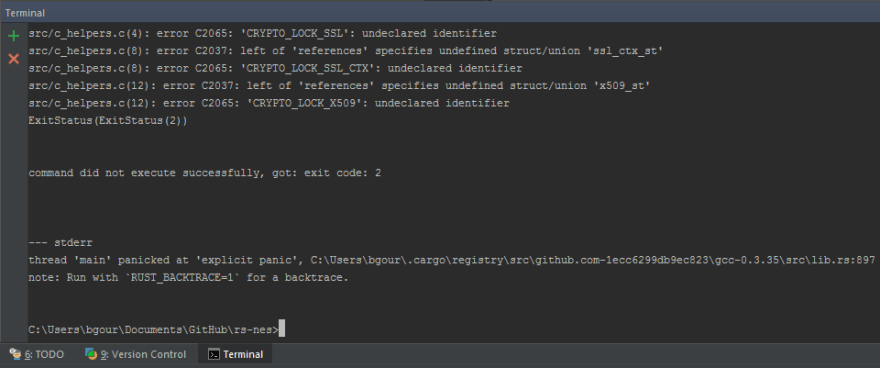

I do a lot of Rust development in my personal time. Rust actually has excellent Windows support; however, there are packages that link against C libraries that aren't well supported on Windows. One of my projects depends transitively on OpenSSL, for example. Trying to compile on Windows results in this:

While most of these issues can be resolved with enough persistence, it's a huge waste of time and effort. My typical reaction is to ditch my beefy desktop and its multiple monitors for a small, relatively under-powered MacBook where things just work™. Not anymore!

To get started, I needed to install the Linux Rust toolchain. Something I haven't mentioned up to this point is that WSL uses Ubuntu, meaning it uses apt for package management. Installing things from the bash shell inside Windows is no different from doing it on any other Ubuntu installation:

brian@DESKTOP-O4RQ37I:~$ sudo apt-get install build-essential

...

brian@DESKTOP-O4RQ37I:~$ curl https://sh.rustup.rs -sSf | sh

...

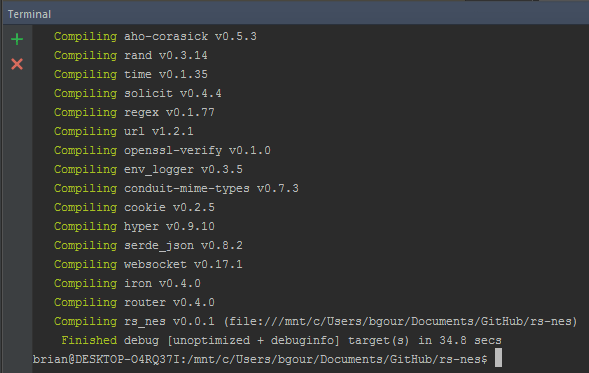

First I install the build-essential package, which installs gcc (among other things). Then I install rustup, Rust's toolchain manager, via its handy shell script. That's it. I navigate to the same code base that failed to compile in the standard Windows prompt and compile it from the bash shell instead:

I was even able to configure IntelliJ IDEA to launch the bash shell in its terminal, meaning my entire workflow stays the same. The difference is that I'm now compiling a Linux binary and running it directly in Windows!
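If you want to convince yourself that the build output really is a Linux binary, one option is to look at the file header. The sketch below checks for the ELF magic number (the bytes `0x7f 'E' 'L' 'F'`) and then reads the class byte at offset 4, which is 1 for 32-bit and 2 for 64-bit. The function name `elf_class` is made up for this example, and it only relies on the standard `od` and `tr` utilities.

```shell
# Hypothetical helper: print "ELF32", "ELF64", or "not ELF" for the file in $1.
elf_class() {
    # Read the first four bytes as hex; an ELF file starts with 7f 45 4c 46.
    elf_magic=$(od -An -tx1 -N4 "$1" | tr -d ' \n')
    if [ "$elf_magic" != "7f454c46" ]; then
        echo "not ELF"
        return 1
    fi
    # The byte at offset 4 (EI_CLASS) is 1 for 32-bit and 2 for 64-bit.
    elf_class_byte=$(od -An -tu1 -j4 -N1 "$1" | tr -d ' \n')
    case "$elf_class_byte" in
        1) echo "ELF32" ;;
        2) echo "ELF64" ;;
        *) echo "ELF (unknown class)" ;;
    esac
}
```

Running it against a build artifact, for instance `elf_class target/debug/myproject` (a placeholder path, not one from this project), should report an ELF binary when the compile happened inside the bash shell. A Windows `.exe` would come back as `not ELF`.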

Top comments (2)

Annoyingly the Ubuntu for Windows thing has a LOT of issues that you bump into in certain use cases.

You don't have USB access, so Android development requires tools to run on the Windows side.

Kernel access is messy at best, so you can't e.g. boot up virtual machines.

Symlinks were pretty wonky at least at one point, so creating a symlink from `/home/<user>/foo` -> `/mnt/c/foo` created this odd infinite loop thing that always showed you the contents of `/home/<user>` regardless of how many times you'd `cd foo`.

I at least wasn't able to figure out how to launch executables on the Windows side, so you can't use it to automate running tools that only work on the Windows side.

A bunch of tools will simply refuse to run on the Linux side, so you will end up having to juggle between the two environments.

Additionally, it has randomly caused lockups of my whole computer. No issues otherwise, but if I simply keep an Ubuntu for Windows terminal window open for a period of time, the whole computer hard locks up.

I also believe it randomly pops up console windows to do automated apt updates, like Windows didn't already feel too free to interrupt whatever you're doing by opening random popups and dialogs you really didn't care about.

Also the ownership of the files on Windows filesystems is `root:root`, so tools like `git`, `hg`, and many others refuse to trust them when you're using your local user account, as you should.

You can end up with zombie processes for the terminals that you can't kill. After this, when trying to open new terminals, the new terminal windows seem to instantly freeze (I believe fs access causes this), and these zombie processes stop rebooting from working cleanly.

Many basic tools simply refuse to work, crash, or give invalid results. Back a while ago `ping` would never work, `traceroute` still doesn't. `w` says nobody is logged in, `lastlog` says nobody has ever logged in. `dmesg` says `dmesg: read kernel buffer failed: Function not implemented`. `/dev` is very empty.

Symlinks created in the Linux environment show up as plain files on the Windows side, even on NTFS, which supports symlinks just fine.

One nice thing is that you CAN run X applications just with `export DISPLAY=:0` when you are running Xming, but it's never a great experience with Xming. However, since the environment has so many random issues, you'll have issues running most things one way or another. For example, I tried to run Firefox, and for some reason all downloads would end up as a 0-byte file with the download not progressing at all. The terminal I launched it from would get some random I/O errors or some such.

And the worst thing is that it is (currently) based only on Ubuntu, which is probably the least reliable Linux distribution out there, and apt & dpkg constantly mess something up. However, it seems they're planning to support OpenSUSE, which probably means some other distributions will be available too in the future.

Don't get me wrong, it definitely has some promise of a better future, and for some use cases it already is good enough; however, it's far from great as it is. Some of my information is from a while back, but since I've not heard of any major updates to it, it's fairly likely all of this is still valid.

Update: Tried a few more things.

Installed `supertuxcart` to see what happens when trying to run something unexpected, i.e. a 3D game. It dies to an unexpected error.

`Assertion 'pthread_mutex_unlock(&m->mutex) == 0' failed at pulsecore/mutex-posix.c:108, function pa_mutex_unlock(). Aborting.`
`Aborted (core dumped)`

I had some better luck with `doomsday`, which plays, though really slowly and not surprisingly without audio. `prboom-plus` (another DOOM engine) seemed to run REALLY smoothly. Specifically, ALSA does not have any audio device set up, though I'd imagine if Microsoft was feeling nice it shouldn't be a big deal to set up an audio bridge of a sort.

I managed to download Colonization off of GOG and run it, though in DOSBox the cursor isn't working quite as well as you'd hope, which is likely Xming's fault as I had some issues with `xeyes` as well.

The kernel only has support for 64-bit ELF binaries, so you can't run 32-bit applications (such as Steam) even after installing the 32-bit libraries; you just get a `cannot execute binary file: Exec format error` error.

Overall I'm positively surprised by some of these things.

Nice info thank you!