Video Chat with Unity3D, the ARFoundation Version

Many of you have taken the first steps toward creating your very own video chat app using our tutorial, How To: Create a Video Chat App in Unity. Now that you’ve got that covered, let’s take your app up a couple of notches by adding an immersive experience to it. In this Augmented Reality (AR) tutorial, you’ll learn how to communicate and chat with friends in AR. This quick start guide is very similar to the previous tutorial. We just need to make a few changes, and it should work in less than an hour.

Prerequisites

Unity Editor (Version 2018 or above)

2 devices to test on (one to broadcast, one to view)

The broadcast device must be a mobile device that can run the AR scene: an Apple device running iOS 11 or above, or an Android device running Android 7 or above.

The viewing device will run the standard Video Chat demo app. Pretty much any device running Windows, Mac, Android, or iOS will work.

A developer account with Agora.io

Getting Started

To start, we will need to integrate the Agora Video SDK for Unity3D into our project. Search for it in the Unity Asset Store, or click this link to begin the download. Note that the current SDK version is archived here, in case a future SDK release has a different layout.

After you finish downloading and importing the SDK into your project, you should be able to see the README.md files for the different platforms the SDK supports. For your convenience, you can also access the quick start tutorials for each platform below.

Unity AR Packages

In the Unity Editor, open the Package Manager from the Window menu. Install the following packages:

For Unity 2018:

AR Foundation 1.0.0-preview.22 (the latest for 1.0.0)

ARCore XR Plugin 1.0.0-preview.24 (the latest for 1.0.0)

ARKit XR Plugin 1.0.0-preview.27 (the latest for 1.0.0)

For Unity 2019:

AR Foundation 3.0.1

ARCore XR Plugin 3.0.1

ARKit XR Plugin 3.0.1

Modify the Existing Project

Modify the play scene

Open up the TestSceneHelloVideo scene. Take out the Cube and Cylinder. Delete the Main Camera since we will use an AR Camera later.

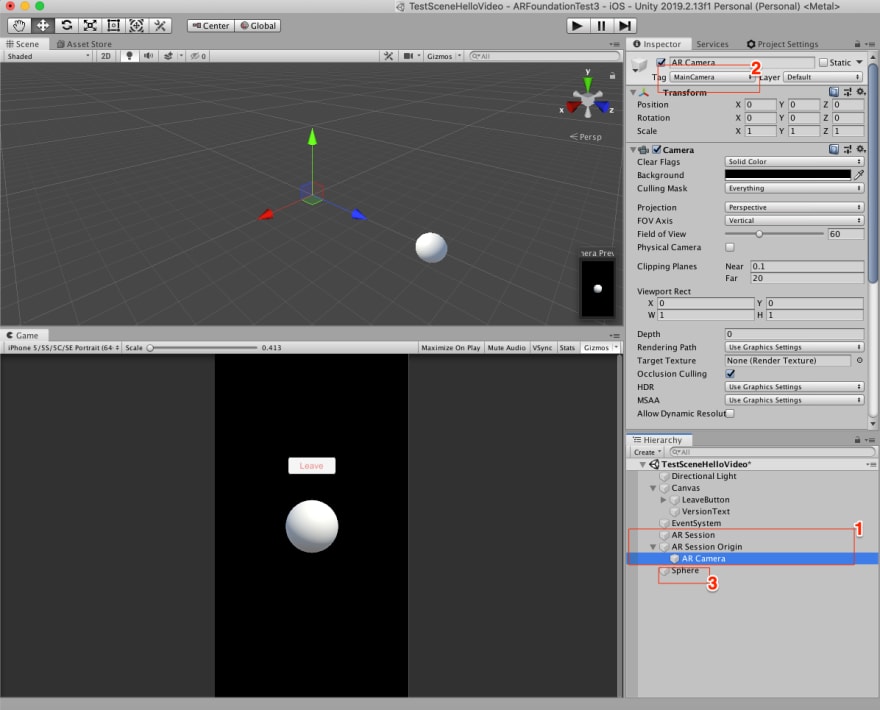

In the Hierarchy panel, create an “AR Session” and an “AR Session Origin”. Select the AR Camera (a child of the AR Session Origin) and change its tag to “MainCamera”. Then create a Sphere 3D object, set its transform position to (0, 0, 5.67) so that it is visible in the Editor's Game view, and save the scene.

Unlike the Cube or Cylinder, this sphere serves only as a positional reference. You will see it in your AR view when running on a mobile device. We will add the video view objects relative to this sphere’s position in code.

Modify Test Scene Script

Open TestHelloUnityVideo.cs and change the onUserJoin() method to generate a cube instead of a plane. We will also add a function that provides a new position for each remote user joining the chat.
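As a rough illustration of the change described above, the sketch below creates a cube for each joining remote user and places it beside the reference sphere. The class name `RemoteUserCubes`, the `referenceSphere` field, the `SlotOffsetX` helper, and the 1.5-unit spacing are all hypothetical names and values chosen for illustration. The `VideoSurface` calls follow the pattern used in the SDK's demo script and may differ between SDK versions.

```csharp
using UnityEngine;
using agora_gaming_rtc; // namespace of the Agora Video SDK for Unity

// Hypothetical helper class; in the tutorial this logic lives in TestHelloUnityVideo.cs.
public class RemoteUserCubes : MonoBehaviour
{
    // Assumption: the reference Sphere from the scene is assigned in the Inspector.
    [SerializeField] private Transform referenceSphere;

    // Assumption: distance between neighboring cubes, in world units.
    private const float Spacing = 1.5f;

    private int remoteUserCount = 0;

    // Slots alternate right (+) and left (-) of the sphere and move one
    // step farther out after each pair: +1, -1, +2, -2, ... times spacing.
    public static float SlotOffsetX(int index, float spacing)
    {
        int step = index / 2 + 1;
        float side = (index % 2 == 0) ? 1f : -1f;
        return side * step * spacing;
    }

    // Call this from the user-joined callback with the remote user's uid.
    public void AddRemoteUser(uint uid)
    {
        // A cube instead of the plane used by the standard demo.
        GameObject cube = GameObject.CreatePrimitive(PrimitiveType.Cube);
        cube.name = uid.ToString();

        // Place the cube in the next free slot next to the sphere.
        Vector3 anchor = referenceSphere.position;
        cube.transform.position = new Vector3(
            anchor.x + SlotOffsetX(remoteUserCount, Spacing), anchor.y, anchor.z);
        remoteUserCount++;

        // Attach the SDK's VideoSurface so the remote stream renders on the cube.
        VideoSurface surface = cube.AddComponent<VideoSurface>();
        surface.SetForUser(uid);
        surface.SetEnable(true);
    }
}
```

Keeping the slot math in a static method with no Unity types makes it easy to swap in a different layout (a circle around the sphere, for example) without touching the video setup.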

In the Join() method, add the following line in the “enable video” section:

mRtcEngine.EnableLocalVideo(false);

This call disables local video capture from the front camera, so it does not conflict with the back camera, which the AR Camera uses on the device.
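To show where that line sits relative to the other engine calls, here is a minimal sketch of a join sequence. It is not the demo's actual code: the class name `ArBroadcaster` and the `appId` and `channelName` fields are hypothetical, and the call order is an assumption modeled on the SDK's demo script.

```csharp
using UnityEngine;
using agora_gaming_rtc; // namespace of the Agora Video SDK for Unity

// Hypothetical stand-alone component; the tutorial edits TestHelloUnityVideo.cs instead.
public class ArBroadcaster : MonoBehaviour
{
    // Assumptions: placeholder values, replace with your own App ID and channel.
    [SerializeField] private string appId = "YOUR_APP_ID";
    [SerializeField] private string channelName = "unity3d";

    private IRtcEngine mRtcEngine;

    void Start()
    {
        mRtcEngine = IRtcEngine.GetEngine(appId);

        // The "enable video" section.
        mRtcEngine.EnableVideo();
        mRtcEngine.EnableVideoObserver();

        // Stop local capture so the SDK does not compete with the AR Camera
        // for the device's camera hardware.
        mRtcEngine.EnableLocalVideo(false);

        // Join with no token and let the SDK assign a uid (0).
        mRtcEngine.JoinChannel(channelName, null, 0);
    }

    void OnDestroy()
    {
        if (mRtcEngine == null) return;
        mRtcEngine.LeaveChannel();
        mRtcEngine.DisableVideoObserver();
        IRtcEngine.Destroy();
    }
}
```

With local video disabled, this device still receives and renders remote streams; it simply does not publish its own camera feed.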

Last but not least, fill in your App ID for the variable declared at the beginning of the TestHelloUnityVideo class.

If you haven’t already, go to Agora.io, log in, and get an App ID (it’s free). This gives you connectivity to the global Agora Real-time Communication network and lets you broadcast across your living room or around the world. Your first 10,000 minutes are free every month.

Build Project

The configuration for building an ARFoundation enabled project is slightly different from the standard demo project.

Here is a quick checklist of things to set:

iOS:

Rendering Color Space = Linear

Graphics API = Metal

Architecture = ARM64

Target Minimum iOS Version = 11.0

A unique bundle id

Android:

Graphics API = GLES3

Multithreaded Rendering = off

Minimum API Level = Android 7.0 (API level 24)

Create a new key store in the Publishing Settings
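If you prefer to apply most of the checklist from a script rather than by hand, an editor utility along the following lines could work. This is only a sketch: the class name `ArBuildSettings` and the menu path are hypothetical, and the property names should be checked against the scripting reference for your Unity version. The bundle id and the key store are left to be set manually.

```csharp
#if UNITY_EDITOR
using UnityEditor;
using UnityEngine;
using UnityEngine.Rendering;

// Hypothetical editor utility; place it in an Editor folder of the project.
public static class ArBuildSettings
{
    [MenuItem("Tools/Apply AR Build Settings")] // hypothetical menu path
    public static void Apply()
    {
        // Rendering Color Space = Linear.
        // Note: this scripting property is not set per platform.
        PlayerSettings.colorSpace = ColorSpace.Linear;

        // iOS: Metal only, ARM64, minimum iOS version 11.0.
        PlayerSettings.SetUseDefaultGraphicsAPIs(BuildTarget.iOS, false);
        PlayerSettings.SetGraphicsAPIs(
            BuildTarget.iOS, new[] { GraphicsDeviceType.Metal });
        PlayerSettings.SetArchitecture(BuildTargetGroup.iOS, 1); // 1 = ARM64
        PlayerSettings.iOS.targetOSVersionString = "11.0";

        // Android: GLES3 only, multithreaded rendering off, API level 24.
        PlayerSettings.SetUseDefaultGraphicsAPIs(BuildTarget.Android, false);
        PlayerSettings.SetGraphicsAPIs(
            BuildTarget.Android, new[] { GraphicsDeviceType.OpenGLES3 });
        PlayerSettings.SetMobileMTRendering(BuildTargetGroup.Android, false);
        PlayerSettings.Android.minSdkVersion = AndroidSdkVersions.AndroidApiLevel24;

        Debug.Log("AR build settings applied. Set the bundle id and key store manually.");
    }
}
#endif
```

After running the menu item, it is still worth opening Player Settings once to confirm the values, since some options move or change names between Unity releases.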

Now build the application for either iOS or Android. Run the standard demo application for the remote users on any of the four platforms listed at the beginning of this tutorial. To test your demo, stand up and use the device to look around you until you find the sphere. Each joining remote user’s video will now appear on a cube next to the sphere.

Great job! You’ve built a simple AR world of video chatters!

All Done!

Thank you for following along. If you have any questions, please feel free to leave a comment, DM me, or join our Slack channel agoraiodev.slack/unity-help-me.

Note that two more chapters of the AR Foundation Video Chat tutorial will follow, covering more advanced topics. Stay tuned!

Other Resources

The complete API documentation is available in the Document Center.

For technical support, submit a ticket using the Agora Dashboard or reach out directly to our Developer Relations team at devrel@agora.io.

Top comments (4)

@icywind Excellent tutorial! We tried it, but noticed the following: in the AR Client we do see the video of the Standard client (so we see the video in AR floating around). However, we don't see the video stream from the AR Client in the Standard client. Could it be that the AR Client is using the back camera for the AR view and is not able to stream the front camera at the same time? If so, would this be possible too? If not, how could we make this work?

Hi Raymond, please check out my two other blogs if you haven't seen them yet:

dev.to/icywind/video-chat-with-uni...

dev.to/icywind/video-chat-with-uni...

Hi Rick, thanks for reaching out. Yes, I looked at the other 2 blogs. Great stuff! I just don't see how to work with 2 AR devices simultaneously. In these 2 other blogs, too, there is 1 AR client and 1 normal client application. So the bottom line: is it possible for the AR Client to use the back camera for the AR view and simultaneously the front camera for sending your own local video to the other devices?

Currently not possible. Both hardware and software limitations exist. First, you will need a recent iOS or Android device that can turn on both cameras at the same time. Second, the SDK needs to be able to send the camera images as a separate stream. Currently it can't. It may come in a future release of the SDK.