If you don’t know what visual testing is, or you’ve heard of it but aren’t sure how it’s different from your existing test efforts, this post is for you.

Read on to learn about the differences (and overlap) between functional and visual testing, what benefits and challenges come with visual testing, and how we approach it at Percy.

How is visual testing different from functional testing?

Functional testing — from unit and integration testing to acceptance and end-to-end testing — checks that software is behaving as it should.

These kinds of tests check assertions against desired outcomes. You build software to behave a specific way, and you write tests to ensure that it continues to do so, even as your application grows.

Automated testing has become a critical part of healthy software development practices within modern teams — but it has its limits.

As software grows, many of us have tried to stretch our test suites past those limits to check not only for behavioral outcomes but for visual elements as well. After catching (or after an end-user caught) a visual bug, you may have tried to write functional tests to prevent it from happening again.

We’ve all written tests to check for classes or other purely visual elements.

Everyone wants to be confident that their code won’t break something. But writing functional tests to ensure visual stability or to catch visual regressions is not the answer, and it comes with a lot of traps.

Pitfalls of using functional testing for visual elements

Functional tests are great for checking known inputs against their desired outputs, but it’s nearly impossible to assert visual “correctness” with code.

What are we supposed to assert?

That a specific CSS class is applied? Or maybe a computed style exists on the button, or that the text is a particular color?

Even with these kinds of assertions, you’re not actually testing anything visually, and there are many things that can make your tests “pass” while still resulting in a visual regression. Class attributes can change, other overriding classes can be applied, and so on. It’s also hard to account for visual bugs caused by how elements get rendered by different browsers and devices.
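To make that trap concrete, here is a minimal sketch in Python. It uses a toy model of the cascade rather than a real browser, and the class names, stylesheet, and `resolved_style` helper are all hypothetical. The point is only that a class-based assertion can keep passing while the rendered result breaks.

```python
# Toy model of the cascade: later rules override earlier ones.
# The stylesheet and class names below are hypothetical.
STYLESHEET = [
    ("btn-primary", {"background": "blue", "color": "white"}),
    ("promo-banner", {"background": "white"}),  # added later by an unrelated change
]


def resolved_style(classes):
    """Merge the declarations of every matching rule, in stylesheet order."""
    style = {}
    for selector, declarations in STYLESHEET:
        if selector in classes:
            style.update(declarations)
    return style


button_classes = ["btn-primary", "promo-banner"]

# The functional-style assertion still passes...
class_check_passes = "btn-primary" in button_classes

# ...but the button now has white text on a white background.
style = resolved_style(button_classes)
text_is_invisible = style["color"] == style["background"]

print(class_check_passes, text_is_invisible)
```

The class check has no way of knowing that another rule won the cascade, which is exactly the kind of change a rendered snapshot would surface.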

When browsers and devices are part of the equation, it becomes even harder to assert the desired outcomes in tests. Trying to assert all those edge cases only exacerbates the challenge above and doesn’t give you a way to test new visual elements that come along.

This testing culture creates unruly and brittle test suites that constantly need to be rewritten whenever you implement any kind of design or layout changes.

Visual testing is designed to overcome these challenges.

Much like functional testing, visual testing is intended to be part of code review processes. Whenever code changes are introduced, you can systematically monitor what your users actually see and interact with, and keep your functional tests focused on behaviors.

Benefits and challenges of visual testing

When you’re truly testing the visual outcome of your code (your user interface), it doesn’t matter what has changed underneath. The inputs are the same, but instead of checking specific outputs with test assertions, visual testing checks whether what has changed is perceptible to the human eye.

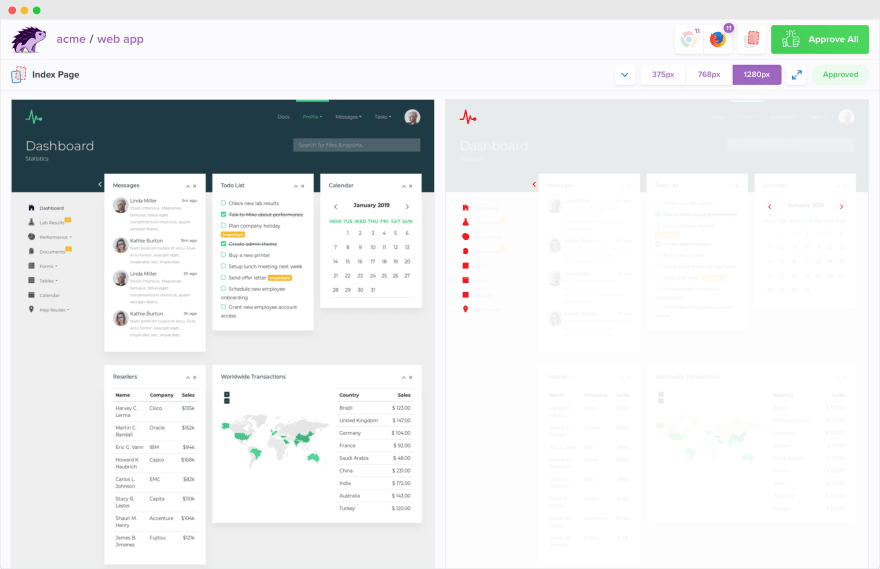

Visual testing works by analyzing browser renderings of software for visual changes. Then, by comparing rendered snapshots against previously determined baselines, visual testing detects visual changes between the two. Those differences are called visual diffs.
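As a rough illustration of the comparison step, the sketch below diffs two tiny "snapshots" pixel by pixel. It is a generic example and not Percy's actual diffing algorithm: the `visual_diff` function, the `tolerance` parameter, and the nested-list image format are assumptions made for this example.

```python
from typing import List, Tuple

Pixel = Tuple[int, int, int]   # (red, green, blue), each 0-255
Image = List[List[Pixel]]      # rows of pixels


def visual_diff(baseline: Image, candidate: Image, tolerance: int = 0) -> List[Tuple[int, int]]:
    """Return (row, col) positions where any channel differs by more than `tolerance`."""
    same_shape = len(baseline) == len(candidate) and all(
        len(a) == len(b) for a, b in zip(baseline, candidate)
    )
    if not same_shape:
        raise ValueError("snapshots must have the same dimensions")

    changed = []
    for r, (row_a, row_b) in enumerate(zip(baseline, candidate)):
        for c, (pixel_a, pixel_b) in enumerate(zip(row_a, row_b)):
            if any(abs(x - y) > tolerance for x, y in zip(pixel_a, pixel_b)):
                changed.append((r, c))
    return changed


WHITE, BLUE = (255, 255, 255), (0, 0, 255)
baseline = [[WHITE, BLUE], [WHITE, WHITE]]    # the blue pixel stands in for a button
candidate = [[WHITE, WHITE], [WHITE, WHITE]]  # the button has disappeared

diff = visual_diff(baseline, candidate)
diff_ratio = len(diff) / 4  # share of the four pixels that changed

print(diff, diff_ratio)
```

Note that the function only reports where the two renderings differ. Whether that difference is a regression or an intended change is a separate question.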

At Percy, we pioneered the use of DOM snapshots to get the most deterministic version of your web app, static site, or components.

Their object-oriented representation allows us to manipulate the documents’ structure, style, and content to reconstruct pages across browsers and screen widths in our own environment. We don’t have to replay any of the network requests, do any test setup, insert mock data, or get your UI to the right state. DOM snapshots give us the power to better control the output for predictability.

Visual testing also comes with its own challenges. Visual snapshotting and diffing are inherently static, which means the things that make websites interesting, like animations, can also make visual testing very difficult.

In designing Percy, we’ve built in several core features to make visual testing as useful as possible. Freezing CSS animations and GIFs, helping stabilize dynamic data, or simply hiding or changing UI elements helps minimize false positives.
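To picture what stabilizing dynamic data can mean, here is a small sketch that masks timestamps in serialized markup before two snapshots are compared. The `stabilize` helper and the timestamp pattern are hypothetical and are not part of Percy's API. They only illustrate why volatile content would otherwise show up as a diff on every run.

```python
import re

# Hypothetical pattern for the dynamic values on this example page.
TIMESTAMP = re.compile(r"\d{4}-\d{2}-\d{2} \d{2}:\d{2}:\d{2}")


def stabilize(html: str) -> str:
    """Replace volatile timestamps with a fixed placeholder."""
    return TIMESTAMP.sub("[timestamp]", html)


monday = '<p class="updated">Last updated 2019-03-04 09:15:00</p>'
tuesday = '<p class="updated">Last updated 2019-03-05 17:42:31</p>'

# Without stabilization, every run produces a "change" to review.
raw_snapshots_match = monday == tuesday

# With stabilization, only real markup changes would remain.
stable_snapshots_match = stabilize(monday) == stabilize(tuesday)

print(raw_snapshots_match, stable_snapshots_match)
```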

Non-judgemental testing with visual reviews

Our visual testing workflow is designed to run alongside your functional test suite and code reviews.

We pull in code changes from feature branches and compare the resulting snapshots to what your app looked like before — usually whatever is on your master branch. Deterministic rendering, coupled with precise baseline picking, helps us accurately detect and highlight visual changes to be reviewed.

This leads us to the most ideological difference between visual and functional testing. Functional tests are written to pass or fail, whereas visual tests are non-judgemental: they don’t “pass” or “fail.”

Discerning between visual regressions and intentional visual changes will always require human judgment. That’s why Percy is called a “visual testing and review platform.” We both facilitate the detection of visual changes and make reviewing those changes collaborative, efficient, and fast.

It’s great to be alerted when something changed unexpectedly — what you might say is a “failing” test. But getting continuous insight into intended visual changes is also incredibly valuable. (We wrote all about it in a recent post.)

The end goal is to give teams confidence in every code change by showing them its full visual impact. Today, visual testing is the best solution to that challenge.

Although visual “correctness” frequently correlates with functionality, at the end of the day, functional testing isn’t designed to check visual elements. Visual testing is also not suited to replace all of your functional tests. It can, however, replace “visual” regression tests and help you write smaller, more focused tests.

To learn more about visual testing with Percy, check out these resources:
