The next post from the Redis replication series.

Previous parts:

Redis: replication, part 2 – Master-Slave replication, and Redis Sentinel

Redis: replication, part 3 – redis-py and work with Redis Sentinel from Python

The task now is to write an Ansible role for automated Redis replication cluster provisioning and configuration.

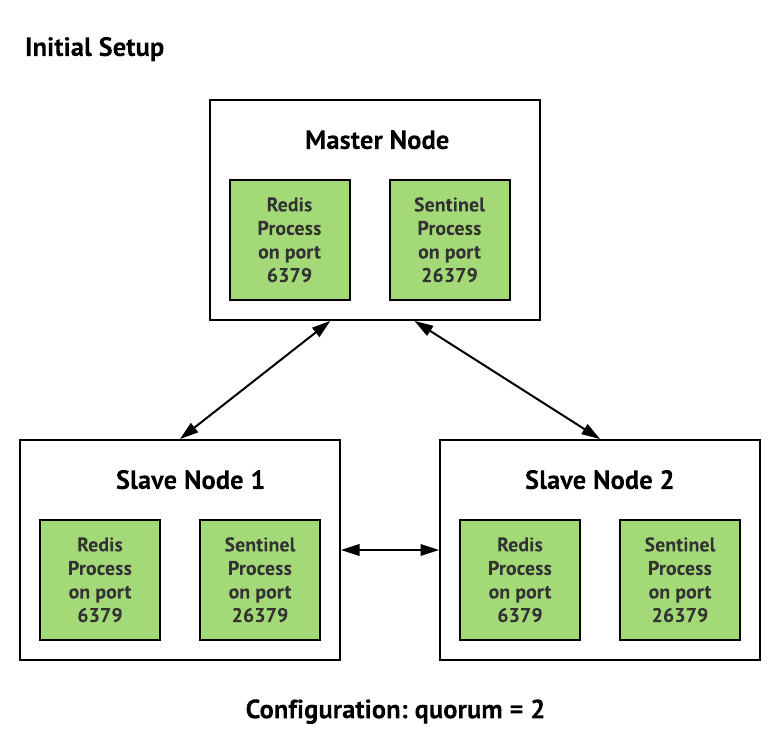

This role has to install and run a Redis Master node and its two Redis Slaves, plus Redis Sentinel instances that monitor the Redis nodes and run a failover to promote a new Master if the current one goes offline.

The task is a bit more complex because Redis is already running in our environments, and the new cluster must work alongside the existing one for some time, until the backend developers update all our projects to use replication and Sentinel.

To achieve this, the new Redis nodes will use port 6389 (the standard 6379 stays with the existing Redis nodes), and we will have to create our own systemd unit files to manage the new Redis and Sentinel instances.

The overall scheme is a typical one, with three servers:

- Console: a central host where some administrative tasks run. The Redis Master node and the first Sentinel instance will also be placed here

- App-1 and App-2: two of our application hosts, which will run the two Redis Slaves and two more Sentinels

Ansible role

Create directories for the new role:

$ mkdir -p roles/redis-cluster/{tasks,templates}

And add the role to the playbook:

...

- role: redis-cluster
  tags: common, app, redis-cluster
  when: "'backend-bastion' not in inventory_hostname"

...

Variables

Create variables to be used in this role:

...

### ROLES VARS ###

# redis-cluster

redis_cluster_config_home: "/etc/redis-cluster"

redis_cluster_logs_home: "/var/log/redis-cluster"

redis_cluster_data_home: "/var/lib/redis-cluster"

redis_cluster_runtime_home: "/var/run/redis-cluster"

redis_cluster_node_port: 6389

redis_cluster_master_host: "dev.backend-console-internal.example.com"

redis_cluster_name: "redis-{{ env }}-cluster"

redis_cluster_sentinel_port: 26389

...

Tasks

Create tasks file roles/redis-cluster/tasks/main.yml.

We will start writing the role with the Redis Master installation and startup.

Directories and files must be owned by the redis user.

For the Redis Master we will use the when: "'backend-console' in inventory_hostname" condition: our hostnames are dev.backend-console-internal.example.com for the Console (Master) host, and dev.backend-app1-internal.example.com and dev.backend-app2-internal.example.com for the Redis Slaves.
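The host selection described above boils down to a substring check on the inventory hostname. A minimal Python sketch of the same rule, for illustration only (the function name `redis_role` is hypothetical and not part of the role):

```python
def redis_role(inventory_hostname: str) -> str:
    """Mirror the role's `when:` conditions: Console hosts get the
    Master config, every other host gets the Slave config."""
    if "backend-console" in inventory_hostname:
        return "master"
    return "slave"


hosts = [
    "dev.backend-console-internal.example.com",
    "dev.backend-app1-internal.example.com",
    "dev.backend-app2-internal.example.com",
]

for host in hosts:
    # Print which config template each host would receive.
    print(host, "->", redis_role(host))
```

A substring check like this is simple, but it depends on the naming convention: a host without `backend-console` in its name will always be treated as a Slave.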

Describe tasks:

- name: "Install Redis"
  apt:
    name: "redis-server"
    state: present

- name: "Create {{ redis_cluster_config_home }}"
  file:
    path: "{{ redis_cluster_config_home }}"
    state: directory
    owner: "redis"
    group: "redis"

- name: "Create {{ redis_cluster_logs_home }}"
  file:
    path: "{{ redis_cluster_logs_home }}"
    state: directory
    owner: "redis"
    group: "redis"

- name: "Create {{ redis_cluster_data_home }}"
  file:
    path: "{{ redis_cluster_data_home }}"
    state: directory
    owner: "redis"
    group: "redis"

- name: "Copy redis-cluster-master.conf to {{ redis_cluster_config_home }}"
  template:
    src: "templates/redis-cluster-master.conf.j2"
    dest: "{{ redis_cluster_config_home }}/redis-cluster.conf"
    owner: "redis"
    group: "redis"
    mode: 0644
  when: "'backend-console' in inventory_hostname"

- name: "Copy Redis replication cluster systemd unit file"
  template:
    src: "templates/redis-cluster-replica-systemd.j2"
    dest: "/etc/systemd/system/redis-cluster.service"
    owner: "root"
    group: "root"
    mode: 0644

- name: "Redis replication cluster restart"
  systemd:
    name: "redis-cluster"
    state: restarted
    enabled: yes
    daemon_reload: yes

Templates

Create the template files.

We will start with systemd. Since the new Redis cluster has to work alongside the already existing Redis nodes and use non-standard ports and directories, we cannot use the default Redis systemd unit file.

So copy it and adjust it to our needs.

systemd

Create a roles/redis-cluster/templates/redis-cluster-replica-systemd.j2 template file:

[Unit]

Description=Redis relication cluster node

After=network.target

[Service]

Type=forking

ExecStart=/usr/bin/redis-server {{ redis_cluster_config_home }}/redis-cluster.conf

PIDFile={{ redis_cluster_runtime_home }}/redis-cluster.pid

TimeoutStopSec=0

Restart=always

User=redis

Group=redis

RuntimeDirectory=redis-cluster

ExecStop=/bin/kill -s TERM $MAINPID

UMask=007

PrivateTmp=yes

LimitNOFILE=65535

PrivateDevices=yes

ProtectHome=yes

ReadOnlyDirectories=/

ReadWriteDirectories=-{{ redis_cluster_data_home }}

ReadWriteDirectories=-{{ redis_cluster_logs_home }}

ReadWriteDirectories=-{{ redis_cluster_runtime_home }}

CapabilityBoundingSet=~CAP_SYS_PTRACE

ProtectSystem=true

ReadWriteDirectories=-{{ redis_cluster_config_home }}

[Install]

WantedBy=multi-user.target

The ExecStart=/usr/bin/redis-server {{ redis_cluster_config_home }}/redis-cluster.conf parameter passes our own Redis config file to the server.

Redis Master

Create a Redis Master config file template roles/redis-cluster/templates/redis-cluster-master.conf.j2:

bind 0.0.0.0

protected-mode yes

port {{ redis_cluster_node_port }}

tcp-backlog 511

timeout 0

tcp-keepalive 300

daemonize yes

supervised no

pidfile {{ redis_cluster_runtime_home }}/redis-cluster.pid

loglevel notice

logfile {{ redis_cluster_logs_home }}/redis-cluster.log

databases 16

stop-writes-on-bgsave-error yes

rdbcompression yes

rdbchecksum yes

dbfilename dump.rdb

dir {{ redis_cluster_data_home }}

slave-serve-stale-data yes

slave-read-only yes

repl-diskless-sync no

repl-diskless-sync-delay 5

repl-disable-tcp-nodelay no

slave-priority 100

appendonly yes

appendfilename "appendonly.aof"

appendfsync everysec

no-appendfsync-on-rewrite no

auto-aof-rewrite-percentage 100

auto-aof-rewrite-min-size 64mb

aof-load-truncated yes

lua-time-limit 5000

slowlog-log-slower-than 10000

slowlog-max-len 128

latency-monitor-threshold 0

notify-keyspace-events ""

hash-max-ziplist-entries 512

hash-max-ziplist-value 64

list-max-ziplist-size -2

list-compress-depth 0

set-max-intset-entries 512

zset-max-ziplist-entries 128

zset-max-ziplist-value 64

hll-sparse-max-bytes 3000

activerehashing yes

client-output-buffer-limit normal 0 0 0

client-output-buffer-limit slave 256mb 64mb 60

client-output-buffer-limit pubsub 32mb 8mb 60

hz 10

aof-rewrite-incremental-fsync yes

Later we will have to tune it and set more appropriate parameters, but for now the defaults can stay: apart from the templated file paths, only bind and port are updated.

Deploy it using ansible_exec.sh. In the future it will be deployed via a Jenkins job:
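Because the unit file uses Type=forking with PIDFile= and the Redis config sets daemonize yes with pidfile, the two paths should point to the same file, otherwise systemd may not find the main process. A minimal Python sketch of such a consistency check over the rendered files; the helper names and the sample strings are illustrative assumptions, not part of the role:

```python
import re


def unit_pidfile(unit_text: str):
    """Return the PIDFile= value from a rendered systemd unit, or None."""
    match = re.search(r"^PIDFile=(.+)$", unit_text, flags=re.MULTILINE)
    return match.group(1).strip() if match else None


def conf_pidfile(conf_text: str):
    """Return the `pidfile` value from a rendered Redis config, or None."""
    match = re.search(r"^pidfile\s+(\S+)", conf_text, flags=re.MULTILINE)
    return match.group(1) if match else None


def pidfiles_match(unit_text: str, conf_text: str) -> bool:
    """True only if both files define a PID file and the paths are equal."""
    unit_path = unit_pidfile(unit_text)
    conf_path = conf_pidfile(conf_text)
    return unit_path is not None and unit_path == conf_path


# Sample strings as the templates would render with the variables above.
unit = """[Service]
Type=forking
ExecStart=/usr/bin/redis-server /etc/redis-cluster/redis-cluster.conf
PIDFile=/var/run/redis-cluster/redis-cluster.pid
"""
conf = """daemonize yes
pidfile /var/run/redis-cluster/redis-cluster.pid
"""

print(pidfiles_match(unit, conf))
```

Both templates take the path from `redis_cluster_runtime_home`, so they stay in sync as long as neither path is edited by hand.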

$ ./ansible_exec.sh -t redis-cluster

Tags: redis-cluster

Env: mobilebackend-dev

...

Check Redis Master status:

root@bttrm-dev-console:/home/admin# systemctl status redis-cluster.service

● redis-cluster.service - Redis relication cluster node

Loaded: loaded (/etc/systemd/system/redis-cluster.service; enabled; vendor preset: enabled)

Active: active (running) since Wed 2019-04-03 14:05:46 EEST; 9s ago

Process: 22125 ExecStop=/bin/kill -s TERM $MAINPID (code=exited, status=0/SUCCESS)

Process: 22131 ExecStart=/usr/bin/redis-server /etc/redis-cluster/redis-cluster-master.conf (code=exited, status=0/SUCCESS)

Main PID: 22133 (redis-server)

Tasks: 3 (limit: 4915)

Memory: 1.1M

CPU: 14ms

CGroup: /system.slice/redis-cluster.service

└─22133 /usr/bin/redis-server 0.0.0.0:6389

Apr 03 14:05:46 bttrm-dev-console systemd[1]: Starting Redis relication cluster node...

Apr 03 14:05:46 bttrm-dev-console systemd[1]: redis-cluster.service: PID file /var/run/redis/redis-cluster.pid not readable (yet?) after start: No such file or directory

Apr 03 14:05:46 bttrm-dev-console systemd[1]: Started Redis relication cluster node.

OK.

Redis Slaves

Add a config for the Redis Slaves: roles/redis-cluster/templates/redis-cluster-slave.conf.j2.

It is almost the same as the Master's config, but adds the slaveof directive:

slaveof {{ redis_cluster_master_host }} {{ redis_cluster_node_port }}

bind 0.0.0.0

port {{ redis_cluster_node_port }}

pidfile {{ redis_cluster_runtime_home }}/redis-cluster.pid

logfile {{ redis_cluster_logs_home }}/redis-cluster.log

dir {{ redis_cluster_data_home }}

protected-mode yes

tcp-backlog 511

timeout 0

tcp-keepalive 300

...

Add a task.

Here the when: "'backend-console' not in inventory_hostname" condition is used to copy this file to App-1 and App-2 only:

...

- name: "Copy redis-cluster-slave.conf to {{ redis_cluster_config_home }}"
  template:
    src: "templates/redis-cluster-slave.conf.j2"
    dest: "{{ redis_cluster_config_home }}/redis-cluster.conf"
    owner: "redis"
    group: "redis"
    mode: 0644
  when: "'backend-console' not in inventory_hostname"

...

Deploy, check:

root@bttrm-dev-app-1:/home/admin# redis-cli -p 6389 info replication

Replication

role:slave

master_host:dev.backend-console-internal.example.com

master_port:6389

master_link_status:down

master_last_io_seconds_ago:-1

...
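The `info replication` output is a list of `key:value` lines, so it is easy to check from a script. Note that `master_link_status:down` right after a deploy typically just means the initial sync has not finished yet; it should switch to `up` shortly after. A small Python sketch, assuming the output has been captured as text (the function names are illustrative):

```python
def parse_info(text: str) -> dict:
    """Parse `redis-cli info` output (key:value lines) into a dict."""
    info = {}
    for line in text.splitlines():
        line = line.strip()
        # Skip blanks, section headers, and anything without a key:value pair.
        if not line or line.startswith("#") or ":" not in line:
            continue
        key, value = line.split(":", 1)
        info[key] = value
    return info


def replica_is_synced(info: dict) -> bool:
    """A Slave is considered healthy once its link to the Master is up."""
    return info.get("role") == "slave" and info.get("master_link_status") == "up"


sample = """# Replication
role:slave
master_host:dev.backend-console-internal.example.com
master_port:6389
master_link_status:down
master_last_io_seconds_ago:-1
"""

info = parse_info(sample)
print(info["role"], info["master_link_status"], replica_is_synced(info))
```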

Check replication

Add a key on the Master:

root@bttrm-dev-console:/home/admin# redis-cli -p 6389 set test 'test'

OK

Get it on slaves:

root@bttrm-dev-app-1:/home/admin# redis-cli -p 6389 get test

"test"

root@bttrm-dev-app-2:/home/admin# redis-cli -p 6389 get test

"test"

Redis Sentinel

Add a Redis Sentinel config, one for all hosts: roles/redis-cluster/templates/redis-cluster-sentinel.conf.j2.

Use the sentinel announce-ip option here; see the post "Redis: Sentinel – bind 0.0.0.0, the localhost issue and the announce-ip option" for details:

sentinel monitor {{ redis_cluster_name }} {{ redis_cluster_master_host }} {{ redis_cluster_node_port }} 2

bind 0.0.0.0

port {{ redis_cluster_sentinel_port }}

sentinel announce-ip {{ hostvars[inventory_hostname]['ansible_default_ipv4']['address'] }}

sentinel down-after-milliseconds {{ redis_cluster_name }} 6001

sentinel failover-timeout {{ redis_cluster_name }} 60000

sentinel parallel-syncs {{ redis_cluster_name }} 1

daemonize yes

logfile {{ redis_cluster_logs_home }}/redis-sentinel.log

pidfile {{ redis_cluster_runtime_home }}/redis-sentinel.pid
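The trailing 2 in the sentinel monitor line is the quorum. As far as the Sentinel documentation describes it, the quorum is the number of Sentinels that must agree that the Master is unreachable, while the failover itself also has to be authorized by a majority of all known Sentinels. A rough Python sketch of that arithmetic for this three-Sentinel setup (the function names are illustrative, and this is a simplification of the real election):

```python
def majority(total_sentinels: int) -> int:
    """Smallest number of Sentinels that forms a majority."""
    return total_sentinels // 2 + 1


def can_mark_odown(agreeing: int, quorum: int) -> bool:
    """The Master is flagged objectively down once `quorum` Sentinels agree."""
    return agreeing >= quorum


def can_failover(reachable: int, total: int, quorum: int) -> bool:
    """A failover needs the quorum to flag the Master and a majority of
    all known Sentinels to authorize the leader."""
    return reachable >= max(quorum, majority(total))


# Three Sentinels, quorum 2: how many can be lost before failover stops working?
for alive in (3, 2, 1):
    print(alive, can_mark_odown(alive, 2), can_failover(alive, 3, 2))
```

With three Sentinels and a quorum of 2, one Sentinel can be lost together with the Master and a failover is still possible, which matches the `#quorum 2/2` line seen later in the failover test.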

Add a template for the Sentinel’s service systemd unit file – roles/redis-cluster/templates/redis-cluster-sentinel-systemd.j2:

[Unit]

Description=Redis replication Sentinel instance

After=network.target

[Service]

Type=forking

ExecStart=/usr/bin/redis-server {{ redis_cluster_config_home }}/redis-sentinel.conf --sentinel

PIDFile={{ redis_cluster_runtime_home }}/redis-sentinel.pid

TimeoutStopSec=0

Restart=always

User=redis

Group=redis

ExecStop=/bin/kill -s TERM $MAINPID

ProtectSystem=true

ReadWriteDirectories=-{{ redis_cluster_logs_home }}

ReadWriteDirectories=-{{ redis_cluster_config_home }}

ReadWriteDirectories=-{{ redis_cluster_runtime_home }}

[Install]

WantedBy=multi-user.target

Add a Sentinel stop task at the very beginning of roles/redis-cluster/tasks/main.yml; otherwise, if a Sentinel instance is running during the deploy, it will overwrite Ansible's changes in its config:

- name: "Install Redis"
  apt:
    name: "redis-server"
    state: present

- name: "Redis replication Sentinel stop"
  systemd:
    name: "redis-sentinel"
    state: stopped
  ignore_errors: true

...

Add the file copy tasks and the Sentinel start:

...

- name: "Copy redis-cluster-sentinel.conf to {{ redis_cluster_config_home }}"
  template:
    src: "templates/redis-cluster-sentinel.conf.j2"
    dest: "{{ redis_cluster_config_home }}/redis-sentinel.conf"
    owner: "redis"
    group: "redis"
    mode: 0644

...

- name: "Copy Redis replication Sentinel systemd unit file"
  template:
    src: "templates/redis-cluster-sentinel-systemd.j2"
    dest: "/etc/systemd/system/redis-sentinel.service"
    owner: "root"
    group: "root"
    mode: 0644

...

- name: "Redis replication Sentinel restart"
  systemd:
    name: "redis-sentinel"
    state: restarted
    enabled: yes
    daemon_reload: yes

The documentation says Sentinels must be started with a pause of at least 30 seconds between them, but it works (for now) without it.

We will check this during Dev/Stage testing.

Deploy, check:

root@bttrm-dev-console:/home/admin# redis-cli -p 26389 info sentinel

Sentinel

sentinel_masters:1

sentinel_tilt:0

sentinel_running_scripts:0

sentinel_scripts_queue_length:0

sentinel_simulate_failure_flags:0

master0:name=redis-dev-cluster,status=ok,address=127.0.0.1:6389,slaves=2,sentinels=3
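The `master0` line packs the whole cluster state into one comma-separated record, which makes it handy for a quick health check. A minimal Python sketch that parses the line from the output above (the function name is illustrative):

```python
def parse_sentinel_master(line: str) -> dict:
    """Parse a `masterN:name=...,status=...` line from `info sentinel`."""
    # Split off the `masterN` prefix at the first colon only,
    # so the colon inside address=host:port is preserved.
    _, _, fields = line.partition(":")
    result = {}
    for field in fields.split(","):
        key, _, value = field.partition("=")
        result[key] = value
    # Convert the counters to integers for easier comparison.
    for key in ("slaves", "sentinels"):
        if key in result:
            result[key] = int(result[key])
    return result


line = "master0:name=redis-dev-cluster,status=ok,address=127.0.0.1:6389,slaves=2,sentinels=3"
master = parse_sentinel_master(line)

# For this setup we expect an OK status, two Slaves and three Sentinels.
healthy = master["status"] == "ok" and master["slaves"] == 2 and master["sentinels"] == 3
print(master["name"], master["address"], healthy)
```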

Testing Sentinel failover

Run tail -f for logs on all instances:

root@bttrm-dev-app-1:/etc/redis-cluster# tail -f /var/log/redis-cluster/redis-sentinel.log

On the Master, check the current Master's IP:

root@bttrm-dev-console:/etc/redis-cluster# redis-cli -h 10.0.2.104 -p 26389 sentinel get-master-addr-by-name redis-dev-cluster

1) "127.0.0.1"

2) "6389"

And replication status:

root@bttrm-dev-console:/etc/redis-cluster# redis-cli -h 10.0.2.104 -p 6389 info replication

Replication

role:master

connected_slaves:2

...

Role – Master, two slaves – all good.

Stop the Master’s Redis node:

root@bttrm-dev-console:/etc/redis-cluster# systemctl stop redis-cluster.service

The log on App-2:

11976:X 09 Apr 13:12:13.869 # +sdown master redis-dev-cluster 10.0.2.104 6389

11976:X 09 Apr 13:12:13.983 # +new-epoch 1

11976:X 09 Apr 13:12:13.984 # +vote-for-leader 8fd5f2bb50132db0dc528e69089cc2f9d82e01d0 1

11976:X 09 Apr 13:12:14.994 # +odown master redis-dev-cluster 10.0.2.104 6389 #quorum 2/2

11976:X 09 Apr 13:12:14.994 # Next failover delay: I will not start a failover before Tue Apr 9 13:14:14 2019

11976:X 09 Apr 13:12:15.105 # +config-update-from sentinel 8fd5f2bb50132db0dc528e69089cc2f9d82e01d0 10.0.2.71 26389 @ redis-dev-cluster 10.0.2.104 6389

11976:X 09 Apr 13:12:15.105 # +switch-master redis-dev-cluster 10.0.2.104 6389 10.0.2.71 6389

- sdown master: this Sentinel thinks the Master is down

- odown master ... #quorum 2/2: both Sentinels on App-1 and App-2 agreed that the Master is down

- switch-master ... 10.0.2.71: Sentinel reconfigured the Redis node on 10.0.2.71 from the Slave role to the new Master role
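The Sentinel log events listed above follow a regular format, so a failover can also be detected from a script. A small Python sketch that extracts the events and the old and new Master addresses from lines like the ones in the App-2 log; the regular expression is an assumption based only on the log lines shown in this post:

```python
import re

# pid:X, date, time, level marker (# or *), then the +event/-event name and its details.
EVENT_RE = re.compile(r"^\d+:X \d{2} \w{3} [\d:.]+ [#*] ([+-][\w-]+) (.*)$")


def parse_event(line: str):
    """Return (event, details) for a Sentinel event line, or None otherwise."""
    match = EVENT_RE.match(line.strip())
    if not match:
        return None
    return match.group(1), match.group(2)


def find_switch(lines):
    """Return (old_addr, new_addr) from the first +switch-master event, or None."""
    for line in lines:
        event = parse_event(line)
        if event and event[0] == "+switch-master":
            _name, old_ip, old_port, new_ip, new_port = event[1].split()
            return f"{old_ip}:{old_port}", f"{new_ip}:{new_port}"
    return None


log = [
    "11976:X 09 Apr 13:12:13.869 # +sdown master redis-dev-cluster 10.0.2.104 6389",
    "11976:X 09 Apr 13:12:14.994 # +odown master redis-dev-cluster 10.0.2.104 6389 #quorum 2/2",
    "11976:X 09 Apr 13:12:14.994 # Next failover delay: I will not start a failover before Tue Apr 9 13:14:14 2019",
    "11976:X 09 Apr 13:12:15.105 # +switch-master redis-dev-cluster 10.0.2.104 6389 10.0.2.71 6389",
]

print(find_switch(log))
```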

Did it all work?

Check on 10.0.2.71, which is App-1:

root@bttrm-dev-app-1:/etc/redis-cluster# redis-cli -p 6389 info replication

Replication

role:master

connected_slaves:1

...

Turn on Redis Master on the Console/Master host:

root@bttrm-dev-console:/etc/redis-cluster# systemctl start redis-cluster.service

Check App-2 log:

11976:X 09 Apr 13:17:23.954 # -sdown slave 10.0.2.104:6389 10.0.2.104 6389 @ redis-dev-cluster 10.0.2.71 6389

11976:X 09 Apr 13:17:33.880 * +convert-to-slave slave 10.0.2.104:6389 10.0.2.104 6389 @ redis-dev-cluster 10.0.2.71 6389

Check the replication status on the old Master:

root@bttrm-dev-console:/etc/redis-cluster# redis-cli -p 6389 info replication

Replication

role:slave

master_host:10.0.2.71

master_port:6389

master_link_status:up

...

The old Master is now a Slave.

Everything works.

Done.
