Acknowledgements

We would like to thank our mentors, Asaf Nisani and Yoav Lebendiker, for their guidance throughout the project.

We thank Applied Materials for hosting us and providing the opportunity to work on a real-world industry project, and ExtraTech for organizing and supporting the collaboration.

Project Overview

Our project aimed to generate highly specific SEM wafer images based on user input.

Early on, we realized that the most intuitive and accurate way for users to express their intent is through segmentation: a simple structural layout that represents the microstructure they wish to generate.

To support this approach, we needed a high-quality paired dataset of SEM images and their corresponding segmentation masks. Since such data does not naturally exist, we generated it ourselves.

Dataset Creation

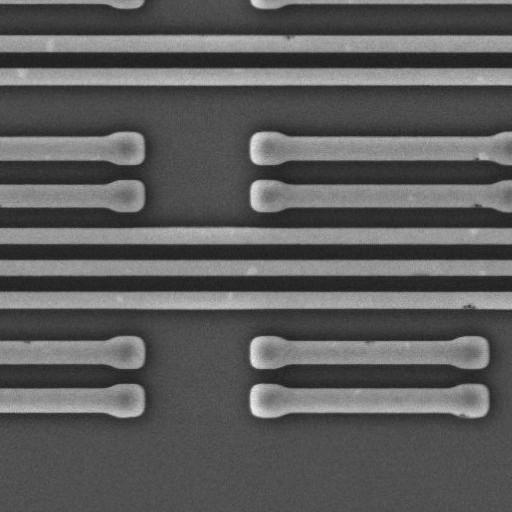

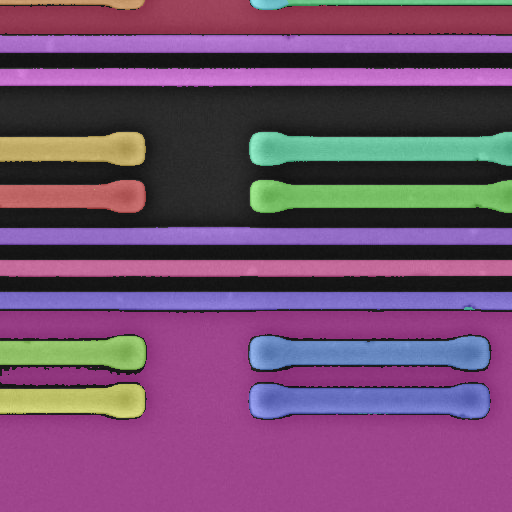

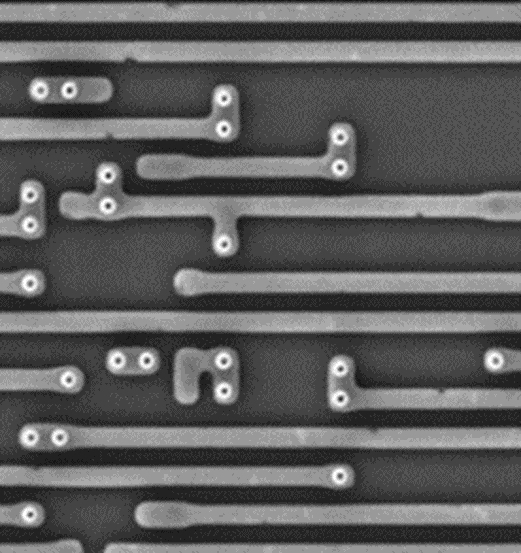

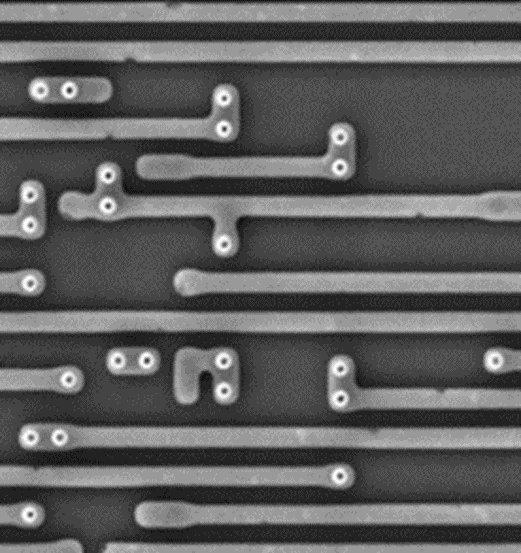

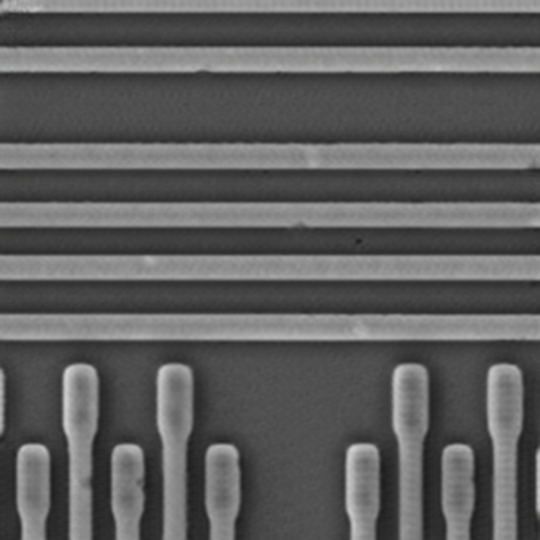

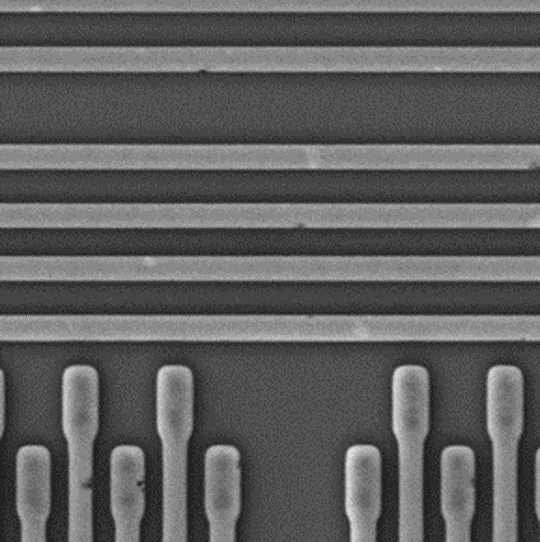

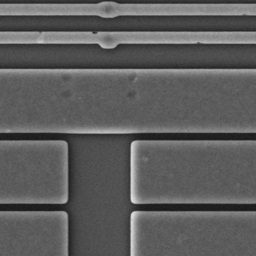

We converted the MIIC (Microscopic Images of Integrated Circuits) dataset, originally designed for anomaly detection and image inpainting, into segmentation maps using SAM-2.

This process produced precise segmentation results without requiring any additional training.
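As a rough illustration of the last step of such a conversion, the sketch below flattens per-region masks into a single color-coded segmentation map. It assumes the segmenter returns one boolean mask per detected region. The function name `masks_to_color_map`, the palette, and the size-based ordering are hypothetical choices for this example, not part of the SAM-2 API or a record of our exact pipeline.

```python
import numpy as np

def masks_to_color_map(masks, palette, background=(0, 0, 0)):
    """Paint each boolean mask with a palette color on one RGB canvas.

    masks:   list of (H, W) boolean arrays, one per segmented region (assumed input).
    palette: list of RGB tuples (hypothetical); reused cyclically if too short.
    """
    if not masks:
        raise ValueError("need at least one mask")
    height, width = masks[0].shape
    canvas = np.empty((height, width, 3), dtype=np.uint8)
    canvas[:] = background
    # Paint larger regions first so smaller structures stay visible on top.
    order = sorted(range(len(masks)), key=lambda i: int(masks[i].sum()), reverse=True)
    for rank, idx in enumerate(order):
        canvas[masks[idx]] = palette[rank % len(palette)]
    return canvas
```

Because colors are assigned by a fixed rule, the same kind of structure tends to receive the same color across images, which is what makes the resulting maps usable as consistent model inputs.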

Each sample in the final dataset pairs an SEM image with its segmentation mask, which enabled supervised training.


The images were taken from the MIIC (Microscopic Images of Integrated Circuits) dataset for anomaly detection and image inpainting.

Model Training and Comparison

With the dataset prepared, we moved on to model training and compared two GAN-based image-to-image translation approaches: Pix2Pix and CycleGAN.

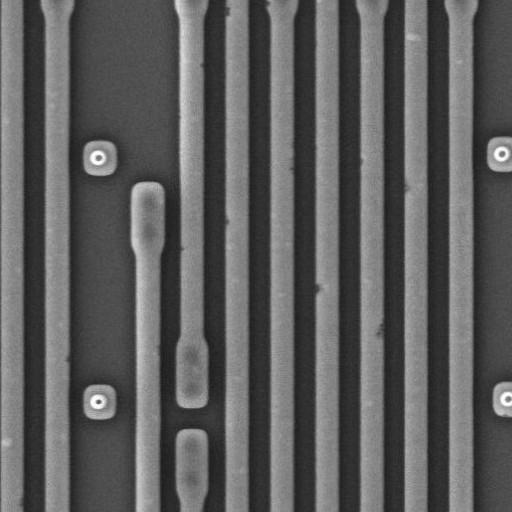

Pix2Pix

Pix2Pix is well-suited for scenarios where paired training data is available. It learns a direct mapping from segmentation masks to SEM images using a generator-discriminator framework.
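To make the generator-discriminator idea concrete, here is a minimal NumPy sketch of the generator's objective as described in the original Pix2Pix paper: an adversarial term plus a weighted L1 reconstruction term. The function name and arguments are hypothetical, and `lambda_l1=100.0` is the paper's default rather than a value confirmed for our runs.

```python
import numpy as np

def pix2pix_generator_loss(disc_prob_fake, generated, target, lambda_l1=100.0):
    """Adversarial term plus weighted L1 reconstruction term for the generator.

    disc_prob_fake: discriminator probabilities (0..1) that the generated
                    image is real, e.g. one value per image patch.
    generated, target: same-shaped arrays (generated and real SEM image).
    """
    probs = np.clip(np.asarray(disc_prob_fake, dtype=np.float64), 1e-7, 1.0)
    # The generator is rewarded when the discriminator says "real".
    adversarial = float(-np.mean(np.log(probs)))
    # The L1 term ties the output to the paired ground-truth image.
    diff = np.asarray(generated, dtype=np.float64) - np.asarray(target, dtype=np.float64)
    l1 = float(np.mean(np.abs(diff)))
    return adversarial + lambda_l1 * l1
```

The L1 term is only available because each mask has a matching real image, which is why paired data matters for this model.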

We trained the model on 3,000 SEM images and their 3,000 corresponding segmentation masks, split into training and test sets.
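A minimal sketch of a pair-preserving split is shown below. The 10% test fraction, the seed, and the function name `split_pairs` are assumptions for illustration; the exact ratio we used is not stated here.

```python
import random

def split_pairs(sample_ids, test_fraction=0.1, seed=0):
    """Shuffle paired sample IDs and split them into train and test lists.

    Each ID stands for one (SEM image, mask) pair, so an image and its mask
    always land in the same split. test_fraction is an assumed value.
    """
    ids = sorted(sample_ids)          # deterministic starting order
    random.Random(seed).shuffle(ids)  # reproducible shuffle
    n_test = max(1, round(len(ids) * test_fraction))
    return ids[n_test:], ids[:n_test]

train_ids, test_ids = split_pairs(range(3000))
print(len(train_ids), len(test_ids))  # 2700 300
```

Splitting by pair ID rather than by file keeps the test set free of masks whose images were seen during training.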

After 100 epochs, the results were strong, so we continued training for 50 additional epochs with a reduced learning rate to improve stability.
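The two-phase schedule can be sketched as a small function. The linear decay shape and the base rate of `2e-4` are assumptions chosen for illustration; the text above only states that the rate was reduced for the final 50 epochs.

```python
def learning_rate(epoch, base_lr=2e-4, constant_epochs=100, decay_epochs=50):
    """Learning rate for a 0-indexed epoch: flat at first, then linear decay.

    base_lr and the linear decay shape are assumed, not confirmed settings.
    """
    if epoch < constant_epochs:
        return base_lr
    remaining = constant_epochs + decay_epochs - epoch
    return base_lr * max(remaining, 0) / (decay_epochs + 1)

for epoch in (0, 99, 100, 125, 149):
    print(epoch, learning_rate(epoch))
```

A gradually shrinking step size lets the generator and discriminator settle instead of oscillating late in training.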

Figure: Pix2Pix sample results (input, output, original).

Evaluation Metrics:

- FID: 14.329

- LPIPS: 0.033

- PSNR: 33.779

- SSIM: 0.951

These results indicate that Pix2Pix generated sharp, realistic SEM images with strong structural similarity to the ground truth.
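For reading these numbers: lower is better for FID and LPIPS, while higher is better for PSNR and SSIM. Of the four, PSNR is simple enough to show in a few lines. The sketch below is a generic NumPy implementation for 8-bit images, not the evaluation code that produced the values above.

```python
import numpy as np

def psnr(reference, generated, max_value=255.0):
    """Peak signal-to-noise ratio in dB between two same-shaped images."""
    ref = np.asarray(reference, dtype=np.float64)
    gen = np.asarray(generated, dtype=np.float64)
    if ref.shape != gen.shape:
        raise ValueError("images must have the same shape")
    mse = np.mean((ref - gen) ** 2)  # mean squared pixel error
    if mse == 0:
        return float("inf")          # identical images
    return float(10.0 * np.log10(max_value ** 2 / mse))
```

PSNR only measures pixel-wise error, which is why it is reported alongside perceptual (LPIPS), structural (SSIM), and distribution-level (FID) metrics.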

CycleGAN

CycleGAN is designed for unpaired image translation. Although our dataset was paired, we included CycleGAN in the comparison due to its flexibility and widespread use in scientific imaging tasks.

We trained CycleGAN for 200 epochs, applying several optimizations along the way:

- Reduced the identity loss weight to better preserve grayscale SEM texture

- Increased discriminator augmentation to mitigate overfitting
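To show where the identity loss weight enters, here is a minimal sketch of the non-adversarial part of a CycleGAN generator objective in a common formulation. The generators are passed in as plain callables, and the names and weight values (`lambda_cycle=10.0`, `lambda_identity=0.1`) are hypothetical, not our actual settings.

```python
import numpy as np

def l1(a, b):
    """Mean absolute difference between two same-shaped arrays."""
    return float(np.mean(np.abs(np.asarray(a, dtype=float) - np.asarray(b, dtype=float))))

def cycle_and_identity_loss(mask, sem, g_mask2sem, g_sem2mask,
                            lambda_cycle=10.0, lambda_identity=0.1):
    """Weighted cycle-consistency and identity terms (adversarial terms omitted)."""
    # Cycle consistency: translating there and back should recover the input.
    cycle = l1(g_sem2mask(g_mask2sem(mask)), mask) + l1(g_mask2sem(g_sem2mask(sem)), sem)
    # Identity: a generator fed an image already in its target domain
    # should leave it unchanged.
    identity = l1(g_mask2sem(sem), sem) + l1(g_sem2mask(mask), mask)
    return lambda_cycle * cycle + lambda_cycle * lambda_identity * identity
```

Lowering `lambda_identity` shrinks the second term, giving the generator more freedom to change its input.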

Figure: CycleGAN sample results (input, output, original).

Evaluation Metrics:

- FID: 55.470

- LPIPS: 0.628

- PSNR: 13.127

- SSIM: 0.370

While the model improved during training, its outputs remained noticeably weaker than those produced by Pix2Pix, which benefited from the supervised setup.

Limitations and Practical Challenges

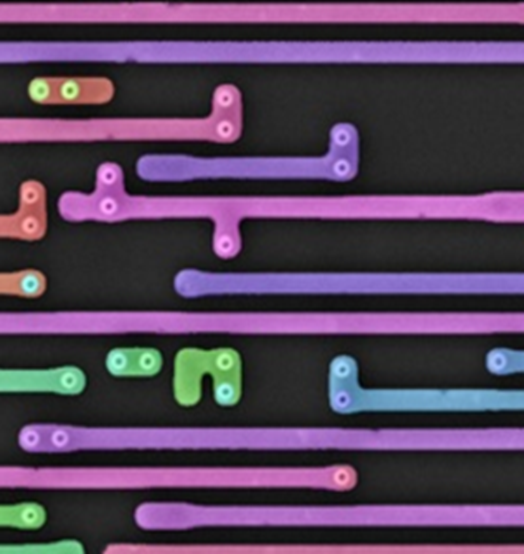

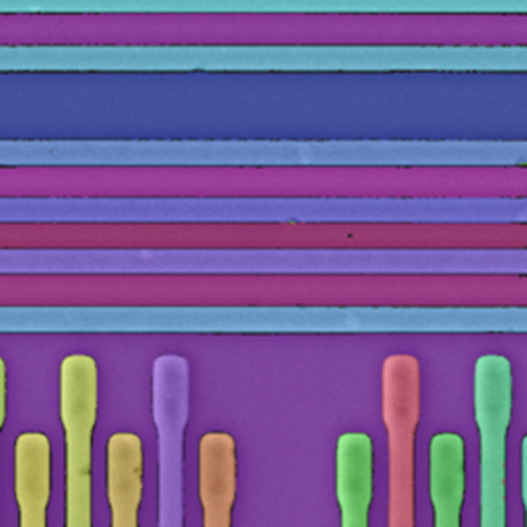

During the project, we identified an important limitation in the segmentation-based workflow.

Although segmentation masks provide an intuitive way for users to describe structure, the masks in our dataset were not randomly colored. Their colors were automatically assigned by SAM-2, which applies consistent and specific color patterns for background and different structures.

A new user drawing their own segmentation mask cannot know these exact color rules. As a result, user-generated masks may differ from the distribution the model was trained on. This mismatch leads to less accurate and less reliable outputs, even when the structural layout is correct.

Figure: sample results illustrating this limitation (input, output, original).
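One simple way to surface this mismatch is to check a user-drawn mask for colors the model never saw during training. The sketch below assumes masks are RGB arrays and that the training palette is known as a list of RGB tuples; the function name and inputs are hypothetical.

```python
import numpy as np

def unseen_colors(mask_rgb, training_palette):
    """Return the RGB colors in mask_rgb that are absent from training_palette.

    mask_rgb:         (H, W, 3) integer array drawn by the user (assumed format).
    training_palette: iterable of RGB tuples seen during training (assumed known).
    """
    pixels = np.asarray(mask_rgb).reshape(-1, 3)
    used = {tuple(int(c) for c in color) for color in np.unique(pixels, axis=0)}
    return used - {tuple(color) for color in training_palette}
```

A non-empty result signals that the mask lies outside the training distribution, even if its structural layout is valid.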

Additional Evaluation: Real vs. Generated Image Classification

To further evaluate the realism of the generated images, we trained a separate classification model to distinguish between real SEM images and generated ones.

The idea was simple: if the synthetic images are truly realistic, the classifier should struggle to tell them apart from real data and achieve accuracy close to 0.5.

We trained the classifier on a balanced dataset containing both real SEM images and images generated by our models.

However, the classifier's accuracy was far above the 0.5 chance level:

- 0.96 accuracy on Pix2Pix-generated images

- 0.99 accuracy on CycleGAN-generated images

These results suggest that, despite strong visual quality and favorable similarity metrics, the generated images still contain subtle artifacts or patterns that allow a neural network to reliably distinguish them from real SEM data.
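The 0.5 reference point follows directly from the balanced setup. The toy example below uses made-up labels (1 = real, 0 = generated) to show that a classifier with no usable signal scores exactly 0.5 on a balanced set; it is an illustration of the baseline, not our classifier.

```python
def accuracy(labels, predictions):
    """Fraction of predictions that match the labels."""
    if len(labels) != len(predictions):
        raise ValueError("labels and predictions must have the same length")
    correct = sum(int(y == p) for y, p in zip(labels, predictions))
    return correct / len(labels)

# Balanced toy set: 50 real images (label 1) and 50 generated ones (label 0).
labels = [1] * 50 + [0] * 50
always_real = [1] * 100  # a classifier that cannot tell the classes apart
print(accuracy(labels, always_real))  # 0.5
```

Against that baseline, scores of 0.96 and 0.99 mean the classifier finds a reliable cue in almost every image.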

Next Steps and Future Work

These findings open several directions for future work.

First, we plan to apply explainability techniques to understand which features the classifier relies on when separating real and generated images. This may reveal hidden biases, texture inconsistencies, or frequency-domain artifacts that are not obvious to the human eye.
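As one possible starting point for the frequency-domain part of that analysis, the sketch below computes a radially averaged power spectrum with NumPy. Comparing the curves for real and generated images is an assumption about how such artifacts might be found, not a result we have obtained.

```python
import numpy as np

def radial_power_spectrum(image):
    """Radially averaged power spectrum of a 2-D grayscale image.

    Index 0 is the DC component; higher indices are higher spatial frequencies.
    """
    img = np.asarray(image, dtype=np.float64)
    # Shift the zero-frequency component to the center of the spectrum.
    power = np.abs(np.fft.fftshift(np.fft.fft2(img))) ** 2
    height, width = img.shape
    y, x = np.indices((height, width))
    radius = np.hypot(y - height // 2, x - width // 2).astype(int)
    # Average the power over all pixels that share the same integer radius.
    totals = np.bincount(radius.ravel(), weights=power.ravel())
    counts = np.bincount(radius.ravel())
    return totals / np.maximum(counts, 1)
```

If generated images show systematically different energy at high frequencies, that would be a candidate explanation for what the classifier detects.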

Second, we aim to further refine the generative models through additional training, architectural adjustments, or loss-function tuning to reduce these detectable differences.

Another possible direction is to train a model that learns the residual noise patterns specific to real SEM images and injects this learned signal into generated images as a post-processing step to improve realism.

Most importantly, we plan to test the core goal of this work: using synthetic data for downstream tasks. We will train a model for a real-world SEM task on generated images and evaluate it on real SEM test data.

If performance transfers successfully, it would validate synthetic SEM generation as an effective tool for data augmentation and model training.
