Deep learning teaches computers to do what comes naturally to humans: learn by example. It captures complex patterns in the given examples and finds the interactions between them, which it then uses to predict the desired output.

Capturing interactions

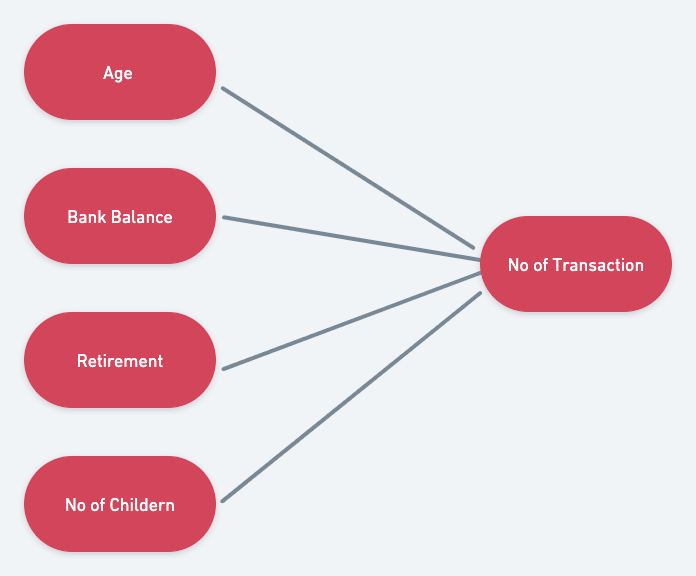

Suppose you have to predict the number of transactions using factors like age, bank balance, retirement status, etc.

You can use linear regression here. Linear regression fits a straight line to the data and uses that line to make predictions.
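To make the idea of fitting a straight line concrete, here is a minimal sketch using NumPy's `polyfit`. The numbers are made up for illustration (they are not from a real bank dataset), and `predict_transactions` is just a hypothetical helper name.

```python
import numpy as np

# Hypothetical toy data: bank balance (in thousands) vs. number of transactions.
balance = np.array([1.0, 2.0, 3.0, 4.0, 5.0])
transactions = np.array([3.1, 4.9, 7.2, 8.8, 11.0])

# Fit a straight line: transactions ≈ slope * balance + intercept.
# polyfit returns the coefficients from the highest degree down.
slope, intercept = np.polyfit(balance, transactions, deg=1)

def predict_transactions(x):
    """Predict the number of transactions from the fitted line."""
    return slope * x + intercept

print(slope, intercept)
print(predict_transactions(6.0))
```

The whole model is just two numbers, a slope and an intercept, which is exactly why it cannot bend to follow more complicated patterns.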

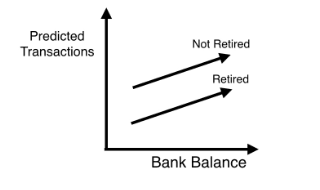

But something is missing! It seems unrealistic to think that all the variables are independent of each other. See the parallel lines? They mean there is no interaction between the variables.
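Here is a rough sketch of what "no interaction" costs you. The data is invented: I assume the effect of balance on transactions is different for retired and non-retired customers. A purely additive linear model can only draw parallel lines for the two groups, while adding a product term (`balance * retired`) lets the slopes differ.

```python
import numpy as np

# Hypothetical toy data: the slope of transactions vs. balance depends on
# retirement status, i.e. the two variables interact.
balance = np.array([1.0, 2.0, 3.0, 4.0, 1.0, 2.0, 3.0, 4.0])
retired = np.array([0.0, 0.0, 0.0, 0.0, 1.0, 1.0, 1.0, 1.0])
transactions = 2.0 * balance - 1.5 * balance * retired

ones = np.ones_like(balance)

# Additive model: one shared slope for balance, so the two groups get parallel lines.
X_additive = np.column_stack([balance, retired, ones])
coef_additive, *_ = np.linalg.lstsq(X_additive, transactions, rcond=None)
error_additive = np.abs(X_additive @ coef_additive - transactions).max()

# Same model plus an explicit interaction column (balance * retired).
X_interaction = np.column_stack([balance, retired, balance * retired, ones])
coef_interaction, *_ = np.linalg.lstsq(X_interaction, transactions, rcond=None)
error_interaction = np.abs(X_interaction @ coef_interaction - transactions).max()

print("max error without interaction:", error_additive)
print("max error with interaction:", error_interaction)
```

Here the interaction had to be added by hand. The appeal of a neural network is that it can learn such interactions from the data instead.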

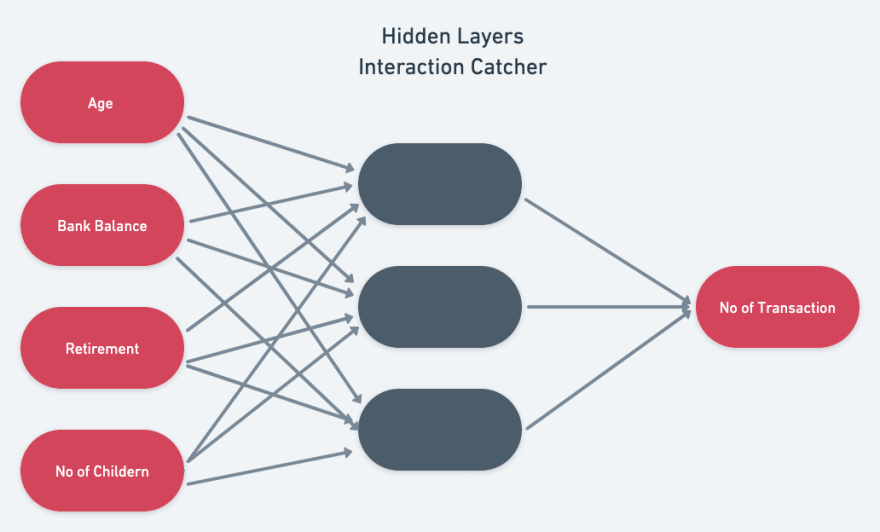

This is where neurons come into the picture. Multiple neurons are stacked together to find interactions in the input data; together they form a neural network, something like the one pictured.

A neuron is like a black box with some magic inside. When data is sent through it for the first time, it uses its magic to predict something.

The first time, the magic usually fails. But as neurons predict more things, they get better at this witchcraft. And the dystopia is here: they can and will replace you in the job market.

With a complex enough neural network and enough data and time, you can capture almost any intricate interaction between data points.

Any neural network starts with an input layer and ends with an output layer. The magic happens in the hidden layers in between. They are hidden for now; as you go deeper into deep learning, you will find them.

One of the largest neural networks is GPT-3. It has 175 billion parameters, the weights it uses to capture interactions. Let's start with just a handful.

Forward Propagation

Now let's understand the magic!


The forward-propagation algorithm passes the input data through the network, layer by layer, to predict the output.

Each connection into a neuron has a real value linked to it, which tells how important that input is for predicting the output. These values are the weights. The weights and the values of one layer together determine the values of the next layer.

Output calculation algorithm:

    for each connection into the node:
        node_value += input * weight
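The loop above can be sketched as a small Python function. `node_value` is a hypothetical helper name, and the example numbers are picked only for illustration.

```python
import numpy as np

def node_value(inputs, weights):
    """Weighted sum of a node's inputs, one weight per incoming connection."""
    total = 0.0
    for x, w in zip(inputs, weights):
        total += x * w
    return total

# Two inputs (2 and 3), each connected to the node with weight 1.
value = node_value([2, 3], [1, 1])    # 2*1 + 3*1

# The same weighted sum as a single vectorized dot product.
same_value = np.dot([2, 3], [1, 1])

print(value, same_value)
```

The explicit loop and the dot product compute the same thing; the vectorized form is simply what you would normally write with NumPy.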

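Python code for the same calculation, for a small network with two inputs, two hidden nodes and one output. Each hidden node's value is also passed through the tanh function before it is sent on to the output: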

    import numpy as np

    # Two input features
    input_data = np.array([2, 3])

    # One weight per connection into each node
    weights = {'node_0': np.array([1, 1]),
               'node_1': np.array([-1, 1]),
               'output': np.array([2, -1])}

    # Hidden layer: weighted sum of the inputs, then the tanh function
    node_0_value = (input_data * weights['node_0']).sum()
    node_0_output = np.tanh(node_0_value)

    node_1_value = (input_data * weights['node_1']).sum()
    node_1_output = np.tanh(node_1_value)

    hidden_layer_value = np.array([node_0_output, node_1_output])

    # Output node: weighted sum of the hidden-layer outputs
    output = (hidden_layer_value * weights['output']).sum()
    print(output)
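If you stack the weights of the hidden nodes into a matrix, the same forward pass collapses into two matrix products. This is only a sketch under the same assumptions as the code above (one hidden layer, tanh on the hidden nodes, no activation on the output); `forward`, `hidden_weights` and `output_weights` are names I made up for it.

```python
import numpy as np

def forward(input_data, hidden_weights, output_weights):
    """One forward pass through a single tanh hidden layer."""
    # Each row of hidden_weights holds the weights into one hidden node,
    # so the matrix product gives one weighted sum per hidden node.
    hidden_values = hidden_weights @ input_data
    hidden_outputs = np.tanh(hidden_values)
    # Output node: weighted sum of the hidden-layer outputs.
    return output_weights @ hidden_outputs

input_data = np.array([2, 3])
hidden_weights = np.array([[1, 1],     # weights into node_0
                           [-1, 1]])   # weights into node_1
output_weights = np.array([2, -1])

prediction = forward(input_data, hidden_weights, output_weights)
print(prediction)  # roughly 1.2382
```

Writing it this way means adding more hidden nodes is just adding more rows to `hidden_weights`, with no new lines of code per node.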
