Intro

Browser capabilities such as SharedArrayBuffer and WebAssembly have been expanding recently, and the video converter FFmpeg now runs in browsers as well.

So, as an experiment, I tried building video editing software that runs in the browser.

You can try the demo here:

https://vega.toshusai.net/

Source code:

https://github.com/toshusai/vega

Basic Idea

The editor works with the following basic materials:

- images

- video

- audio

- text

To display these materials on the screen, each one is wrapped in a container called a strip, which holds information such as playback position, length, and screen position.

Each strip is then converted into the final video in a way that suits its material.
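As a rough illustration of the strip idea, here is a minimal sketch. The type and field names (`Strip`, `kind`, `start`, `length`, `x`, `y`) and the helper `activeStrips` are hypothetical and are not taken from the Vega source.

```typescript
// Hypothetical shapes for illustration only; not the actual Vega data model.
type StripKind = "image" | "video" | "audio" | "text";

interface Strip {
  kind: StripKind;
  start: number;  // where the strip begins on the timeline, in seconds
  length: number; // how long the strip plays, in seconds
  x: number;      // screen position (unused for audio strips)
  y: number;
}

// Returns the strips that are playing at timeline position `t` (seconds).
// The start is inclusive and the end is exclusive.
function activeStrips(strips: Strip[], t: number): Strip[] {
  return strips.filter((s) => t >= s.start && t < s.start + s.length);
}
```

With a list like this, a renderer can ask which strips are active for each frame and draw or mix only those.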

FFmpeg accepts video, image sequences, or audio as input.

So if you want to add text, you have to generate an image that contains the text.

Video

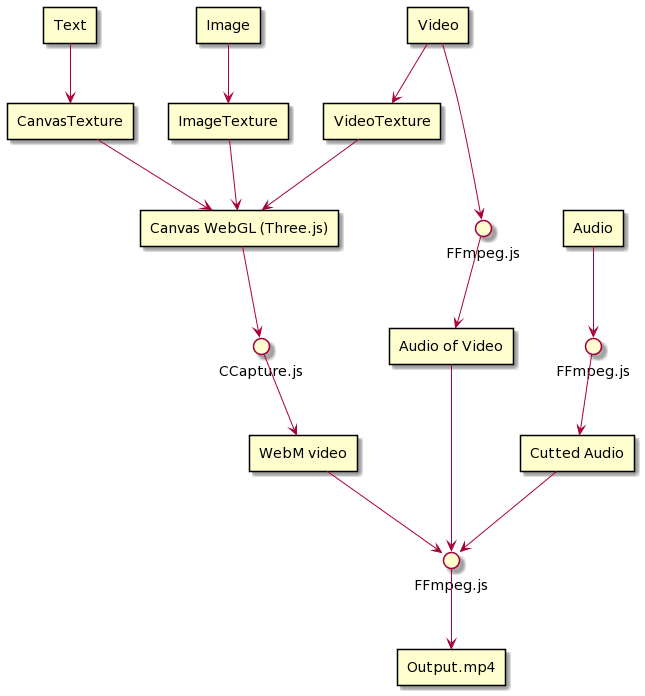

So, all images and videos are first converted into an image sequence and then passed to FFmpeg. I used three.js as the renderer: three.js can handle an image as a Texture and a video as a VideoTexture.

The image capture step uses ccapture to ensure that every playback frame is converted to an image. All the frame images are then turned into a WebM video.
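Capturing at a fixed frame rate means each frame is rendered at a known timeline position rather than at wall-clock time, so no frame is skipped even if rendering is slow. A minimal sketch of that idea follows; `frameTimes` is a hypothetical helper, not part of the ccapture API, and not necessarily how Vega does it.

```typescript
// Hypothetical helper: lists the timeline position (in seconds) of every
// frame to render for a clip of `durationSec` seconds at `fps` frames per second.
function frameTimes(durationSec: number, fps: number): number[] {
  if (fps <= 0 || durationSec < 0) {
    throw new Error("fps must be positive and duration must not be negative");
  }
  // Round to avoid off-by-one counts caused by floating-point error.
  const frameCount = Math.round(durationSec * fps);
  const times: number[] = [];
  for (let i = 0; i < frameCount; i++) {
    times.push(i / fps);
  }
  return times;
}
```

Each returned time would be used to seek the scene, render it once, and hand the canvas to the capture step.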

Audio

Next, the image sequence is merged with the audio. This is the FFmpeg part.

For a video strip, a specific range of audio is extracted from the video.

For an audio strip, the audio is cut to the required range.
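One common way to cut a range of audio with FFmpeg is to seek with `-ss`, limit the duration with `-t`, and drop the video stream with `-vn`. The sketch below builds such an argument list; the helper name `cutAudioArgs` and the file names are hypothetical, and the post does not say which options Vega actually uses.

```typescript
// Hypothetical helper: builds FFmpeg arguments that extract `durationSec`
// seconds of audio starting at `startSec` from `input` and write it to `output`.
function cutAudioArgs(
  input: string,
  startSec: number,
  durationSec: number,
  output: string
): string[] {
  return [
    "-ss", String(startSec),   // seek to the start of the range
    "-t", String(durationSec), // keep only this many seconds
    "-i", input,
    "-vn",                     // ignore any video stream in the input
    output,
  ];
}
```

The same arguments work for both cases above, since a video file and an audio file are cut the same way once the video stream is ignored.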

Finally, filter_complex is used to combine all the audio, with each clip delayed to its strip's start time.

-i _vega_video.webm -i video.mp3 -i audio.mp4

-filter_complex [2:a]adelay=5715|5715[out2];[1:a]adelay=260|260[out1];[out1][out2]amix=inputs=2[out]

-map [out]:a
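The filter string above follows a regular pattern: one `adelay` per audio input (the delay is in milliseconds, repeated once per channel), followed by a single `amix` over all delayed streams. A minimal sketch that generates it is shown below; `AudioClip` and `buildAudioMixFilter` are hypothetical names, not taken from the Vega source.

```typescript
// Hypothetical description of one audio source passed to FFmpeg.
interface AudioClip {
  inputIndex: number; // position of the file among the -i inputs
  delayMs: number;    // strip start on the timeline, in milliseconds
}

// Builds a filter_complex string that delays each clip and mixes them all.
function buildAudioMixFilter(clips: AudioClip[]): string {
  if (clips.length === 0) {
    throw new Error("at least one audio clip is required");
  }
  // One adelay per clip; the value is given twice, once per stereo channel.
  const delayed = clips.map(
    (c) => `[${c.inputIndex}:a]adelay=${c.delayMs}|${c.delayMs}[out${c.inputIndex}]`
  );
  // Feed the delayed streams to amix, ordered by input index.
  const labels = [...clips]
    .sort((a, b) => a.inputIndex - b.inputIndex)
    .map((c) => `[out${c.inputIndex}]`)
    .join("");
  return `${delayed.join(";")};${labels}amix=inputs=${clips.length}[out]`;
}
```

Calling it with input 2 delayed by 5715 ms and input 1 delayed by 260 ms reproduces the filter string shown above.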

This is the overall flow diagram.

Thanks to Open Source Software and Libraries

- JavaScript Framework

- Video/Audio Converter

- Capture canvas

- 3D library (as Renderer)

- Audio visualization

- Monospace font

- Icons

- Design System

- Documentation

- Test Asset

  - Audio: ondoku3

  - Video: Blender Foundation

  - Image: Kenney

I also created a set of UI components to use for this. If you are interested, check it out too.

https://github.com/toshusai/spectrum-vue
