“I'm going to Vegas to spend more time in the mountains.” Said no one ever unless you're a rock climber.

A Climber's guide to building recommendation engine in Python

Part I - Introduction

Recommendation engines or recommendation systems are everywhere you turn, from music, movie and product recommendations, to what kind of current events and news shown to us.

Would it be cool if rock climbers ourselves can build a climb recommendation engine to discover similar and interesting climbs?

After reading this tutorial you can build one of such engine yourself in Python.

Note: Jupyter notebook for this tutorial is available on Github.

Before we start, let’s review four common ways to build a recommendation engine:

- Popularity-based recommenders: The simplest of all. You make a list of items based on some criteria such as video view count or song play count. Twitter trending topics or Reddit comments are good examples of this approach.

- Content-based recommenders: This type of system takes one item as an input and suggests similar items based on a set of characteristics. For example, given a movie, the recommendation engine finds similar movies based on genre, directors and actors.

-

Collaborative-filtering recommenders: You may have seen “People who did X also did Y” suggestions when browsing Amazon. Based on past behaviors or preferences of other users, the engine can predict similar choices.

- Hybrid recommenders: Lastly, there are no reasons why we can’t combine two or more of the above recommenders. In fact, hybrid recommenders are commonly used in real-world applications as research has shown they can produce more accurate suggestions. Spotify’s New music recommendations is one good example of this approach. For example, first we take the list of this month’s popular songs (popularity-based recommender) and suggest only songs that are similar by genre and artists (content-based recommender).

In this tutorial we are going to build a Collaborative-filtering recommendation engine.

About The Dataset

The dataset contains 84,786 ratings from 7,950 users on 3,161 climbs in Nevada, USA where the well-known Red Rock Canyon climbing area is located. The reason Nevada was chosen was simply because I have climbed there for several seasons.

User ratings were extracted from MountainProject.com with user IDs anonymized.

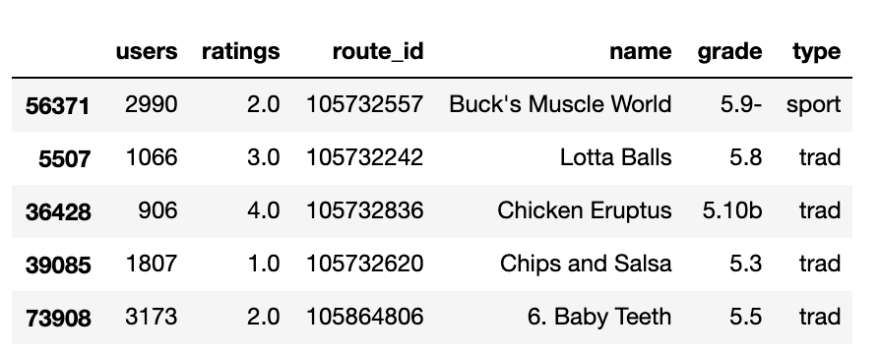

Let’s load our data into a Pandas dataframe.

import pandas as pd

df = pd.read_csv("./openbeta-ratings-nevada.zip", compression="zip")

df.sample(5)

Popular Climbs

One basic metric we can look at is how climbs are ranked by popularity by counting the number of ratings they received.

# aggregate climbs and count number of ratings

df.groupby(['route_id','name'])['ratings'] .count()

.reset_index(name="count")

Sort the result by count and only show the top 20.

popular = df.groupby(['route_id','name'])['ratings'] .count()

.reset_index(name="count")

.sort_values(by=['count'], ascending=False)

popular.head(20)

There we have it! A list of popular climbs in Red Rocks. If you have visited the area before, you would definitely recognize those names.

Understand Collaborative Filtering

In Collaborative Filtering we find “like-minded” climbers based on ratings or preferences they have given to climbing routes.

Let’s consider two multi-pitch climbs, The Nightcrawler and The Dragon that received 4-star ratings from climber Tamara. If you have also given high ratings to them, we can say two climbers have high similarity score. For the sake of this example, if Tamara gives another climb 3 or 4 stars, chances are, you will also like the climb and vice versa.

This is the principle idea behind Collaborative filtering! Intuitive and simple, right? In fact, you can calculate this similarity score by hand or with the help of a Python library. The higher the number, the more “like-minded” the two climbers.

Believe it or not, the table above can be represented in Python as a 2-D array (matrix). We’ll use Cosine similarity, a common and simple method to calculate similarity.

# Be sure to install sklearn library with pip or pipenv

import sklearn.metrics.pairwise as pw

tamara = [[4,4]]

you = [[3,4]]

pw.cosine_similarity(tamara, you)

# Result = [[0.98994949]]

We can also calculate cosine similarity for more than two routes. Let’s say Tamara disliked Cat in the Hat and gave it 1-star.

import sklearn.metrics.pairwise as pw

tamara = [[4,4,1]]

you = [[3,4,3]]

pw.cosine_similarity(tamara, you)

# Result = [[0.94947403]]

Cosine similarity drops from 0.98994949 to 0.94947403.

Now you see, we can extend the above logic to take into account other thousand climbs and user ratings. The math will, of course, get complicated as the size of the matrix increases. Take a deep breath as if you’re 1 meter above the last bolt! We are going to use Surprise, a Python machine learning library, to help us build our recommendation engine.

Cosine similarity is just one of many ways to find similar items. Surprise recommendation library supports:

- Cosine similarity

- Pearson’s correlation coefficients

- Mean Square Difference

Part II - Building Recommendation Engine

1. Load the data file

This step is a repeat of previous section for continuity.

import pandas as pd, numpy as np

df = pd.read_csv("./openbeta-ratings-nevada.zip", compression="zip") df.sample(5)

2. Create a prediction model

In this example, we use K-Nearest Neighbors algorithm to build our prediction model. Essentially, the code does cosine similarity calculation like what we’ve just done previously, but it’s working hard on the dataset and also ranking climbs by similarity scores.

from surprise import Dataset

from surprise import Reader

from surprise import accuracy

from surprise import KNNBasic

from surprise.model_selection import train_test_split

from surprise.model_selection import KFold

reader = Reader(rating_scale=(0, 4))

data = Dataset.load_from_df(df[['users', 'route_id', 'ratings']], reader)

sim_options = {'name': 'cosine', 'user_based': True, 'min_support': 4}

algo = KNNBasic(sim_options=sim_options)

kf = KFold(n_splits=5)

for trainset, testset in kf.split(data):

# train and test algorithm

algo.fit(trainset)

predictions = algo.test(testset)

# compute and print Root Mean Squared Error

accuracy.rmse(predictions, verbose=True)

Output:

Computing the cosine similarity matrix...

Done computing similarity matrix. RMSE: 0.7172

Computing the cosine similarity matrix...

Done computing similarity matrix. RMSE: 0.7057

...

Understanding Train Set and Test Set

You may be wondering about RMSE values (root-mean-square-errors) and why there is a For loop and the need to split the dataset into train set and test set?

Consider our previous rating example. In a perfect world, the recommendation engine can predict with high accuracy if Tamara gives another route a high rating, you will also like that route.

In order to measure prediction accuracy, the algorithm splits the dataset into multiple smaller sets in which it “pretends” it doesn’t know some of ratings climbers have given, and compare actual ratings vs predicted values. RSME is the measure of the deviation.

Train set: a subset of the dataset used to calculate similarity and perform prediction or “train” the prediction model.

Test set: a subset of the dataset where you apply the prediction model from the train set and test prediction accuracy.

Examples:

Test set 1 — Compare 3 (actual) with predicted value.

Test set 2 — Compare 4 (actual) with predicted value.

3. Make Recommendations

It’s time to answer the great question, climbers who liked Epinephrine also liked …

climb_name = "Epinephrine"

# look up route_id from human-readable name

route_id = df[df.name==climb_name]['route_id'].iloc[1]

print("People who climbed '{}' also climbed".format(climb_name))

# get similar climbs

prediction = algo.get_neighbors( trainset.to_inner_iid(route_id), 50)

print(prediction)

Output:

People who climbed 'Epinephrine' also climbed [263, 506, 238, 75, 8, 511, 1024, 233, 173, 418, 550, 1050, 478, 2, 379, 596, 1491, 221, 730, 261, 30, 410, 109, 313, 264, 148, 659, 68, 223, 1131, 1283, 428, 272, 354, 496, 143, 737, 1152, 835, 17, 356, 368, 545, 89, 23, 74, 281, 480, 509, 278]

Domain Id vs Surprise Internal Id

For efficiency, the prediction algorithm converts our dataset into another data structure (most likely into some sort of matrix), and work with the data by their internal IDs.

Surprise library provides two helper functions to convert one to another.

-

trainset.to_inner_iid(route_i)— Convert domain-specific Id to internal Id. -

trainset.to_raw_iid(id)— Convert internal Id back to domain-specific Id.

Convert the list of recommended climbs to human-readable names:

# convert Surprise internal Id to MP Id

recs = map( lambda id: trainset.to_raw_iid(id), np.asarray(pred))

results = df[df.route_id.isin(recs)]

r = results.pivot_table( index=['name', 'route_id', 'type', 'grade'], aggfunc=[np.mean, np.median, np.size], values='ratings')

print(r)

Off Belay

That’s it. We have just built a simple climb recommendation engine for Red Rock Canyon with the help of Python Surprise lib. You can further fine-tune the engine by suggesting climbs by difficulty and type (trad vs sport). That’s a topic for a future article. Have fun and be safe!

If you like this tutorial and want to see more like this, make sure to give it a ♥ Thanks!

Jupyter notebook for this tutorial is available on Github.

Top comments (0)