Since computer memory is limited, you cannot store numbers with infinite precision, whether you use binary fractions or decimal ones: at some point you have to cut the number off. But how much accuracy is needed? And where is it needed? How many integer digits and how many fraction digits?

When you write a program that works with floating-point numbers and makes decisions based on their values, you have to care about losing precision. Losing precision is like losing your mind, and your decision-making logic along with it.

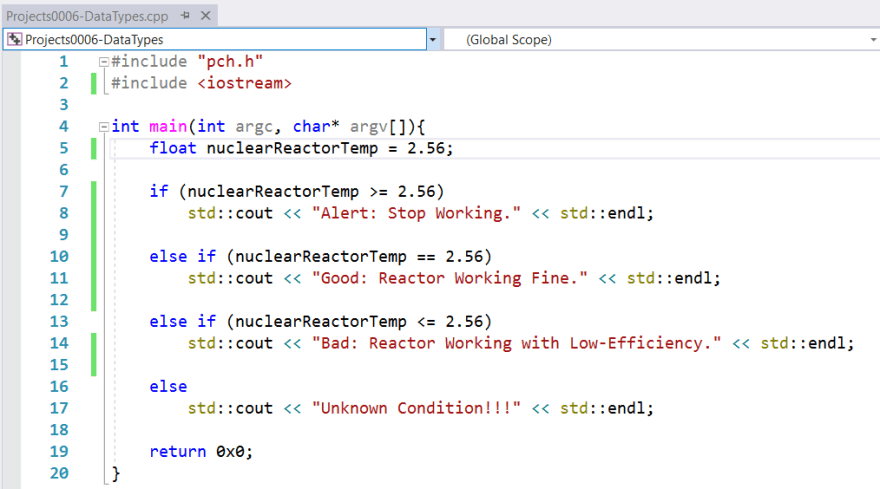

For example, consider the following C++ code. I wrote it on the assumption that if the reactor temperature reaches a value of around 2.56, the program will stop its activity and print a message to stdout: Alert: Stop Working.

But when I compiled and ran the code, it showed the following result in the console. It is not the result I expected to see, so what is going on?

To find out, I set a breakpoint on the nuclearReactorTemp variable and watched its value. Surprisingly, although I set it to 2.56, the debugger shows that it has lost precision and holds the following value:

As suspected, the floating-point variable lost precision: 2.56 has no exact binary representation, so the stored value is only the nearest one the type can hold. The program logic then failed completely, which would be catastrophic in safety-critical software. To correct the program, we have to increase the precision of the variable. If we rewrite the program like the following sample, it will work as expected.

Yep. This simple example shows that if we don't take care with floating-point numbers, the logic of our program can fail along the way and make catastrophic decisions.

Email: m.kahsari@gmail.com

Twitter: m.kahsari

Top comments (1)

Line 10 in your program will never be reached.