This is a submission for the Built with Google Gemini: Writing Challenge

From Hackathon Hustle to Gemini Glory: My SyncroFlow Journey

The Spark: Ant Media Hackathon and a Vision for AI

It all started with the adrenaline-fueled chaos of the Ant Media Hackathon. The air buzzed with caffeine, code, and the collective ambition of developers eager to build something impactful. My goal? To create a visual logic engine that could transform complex AI video pipelines into intuitive, drag-and-drop nodes. This vision became SyncroFlow, and at its heart, powering the intelligence, was Google Gemini.

GitHub repository: FelixMatrixar/SyncroFlow


Your Camera Copilot AI

SyncroFlow

Demo Video

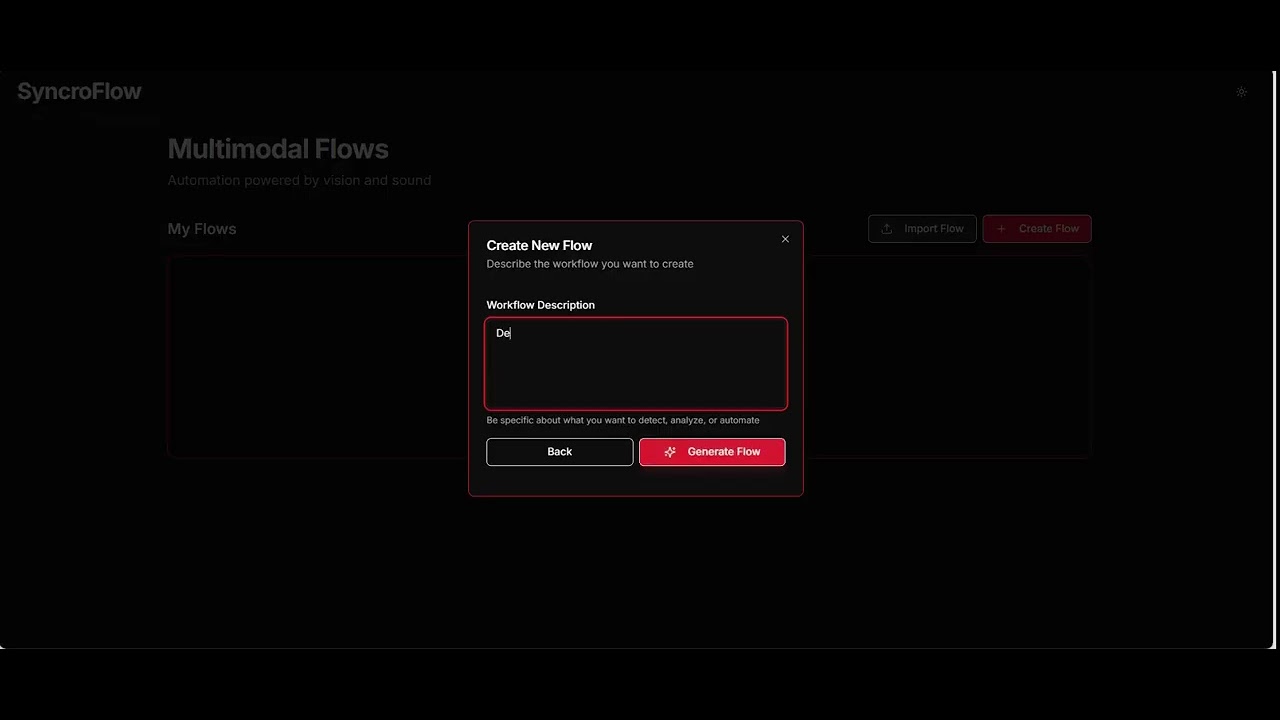

A visual logic engine for real-time video intelligence that transforms complex AI video pipelines into simple, drag-and-drop nodes using ultra-low latency WebRTC streaming.

Overview

SyncroFlow enables users to build custom computer vision and AI monitoring tools in seconds without requiring weeks of development. It combines drag-and-drop visual programming with AI-powered video analysis capabilities.


Core Features

- Drag-and-Drop Editor: React Flow-based visual interface for intuitive AI pipeline creation

- Ultra-Low Latency Video: Sub-500ms WebRTC streaming via Ant Media Server integration

- Temporal AI Monitoring: Rolling frame buffers for action understanding over time

- Multi-Source Inputs: Support for webcams, MP4 files, screen shares, and RTMP streams

- No-Code Automation: Trigger real-world events based on AI analysis conditions
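The temporal-monitoring and automation features above can be illustrated with a small sketch. This is not SyncroFlow's actual code. The names `RollingFrameBuffer`, `is_yes`, and `maybe_trigger` are hypothetical. The sketch keeps the last N frames in a fixed-size buffer so a model can reason about change over time, then fires an action when the model answers "yes" to a condition such as "is the boat stationary now?".

```python
from collections import deque
from typing import Any, Callable, Deque, List, Tuple


class RollingFrameBuffer:
    """Hypothetical helper: keeps only the most recent `max_frames` (timestamp, frame) pairs."""

    def __init__(self, max_frames: int = 8) -> None:
        # deque(maxlen=...) silently drops the oldest entry once it is full.
        self._frames: Deque[Tuple[float, Any]] = deque(maxlen=max_frames)

    def push(self, timestamp: float, frame: Any) -> None:
        self._frames.append((timestamp, frame))

    def snapshot(self, step: int = 1) -> List[Tuple[float, Any]]:
        """Return every `step`-th buffered frame, oldest first (e.g. to send to a model)."""
        return list(self._frames)[::step]


def is_yes(answer: str) -> bool:
    """Treat a model reply as affirmative only if its first word is "yes"."""
    words = answer.strip().split(maxsplit=1)
    return bool(words) and words[0].strip(".,!").lower() == "yes"


def maybe_trigger(answer: str, action: Callable[[str], None]) -> bool:
    """Run `action` (e.g. a webhook call) when the model answers yes; return whether it fired."""
    if is_yes(answer):
        action(answer)
        return True
    return False


# Usage: strings stand in for decoded video frames.
buf = RollingFrameBuffer(max_frames=4)
for t in range(10):
    buf.push(t * 0.5, f"frame-{t}")
sampled = buf.snapshot(step=2)
print([ts for ts, _ in sampled])  # [3.0, 4.0]

fired: List[str] = []
maybe_trigger("Yes, the boat is stationary now.", fired.append)
maybe_trigger("No, it is still moving.", fired.append)
print(fired)  # ['Yes, the boat is stationary now.']
```

The sketch asks the model for a yes/no answer instead of matching keywords in free text. That keeps the trigger condition simple and avoids false matches such as "not stationary".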

Technology Stack

- Frontend: React 18, Vite, React Flow, Tailwind CSS

- Backend API: Node.js / Express

- AI/Vision Backend: Python, FastAPI, YOLOv8

- Video Infrastructure: Ant Media Server, WebRTC, RTMP

- AI…

Remarkably, the very spark for SyncroFlow was ignited by Google Gemini itself. It wasn't just a tool I used to build; it was the partner that helped me think of the solution in the first place. This "full circle" moment, where Gemini helped me identify the problem and then provided the means to solve it, was a powerful testament to its potential as a creative and analytical partner.

SyncroFlow: Your Camera Copilot AI

SyncroFlow is a real-time video intelligence platform designed to empower users to build custom computer vision and AI monitoring tools in seconds, without needing weeks of development. Imagine a drag-and-drop interface where you can connect nodes to detect objects, analyze human poses, and even transcribe audio, all streaming with ultra-low latency via WebRTC.
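Conceptually, a drag-and-drop graph like this is a directed acyclic graph (DAG) of nodes, evaluated in dependency order. The following is a minimal sketch of that idea, not SyncroFlow's real engine. The `Node` class and the `run_pipeline` function are hypothetical, and simple lambdas stand in for real camera, YOLOv8, and alert nodes.

```python
from dataclasses import dataclass, field
from graphlib import TopologicalSorter
from typing import Any, Callable, Dict, List


@dataclass
class Node:
    """Hypothetical pipeline node: a function plus the names of its upstream nodes."""
    name: str
    fn: Callable[..., Any]
    inputs: List[str] = field(default_factory=list)


def run_pipeline(nodes: List[Node], source_value: Any) -> Dict[str, Any]:
    """Evaluate nodes in dependency order and return every node's output by name."""
    by_name = {n.name: n for n in nodes}
    for n in nodes:
        for dep in n.inputs:
            if dep not in by_name:
                raise ValueError(f"node {n.name!r} depends on unknown node {dep!r}")
    # graphlib expects {node: set of predecessors}; a cycle raises graphlib.CycleError.
    graph = {n.name: set(n.inputs) for n in nodes}
    results: Dict[str, Any] = {}
    for name in TopologicalSorter(graph).static_order():
        node = by_name[name]
        if node.inputs:
            results[name] = node.fn(*(results[dep] for dep in node.inputs))
        else:
            # Source nodes (e.g. a camera) receive the raw input.
            results[name] = node.fn(source_value)
    return results


# Usage: nodes are listed out of order on purpose; the sorter fixes the order.
pipeline = [
    Node("alert", lambda people: len(people) > 0, ["detect"]),
    Node("detect", lambda labels: [label for label in labels if label == "person"], ["camera"]),
    Node("camera", lambda frame: frame),
]
out = run_pipeline(pipeline, ["person", "boat", "person"])
print(out["detect"], out["alert"])  # ['person', 'person'] True
```

Keeping every node's output in `results` makes it easy for a UI to show intermediate values on each node, which suits a visual editor.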

The Role of Google Gemini

Google Gemini, accessed through the OpenRouter API, was the brain behind SyncroFlow's analytical capabilities. Specifically, I leveraged google/gemini-2.5-flash for both visual and audio analysis. This allowed SyncroFlow to:

- Perform AI analysis on detected objects and scenes: Users could write a prompt telling Gemini what to analyze in a detected object or scene, optionally including motion context (e.g., "is the boat stationary now?").

- Transcribe real-time audio: For audio-based flows, Gemini powered real-time microphone speech-to-text, enabling voice-activated triggers and transcription for meeting notes or voice memos.
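OpenRouter exposes an OpenAI-compatible chat-completions endpoint, so a request to google/gemini-2.5-flash can carry a text prompt plus base64-encoded frames. The following is a hedged sketch of such payloads, not SyncroFlow's actual code. `build_vision_request`, `build_audio_request`, `send`, and the `OPENROUTER_API_KEY` environment variable name are assumptions. The `input_audio` content-part shape is also an assumption and should be checked against OpenRouter's current documentation.

```python
import base64
import json
import os
import urllib.request
from typing import Any, Dict, List

OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions"
MODEL = "google/gemini-2.5-flash"


def build_vision_request(prompt: str, jpeg_frames: List[bytes], motion_context: str = "") -> Dict[str, Any]:
    """Build a chat payload with a text prompt followed by JPEG frames as data URLs."""
    text = f"{prompt}\nMotion context: {motion_context}" if motion_context else prompt
    content: List[Dict[str, Any]] = [{"type": "text", "text": text}]
    for frame in jpeg_frames:
        b64 = base64.b64encode(frame).decode("ascii")
        content.append({"type": "image_url", "image_url": {"url": f"data:image/jpeg;base64,{b64}"}})
    return {"model": MODEL, "messages": [{"role": "user", "content": content}]}


def build_audio_request(prompt: str, wav_bytes: bytes) -> Dict[str, Any]:
    """Build a chat payload asking the model to transcribe a WAV clip (assumed audio format)."""
    b64 = base64.b64encode(wav_bytes).decode("ascii")
    content = [
        {"type": "text", "text": prompt},
        # Assumed content-part shape for audio input; verify against OpenRouter's docs.
        {"type": "input_audio", "input_audio": {"data": b64, "format": "wav"}},
    ]
    return {"model": MODEL, "messages": [{"role": "user", "content": content}]}


def send(payload: Dict[str, Any]) -> str:
    """POST a payload to OpenRouter and return the first reply's text (network call)."""
    req = urllib.request.Request(
        OPENROUTER_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {os.environ['OPENROUTER_API_KEY']}",
            "Content-Type": "application/json",
        },
        method="POST",
    )
    with urllib.request.urlopen(req, timeout=30) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]
```

In use, `send(build_vision_request("Answer yes or no: is the boat stationary now?", frames, "boat moved 2px over the last 4 frames"))` would return the model's text reply. A downstream node could then turn that reply into a trigger.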

This integration was crucial. It meant that SyncroFlow wasn't just about detecting things... it was about understanding and interpreting them, bringing a new layer of intelligence to real-time video streams.

Beyond the runtime integration, a significant portion of SyncroFlow's codebase, from the frontend and backend logic to the intricate AI integration, was generated with the assistance of Gemini 3 Pro. It felt like having an incredibly powerful co-pilot, accelerating development and letting me focus on the higher-level architecture and new features. Gemini 3 Pro truly carried the project, demonstrating impressive full-stack code generation.

The Hackathon Rollercoaster: A Memorable Ride

Building SyncroFlow during the hackathon was an intense, exhilarating, and sometimes bewildering experience. There were moments of pure triumph and moments where I felt like Patrick Star trying to understand advanced calculus.

The "I'm Ready!" Phase

Initially, I was all enthusiasm, ready to conquer the world with code. The idea of building a visual AI pipeline editor felt revolutionary, and I was eager to dive in.

The Deep Dive into Code

As I delved into the intricacies of WebRTC, FastAPI, YOLOv8, and integrating the Gemini API, the complexity started to sink in. There were countless lines of code, configurations, and debugging sessions. It felt like navigating a maze, but every successful connection and every working feature was a small victory.

The "Feeling Lost in Life" Moments

Of course, no hackathon is complete without those moments of despair. Bugs that seemed impossible to fix, unexpected API errors, and the relentless ticking clock. There were times I stared at my screen, feeling utterly lost, wondering if I'd ever make it work.

The Breakthrough and the Final Push

But then, a breakthrough! A line of code clicked, an API call finally returned the expected data, and suddenly, SyncroFlow started to come alive. The final hours were a blur of frantic coding, testing, and polishing. The satisfaction of seeing the pieces come together was immense.

The Presentation: Nerves and the "Finalist" Hunger

Then came the presentation phase. The culmination of all the sleepless nights and intense coding, it was a moment filled with both anticipation and a healthy dose of anxiety. Standing before the judges, presenting SyncroFlow, felt like a true test of fire.

I'll be honest: I didn't take home the top prize. I ended up as a finalist, close to the top but not quite reaching the gold. In that moment, it stung. It was the "so close, yet so far" sensation that every builder knows all too well.

But looking back, that "loss" was actually my biggest win. It gave me the "finalist's hunger", the drive to keep refining, keep building, and keep pushing the boundaries of what's possible with AI. The real victory wasn't a trophy... it was the realization that I had built a sophisticated, real-time AI engine from scratch, powered by a tool that 7 out of 10 of my fellow finalists also chose for its incredible cost-effectiveness and performance.

What I Learned

The hackathon and the development of SyncroFlow were a steep learning curve. Technically, I deepened my understanding of:

- Real-time video streaming with WebRTC and Ant Media Server: Mastering the nuances of low-latency video infrastructure was critical.

- FastAPI and Python backend development: Building a robust and scalable API for AI inference.

- Integrating large language models (LLMs) for multimodal analysis: Understanding how to effectively use Gemini for both visual and audio data.

- Frontend development with React Flow: Creating an intuitive drag-and-drop interface for complex workflows.

Beyond the technical skills, I also honed my problem-solving abilities, learned to work efficiently under tight deadlines, and experienced the power of rapid prototyping.

My Google Gemini Feedback

Working with Google Gemini through the OpenRouter API was largely a positive experience. The google/gemini-2.5-flash model proved to be incredibly versatile for both visual and audio analysis tasks. Its ability to interpret prompts and provide relevant insights from diverse data types was impressive.

What worked well:

- Multimodal capabilities: The seamless integration of visual and audio processing within a single model was a game-changer for SyncroFlow, allowing for truly intelligent video analysis.

- Flexibility via OpenRouter: Accessing Gemini through OpenRouter provided flexibility in model selection and simplified API interactions.

- Speed and responsiveness: For real-time applications like SyncroFlow, the speed of Gemini's responses was crucial and generally met the demands.

- Gemini 3 Pro for code generation: The ability of Gemini 3 Pro to generate significant portions of the frontend, backend, and AI integration code was a massive accelerator. It allowed for rapid iteration and a focus on core innovation rather than boilerplate.

However, I need to report a major friction point. The safety filters are way too aggressive. I keep getting hit with "inappropriate" flags on entirely safe prompts. It is incredibly frustrating.

I know why Gemini must have these filters. They have to stop fraud, malicious use, and bad actors. I respect that boundary.

But the current setup is punishing developers. I am building SyncroFlow for real-time video intelligence. This is highly technical work. The filter completely lacks context and panics over legitimate visual data or node logic. It brings my development to a dead stop.

The filter needs to understand developer context, not just keyword triggers. Please add a dedicated "Flag as False Positive" button for API and web users. Let the system learn the difference between fraud and actual engineering.

Conclusion

Building SyncroFlow with Google Gemini at the Ant Media Hackathon was an unforgettable experience. It pushed my boundaries, expanded my technical horizons, and showcased the immense potential of AI in real-time applications. From the initial "I'm Ready!" enthusiasm to the triumphant feeling of being a finalist, every moment was a valuable lesson. The instrumental role of Gemini 3 Pro in generating the very fabric of the application was particularly impressive, truly demonstrating its power as a development partner. I'm excited to continue this journey, leveraging the power of Gemini to build even more innovative solutions and transform how we interact with visual and audio data.

Top comments (6)

Amazing project!

Thank You!

I am SO glad you brought up the Gemini safety filters. I have been hitting the exact same false positives. It is incredibly frustrating!

😭

Have you deployed that? Which are the winners among those finalists you shared?

No, I didn't manage to deploy it. At the time, I wasn't subscribed to a cloud platform that supported WebSockets 😂

Winners are:

1st AMS MCP Plugin

2nd StreamGuard AI: Intelligent Video Operations Platform

3rd Scribe 1.0

They all have implemented quite amazing ideas!

You can also watch the presentations here:

https://www.youtube.com/watch?v=jlReX_gzlZo&list=LL&index=1&t=3180s
