Dispelling myths around micro frontends. Originally published at Bits and Pieces.

Microfrontends are a "new" trend whose roots go back many years. With new techniques available and several old challenges solved, they are now slowly entering the mainstream. Unfortunately, many misconceptions persist, which makes it difficult for a lot of people to grasp what microfrontends are about.

In short, microfrontends are about getting some of the benefits of microservices into the frontend. There’s more to it, and one should not forget that microservices are no silver bullet, either.

Tip: To share React/Angular/Vue components between Micro Frontends or any other project, use tools like Bit. Bit lets you “harvest” components from any codebase and share them to a collection in bit.dev. It makes your components available for your team to use and develop in any repo. Use it to optimize collaboration, speed up development and keep a consistent UI.

Misconceptions

The reasons for choosing microfrontends could be summarized as well, but in this post I want to list the most common misconceptions I’ve heard in the past months. Let’s start with an obvious one.

1. Microfrontends Require JavaScript

Sure, many of the currently available microfrontend solutions are JavaScript frameworks. So how can this be wrong? JavaScript is not optional anymore. Everyone wants highly interactive experiences, and JS plays a crucial part in providing them.

Besides interactivity, fast loading times, accessibility, and other factors should be considered, too. Many JavaScript frameworks, therefore, support isomorphic rendering. In the end, this makes it possible not only to stitch on the client side but also to prepare everything on the server already. Depending on the required performance (i.e., initial time to first meaningful render), this option sounds lovely.

Keep in mind that isomorphic rendering comes with its own challenges.

However, even without isomorphic rendering of a JavaScript solution, we are in good shape here. If we want to build microfrontends without JavaScript we can certainly do so. Many patterns exist and a significant number of them do not require JavaScript at all.

Consider one of the "older" patterns: using <frameset>. I hear you laughing? Well, back in the day this already allowed some of the splitting that people try to do today (more on that below). One page (maybe rendered by another service?) was responsible for the menu, while another page was responsible for the header.

    <frameset cols="25%,*,25%">
      <frame src="menu.html">
      <frame src="content.html">
      <frame src="sidebar.html">
    </frameset>

Today we use the more flexible (and still actively supported) <iframe> elements. They provide some nice capabilities. Most importantly, they shield the different microfrontends from each other. Communication is still possible via postMessage.
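To make the postMessage part a bit more tangible, here is a minimal sketch of how a host page might filter incoming messages before reacting to them. The message shape (`MfMessage`), the helper names, and the example origin are assumptions made up for illustration; only `postMessage`, the `message` event, and `event.origin` are actual browser features.

```typescript
// Hypothetical message shape exchanged between a host page and an <iframe>.
interface MfMessage {
  type: string; // e.g. "cart:item-added" (name made up for illustration)
  payload?: unknown;
}

// Type guard: accept only objects that carry a string `type`.
function isMfMessage(data: unknown): data is MfMessage {
  return (
    typeof data === "object" &&
    data !== null &&
    typeof (data as { type?: unknown }).type === "string"
  );
}

// Decide whether a received message should be handled at all.
// `senderOrigin` corresponds to `event.origin` of a browser "message" event.
function acceptMessage(
  senderOrigin: string,
  data: unknown,
  trustedOrigins: readonly string[]
): MfMessage | undefined {
  if (trustedOrigins.indexOf(senderOrigin) === -1) return undefined;
  return isMfMessage(data) ? data : undefined;
}

// In a browser, the wiring would look roughly like this:
//   window.addEventListener("message", (e) => {
//     const msg = acceptMessage(e.origin, e.data, ["https://shop.example.com"]);
//     if (msg) { /* react to msg.type */ }
//   });
```

Checking the origin first keeps the isolation that makes <iframe> attractive: a frame can talk to the host, but only frames from known origins are listened to.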

2. Microfrontends Only Work Client-Side

After the JavaScript misconception, this is the next level. Sure, on the client-side there are multiple techniques to realize microfrontends, but actually, we don't even need any <iframe> or similar to get microfrontends working.

Microfrontends can be as simple as server-side includes. With advanced techniques such as edge-side includes, this becomes even more powerful. If we don't need to nest one microfrontend inside another, then even simple links work just fine. In the end, a microfrontend solution can also be as simple as tiny, separated server-side renderers. Each renderer may be as small as a single page.
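As a rough illustration of the server-side include idea, the following sketch replaces SSI-style directives in a page template with HTML fragments. In a real setup the web server or reverse proxy would fetch each fragment from the service that owns it; here the fragments are simply passed in, and the names `stitchPage` and `FragmentTable` are hypothetical.

```typescript
// Maps a fragment path to the HTML that a (hypothetical) team-owned renderer returned for it.
type FragmentTable = { [path: string]: string };

// Replace every SSI-style directive such as <!--#include virtual="/menu" -->
// with the matching fragment. Unknown paths become an empty string so that a
// missing fragment does not break the whole page.
function stitchPage(template: string, fragments: FragmentTable): string {
  const directive = /<!--#include\s+virtual="([^"]+)"\s*-->/g;
  return template.replace(directive, (_match: string, path: string) => {
    const html = fragments[path];
    return typeof html === "string" ? html : "";
  });
}

const pageTemplate =
  '<body><!--#include virtual="/menu" --><main><!--#include virtual="/content" --></main></body>';

const stitchedPage = stitchPage(pageTemplate, {
  "/menu": "<nav>Menu</nav>",
  "/content": "<h1>Products</h1>",
});
// stitchedPage === "<body><nav>Menu</nav><main><h1>Products</h1></main></body>"
```

No JavaScript reaches the browser in this scenario; the composition is finished before the response leaves the server.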

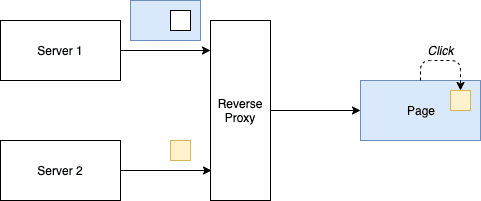

The following diagram illustrates more advanced stitching happening in a reverse proxy.

Sure, JavaScript may have several advantages, but the right choice still depends heavily on the problem you are trying to solve with microfrontends. Depending on your needs, a server-side solution may still be the best (or at least a better) option.

3. You Should Use Multiple Frameworks

In nearly every tutorial about microfrontends, the different parts are not only developed by different teams but also using different technologies. This is bogus.

Yes, using different technologies should be possible with a proper microfrontend approach. However, it should not be the goal. We also don't do microservices just to have a real patchwork (or should we say "mess") of technologies in our backend. If we use multiple technologies, then only because we gain a specific advantage.

Our goal should always be a certain unification. The best approach is to consider a green field: What would we do then? If the answer is "use a single framework" we are on the right track.

Now, there are multiple reasons why several frameworks may end up in your application in the long run. It may be due to legacy. It may be convenience. It may be a proof of concept. Whatever the reasons are: being able to handle this scenario is still nice, but it should never be the desired state in the first place.

No matter how efficient your microfrontend framework is, using multiple frameworks will always come at a cost that is not negligible. Not only will the initial rendering take longer, but the memory consumption will also go in the wrong direction. Convenience models (e.g., a pattern library for a certain framework) cannot be used. Further duplication will be necessary. In the end, the number of bugs and inconsistencies will grow, and the perceived responsiveness of the app will suffer.

4. You Split By Technical Components

In general, this does not make much sense. I have yet to see a microservice backend where the data handling is in one service and the API is in another. Usually, a service consists of multiple layers. While some technical concerns such as logging are certainly moved to a common service, in other cases techniques like a sidecar are used. Furthermore, common programming techniques are still expected within each service.

For microfrontends, this is the same. Why should one microfrontend only do the menu? Isn't a menu there for every microfrontend to be populated accordingly? The split should be done by business needs, not by a technical decision. If you've read a bit about domain-driven design you know that it's all about defining these domains - and that this definition has nothing to do with any technical demands.

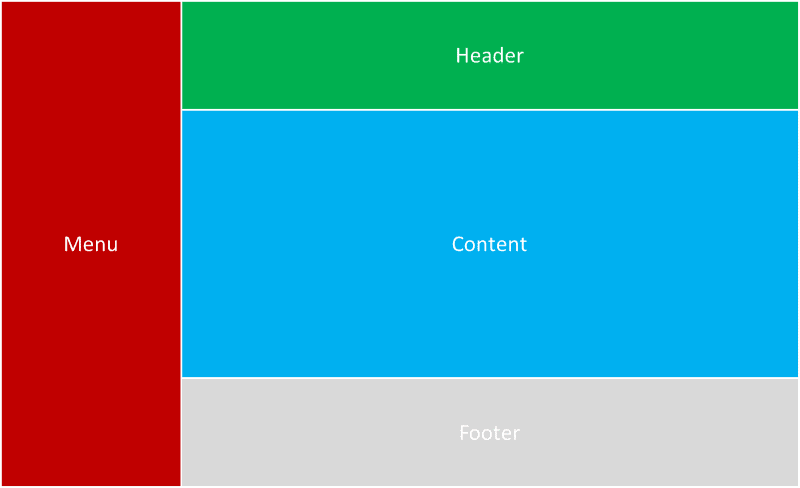

Consider the following split:

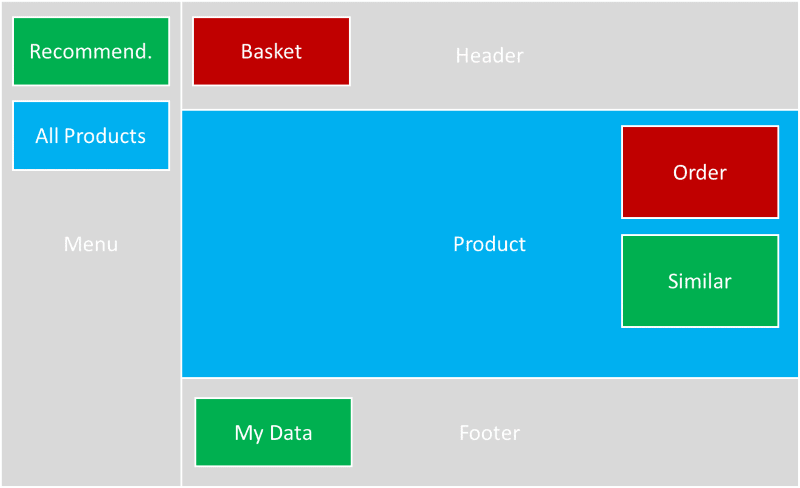

These are technical components. That has nothing to do with microfrontends. In a real microfrontends application the screen may rather look as follows:

Granted the stitching is much more complicated here, but this is what a sound microfrontends application should provide for you!

5. You Should Not Share Anything

Nope. You should share what makes sense to be shared. You should definitely not share everything (see the next point). But to achieve consistency you'll need to share at least a set of principles. Whether that happens via a shared library, a shared URL, or just a document that is used when building or designing the application does not matter.

For microservices this "share nothing" architecture looks like the following diagram.

In the browser this would lead to the use of <iframe>, as there is currently no other way to prevent leakage of resources. With Shadow DOM, CSS may be isolated, but the script level is still capable of touching everything.

Even if we wanted to follow the share-nothing architecture, we would be in trouble. Duplicating resources just to keep simple components alive would cripple the perceived performance.

Granted, the deeper the sharing is (e.g., using a shared library attached to the DOM via an app shell), the more problems can arise. On the other hand, the looser the sharing is (e.g., just a document specifying the basic design elements), the more inconsistencies will arise.

6. You Should Share Everything

Absolutely not. If this is the idea then a monolith makes more sense. Performance-wise this may already be a problem. What can we lazy load? Can we remove something? But the real problem is dependency management. Nothing can be updated because it could break something.

The beauty of shared parts is the consistency guarantee.

Now, if we share everything, we introduce complexity to gain consistency. But this consistency is not maintainable either, as the complexity will introduce bugs at every corner.

The origin of this issue lies in the "dependency hell". The diagram below illustrates it nicely.

In short, if everything depends on everything we have a dependency problem. Just updating a single box has an impact on the whole system. Consistent? Truly. Simple? Absolutely not.
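The impact of such an update can be estimated with a few lines of code. The sketch below is only an illustration under made-up assumptions: the module names and both dependency tables are invented, and `affectedBy` is a hypothetical helper that collects every module depending, directly or transitively, on the one that changed.

```typescript
// Hypothetical dependency table: each module lists the modules it depends on.
type DependencyTable = { [moduleName: string]: string[] };

// Return every module that is directly or transitively affected when `changed` is updated.
function affectedBy(changed: string, deps: DependencyTable): string[] {
  const affected: string[] = [];
  const queue: string[] = [changed];
  while (queue.length > 0) {
    const current = queue.shift() as string;
    for (const moduleName of Object.keys(deps)) {
      const dependsOnCurrent = deps[moduleName].indexOf(current) !== -1;
      if (dependsOnCurrent && moduleName !== changed && affected.indexOf(moduleName) === -1) {
        affected.push(moduleName);
        queue.push(moduleName);
      }
    }
  }
  return affected.sort();
}

// "Share everything": every module depends on every other module.
const tangled: DependencyTable = {
  cart: ["catalog", "checkout", "ui"],
  catalog: ["cart", "checkout", "ui"],
  checkout: ["cart", "catalog", "ui"],
  ui: ["cart", "catalog", "checkout"],
};

// Selective sharing: only the UI library is shared.
const selective: DependencyTable = {
  cart: ["ui"],
  catalog: ["ui"],
  checkout: ["ui"],
  ui: [],
};

// affectedBy("cart", tangled)   -> ["catalog", "checkout", "ui"]
// affectedBy("cart", selective) -> []
```

In the tangled table a change to any module touches all others, while in the selective table only a change to the shared `ui` library ripples through.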

7. Microfrontends Are Web Only

Why should they be? True, so far we have mostly touched on the web, but the concepts and ideas can be applied to any kind of application (mobile app, client app, ..., even a CLI tool). The way I see it, microfrontends are just a fancy new word for "plugin architecture". How the plugin interface is designed and what is required to run the application using the plugins is a different story.

The following diagram shows a quite generic plugin architecture. Credit goes to Omar Elgabry.

There is no notion of where this is running. It could run on a phone. It could run on Windows. It could run on a server.
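A plugin architecture of this kind can be sketched without referring to any runtime environment. The interfaces below (`AppPlugin`, `HostApi`) and the `PluginHost` class are hypothetical and deliberately tiny; they only show that the contract between host and plugins does not need to know whether it runs in a browser, on a phone, or in a CLI tool.

```typescript
// Hypothetical, deliberately tiny host API. Nothing here is tied to a browser.
interface HostApi {
  registerCommand(label: string, run: () => string): void;
}

// Hypothetical contract every plugin (microfrontend) must fulfil.
interface AppPlugin {
  id: string;
  // Called once by the host; the plugin registers what it contributes.
  setup(host: HostApi): void;
}

class PluginHost implements HostApi {
  private commands: { [label: string]: () => string } = {};

  registerCommand(label: string, run: () => string): void {
    this.commands[label] = run;
  }

  // Hand the host API to every plugin so that it can register itself.
  load(plugins: AppPlugin[]): void {
    for (const plugin of plugins) plugin.setup(this);
  }

  run(label: string): string {
    const command = this.commands[label];
    return command ? command() : `unknown command: ${label}`;
  }
}

const greetPlugin: AppPlugin = {
  id: "greet",
  setup: (host) => host.registerCommand("greet", () => "hello from a plugin"),
};

const cliHost = new PluginHost();
cliHost.load([greetPlugin]);
// cliHost.run("greet") returns "hello from a plugin"
```

The host only knows the contract, not the plugins themselves, which is the same relationship an app shell has to its microfrontends.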

8. Microfrontends Require Large Teams

Again, why? If the solution is super complex then I would certainly look for a simpler one. Some problems require complex solutions, but usually, a good solution is a simple one.

Depending on the scenario it may not even require a distributed team. Having distributed teams is one of the reasons why microfrontends make sense in the first place, but it is not the only reason. Another good reason is the granularity of features.

If you look at microfrontends from the business perspective then you'll see that having the ability to turn specific features on and off can be meaningful. For different markets, different microfrontends can be used. Even for something as simple as privilege levels this makes sense. There is no need to write code to turn certain things on or off depending on a certain condition. All this is left to a common layer and can just be activated or deactivated depending on (potentially dynamic) conditions.

This way, code that cannot (or should not) be used will also not be delivered. While this should not be the protection layer, it certainly is a convenience (and performance) layer. Users are not confused since all they see is what they can do. They don't see functionality they cannot use. That functionality is not even delivered, so no bytes are wasted on unusable code.
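Such a common layer could be sketched as follows. The registry format, the field names, and the `selectMicrofrontends` helper are assumptions for illustration only; an actual solution would typically evaluate these conditions on the server so that the deselected code is never sent to the client.

```typescript
// Hypothetical description of one microfrontend as known to the common layer.
interface MicrofrontendEntry {
  id: string;
  markets: string[]; // markets in which the feature is offered
  requiredRole?: string; // if set, only users holding this role receive it
}

// Hypothetical description of the current visitor.
interface Visitor {
  market: string;
  roles: string[];
}

// Select the microfrontends that should be delivered to this visitor.
function selectMicrofrontends(all: MicrofrontendEntry[], visitor: Visitor): string[] {
  return all
    .filter((mf) => mf.markets.indexOf(visitor.market) !== -1)
    .filter((mf) => mf.requiredRole === undefined || visitor.roles.indexOf(mf.requiredRole) !== -1)
    .map((mf) => mf.id);
}

const featureRegistry: MicrofrontendEntry[] = [
  { id: "catalog", markets: ["de", "us"] },
  { id: "loyalty", markets: ["us"] },
  { id: "admin", markets: ["de", "us"], requiredRole: "admin" },
];

// selectMicrofrontends(featureRegistry, { market: "de", roles: [] })
//   -> ["catalog"]
// selectMicrofrontends(featureRegistry, { market: "us", roles: ["admin"] })
//   -> ["catalog", "loyalty", "admin"]
```

None of the individual microfrontends contains a market or role check; the decision lives in one place and can change without touching their code.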

9. Microfrontends Cannot Be Debugged

I fear this is partially true, but it should not be and (spoiler!) does not have to be. With any kind of implementation (or underlying architecture, for the sake of argument) the development experience can be crippled. The only way to fight this is to be developer-first. The first rule in any implementation should be: make it possible to debug and develop. Embrace standard tooling.

Some microfrontend frameworks don't embrace this at all. Some require online connections, dedicated environments, multiple services, ... This should not be the norm. It is definitely not the norm.

10. Microservices Require Microfrontends (or Vice Versa)

While it's true that a decoupled, modular backend may be a good basis for also decoupling the frontend, one does not require the other. It is totally viable to have a monolithic backend that demands a modular frontend, e.g., to allow simplified personalization, potentially combined with authorization, permissions, and a marketplace.

In the same sense, indeed, a microservice backend does not justify applying a similar pattern to the frontend. Many microservice backends are operated by single-purpose applications that do not grow in features, but rather just change in appearance.

11. Microfrontends Require a Mono Repo

A couple of times I've already read that to create a microfrontends solution one needs to leverage a mono repo, preferably using a tool like Lerna. I am not convinced of that. Sure, a mono repo has some advantages, but it also comes with clear drawbacks.

While there are microfrontend frameworks that require a joint CI/CD build, most don't. A requirement for a joint CI/CD build usually leads to a mono repo, as it's just much simpler to set up correctly in the first place. But to me, this is the monolith repackaged. If you have a joint build in a mono repo then you can scratch two very important factors that made microfrontends interesting in the first place:

- Independent deployment

- Independent development

In any case, if you see a microfrontend solution that requires a mono repo: Run. A well-crafted monolith is potentially the better choice, as it avoids all the issues of distributed systems that would otherwise await you in the long run.

Conclusion

Microfrontends are still not for everyone. I do not believe that microfrontends are the future, but I'm also positive that they will play an important role in the future.

Where do you see microfrontends shine? Any comment or insight appreciated!
