The Statement 📜

A 2015 post on the Google Testing Blog about End-to-End testing still influences the way we approach software testing today.

In the article, there are points that I wholeheartedly agree with and points with which I disagree. This means nothing more than that professionals with different experiences and contexts can provide their unique take on things. That is how we grow as a community and as individuals.

Now that this is out of the way, let's go over some points from the article that, in my opinion, we need to re-evaluate based on the new normal.

The Google Testing Blog

For people who have not come across it yet, the Google Testing Blog is a hub with amazing resources on all kinds of topics around software and testing. Written by industry professionals and guest experts, the content there has benefited our craft and made people rethink how to approach software testing in more than one respect.

The Incident 👀

"Just Say No to More End-to-End Tests"

was the title of an article posted on the blog, and it really dealt a powerful blow to End-to-End testing enthusiasts back then.

From then on, people had an excuse to ditch honest efforts towards automation and to discourage initiatives whose only aim was to validate that the product works as a whole for the end user.

While this might not have been the original intent of the author, it is to be expected when such a powerful title is posted on a blog that "comes from Google".

I seriously hope I am the only one who has come across senior engineers or tech/team leads stating the following, as if it were an undeniable truth and the correct approach:

"Why do we need to do those 'End-to-End' tests when we don't have good unit test coverage first?" 😶

Certainly this can be the result of tons of other factors, many of them reasonable, but one thing is clear: we as a community of developers and testers are poorly educated on the topic of modern web automation testing.

For a recent data source, take a look at the open source survey done by Tricentis. We are missing education and training, which is why people cannot stand firm against absurd statements...

What Was Then, Is Not What Stands Now 👨‍🚀

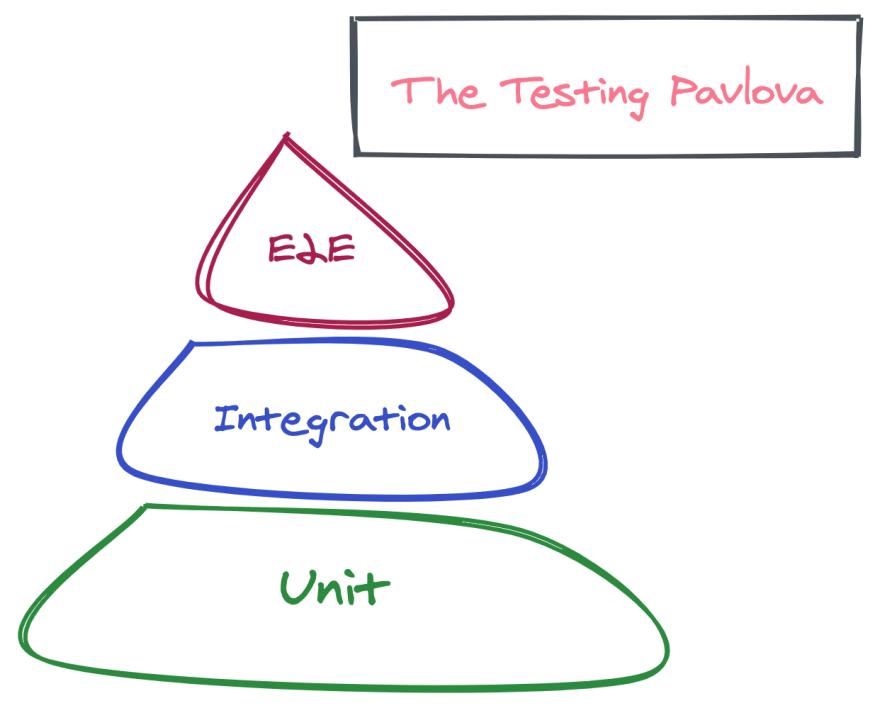

I will now be walking down into memory lane for most people. Right below we can see the staple "Testing Pyramid" as can also be observed in the said article and a presentation that went along with it:

This was absolutely true and the way to go back then, but you don't see people interested in Backbone.js now either, do you?

Slow to Orchestrate 🐢

Spinning up a new environment was indeed painstaking, and the capabilities of modern DevOps were a pipe dream. Now we have on-demand environments at amazing speeds, Serverless, Review Apps, Deployment Previews and tons more great tools.

Slow to Run 😴

Spawning a full browser had its difficulties, as it required specialized preparation to provide GUI capabilities. Now, with tools like Puppeteer, Playwright and Cypress, just to name a few, you can run End-to-End tests headless in any minimally equipped environment. Even Serverless!

Flaky 🎰

At the time, the Selenium architecture could not easily evaluate things like element ready state or network state. That ultimately resulted in explicit, fixed-duration wait() calls, where even a millisecond of extra delay could trigger an error for the whole test.

This also made scaling and parallelization less effective than they could be. The tools mentioned above sit closer to the browser platform and can automatically wait for the correct moment to trigger actions, without hacks or explicit waits.
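To make the difference concrete, here is a minimal sketch of the two waiting styles in plain TypeScript. It is not how Puppeteer, Playwright or Cypress implement waiting internally; `fixedSleepThenCheck`, `waitFor` and `readyAfter` are hypothetical helpers written only for this illustration, and the timeouts are arbitrary.

```typescript
// Hypothetical helpers for illustration; real tools handle waiting internally.

const sleep = (ms: number): Promise<void> =>
  new Promise((resolve) => setTimeout(resolve, ms));

// Old style: sleep for a guessed duration, then check exactly once.
async function fixedSleepThenCheck(
  condition: () => boolean,
  guessMs: number
): Promise<boolean> {
  await sleep(guessMs);
  return condition();
}

// Auto-wait style: poll the condition until it holds or a deadline passes.
async function waitFor(
  condition: () => boolean,
  timeoutMs = 1000,
  intervalMs = 10
): Promise<boolean> {
  const deadline = Date.now() + timeoutMs;
  while (Date.now() < deadline) {
    if (condition()) return true;
    await sleep(intervalMs);
  }
  return condition(); // one last check at the deadline
}

// Simulates an element that becomes ready `delayMs` after creation.
function readyAfter(delayMs: number): () => boolean {
  const readyAt = Date.now() + delayMs;
  return () => Date.now() >= readyAt;
}
```

If the guess is shorter than the time the element actually needs, the fixed sleep checks too early and fails, whereas the polling version keeps retrying and returns as soon as the condition holds, up to its timeout.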

Hard to Maintain* 🐛

Without wanting to dive deep into this point, I will just add a statement that goes around on the grapevine: at the time, the people hired for the role were not as technically strong, or lacked the training required to meet the expectations of the position.

*This was, and still is, true in situations where an application is in its infancy and is constantly experimenting with new experiences.

Let us jump to the present reality...🚀

Thinking Big is Required

Quoting the article:

Think Smaller, Not Larger

The idea that unit-level tests provide actual confidence for a frontend application moves further from the truth as time passes. Actual confidence is the thing that we are paid to create, and that is

"Working software for the end user".

Modern frontend applications have indisputably moved to being:

Heavy in business logic and state 🏋

Your typical product these days has shifted business logic to the frontend, where it is in one way or another intermingled with routing and tied to in-memory browser state. To translate the above statement into layman's terms: when you want to test your e-commerce cart in React, you have to mock/stub the state manager and the router just to get things going.

At least in my eyes, this is orders of magnitude faster than an end-to-end test for your cart. But by stubbing a shared state manager and a global router, how confident are you that this unit test proves the cart is working?
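The cart example can be sketched without any framework. Everything below is hypothetical and framework-agnostic: `CartStore`, `CartRouter`, `addToCart` and the `/cart` route are stand-ins invented for this illustration, not the API of React or of any real state manager or router.

```typescript
// Hypothetical shapes standing in for a state manager and a router.
interface CartStore {
  getItems(): string[];
  dispatch(action: { type: "cart/add"; sku: string }): void;
}
interface CartRouter {
  push(path: string): void;
}

// The unit under test: add an item, then send the user to the cart page.
function addToCart(store: CartStore, router: CartRouter, sku: string): void {
  store.dispatch({ type: "cart/add", sku });
  router.push("/cart");
}

// Hand-written stubs: they record calls instead of doing real work.
function makeStubs() {
  const dispatched: string[] = [];
  const visited: string[] = [];
  const store: CartStore = {
    getItems: () => dispatched,
    dispatch: (action) => {
      dispatched.push(action.sku);
    },
  };
  const router: CartRouter = {
    push: (path) => {
      visited.push(path);
    },
  };
  return { store, router, dispatched, visited };
}

// The "unit test": green as long as the stubs were called as expected.
function cartUnitTestPasses(): boolean {
  const { store, router, dispatched, visited } = makeStubs();
  addToCart(store, router, "sku-42");
  return dispatched[0] === "sku-42" && visited[0] === "/cart";
}
```

The check is fast and passes, but it only proves that `addToCart` talks to the stubs correctly. It never exercises the real store logic or whether a page is actually registered at `/cart`, which is exactly the gap an End-to-End test is meant to cover.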

Multi-dependent on distributed services 🤹

In one way or another, the shift towards distributed computing, in all its shapes, is bound to permeate the ways we approach software. The same thing is starting to happen in frontend applications.

Their functionality, validity and usefulness depend on external services, whether that is another team's microservice, an authentication API or Google Places Autocomplete. That is in many ways reflected in the interfaces of your application.

Think larger and more distributed. 🌐

What Happens Now for Frontend Engineers? 🤔

When you try to translate the above points into unit or integration tests, they can quickly become tightly coupled to multiple mocking techniques and to external APIs that are out of your control.

To avoid confusion, this is not an argument against them. But compared to a Puppeteer End-to-End test, they are conceptually not that far away in terms of the setup and mocking required. There are even techniques to provide whole environment mocks in minutes!

The suggestion here is to consider adding a couple of End-to-End tests to validate that a user flow works before throwing it out to QA. Just add Puppeteer or Playwright, throw in some lines of JavaScript that as a frontend engineer you are so familiar with, and you are good to go.

As an ecosystem, we have reached the level of maturity where this is easily possible, so please do 🙏
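As a rough idea of how little code such a user flow needs, here is a sketch in TypeScript. `PageLike` is a hypothetical, narrowed interface whose method names mirror Playwright's `Page` (`goto`, `fill`, `click`, `textContent`), so a real Playwright page should fit it. The selectors, routes and base URL are all assumptions made up for this example.

```typescript
// Hypothetical subset of a browser page API. The method names mirror
// Playwright's Page (goto, fill, click, textContent).
interface PageLike {
  goto(url: string): Promise<unknown>;
  fill(selector: string, value: string): Promise<void>;
  click(selector: string): Promise<void>;
  textContent(selector: string): Promise<string | null>;
}

// A main revenue flow: open a product, add two of it, read the cart badge.
// Selectors and routes are made up; adjust them to your own markup.
async function addToCartFlow(page: PageLike, baseUrl: string): Promise<string> {
  await page.goto(`${baseUrl}/products/sku-42`);
  await page.fill("#quantity", "2");
  await page.click("#add-to-cart");
  await page.goto(`${baseUrl}/cart`);
  const count = await page.textContent("#cart-count");
  if (count === null) throw new Error("cart count not found");
  return count.trim();
}

// With Playwright installed, a real page could be passed in along these
// lines (the URL is a placeholder):
//   import { chromium } from "playwright";
//   const browser = await chromium.launch();
//   const page = await browser.newPage();
//   console.log(await addToCartFlow(page, "http://localhost:3000"));
//   await browser.close();
```

Keeping the flow behind a small interface is only one possible design choice; it makes the flow easy to read and reuse, while the real browser is supplied by whichever tool the team picks.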

Furthermore, something that I want to get off my chest... Unlike backend tests, frontend tests that are not run against different environments are not guaranteed to be working, even if they give you the green checkmark.

Never let someone with no frontend expertise dictate a testing strategy for the frontend. It is like getting a swimming coach to prep you for a boxing match.

The New Normal 🏄

Great individuals such as Kent C. Dodds and Guillermo Rauch have been introducing unconventional thoughts about the "Testing Pyramid".

Let me now give you my take on the new normal. Enjoy!

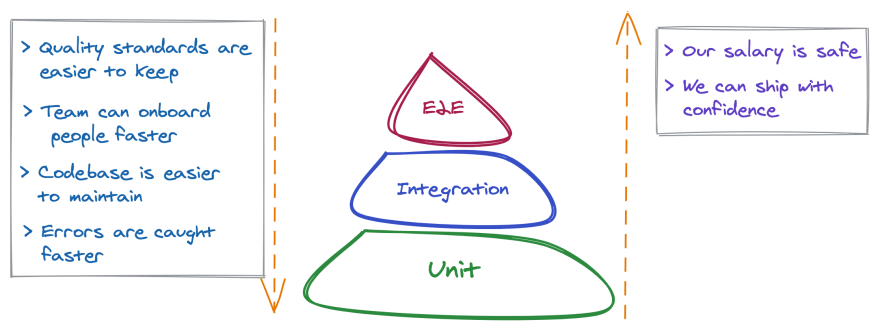

As you can observe in what I like to call the "Testing Pavlova", the layers and the ratio of expected tests are kept the same as in the "Testing Pyramid", only a bit more stylish.

I have also added the vertical axis, which developers tend to confuse in many discussions.

Now for the revolutionary part, something that I have not seen being illustrated yet. You are receiving a shaped container, but you are not explicitly shown how to fill it.

We will show how this Pavlova should be filled, in parallel with the timeline of your project.

Not Top Down but Side to Side

Some Useful Gists

> Consider the product "ok" to be launched when it also contains at least the few End-to-End tests needed to cover your main revenue flows 💸

> Code coverage should be viewed as an organic side-effect of product maturity, not an explicit target 🌲

> The diminishing return occurs when you just add Unit level tests that will never confirm a product function, but probably only that a library works 🤦

🔥A Hot Take🔥

The final points I wish to tackle from the article are ones that I cannot bring myself to relate to, no matter how many years I have been in the industry.

If the loop (test feedback loop) is fast enough, developers may even run tests before checking in a change.

For the first point, I am actually confident that we, as a software engineering community, have invested in the tools and culture to have scheduled and automated test runs in many parts of our coding assembly line: in our CI environment, in pre-commit hooks and in other places depending on the project. If in your project/team the mentioned techniques are a "backlog" item, please push them up; they are essential.

and now...

Developers like it (End-to-End testing) because it offloads most, if not all, of the testing to others.

For the final point, we will bring only frontend engineers into the mix, because this shift in mindset is another part of the new normal.

Taking into account the change in how applications are architected, API mocking capabilities and approaches like Jamstack, it is in our best interest to treat End-to-End testing as a "frontend-related" topic.

Frontend engineers 👩‍💻

- Are responsible for adding the new UI/UX interactions to be tested

- Are already working with JavaScript, so Node.js automation tools are easy to onboard

- Know how the browser/network operates and its quirks

- Are the ones who will fix most frontend issues in the first place

With all the great tools, services and community resources we have at our disposal, it does not make much sense for frontend engineers not to take part in creating and maintaining End-to-End tests for their interfaces. Even the bare minimum.

Closing 📔

Thank you for reaching the end of the article, and I seriously hope you took your notes. Not to bash me online, but just to reconsider some of your past or present discussions about web automation testing, specifically the End-to-End topic.

A Good Idea That Often Fails In Practice

That was the characterization of End-to-End testing in said blog post, and I hope we will get over it, both practically and conceptually, with all the amazing people and tools around our ecosystem.

Onwards 🚀

Cross-posted from The Home of Web Automation
