If you're looking for an open-source text-to-speech (TTS) system that can generate podcasts, audiobooks, or multi-speaker conversations that actually sound real, Microsoft's VibeVoice is a model worth trying. Unlike traditional TTS systems, which often sound robotic, drift in quality, or are limited to short clips, VibeVoice is designed from the ground up to produce expressive, long-form, multi-speaker audio with remarkable naturalness and flow. It can synthesize up to 90 minutes of speech with up to four distinct speakers, a significant step up from most existing models, which struggle to maintain quality beyond a few minutes or with more than two voices.

Two design choices make this possible. First, its continuous speech tokenizers (acoustic and semantic) operate at a very low frame rate of 7.5 Hz, preserving audio richness while drastically reducing computation. Second, it uses a next-token diffusion framework: a Qwen2.5-based LLM models the dialogue context and generates nuanced turn-taking, while a lightweight diffusion head produces high-fidelity acoustic detail. The result is smooth, consistent, lifelike conversation that sounds recorded rather than generated.
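To put the 7.5 Hz frame rate in perspective, the short calculation below counts how many latent frames a 90-minute session needs. The 24 kHz input sample rate and the 50 Hz comparison rate are our own illustrative assumptions, not figures from this guide; only the 7.5 Hz rate and the 90-minute length come from the text above.

```python
# Back-of-the-envelope arithmetic for a low frame-rate speech tokenizer.
# ASSUMPTIONS: 24 kHz audio input and a 50 Hz "higher-rate codec" for contrast.
# Only the 7.5 Hz frame rate and the 90-minute length come from the article.

SAMPLE_RATE_HZ = 24_000      # assumed audio sample rate
VIBEVOICE_FRAME_HZ = 7.5     # tokenizer frame rate stated in the article
COMPARISON_FRAME_HZ = 50     # hypothetical higher-rate codec, for comparison


def frames_for(duration_s: float, frame_rate_hz: float) -> int:
    """Number of latent frames needed to cover `duration_s` seconds of audio."""
    return round(duration_s * frame_rate_hz)


def downsampling_factor(sample_rate_hz: float, frame_rate_hz: float) -> float:
    """How many raw audio samples each latent frame summarizes."""
    return sample_rate_hz / frame_rate_hz


if __name__ == "__main__":
    ninety_minutes = 90 * 60  # 5,400 seconds
    low = frames_for(ninety_minutes, VIBEVOICE_FRAME_HZ)
    high = frames_for(ninety_minutes, COMPARISON_FRAME_HZ)
    print(f"7.5 Hz: {low:,} frames for 90 minutes")
    print(f"50 Hz:  {high:,} frames for 90 minutes")
    factor = downsampling_factor(SAMPLE_RATE_HZ, VIBEVOICE_FRAME_HZ)
    print(f"Samples per 7.5 Hz frame at 24 kHz: {factor:.0f}")
```

Under these assumptions, 90 minutes is 40,500 frames at 7.5 Hz versus 270,000 at 50 Hz, a sequence roughly 6.7x shorter for the LLM to model, and each 7.5 Hz frame summarizes 3,200 raw samples.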

In this guide, we'll walk through, step by step, how to get this model up and running locally or in a GPU-accelerated environment.

Prerequisites

The minimum system requirements for running this model are:

GPU: 1x RTX 4090 or 1x RTX A6000

Storage: 50 GB (recommended)

VRAM: at least 16 GB
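A rough way to sanity-check the 16 GB VRAM figure is to add up the model weights and the attention KV cache. The sketch below does only that arithmetic. Every default in it is a hypothetical placeholder: the ~3B parameter count, the 64K-token context, and the 28 layers / 2 KV heads / 128-dim heads (chosen to resemble a Qwen2.5-1.5B-sized backbone) should all be replaced with the values from the checkpoint's config, and real usage also includes activations and framework overhead that are not counted here.

```python
# Rough VRAM estimate: weights + KV cache only (no activations or CUDA overhead).
# ALL DEFAULTS ARE HYPOTHETICAL placeholders; read the real values from the
# model's config before relying on the result.

GIB = 1024 ** 3


def weight_bytes(num_params: float, bytes_per_param: int = 2) -> float:
    """Memory for model weights; 2 bytes/param corresponds to bf16/fp16."""
    return num_params * bytes_per_param


def kv_cache_bytes(num_tokens: int, num_layers: int = 28, num_kv_heads: int = 2,
                   head_dim: int = 128, bytes_per_value: int = 2) -> int:
    """Keys + values (the factor 2) stored for every layer, KV head, and token."""
    return 2 * num_layers * num_kv_heads * head_dim * bytes_per_value * num_tokens


if __name__ == "__main__":
    params = 3e9            # hypothetical total parameter count
    context = 64 * 1024     # hypothetical context length in tokens
    weights = weight_bytes(params)
    cache = kv_cache_bytes(context)
    print(f"weights:  {weights / GIB:.2f} GiB")
    print(f"KV cache: {cache / GIB:.2f} GiB")
    print(f"total:    {(weights + cache) / GIB:.2f} GiB (before activations/overhead)")
```

With these placeholder inputs the script reports about 5.59 GiB of weights and 1.75 GiB of KV cache, roughly 7.3 GiB in total. The useful part is the shape of the formula: the KV-cache term grows linearly with context length, which is why long-form generation needs more headroom than a short clip.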

Step-by-step process to install and run VibeVoice

For the purpose of this tutorial, we'll use a GPU-powered virtual machine from NodeShift, since it provides high-compute virtual machines at an affordable cost, at a scale that meets GDPR, SOC 2, and ISO 27001 requirements. It also offers an intuitive, user-friendly interface, making it easier for beginners to get started with cloud deployments. However, feel free to use any cloud provider of your choice and follow the same steps for the rest of the tutorial.

Step 1: Setting up a NodeShift Account

Visit app.nodeshift.com and create an account by filling in basic details, or continue signing up with your Google/GitHub account.

If you already have an account, log in directly to your dashboard.

Step 2: Create a GPU Node

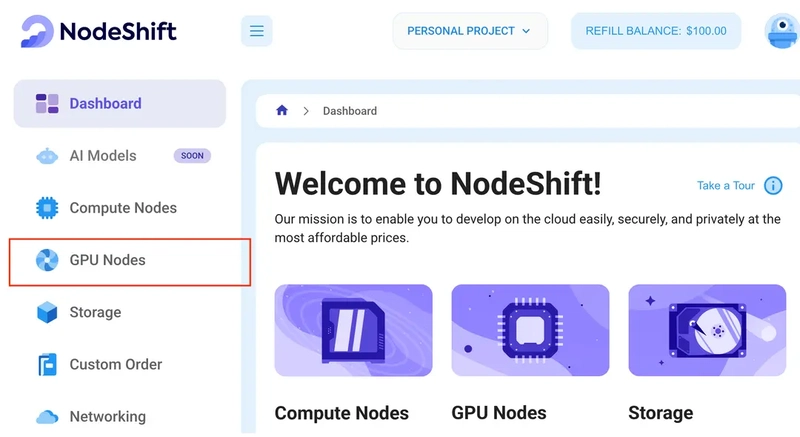

After logging in, you should see a dashboard (see image). Now:

1) Navigate to the menu on the left side.

2) Click on the GPU Nodes option.

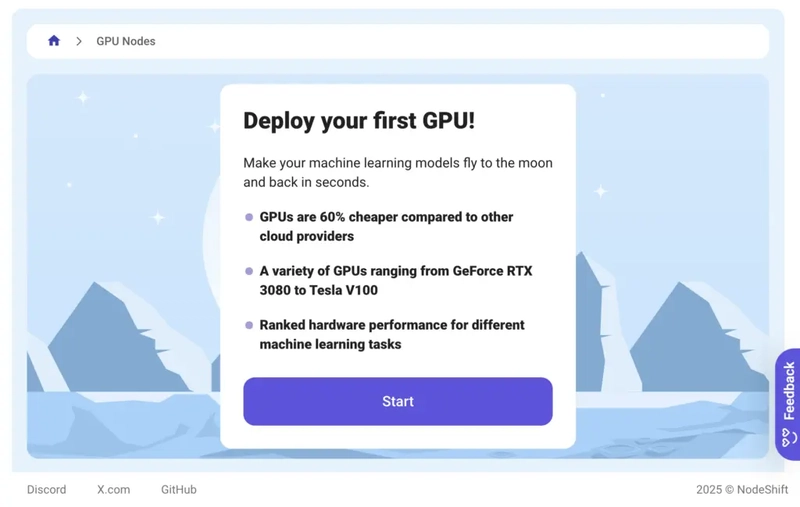

3) Click on Start to start creating your very first GPU node.

GPU nodes are NodeShift's GPU-powered virtual machines. They are highly customizable, letting you configure the GPU (from A100s to H100s), CPUs, RAM, and storage to suit your needs.

Step 3: Selecting configuration for GPU (model, region, storage)

1) For this tutorial, we'll use a 1x RTX A6000 GPU; however, you can choose any GPU that meets the prerequisites.

2) Similarly, we'll opt for 100 GB of storage by sliding the bar. You can also select the region where you want your GPU to reside from the available options.

Step 4: Choose GPU Configuration and Authentication method

1) After selecting your configuration options, you'll see the GPU nodes available in your region that match (or closely match) your configuration. In our case, we'll choose a 1x RTX A6000 GPU node with 64 vCPUs, 63 GB RAM, and a 200 GB SSD.

2) Next, you'll need to select an authentication method. Two methods are available: Password and SSH Key. We recommend using SSH keys, as they are a more secure option. To create one, head over to our official documentation.

Step 5: Choose an Image

The final step is to choose an image for the VM, which in our case is NVIDIA CUDA.

In our previous blogs, we used pre-built images from the Templates tab when creating a virtual machine. However, running a CUDA-dependent application like VibeVoice requires a more customized environment with full CUDA development capabilities. That's why we switched to the Custom Image tab and selected a specific Docker image that meets all runtime and compatibility requirements.

We chose the following image:

nvidia/cuda:12.1.1-devel-ubuntu22.04

This image is essential because it includes:

- Full CUDA toolkit (including nvcc)

- Proper support for building and running GPU-based applications

- Compatibility with CUDA 12.1.1 required by certain model operations
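Since the main reason for choosing the -devel image is the bundled nvcc, it's worth confirming, once you've connected to the node, that nvcc is present and reports release 12.1. The sketch below parses the `release X.Y` string that `nvcc --version` prints; the helper names are hypothetical, and it assumes nvcc is on PATH (in these images it normally lives under /usr/local/cuda/bin).

```python
import re
import shutil
import subprocess
from typing import Optional, Tuple

EXPECTED_CUDA = (12, 1)  # matches nvidia/cuda:12.1.1-devel-ubuntu22.04


def parse_nvcc_release(version_text: str) -> Optional[Tuple[int, int]]:
    """Extract (major, minor) from text like 'Cuda compilation tools, release 12.1, V12.1.105'."""
    match = re.search(r"release (\d+)\.(\d+)", version_text)
    if match is None:
        return None
    return int(match.group(1)), int(match.group(2))


def installed_nvcc_release() -> Optional[Tuple[int, int]]:
    """Run nvcc if it is on PATH; return None when it is missing."""
    if shutil.which("nvcc") is None:
        return None
    out = subprocess.run(["nvcc", "--version"], capture_output=True, text=True, check=True)
    return parse_nvcc_release(out.stdout)


if __name__ == "__main__":
    release = installed_nvcc_release()
    if release is None:
        print("nvcc not found: is this a -devel CUDA image?")
    elif release != EXPECTED_CUDA:
        print(f"nvcc reports CUDA {release[0]}.{release[1]}, expected 12.1")
    else:
        print("nvcc with CUDA 12.1 found")
```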

Launch Mode

We selected:

Interactive shell server

This gives us SSH access and full control over terminal operations, which is ideal for installing dependencies, running benchmarks, and launching models.

Docker Repository Authentication

We left all fields empty here.

Since the Docker image is publicly available on Docker Hub, no login credentials are required.

Identification

Template Name:

nvidia/cuda:12.1.1-devel-ubuntu22.04

That's it! You're now ready to deploy the node. Review the configuration summary and, if everything looks good, click Create to deploy the node.

Step 6: Connect to active Compute Node using SSH

1) Once you create the node, it should be deployed within a minute or so. When it's ready, its status will show Running in green, meaning the compute node is ready to use.

2) Once your GPU shows this status, navigate to the three dots on the right, click on Connect with SSH, and copy the SSH details that appear.

Once you've copied the details, follow the steps below to connect to the running GPU VM via SSH:

1) Open your terminal, paste the SSH command, and run it.

2) In some cases, your terminal may ask for confirmation before connecting. Enter 'yes'.

3) If you chose password authentication, a prompt will request a password. Type the SSH password, and you should be connected.


Next, if you want to check the GPU details, run the following command in the terminal:

nvidia-smi
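If you'd rather check the prerequisites programmatically than read the nvidia-smi table by eye, the sketch below queries each GPU's name and total memory using nvidia-smi's CSV query mode and checks free disk space. The thresholds mirror the prerequisites above; the helper names are hypothetical, and you can run it with any Python 3 interpreter on the node (for example, inside the conda environment created in Step 7).

```python
import shutil
import subprocess
from typing import List, Tuple

MIN_VRAM_MIB = 16 * 1024   # "VRAM: at least 16 GB" from the prerequisites
MIN_FREE_DISK_GB = 50      # "Storage: 50 GB" from the prerequisites


def parse_gpu_csv(csv_text: str) -> List[Tuple[str, int]]:
    """Parse lines like 'NVIDIA RTX A6000, 49140' into (name, MiB) pairs."""
    gpus = []
    for line in csv_text.strip().splitlines():
        name, mem_mib = line.rsplit(",", 1)  # split on the last comma only
        gpus.append((name.strip(), int(mem_mib.strip())))
    return gpus


def has_enough_vram(gpus: List[Tuple[str, int]], min_mib: int = MIN_VRAM_MIB) -> bool:
    """True if at least one GPU meets the VRAM threshold."""
    return any(mem >= min_mib for _, mem in gpus)


def free_disk_gb(path: str = "/") -> float:
    """Free space on the filesystem containing `path`, in decimal GB."""
    return shutil.disk_usage(path).free / 1e9


if __name__ == "__main__":
    if shutil.which("nvidia-smi") is None:
        print("nvidia-smi not found; is the NVIDIA driver available?")
    else:
        query = ["nvidia-smi", "--query-gpu=name,memory.total",
                 "--format=csv,noheader,nounits"]
        out = subprocess.run(query, capture_output=True, text=True, check=True).stdout
        gpus = parse_gpu_csv(out)
        print("GPUs:", gpus, "| VRAM OK:", has_enough_vram(gpus))
    print(f"Free disk: {free_disk_gb():.1f} GB (want >= {MIN_FREE_DISK_GB})")
```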

Step 7: Set up the project environment with dependencies

1) Create a virtual environment using Anaconda.

conda create -n vibe python=3.11 -y && conda activate vibe


2) Clone the official repository and move inside the project directory.

git clone https://github.com/microsoft/VibeVoice.git

cd VibeVoice


3) Install required dependencies.

pip install -e .

pip install flash-attn --no-build-isolation

apt update && apt install ffmpeg -y


4) Launch the Gradio demo. This will automatically download the model checkpoints as well.

python demo/gradio_demo.py --model_path microsoft/VibeVoice-1.5B --share


5) If you're on a remote machine (e.g., a NodeShift GPU node), you'll need SSH port forwarding to access the Gradio session from your local browser.

Run the following command in your local terminal after replacing:

<YOUR_SERVER_PORT> with the SSH port assigned to your remote server (for a NodeShift server, you can find it in the deployed GPU details on the dashboard).

<PATH_TO_SSH_KEY> with the path to the location where your SSH key is stored.

<YOUR_SERVER_IP> with the IP address of your remote server.

ssh -L 7860:localhost:7860 -p <YOUR_SERVER_PORT> -i <PATH_TO_SSH_KEY> root@<YOUR_SERVER_IP>

After this, the remote server prints a local URL: http://0.0.0.0:7860

Because port 7860 is now forwarded to your machine, open http://localhost:7860 in your local browser to access the Gradio session.
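If the page doesn't load, it helps to check whether anything is actually listening on the forwarded port. A minimal check, run on your local machine and assuming the port 7860 used in the ssh -L command above, is a plain TCP connect; `is_port_open` is a hypothetical helper name.

```python
import socket


def is_port_open(host: str = "localhost", port: int = 7860, timeout: float = 2.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within `timeout` seconds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers connection refused, timeouts, and unreachable hosts.
        return False


if __name__ == "__main__":
    if is_port_open():
        print("Tunnel is up: open http://localhost:7860")
    else:
        print("Nothing on localhost:7860; is the ssh -L session still running?")
```

A successful connect only proves the tunnel and server are listening; if it succeeds but the page is blank, the issue is more likely on the Gradio side than in SSH.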

Step 8: Run the model

1) Once you open the Gradio interface in your browser, you'll see the VibeVoice demo page.

2) Generate a podcast from a script.

Enter any script of your choice; here, we're using one of the example scripts provided in the official repo.

3) The app then starts streaming the generated podcast audio in real time.
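If you write your own script instead of using an example, it helps to check it against the four-speaker limit before pasting it in. The sketch below assumes a `Speaker N: text` line format like the one in the repository's example scripts; treat that format and the `validate_script` helper as assumptions and compare against the example files in the repo.

```python
import re
from typing import Dict, List, Tuple

MAX_SPEAKERS = 4  # VibeVoice supports up to four distinct speakers
LINE_RE = re.compile(r"^Speaker\s+(\d+)\s*:\s*(.+)$")  # assumed line format


def validate_script(script: str) -> Dict[str, object]:
    """Parse 'Speaker N: text' lines and check the distinct speaker count."""
    turns: List[Tuple[int, str]] = []
    bad_lines: List[str] = []
    for raw in script.splitlines():
        line = raw.strip()
        if not line:
            continue  # blank lines are ignored
        match = LINE_RE.match(line)
        if match:
            turns.append((int(match.group(1)), match.group(2)))
        else:
            bad_lines.append(line)
    speakers = sorted({speaker for speaker, _ in turns})
    return {
        "speakers": speakers,
        "turns": len(turns),
        "bad_lines": bad_lines,
        "ok": 0 < len(speakers) <= MAX_SPEAKERS and not bad_lines,
    }


if __name__ == "__main__":
    demo = """
    Speaker 1: Welcome back to the show.
    Speaker 2: Thanks, it's great to be here.
    Speaker 1: Let's talk about long-form speech synthesis.
    """
    print(validate_script(demo))
```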

Conclusion

VibeVoice stands out as a groundbreaking open-source TTS framework that combines continuous speech tokenizers, a Qwen2.5-powered LLM, and a diffusion head to deliver expressive, long-form, multi-speaker audio that sounds remarkably real. Its ability to generate up to 90 minutes of consistent, multi-voice speech makes it a powerful tool for creators and researchers alike. While running it locally is a great way to get started, NodeShift makes the experience even smoother with GPU-accelerated environments, simplified deployment, and out-of-the-box scalability, so you can focus on exploring the model's capabilities instead of wrestling with infrastructure setup.

