It was December 2019. The gloomy weather and frigid temperatures in Toronto made me crave a warm getaway. As I navigated through search results, I realized I had dozens of tabs open to answer a pretty simple question: "What are some warm places to travel to in December with cheap flights?"

Being a software engineer, I realized that all I was doing was trying to apply a filter to a broad set of travel results. What if I had a lot of data on places in the world? Could I build some type of filtering to find cool places?

I dove in over the next week to see how much data I could find on the internet to build a travel website that would help me find interesting destinations.

60 days later, Visabug was born and soft-launched on Reddit where it went to #1 on the sideproject and reactjs subreddits. 🎉

My goals for Visabug were:

- Build something that is genuinely useful: I didn't want to just build something because it was technically interesting.

- Make data freely available to help people make better decisions: Travel opens our eyes to other cultures and makes us more tolerant. I didn't want to hide data behind paywalls.

Getting Country Information

The first piece of data that I was interested in was country information. I wanted to answer the question, "What countries can I travel to easily, and how much would it cost on average to fly there?"

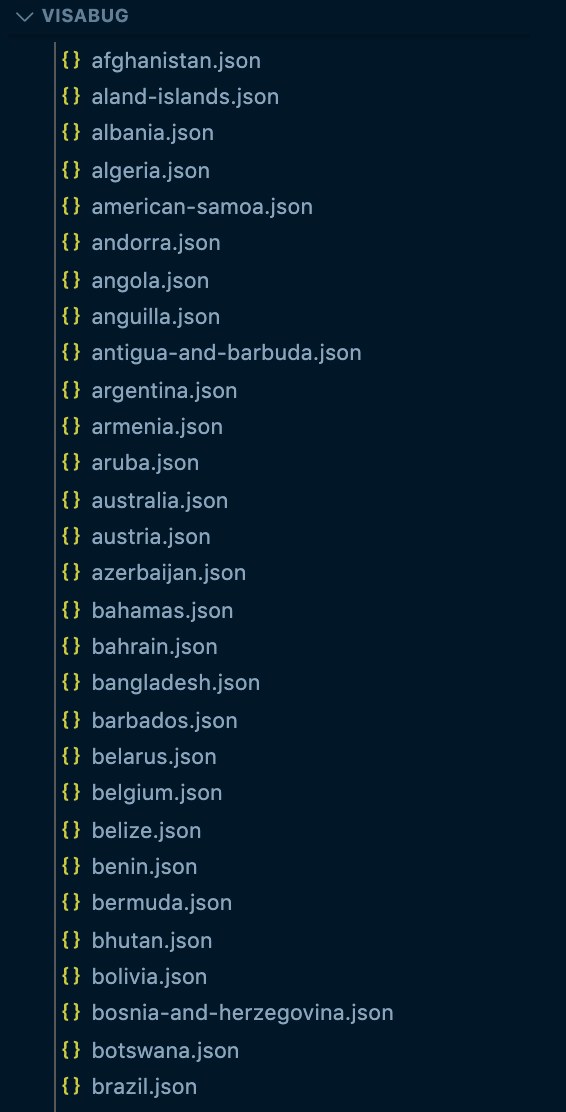

To start, I got a list of all the countries in the world. Then, I used data from the World Bank, along with Google's Geocoding API to get common data such as location, population, capitals, languages, and more.

This was my starting point. I created a JSON file for each country, so I had 238 JSON files with names like canada.json, india.json, etc.
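Here is a minimal sketch of how the one-file-per-country naming could work. The `toSlug` and `countryFileName` helpers are hypothetical names I'm using for illustration, not the exact code behind Visabug. The slug format simply mirrors file names like canada.json.

```javascript
// Hypothetical helper: turn a country name into a lowercase, hyphenated slug.
function toSlug(countryName) {
  return countryName
    .normalize("NFD") // split accented letters into base letter + accent mark
    .replace(/[\u0300-\u036f]/g, "") // drop the accent marks
    .trim()
    .toLowerCase()
    .replace(/[^a-z0-9]+/g, "-") // collapse spaces and punctuation into hyphens
    .replace(/^-+|-+$/g, ""); // drop leading and trailing hyphens
}

// Hypothetical helper: derive the JSON file name for a country.
function countryFileName(countryName) {
  return `${toSlug(countryName)}.json`;
}

console.log(countryFileName("Canada")); // canada.json
console.log(countryFileName("United Kingdom")); // united-kingdom.json
```

Using the same slug everywhere means a file name, a URL segment, and an object key can all refer to a country in the same way.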

With a little bit of extra work, I was able to also find data on:

- Related countries and nearby countries

- Regions and Continents

- Population

- Weather patterns (Temperature and Rainfall)

Getting Visa and Travel Information

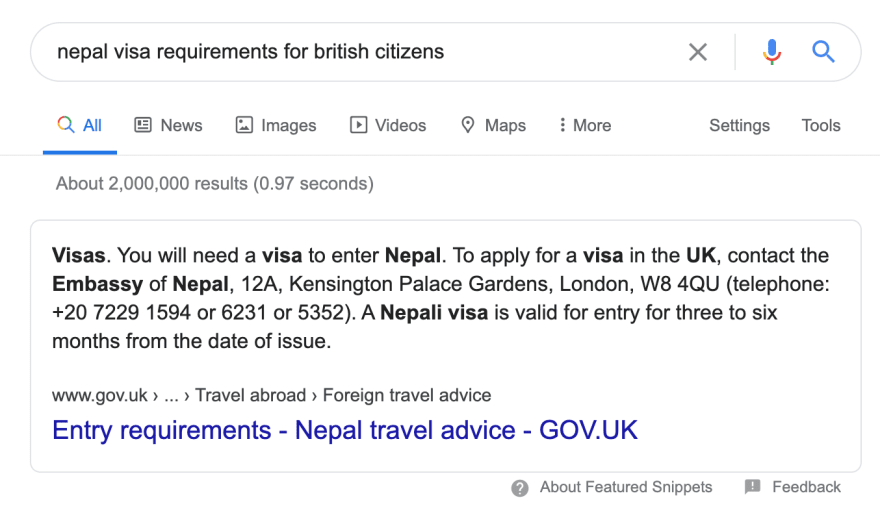

Next, I wanted to get tourist visa data. If I were a citizen of a country and wanted to travel to another country, what kind of visa would I need?

I tried looking around for APIs but there weren't any that were easily available.

I turned to Google Search. It turns out that when you search for this on Google, you get a nice card with some useful information.

I wrote a script that created an array of Google search queries with each country permutation. It looked something like this:

    const visaSearchSet = [
      "usa visa requirement for canada citizens",
      "brazil visa requirement for algeria citizens",
      // ...
    ];
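As a rough sketch, a list like the one above could be generated with two nested loops over the country names. The `buildVisaSearchSet` function and the four-country sample are assumptions for illustration. The real script may have looked different.

```javascript
// Build one search query per ordered (destination, citizenship) pair.
// Hypothetical helper; the query wording follows the examples in this post.
function buildVisaSearchSet(countries) {
  const queries = [];
  for (const destination of countries) {
    for (const citizenship of countries) {
      if (destination === citizenship) continue; // skip a country paired with itself
      queries.push(`${destination} visa requirement for ${citizenship} citizens`);
    }
  }
  return queries;
}

// Tiny illustrative sample, not the full country list.
const sampleSearchSet = buildVisaSearchSet(["usa", "canada", "brazil", "algeria"]);
console.log(sampleSearchSet.length); // 12 ordered pairs for 4 countries
console.log(sampleSearchSet[0]); // usa visa requirement for canada citizens
```

The order matters because visa rules are not symmetric, so each pair shows up twice. With 238 countries that works out to 238 × 237 = 56,406 queries.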

Then, I wrote a Puppeteer script that queried Google and scraped the result set from the card. This was piped into another JavaScript function that parsed the string into a data structure. The result was something like this:

    "united-kingdom": {
      "nepal": {
        visaCategory: "required",
        validity: "three to six months from date of issue",
        embassy: "12A, Kensington Palace Gardens, London, W8 4QU"
      },
      // ...
    }

This was my initial prototype. Since then, I've improved the algorithm to double-check the visa requirements against some other sites, so a single wrong result doesn't leave me with incorrect data.
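One simple way to do that kind of cross-checking is a majority vote across sources. This is only a sketch of the idea: the `resolveVisaCategory` function, the source names, and the strict-majority rule are all assumptions, not a description of the actual algorithm.

```javascript
// Pick the visa category reported by a strict majority of sources.
// Returns null when no category has a majority, so the pair can be reviewed by hand.
function resolveVisaCategory(reports) {
  const counts = new Map();
  for (const { category } of reports) {
    counts.set(category, (counts.get(category) || 0) + 1);
  }

  let best = null;
  let bestCount = 0;
  for (const [category, count] of counts) {
    if (count > bestCount) {
      best = category;
      bestCount = count;
    }
  }
  return bestCount > reports.length / 2 ? best : null;
}

// Hypothetical source names, for illustration only.
const sampleReports = [
  { source: "google-card", category: "required" },
  { source: "site-a", category: "required" },
  { source: "site-b", category: "not required" },
];
console.log(resolveVisaCategory(sampleReports)); // required
```

Returning null on a tie is a deliberate choice here: for visa data, flagging an uncertain pair seems safer than guessing.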

After fetching visa information, I also wanted to fetch travel advisories. A country may be easy to get to, but you may not want to go there because it's dangerous!

Luckily, there's a site called Smartraveller that makes it really easy to get travel advisories, customs information, immunizations and more.

Currently, Visabug is able to track:

- Visa requirements between any two countries in the world

- Visa classifications: "required", "not required", "e-visas", and "visas-being-refused"

- Support for Schengen area visas

- Embassy locations

- Travel Advisories

- Customs Information (coming soon)

- Immunizations (coming soon)

- Multi-country visas (coming soon)

Getting City Data

Next, I wanted to get city data. To do this, I had to first figure out the most popular cities in the world. I couldn't just use population because many popular cities are relatively small. I used this free dataset for my initial set of cities. As a bonus, that dataset let me map cities to their parent country.

Next, I wanted to collect some useful metrics about these cities.

- What is the city known for? To solve this, I used Tripadvisor to get the most popular things to do, and classified them.

- What is the cost of living? The Cost of Living index from Numbeo helped provide relative costs per city.

- Is Uber available? Uber's website has a list of all the cities in which they operate.

- How safe is it? Numbeo also has a safe cities index!

I collect a lot more data than the list above, but that should give you an idea of how it works. By piecing together data from different providers, I was able to understand the unique characteristics of all cities.
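To make the "piecing together" step concrete, here is a small sketch that merges per-provider results into one record per city. The `mergeCityData` function, the field names, and every sample value are made up for illustration. They are not real figures or the real schema.

```javascript
// Merge per-provider city metrics into one record per city.
// Each provider result is an object keyed by city slug.
function mergeCityData(...providerResults) {
  const cities = {};
  for (const result of providerResults) {
    for (const [citySlug, metrics] of Object.entries(result)) {
      // Later providers add to (or override) fields from earlier ones.
      cities[citySlug] = { ...(cities[citySlug] || {}), ...metrics };
    }
  }
  return cities;
}

// Made-up sample values, purely for illustration.
const sampleCostOfLiving = { toronto: { costIndex: 70 }, lisbon: { costIndex: 50 } };
const sampleUberCities = { toronto: { hasUber: true }, lisbon: { hasUber: true } };
const sampleSafety = { toronto: { safetyIndex: 60 } };

const mergedCities = mergeCityData(sampleCostOfLiving, sampleUberCities, sampleSafety);
console.log(mergedCities.toronto); // { costIndex: 70, hasUber: true, safetyIndex: 60 }
console.log(mergedCities.lisbon); // { costIndex: 50, hasUber: true }
```

A city that is missing from one provider simply ends up without that field, which makes gaps easy to spot later.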

Currently, I also collect:

- Average Flight Prices between two countries

- Cost of Meals

- Internet Speeds

- Popular SIM Providers

- Whether water is safe to drink

- Air Quality (coming soon 🤫)

- Popular Tourist Attractions (coming soon 🤫)

Creating Filters

In Visabug, you are able to use filters to find unique destinations. Here's a screenshot of the filter box.

Apart from the Visa Requirement filters, the filtering actually works at the city level, not the country level. So when you apply a filter like "Sand and Beaches", Visabug finds all cities that it thinks are close to beaches, and bubbles the result up to the country level.

There is some averaging that is done to ensure that countries are not marked as false-positives. For example, you wouldn't say Canada is close to sand and beaches, but Toronto is. I have written some code to verify that a single city doesn't influence the overall country's classification.
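A minimal sketch of this kind of bubbling-up, assuming each city carries a list of tags and a country only matches when a large enough share of its cities match. The `countryMatchesTag` function, the `tags` field, and the 0.5 threshold are assumptions. The actual averaging may work differently.

```javascript
// A country matches a tag only if a large enough share of its cities match it,
// so one outlier city cannot flip the whole country.
function countryMatchesTag(cities, tag, minShare = 0.5) {
  if (cities.length === 0) return false;
  const matching = cities.filter((city) => city.tags.includes(tag)).length;
  return matching / cities.length >= minShare;
}

// Illustrative sample: only Toronto is tagged, echoing the example above.
const sampleCanadaCities = [
  { name: "Toronto", tags: ["beaches"] },
  { name: "Calgary", tags: [] },
  { name: "Ottawa", tags: [] },
  { name: "Montreal", tags: [] },
];
console.log(countryMatchesTag(sampleCanadaCities, "beaches")); // false (1 of 4 cities)

// Hypothetical country where most cities match.
const sampleCoastalCities = [
  { name: "City A", tags: ["beaches"] },
  { name: "City B", tags: ["beaches"] },
  { name: "City C", tags: [] },
];
console.log(countryMatchesTag(sampleCoastalCities, "beaches")); // true (2 of 3 cities)
```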

The reason I went with this approach is that I like the information to live at a more granular level. It would let me do city-level searching in the future.

This is why you can see city level information in Visabug. Cities are what actually power most of the non-visa data, and it's one of the areas of the site I want to improve.

Getting Images

I'm really happy with how the Visabug User Interface looks, and a large part of that is due to the imagery. It just makes me want to travel!

Images were very easy to get. I signed up for an Unsplash Developer Account that gave me access to 50 requests/hour through the Unsplash API. 5 hours later, I had images for all 238 countries in the world.
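The five-hour figure follows from the rate limit. Here is a quick sketch, assuming one API request per country and a hypothetical `chunk` helper that splits the work into hourly batches of 50.

```javascript
// Split a list into batches of at most `size` items.
function chunk(items, size) {
  const batches = [];
  for (let i = 0; i < items.length; i += size) {
    batches.push(items.slice(i, i + size));
  }
  return batches;
}

// Placeholder slugs standing in for the 238 country names.
const placeholderCountries = Array.from({ length: 238 }, (_, i) => `country-${i + 1}`);

// One batch per hour at 50 requests/hour.
const hourlyBatches = chunk(placeholderCountries, 50);
console.log(hourlyBatches.length); // 5
console.log(hourlyBatches[4].length); // 38 countries left for the last hour
```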

To determine what image to show for a country, I ordered Unsplash's images by likes and picked the highest-liked one.
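Picking the most-liked image can be sketched in a few lines. This assumes each photo object has a numeric `likes` field. The `pickTopPhoto` function and the sample photos are hypothetical.

```javascript
// Return the photo with the most likes, or null for an empty list.
// On a tie, the earlier photo in the list wins.
function pickTopPhoto(photos) {
  if (photos.length === 0) return null;
  return photos.reduce((best, photo) => (photo.likes > best.likes ? photo : best));
}

// Made-up sample data, for illustration only.
const samplePhotos = [
  { id: "a", likes: 120 },
  { id: "b", likes: 450 },
  { id: "c", likes: 300 },
];
console.log(pickTopPhoto(samplePhotos).id); // b
```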

Recently, I was approved to get an Unsplash Partner Account, which now gets me 5000 requests/hour. I intend to use this to have better images for Cities in the near future.

All image data is stored as JSON files, so I don't have to make any API queries in real-time.

Storing the data

The funny thing is that I wanted to build Visabug out really quickly, to see if there was any interest in the product. To speed things up, I actually launched the site without a database. 😅

Currently, Visabug has 2 JSON files: one with all country data, and another with all the city data. Together, they are about 300 MB. When the application starts up, this data is loaded into memory. This isn't ideal but has worked up until now.

Of course, I can't send 300 MB of data to the client, so Visabug has a Node.js server that processes this data and only sends back what the client needs. Everything is server-rendered and I don't have a public API yet.
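Here is a rough sketch of the "keep everything in memory, send back only what's needed" idea. The `countryStore` object stands in for the parsed JSON file (in a real server it would be loaded once at startup). The `pickFields` and `getCountrySummary` helpers and the field names are assumptions for illustration.

```javascript
// Stand-in for the parsed country JSON file that the server holds in memory.
const countryStore = {
  canada: {
    name: "Canada",
    capital: "Ottawa",
    continent: "North America",
    languages: ["English", "French"],
  },
};

// Copy only the requested fields from a record.
function pickFields(record, fields) {
  const picked = {};
  for (const field of fields) {
    if (Object.prototype.hasOwnProperty.call(record, field)) {
      picked[field] = record[field];
    }
  }
  return picked;
}

// Look up a country by slug and return a trimmed-down view of it.
function getCountrySummary(store, slug, fields) {
  const record = store[slug];
  return record ? pickFields(record, fields) : null;
}

console.log(getCountrySummary(countryStore, "canada", ["name", "capital"]));
// { name: 'Canada', capital: 'Ottawa' }
```

Lookups by slug are a plain property access, which is part of why this approach can hold up without a database for a while.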

What happened next?

I had acquired all this data by January, and spent the next month actually building out the product. This is what Visabug looked like in January.

Here’s what Visabug looked like 60 days ago. I have spent about 1hr on it everyday since then and it has come a long way! Persistence and consistency are underrated. https://t.co/Lsy8sbqc2e

Tilo (@tilomitra), February 29, 2020

I am going to write about how I designed the website in the next post. Nathan Barry generously helped me shape my home page messaging, and Chris Messina gave me many useful product tips.

Follow me on Twitter or here on Dev.to if you want to be notified when that post comes out. Of course, please do check out Visabug and let me know what you think!

Top comments (1)

That's fantastic. But, isn't the Numbeo API licensed? Which license did you purchase? Or, do they have some free-tier as well?

I had an idea for a side-project that would require some cost of living data. Last time I checked, scraping without permission will be illegal since the data is clearly licensed. That's why I ditched my side project idea.