Author: Sergey Shurlakov

In this post, I’d like to tell you how we use dynamic environments (review or preview environments) in our work here at Typeable, what issues we’ve managed to solve, and how and why we use our own solution, Octopod, for these purposes instead of GitLab Dynamic Environments. If you don’t know what a dynamic environment is, I recommend reading the post by Flant, where the author gives a detailed account of the types of dynamic environments, their purpose and applications. The author also explores the topic using GitLab as an example and provides detailed cases and descriptions. We take an alternative approach, somewhat different in its philosophy, and work with review environments in Octopod. In an earlier post, we told the story of how Octopod was created and why we built it. We won’t repeat ourselves here; instead, we’ll focus on how our approach differs and which issues we’ve fixed.

To begin with, Octopod is a universal tool that is not tied to any specific package management method in Kubernetes. Nevertheless, since Helm is the de facto standard in the Kubernetes world, Octopod is primarily meant to simplify the deployment of Helm charts. At Typeable, we use Helm ourselves, and starting with version 1.4, the standard Octopod package includes everything you need to work with it.

Main differences from GitLab

Probably the most important point is that we started working with review environments before GitLab had an interface for dynamic environments. However, there are other reasons too, including a different philosophy about how review environments should be implemented and used. But let’s start from the beginning.

We don’t store the environment configuration in the code. Why? We intentionally decouple the environment from the code for several reasons.

a. Not all team members have access to the code. Our processes are set up so that analysts, testers, project managers, and other team members who don’t write code usually have read-only access to the repository or no access at all. This approach gives us better control over the code and reduces risks and potential issues. However, these team members need to be able to create review environments on their own, without involving a DevOps engineer or a developer.

b. In GitLab, to manage a dynamic environment, you first need to create a .gitlab-ci.yml file and then modify it as appropriate. Thus, each branch will have its own copy of the file with the required environment settings. There is a risk that these settings “leak” into the main branch, so new environments end up with invalid parameters. Guarding against this only adds to the complexity of the git flow: more potential conflicts and, consequently, more merges or rebases.

c. We often need to change the configuration of a review environment. In Octopod, it’s easy to change environment parameters and variables and, most importantly, to switch between different endpoints of the services we integrate with. We have lots of integrations with external systems, and it’s not always possible to test the application’s functionality against a test API; we often need to interact with the production API.

As a result, review environment settings are stored in Octopod, managed through the web interface or Octopod’s CLI (octo CLI), and fully isolated from the code.

In GitLab, variables are global to the project. Many review environment parameters are expressed as environment variables. GitLab provides an interface for setting environment variables without changing .gitlab-ci.yml, but these variables have project-wide scope, i.e. they apply to all dynamic environments of the project. The only way to set variables for a specific review environment is to put them in .gitlab-ci.yml in the corresponding branch, which contradicts the first point above (keeping environment configuration out of the code).

In Octopod, we’ve solved this problem with two types of configuration settings: Application Configuration and Deployment Configuration. Octopod generates a list of key-value parameters, so you can view and manage the available chart settings in the UI: you only need to select the required key and enter its value. You can also add custom keys that are missing from the list.

The Application Configuration is the configuration (Helm values) passed to the application, for example a database connection string or environment variables. The Deployment Configuration is used only while the environment is being created: its key-value pairs are passed to Helm and override the default values, thus directly influencing the deployment process. Examples include the chart version or the URL of the Helm repository.
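To make the split between the two configuration types more concrete, here is a minimal shell sketch of how such key-value pairs could end up on a Helm command line. It is an illustration under stated assumptions, not Octopod’s actual control script: the function name build_helm_cmd, the release name, the repository URL and the override keys are all hypothetical. Deployment Configuration values (repository URL, chart version) become Helm flags, while Application Configuration values become --set key=value overrides. The function only prints the command; it never runs Helm.

```shell
#!/usr/bin/env bash
# Hypothetical sketch (not Octopod's real scripts): map the two kinds of
# Octopod configuration onto a Helm command line.
#   Deployment Configuration  -> how the chart is deployed (repo URL, chart version)
#   Application Configuration -> Helm values passed to the app (--set key=value)
set -euo pipefail

# build_helm_cmd RELEASE REPO_URL CHART VERSION [KEY=VALUE ...]
build_helm_cmd() {
  local release="$1" repo_url="$2" chart="$3" version="$4"
  shift 4
  local -a cmd=(helm upgrade --install "$release" "$chart")
  # Deployment Configuration: consumed by Helm itself, only at deploy time.
  cmd+=(--repo "$repo_url" --version "$version")
  # Application Configuration: each pair becomes a chart value override.
  local kv
  for kv in "$@"; do
    cmd+=(--set "$kv")
  done
  # Joined with spaces for display only; values containing spaces would need quoting.
  echo "${cmd[*]}"
}

build_helm_cmd review-42 https://charts.example.com my-app 1.0.0 \
  image.tag=abc123 env.LOG_LEVEL=debug
```

Keeping the two groups separate mirrors the distinction above: changing a chart version affects how Helm deploys, while changing an application value only changes what the running application sees.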

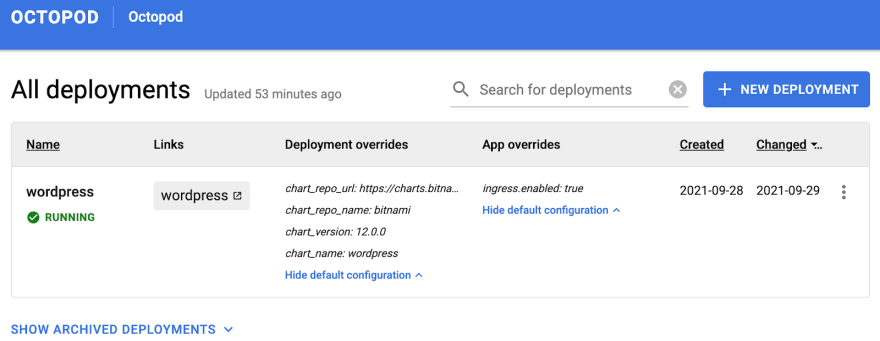

This is what the staging configuration looks like in Octopod:

Resource constraints. There can be many review environments, and together they consume a lot of resources. Sooner or later you run out of resources and have to deal with it somehow. This is why we archive an environment when its Jira ticket moves to the Done column (we’ve cross-integrated Jira, Octopod and GitHub). Archiving can also be done manually. Archiving follows the “scale-to-zero” approach: Pods are released, while all other resources (e.g. Persistent Volumes) are kept. This lets you restore an environment from the archive and bring it back into working order very quickly when needed. Archived environments older than 14 days are deleted completely, i.e. a cleanup removes absolutely all resources, including PVCs, certificates, etc. We automated this process using octo CLI, Octopod’s command-line utility, which offers extended functionality compared with the web interface. This approach can also be implemented in GitLab, but the task is not trivial and requires complex logic in .gitlab-ci.yml.

The state of the review environment has to be tracked accurately. One of Helm’s disadvantages is that it can’t track the state of an environment after it has been deployed. Once Helm has finished, it’s up to you to check whether the environment keeps working. Something may go wrong along the way: the review environment has already stopped working, but nobody finds out until it’s needed. The folks at Flant solved this with kubedog, which is built into werf. Octopod tracks environment state a little differently. We write all Octopod control scripts in Rust and use the kube-rs client to check the status of all ReplicaSets. The check runs every 5 seconds, so the status of a review environment in Octopod is always up to date.
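The two mechanisms described above, the 14-day cleanup of archived environments and the periodic ReplicaSet check, can be sketched in shell as follows. This is not Octopod’s implementation: its control scripts are written in Rust with kube-rs, and the cleanup is automated with octo CLI, whose commands are not reproduced here. The function names and the healthy/unhealthy/unknown labels are hypothetical. The status check assumes ReplicaSet data in “name desired ready” form, as produced by the kubectl jsonpath query in list_replicasets; NAMESPACE is a placeholder.

```shell
#!/usr/bin/env bash
# Hypothetical sketch of two review-environment housekeeping rules.
set -euo pipefail

readonly RETENTION_SECONDS=$(( 14 * 86400 ))  # archived for more than 14 days -> full cleanup

# retention_action ARCHIVED_AT NOW (Unix timestamps) -> prints "keep" or "cleanup"
retention_action() {
  local archived_at="$1" now="$2"
  if (( now - archived_at > RETENTION_SECONDS )); then
    echo cleanup   # delete everything, including PVCs and certificates
  else
    echo keep      # stay archived: scaled to zero, volumes preserved
  fi
}

# env_status: reads "name desired ready" lines on stdin and prints
# "healthy" if every ReplicaSet has all desired replicas ready,
# "unhealthy" otherwise, or "unknown" if there are no ReplicaSets at all.
env_status() {
  local name desired ready count=0 status=healthy
  while read -r name desired ready; do
    if [[ -z "$name" ]]; then continue; fi
    count=$(( count + 1 ))
    # The API omits readyReplicas when it is 0, so an empty field means 0.
    ready=${ready:-0}
    if (( ready < desired )); then status=unhealthy; fi
  done
  if (( count == 0 )); then status=unknown; fi
  echo "$status"
}

# Real data source (needs kubectl and cluster access; NAMESPACE is a placeholder).
list_replicasets() {
  kubectl get replicasets -n "${NAMESPACE:?set NAMESPACE}" -o jsonpath='{range .items[*]}{.metadata.name} {.spec.replicas} {.status.readyReplicas}{"\n"}{end}'
}

# watch_env SOURCE INTERVAL ROUNDS: re-evaluates the status every INTERVAL seconds.
watch_env() {
  local src="$1" interval="$2" rounds="$3" i
  for (( i = 0; i < rounds; i++ )); do
    "$src" | env_status
    sleep "$interval"
  done
}

now=$(date +%s)
retention_action $(( now - 3 * 86400 )) "$now"    # archived 3 days ago -> keep
printf 'web-7d9c 2 2\nworker-5f6b 1\n' | env_status  # worker has no ready Pods -> unhealthy
```

With real data, watch_env list_replicasets 5 720 would re-check an environment every 5 seconds for an hour. Old ReplicaSets left behind by rollouts have 0 desired replicas, so they never mark the environment as unhealthy.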

Writing a .gitlab-ci.yml script can be a challenge. In Octopod, we’ve solved this by providing ready-made Helm scripts that can deploy any valid Helm chart. In many cases, this removes the need to write any scripts at all and minimizes the involvement of a DevOps engineer. At the end of the post, we’ll move on to a hands-on exercise and deploy a WordPress instance in Octopod using the Bitnami Helm chart.

The need to create complex interdependent environments. If you need an environment that depends on infrastructure services such as PostgreSQL or Redis, these services can be deployed as separate review environments using their own Helm charts, and their connection parameters can be passed through Application Overrides. This way, you can use, for example, a single PostgreSQL instance or a single authentication service for several review environments.
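As an illustration of passing connection parameters between environments, the sketch below builds an Application Override that points an application at a PostgreSQL instance deployed as its own review environment. Several details are hypothetical assumptions rather than Octopod behavior: the shared environment runs in a namespace with the same name as its release, its chart exposes a Service called “<release>-postgresql” (as the Bitnami chart typically does with default naming), the cluster uses the default cluster.local DNS domain, and the consuming chart reads a key named database.url.

```shell
#!/usr/bin/env bash
# Hypothetical sketch: build an Application Override that points a review
# environment at a shared PostgreSQL deployed as a separate review environment.
set -euo pipefail

# pg_override RELEASE NAMESPACE DATABASE USER -> prints "key=value"
pg_override() {
  local release="$1" namespace="$2" db="$3" user="$4"
  # Assumption: the Service is named "<release>-postgresql" and the cluster
  # DNS domain is the default "cluster.local".
  local host="${release}-postgresql.${namespace}.svc.cluster.local"
  # The password is intentionally omitted; it belongs in a Secret or Vault.
  # "database.url" is a hypothetical key of the consuming chart.
  echo "database.url=postgresql://${user}@${host}:5432/${db}"
}

# Two review environments sharing the same PostgreSQL instance:
pg_override shared-pg shared-pg feature_a app
pg_override shared-pg shared-pg feature_b app
```

Each review environment gets its own database on the same server, so only one PostgreSQL deployment consumes cluster resources.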

A closer and more reliable integration with the Kubernetes cluster is required. Octopod runs in the same Kubernetes cluster where it deploys all review environments, and it works through a Service Account, which solves the problem of cluster access and passing Secrets at its root. In GitLab, you can configure seamless integration with the Kubernetes cluster, which also solves the problem of passing secrets. However, if that integration fails for any reason, things get difficult.

Practice. Installing Octopod

There are two main ways to install Octopod:

- For production use: Octopod is installed in the Kubernetes cluster using the official Helm chart.

- For personal use and for getting to know Octopod’s main features: Octopod is installed locally, and the installation is fully automated.

Now we’re going to install Octopod locally. Installation is currently supported on Linux and macOS. On Windows, it requires Windows Subsystem for Linux 2 (Docker Desktop is installed in Windows and integrated with WSL 2; the other components are installed in WSL following the Linux instructions). First of all, you’ll need to install the following infrastructure components:

- Docker Desktop

- Kind

- Kubectl

- Helm 3

- (Windows only) WSL 2
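Before running the installer, it can help to confirm that these prerequisites are on the PATH. The following is a small hypothetical pre-flight check, not part of Octopod’s installer; the function names are made up for illustration, and the only tool-specific command it relies on is helm version --short, whose output starts with the version number (for example v3.14.0+g3fc9f4b).

```shell
#!/usr/bin/env bash
# Hypothetical pre-flight check for the local Octopod installation.
set -euo pipefail

# missing_tools TOOL... -> prints every tool that is not on PATH, one per line
missing_tools() {
  local tool
  for tool in "$@"; do
    command -v "$tool" >/dev/null 2>&1 || echo "$tool"
  done
}

# is_helm3 VERSION -> succeeds if VERSION (as printed by "helm version --short") is 3.x
is_helm3() {
  [[ "$1" == v3.* ]]
}

missing=$(missing_tools docker kind kubectl helm)
if [[ -n "$missing" ]]; then
  printf 'Missing prerequisites:\n%s\n' "$missing"
elif ! is_helm3 "$(helm version --short)"; then
  echo "Helm 3 is required"
else
  echo "All prerequisites found"
fi
```

On Windows, run the check inside WSL 2, where the Linux components are expected to live.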

Then we run the script that will install Octopod:

/bin/bash -c "$(curl -fsSL https://raw.githubusercontent.com/typeable/octopod/master/octopod_local_install.sh)"

An important note about installing Octopod on Windows: WSL 2 may have trouble establishing SSL connections to resources because of the settings of antivirus software acting as a firewall. The issue shows up as a timeout when you try to download data from repositories.

When the installation is complete, we open the browser, go to http://octopod.lvh.me and see the Octopod web interface.

It’s worth noting that lvh.me is a simple DNS service that resolves lvh.me and all of its subdomains to the localhost address 127.0.0.1. It’s convenient because you don’t have to edit /etc/hosts every time.

Now we need to create a new deployment. Octopod ships with predefined parameters for Bitnami Helm charts, and WordPress is used as the example.

Click the NEW DEPLOYMENT button. A window appears where you set the environment parameters. In the simplest case, entering a name, for example wordpress, is enough. However, we’ll also add two App overrides (click ADD AN OVERRIDE for each one):

| Key | Value |
|---|---|
| wordpressUsername | admin |
| wordpressPassword | P@ssw0rd |

These two variables are needed only while the review environment is being created, when they are used to initialize WordPress. After that, you can delete them. Needless to say, we show the user name and password explicitly, in plain text, only for simplicity and for demonstration purposes in the local Octopod installation. In real projects, we use appropriate tools such as HashiCorp Vault.

Click on the SAVE button.

After some time, the review environment will be created.

That completes the creation of the review environment. Now you can open WordPress using the link in the Links column. To open the admin panel, add /admin to the URL (http://wordpress.lvh.me/admin) and enter the user name and password you specified when creating the environment.

If everything works, you can delete the wordpressUsername and wordpressPassword overrides and, if necessary, change the password in the WordPress admin panel.

Conclusion

In our view, review environments are useful not only to developers and testers but also to people with limited technical skills. This is why we’ve done our best to make the entry barrier for Octopod as low as possible, to create a user-friendly, clear interface, and to ensure intuitive and predictable user interaction. These are the reasons behind the path we’ve chosen for developing Octopod and solving the problems described in this post. Octopod certainly differs greatly from GitLab Dynamic Environments in its philosophy and uses different approaches to solve similar problems. However, it fulfils its mission without a hitch: DevOps engineers are now free from the mundane tasks of creating and maintaining review environments, and that alone makes it well worth it.

For our part, we’d be happy to get feedback from Octopod users. We look forward to your suggestions, wishes and PRs on GitHub!
