Current state

We have a multi-module Spring Web MVC 4 application with about 100k lines of code. We use Maven and Azure DevOps. A simple build pipeline builds and runs all the unit tests - about 2.8k of them. Well, honestly, I would call most of them component tests or even integration tests. Let's clear out some definition at the beginning.

What is a unit test?

There are many definitions of a unit test. I like the one by Roy Osherove:

“A unit test is an automated piece of code that invokes the unit of work being tested, and then checks some assumptions about a single end result of that unit. A unit test is almost always written using a unit testing framework. It can be written easily and runs quickly. It’s trustworthy, readable, and maintainable. It’s consistent in its results as long as production code hasn’t changed.”

There is a nice article about unit tests in Martin Fowler's bliki so I won't go into much details.

Let's say that we expect the following from a unit test:

- fast feedback (has to run fast)

- short (small amount of code)

- tests one thing (one logical assert)

- fails for a good reason

What is a component test?

Again a nice article in bliki.

Since our application uses Spring Data JPA, we have lots of repositories. Many of them contain custom query methods. We use Spring Test DBUnit to help us out testing them. The idea is simple - you setup a mockup database (H2), import some test data before a test, run a test, assert and then cleanup the DB end the end. Since this approach creates the DB and some (small) spring context with all necessary beans, I treat these tests as component tests.

These tests run much longer than unit tests.

What is an integration test?

The bliki defines the purpose of integration tests as:

The point of integration testing, as the name suggests, is to test whether many separately developed modules work together as expected.

In our case, we have the the Spring Web MVC controllers. And those controllers expose RESTful API. For those we usually create a full spring context (with mocked H2 database) and use MockMvc to examine them. The beauty of such tests is that you test the API, including all underlying services (business logic) and repositories (persistence). The huge drawback is the execution time. It takes really long time to create Spring context (depends on how large your app is). And in many cases the context is destroyed and created fresh. This all adds up to the total build time.

What tests do I need?

If you are now biased to lean towards just unit tests, as they are fast to run, and want to ditch your component/integration tests as they are slow - hang on ... not so fast. You need The tests at all levels. In a reasonable ratio. In large applications you might split the test runs to fast/slow to speedup the feedback loop. You also need UI driven tests, system tests, performance tests ... Ok, the whole topic about high quality testing of a software is very complex and you might start reading here if you are interested. Let's get back to our topic for now.

The path to improve the situation

There is a nice article written about what you can do. It tells you to re-think whether you need that many integration tests and rewrite them with unit tests. As described above, do it with caution.

It's important to define the test strategy early on. Otherwise the issue will just grow too big to finally conclude that you deal with lots of legacy tests that is expensive to change now. In companies and large projects this happens too often to simply ignore it. Legacy or not, there is still something we can do with reasonable effort and positive return of investment.

Spring context

Due to many reasons our spring context is created in total 17 times during the test run. Every time it takes about 30 seconds to start. It is 8.5 minutes added to the test run. You can make use of the advice from the article above to make sure that the context is only created once. We could save 8 minutes here.

DBUnit

If using DBUnit, each test starts with importing data to DB and ends with cleanup. There are several strategies for this. The Spring Test DBUnit expects these annotations on test method:

@DatabaseSetup(value = "data.xml")

@DatabaseTearDown(value = "data.xml")

The default strategy is CLEAN_INSERT for both Setup and Teardown which in fact deletes all data from affected tables and insert them again. With pure DBUnit, you configure it explicitly, e.g.:

AbstractDatabaseTester databaseTester = new DataSourceDatabaseTester(dataSource);

databaseTester.setSetUpOperation(DatabaseOperation.CLEAN_INSERT);

databaseTester.setTearDownOperation(DatabaseOperation.DELETE_ALL);

Consider changing these strategies to something faster, e.g. instead of CLEAN_INSERT for Setup, use just INSERT. Of course, this will cause problems if your tables are not cleaned up properly by previous tests. The CLEAN_INSERT of Teardown can be replaced with just DELETE_ALL or even faster one TRUNCATE_TABLE. There is one catch with TRUNCATE_TABLE on H2 database, more details in the appendix.

We have about 1200 tests that are running with DBUnit. Just imagine if we saved 100 milliseconds for setup/teardown. It would be 4 minutes saved with relatively low effort.

Build time analysis

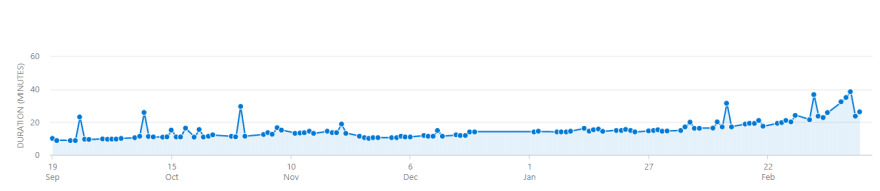

However, our situation was a bit more complicated. Our build execution time grew from about 9 minutes to something about 25 minutes in avarage over the past 6 months.

(the sudden peaks are caused by the fact that we recycle the build agents every now and then and hence the maven cache is gone).

Moreover, we experience some builds taking as long as 45 minutes and some of them even more then 1 hour.

We did a simple analysis of the build logs. The tests are configured to output to the console with TRACE level. There we could see that some operations took suddenly much longer than usual. For instance the DB teardown:

2020-03-13T12:59:21.9660309Z INFO DefaultPrepAndExpectedTestCase - cleanupData: about to clean up 13 tables=[...]

2020-03-13T12:59:29.0545094Z INFO MockServletContext - Initializing Spring FrameworkServlet ''

The first message is the Teardown of DB from one test, the second message is starting a new spring context for another test. Note the timestamps. 7 seconds to cleanup the DB! Remember we do it 1200 times. After analyzing one build output (the build took 45 minutes) we calculated the total time spent on cleaning up the DB - 1800 seconds. 2/3 of the build spent on this. It surely can't take that long.

Of course we already checked the infrastructure. The build agents are pretty decent t2.large EC2 instances with 2 vCPUs and 8GiB RAM powering Ubuntu. Should be OK.

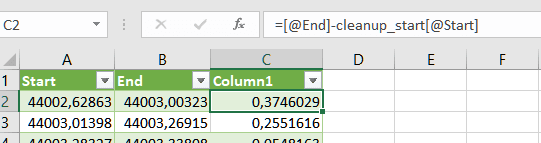

I like quick and easy solutions for complex problems. We analyzed the build output using simple tools - shell and excel. Let's do it step by step:

-

extract the timestamps when cleanup DB starts:

cat buildoutput.log | grep "DefaultPrepAndExpectedTestCase - cleanupData" | sed 's/\([0123456789T.:-]*\).*DefaultPrepAndExpectedTestCase.*/\1/g' > cleanup_start.out(

grepfor the lines matching the substring, then usingsedto only output the timestamp) -

extract the timestamps of the next messages - this will roughly be the end time of the cleanups:

cat buildoutput.log | grep "DefaultPrepAndExpectedTestCase - cleanupData" -A 1 | grep -v "\-\-" | grep -v "DefaultPrepAndExpectedTestCase" | sed 's/\([0123456789T.:-]*\).*/\1/g' > cleanup_end.out(

grepfor the lines matching the substring and 1 line after,grepout the first line and the line with '--', then usingsedto only output the timestamp) -

Load both files to Excel

- choose Data -> From Text/CSV (Alt+A, FT)

- select the first file

cleanup_start.out -

Source and Change type steps should be added automatically, add a few more:

- the new Added column contains

Time.Hour([Column1])*60*60+Time.Minute([Column1])*60+Time.Second([Column1]) - the Removed columns step just deletes the Column1

- now the excel contains the amount of seconds (incl. fraction) elapsed from the day start

- repeat the same for second file

cleanup_end.out - add diff column

Now the diff column contains roughly the duration of the DB cleanup. I simply put a sum at the end of the column to calculate the 1800 seconds mentioned above.

-

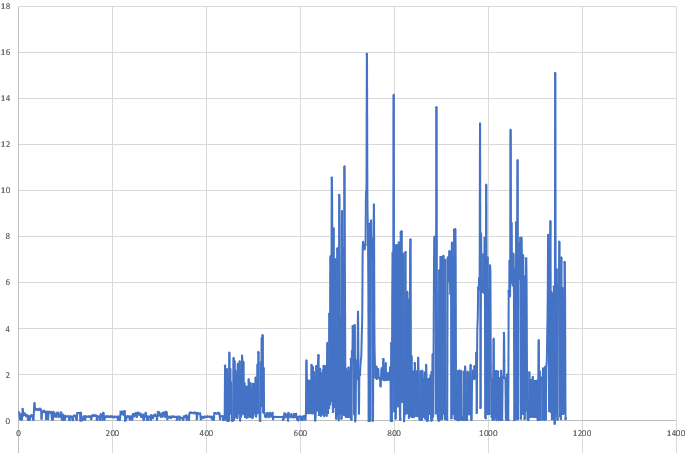

plot a chart

- select the whole third column

- add a new line chart

The chart now shows how log it took the DB cleanup during the build. You can see important information here. It was all fine and then we see sudden blocks of peaks. The pattern looks very suspicious.

Short stare at the chart, some experience and the 'blink' moment - the Garbage Collector!!! This explains why it takes 7 seconds to cleanup the DB. Because the full GC runs in the background.

Improving the situation

We checked the POM of the affected module and ... guess the surefire plugin configuration:

<plugin>

<groupId>org.apache.maven.plugins</groupId>

<artifactId>maven-surefire-plugin</artifactId>

<version>${surefire.version}</version>

<configuration>

<argLine>${surefireArgLine} -Dfile.encoding=UTF-8 -Xmx1024m

</argLine>

</configuration>

</plugin>

Yep, the -Xmx1024m. The maximum Java heap space explicitly set to 1024m. This is not enough for out tests any longer. Even though we set the Xmx in the build pipeline, it is not picked up. This explains everything - when the heap space is reaching the maximum, the full GC is invoked to free up some memory. This radically slows down the test execution.

First thing we did - increase the Xmx to at least 2048m (or leave it empty to default to 1/4 of RAM, whichever is smaller).

Since we are here, let me show you one more thing. If your tests are good enough to run in isolation properly, and you have the HW that is powerful enough, play around with this configuration of surefire:

<configuration>

<forkCount>2</forkCount>

<parallel>classes</parallel>

<threadCountClasses>2</threadCountClasses>

</configuration>

This settings means, that there will be 2 forks of surefire booter (this guy actually executes the tests in isolated JVM), it will use 2 threads to run the tests and it will break down the test suite to classes to distribute them over. Important to remember here is that the threadCountClasses is used within the same (forked) JVM, meaning that there will be 2 threads per fork. So if you run into race conditions, just decrease the threadCountClasses to 1. The tests will still run in parallel, but in 1 thread per fork. And because H2 is typically 1 per JVM, you should be fine. Unless you have another kind shared data outside JVM.

The outcome that we reached - down to 11 minutes build time from 25 minutes, which is only 44% of previous duration.

Conclusion

Understand the tools that you use (e.g. MockMvc, DBUnit, Spring Test DBUnit, Surefire plugin). Analyse your build outputs. Do not close the case with just stating that your application grows, your test suite grows, hence the build time gets longer. Sure this is true, but you should always reserve some time for refactoring and improving your code.

Appendix

DBUnit H2 TRUNCATE_TABLE operation

Truncate table is usually faster operation than delete from. There are some catches though. Read more about it here. H2 won't allow you to truncate table if there are foreign keys to that table. However, there is a special H2 syntax that you can make use of. We implemented the following DBUnit operation:

class H2TruncateOperation extends org.dbunit.operation.AbstractOperation

{

private final Logger logger = LoggerFactory.getLogger(H2TruncateOperation.class);

@Override

public void execute(IDatabaseConnection connection, IDataSet dataSet)

throws DatabaseUnitException, SQLException

{

logger.debug("execute(connection={}, dataSet={}) - start", connection, dataSet);

IDataSet databaseDataSet = connection.createDataSet();

DatabaseConfig databaseConfig = connection.getConfig();

IStatementFactory statementFactory = (IStatementFactory) databaseConfig

.getProperty("http://www.dbunit.org/properties/statementFactory");

IBatchStatement statement = statementFactory.createBatchStatement(connection);

try

{

int count = 0;

Stack<String> tableNames = new Stack<>();

Set<String> tablesSeen = new HashSet<>();

ITableIterator iterator = dataSet.iterator();

String tableName;

while(iterator.next())

{

tableName = iterator.getTableMetaData().getTableName();

if(!tablesSeen.contains(tableName))

{

tableNames.push(tableName);

tablesSeen.add(tableName);

}

}

if(!tableNames.isEmpty())

{

statement.addBatch("SET FOREIGN_KEY_CHECKS=0");

}

for(; !tableNames.isEmpty(); ++count)

{

tableName = tableNames.pop();

ITableMetaData databaseMetaData = databaseDataSet.getTableMetaData(tableName);

tableName = databaseMetaData.getTableName();

String sql = "TRUNCATE TABLE " +

this.getQualifiedName(connection.getSchema(), tableName,

connection) +

" RESTART IDENTITY";

statement.addBatch(sql);

if(logger.isDebugEnabled())

{

logger.debug("Added SQL: {}", sql);

}

}

if(count > 0)

{

statement.addBatch("SET FOREIGN_KEY_CHECKS=1");

statement.executeBatch();

statement.clearBatch();

}

}

finally

{

statement.close();

}

}

}

An example SQL statements:

SET FOREIGN_KEY_CHECKS=0;

TRUNCATE TABLE table1 RESTART IDENTITY;

TRUNCATE TABLE table2 RESTART IDENTITY;

SET FOREIGN_KEY_CHECKS=1;

You can use pass this operation to plain DB unit configuration like this:

AbstractDatabaseTester databaseTester = new DataSourceDatabaseTester(dataSource);

databaseTester.setTearDownOperation(new H2TruncateOperation());

or implement a new DB Operation lookup if you are using Spring Test DBUnit:

public class H2SpecificDatabaseOperationLookup extends DefaultDatabaseOperationLookup

{

@Override

public org.dbunit.operation.DatabaseOperation get(DatabaseOperation operation)

{

return operation == DatabaseOperation.TRUNCATE_TABLE ?

new H2TruncateOperation() :

super.get(operation);

}

}

and use annotations:

@DbUnitConfiguration(databaseOperationLookup = H2SpecificDatabaseOperationLookup.class)

Top comments (0)