Courtesy Adobe Stock

Does front-end development as we know it still exist, or has the role evolved into something we no longer recognise? As with evolution in nature, the evolution of "front-end" has resulted in several distinct flavours and, in my opinion, an identity crisis.

What is a front-end developer anyway?

Traditionally speaking the front-end could be defined as the UI of an application, i.e. what is client-facing. This however seems to have shifted in recent years as employers expect you to have more experience, know more languages, deploy to more platforms and often have a 'relevant computer science or engineering degree'.

Frameworks like Angular or libraries like React require developers to have a much deeper understanding of programming concepts; concepts that might historically have been associated only with the back-end. MVC, functional programming, higher-order functions, hoisting... hard concepts to grasp if your background is in HTML, CSS and basic interactive JavaScript.
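To give a rough sense of what two of these concepts look like in practice, here is a minimal sketch of hoisting and a higher-order function in plain JavaScript. The function and variable names (`greet`, `readBeforeAssign`, `twice`, `addTwo`) are made up purely for illustration.

```javascript
// Hoisting: function declarations are hoisted to the top of their scope,
// so this call works even though the declaration appears further down.
const hoistedResult = greet("FED");

function greet(name) {
  return `Hello, ${name}!`;
}

// `var` declarations are hoisted too, but only the declaration, not the
// assignment, so reading the variable early yields `undefined`.
function readBeforeAssign() {
  const early = counter; // undefined rather than a ReferenceError
  var counter = 1;
  return [early, counter];
}

// Higher-order function: it takes a function as an argument
// and returns a new function that applies it twice.
function twice(fn) {
  return (value) => fn(fn(value));
}

const addTwo = twice((n) => n + 1);
```

Neither idea is complicated once you see it written down, but both tend to be unfamiliar if your day-to-day work is markup, styling and small interactive scripts.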

This places an unfair amount of pressure on developers. They often quit or feel that there is no value in only knowing CSS and HTML. Yes technology has evolved and perhaps knowing CSS and HTML is no longer enough; but we have to stop and ask ourselves what it really means to be a front-end developer.

Having started out as a designer I often feel that my technical knowledge just isn't sufficient. 'It secures HTTP requests and responses' wasn't deemed a sufficient answer when asked what an SSL certificate was in a technical interview for a front-end role. Don't get me wrong, these topics are important, but are these very technical details relevant to the role?

I will be occasionally referring to front-end development as FED from here on.

The problem

This identity crisis is perpetuated by all parties: organisations, recruiters and developers. The role has become ambiguous with various levels of responsibility, fluctuating pay scales and the lack of a standardised job specification within the industry.

While looking at the job market you might find that organisations expect employees to be unicorns and fill multiple shoes. Recruiters can also have unrealistic expectations of the role, as the job specification is often supplied by a Human Resources department with little understanding of what they are hiring for. Lastly, we developers compound this problem ourselves: we accept technical interviews as they are and, should we get the job, we place ourselves under unnecessary pressure to learn the missing skills, instead of challenging recruiters and organisations on what it actually means to be a front-end developer.

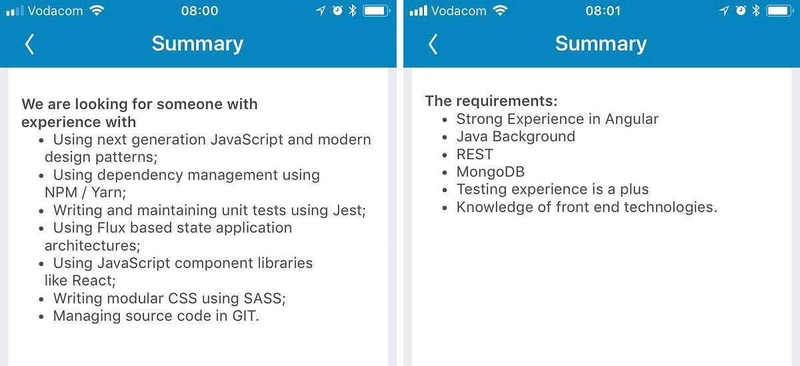

Compare the job posts below from LinkedIn, both titled 'Front-end Developer'. The roles are vastly different: on the left the developer is expected to know Flux architecture and unit testing, while on the right they are expected to know Java and MongoDB.

Comparing two roles on LinkedIn, both labelled "Front-end developer"

These two roles are vastly different and clearly lack a definitive scope for the role.

Why it is important to standardise the role

- Evening out the pay scale: front-end engineers won't get paid what FEDs should and vice versa.

- Alleviates pressure; allowing developers to either focus on engineering products or on creating rich interactive web experiences

- It creates specialists; developers who are really good at CSS, HTML and interactive JavaScript

- Makes job hunting less stressful when it comes to technical interviews and job specifications

Separation of concerns

In order to define the role we have to strip out all the roles that could be considered above and beyond the scope of a FED. The web developer role, for example, should not be confused with the FED role as the one builds applications and the other builds experiences. Other examples include front-end designer, web engineer, back-end web developer etc.

To distinguish these roles we could look at four criteria:

The developer's canvas

If we were to make the assumption that the primary context for front-end is the browser --- where would that leave PHP or C# developers on the spectrum? PHP is a good example; yes it runs on a server but ultimately still delivers data to a UI (i.e. the browser). Both JavaScript and PHP are scripting languages that don't require compilation. Is a PHP developer then considered a front-end developer or a back-end developer?

Tools like GitHub's Electron allow a developer to build cross-platform desktop apps from HTML, CSS and JavaScript. Similarly, tools like Adobe PhoneGap make it possible to package HTML pages with JavaScript as native mobile applications. This essentially enables an intermediate front-end developer to build and publish mobile or desktop apps. Could app development then be added to the scope of a front-end developer's responsibilities?

The lines between back-end and front-end became blurry somewhere between jQuery and Node, and ever since, front-end developers have often been expected to know Node and accompanying packages like Express. These are clearly back-end technologies, so why are we adding them to a FED's job specification?

Before we can standardise the role we have to agree on what the front-end developer's canvas is. In my view, this is confined to the UI of an application and primarily runs in a browser --- the role should not be concerned with building any server side functionality.

The chosen language

A second criterion to consider might be a developer's chosen programming language. It is possible to build website infrastructure in languages like Python and C#, which raises the same question as before: could Python, PHP and C# be considered front-end languages?

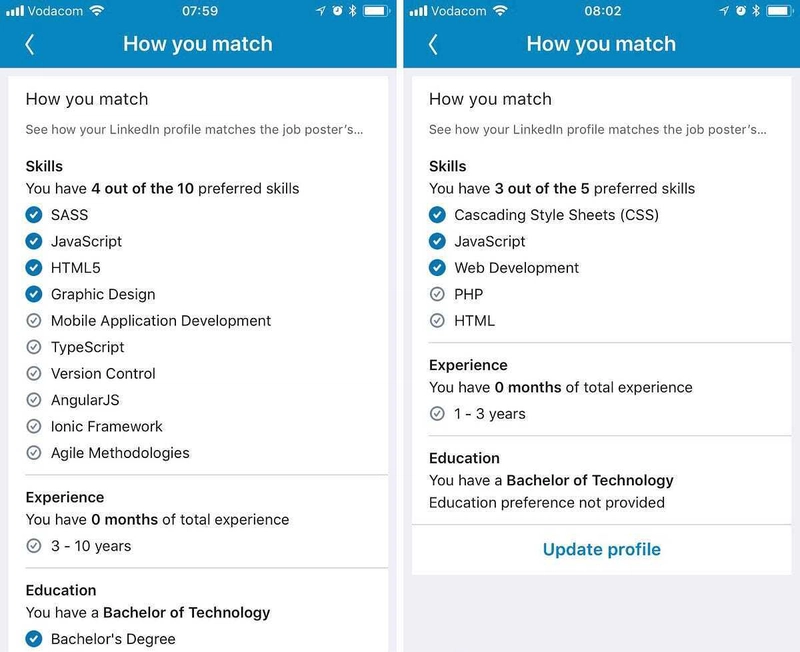

One of the examples below asks for PHP as a required skill, whereas the other expects the developer to know TypeScript.

Comparing the skills required for two roles on LinkedIn, both labelled "Front-end developer"

JavaScript can now do much of what PHP and Python can do, with popular libraries like TensorFlow becoming available to JS developers. Another example is TypeScript (as above), which brings static typing, familiar from languages like Java, to JavaScript. Where, then, do we draw the line in terms of what is considered a front-end language or framework?

Which frameworks or libraries should then be part of the role's scope if we are excluding PHP, C#, Java etc.? jQuery, for example, is a perfect tool for building interactivity for the web, whereas most front-end developers might argue that it's better to learn Vue.

Libraries like React, on the other hand, require a developer to learn concepts that traditionally were not associated with the front-end: setting up webpack and transpilation, deployment processes, understanding higher-order components and, just for fun, you might throw in state management with Redux. The list grows quickly, and although this all uses JavaScript as a language, the understanding that comes with it is often very different.

Many back-end developers have told me that they find CSS very difficult and I agree --- CSS is hard! We forgive back-end developers for not knowing CSS and interactive JavaScript; why then is there an expectation for front-end developers to know back-end technologies?

The question we should ultimately be asking is whether a front-end developer should be dealing with functional or data driven components at all. In my view, the role's language choice should only be HTML, CSS and JavaScript (limited to front-end libraries), primarily used to build interactive components or web projects that consume data from services where necessary.

The skill-level

When does a front-end developer transition into a full-stack developer or a web developer?

Distinguishing this becomes really easy when considering the canvas as well as the chosen language. A full-stack developer is a developer who understands both front-end and back-end (i.e. deals with more than one canvas). A web developer is a developer who can work in multiple frameworks, libraries and languages to build rich data-driven applications. Most FEDs will then most likely move from an intermediate FED role to a senior full-stack or engineering role.

Is it then possible to be a senior front-end developer when considering our role definition up to now? My argument is yes. Simply look at any winning website on Awwwards and you'll quickly agree that this level of interactivity requires a very good understanding of the DOM and DOM manipulation via JavaScript. FEDs then also have an opportunity to focus on learning libraries and APIs that build interactive features (for example HTML canvas, WebGL) as opposed to back-end libraries or frameworks that take them away from what got them started as a FED in the first place.

Other specialties

The last factor to consider is the set of additional requirements that come with front-end roles. I consider this 'baggage', mostly because these requirements often get thrown into the mix in an ad-hoc fashion.

A good example of this is MongoDB (which was a requirement in the listing mentioned earlier). Previously database administration or architecture was a role in itself, so why now are we expecting FEDs to have this skill set on top of everything else?

Another example from an earlier screenshot is the listed graphic design requirement. Personally I am a big advocate of developers understanding design, but expecting them to have it as a skill on top of their other FED skills changes the role into something else (perhaps a front-end designer or full-stack designer).

When considering the added responsibility that comes with having all this knowledge, we have to ask ourselves whether adding them into the mix only complicates the landscape. If today I decided to bring React into my organisation, the developer they choose to replace me with would have to know React as well. If the new developer then decides to add Redux to the mix... well you understand where this is going. To make matters worse, they will keep on hiring front-end developers regardless of the technology used because that is the role required by the department.

So with great power does come great responsibility and it is ultimately up to us as developers to use technology responsibly. Consider the operational impact of a technology stack change and understand that you might be perpetuating an existing problem.

Defining the role

Now that we've unpacked what it means to be a front-end developer, we could write the following job description:

A front-end developer is responsible for building interactive user interfaces or experiences for the web using HTML, CSS and JavaScript.

Let's keep things simple --- a FED should not need to understand functional programming or how SSL works on a micro technical level. This is not to say that they shouldn't learn these concepts; but at the very least it shouldn't be an expectation.

I feel that it is important that we collectively address the confusion surrounding the roles in the development community by helping the next generation of front-end developers understand what it means to be a FED.

This article is purely based on my personal experiences and biased opinions --- I would love to hear your views down in the comments.

Vernon Joyce, a full-stack unicorn.

Top comments (51)

I wouldn't say exactly having an identity crisis, but the barrier to entry has certainly gone up. I'm sure when the web was still in its infancy, you could have a career with just HTML & CSS, but as more people get into it, the bigger competition gets, the more people evolve. Competition leads to innovation, right?

We're still doing the user interface bits of it all, but not only can we put together a design, we can also make it interactive - heck, we can make the whole thing run only on the front-end by only communicating with back-end APIs for data exchange. There's some seriously cool stuff you can do as a front-end developer now that you couldn't back then. This isn't a bad thing. The job is ever-evolving and so should developers be.

I do agree with you and I am myself a big advocate of continual development and learning. The way I see it there is an opportunity to branch into either building really good interactive experiences, or you could branch into building the full-stack. So yes for sure you can't just stick to HTML and CSS (although perhaps this is more a web admin as opposed to a FED).

A recruiter recently told me that "React only really started picking up at the beginning of this year", this of course in relation to South Africa, but there seems to be an expectation to know React really well so it becomes difficult to get into that space.

To me the big thing is that we have to start calling things for what they are. I consider myself a full-stack developer but I am finding it hard to get into positions that are considered "front-end development" because of, as you mentioned, the barrier to entry. The crux of it is: let's not call it front-end development but let's call it by what it is (which could be web engineering, web developer, full-stack developer).

This might also be a reflection of the industry in my country where a lot of organisations are recruiting on an international standard whereas the industry itself has a lot yet to learn.

Thanks for reading bud!

I'm also a full-stack developer coming from years of PHP background and unfortunately there aren't many full-stack positions, so I have to pick between front-end or back-end (currently am at front-end). The problem with being full-stack is that full-stacks usually are (and I certainly am) a jack of all trades, master of none type of developers, so I'm always a step or two behind just pure front-end developers.

It's cool you mention React, which is something I just very recently started experimenting with to keep myself in the loop of things (So far, personally, prefer Vue.js).

I don't think one can treat our jobs as merely jobs, they are more like lifestyle choices, because in order to do what we do and keep up with the fast pace of change in our industry we can't just clock out at 5 and then not think about work - more often than not, we need to experiment with new stuff at home, if we can't at work, because otherwise we become obsolete and soon find ourselves in the unemployment line.

As far as title goes, if anybody asks, I'm a software engineer. Great post by the way, I love this topic.

You sound like someone I'd enjoy working with! Clear and succinct (and I happen to agree with all of it, including the quality of the OP).

To quote a Nicolas Cage skit; "That's high praise".

The full phrase is "A jack of all trades is a master of none, but oftentimes better than a master of one"

As a full-stack developer myself, I've found this to be very true. Sure, I might not know every front-end framework inside out, but I've got knowledge elsewhere that someone who's only ever focused on the front-end won't have.

Yes, but tell that to the job market. Apparently, at least in EU, it's very trendy to pay full-stacks less money than those who are specialized in either front-end or back-end only. It's unfortunate.

Because the businesses wanting "full stacks" are cheap and view their tech as an overhead/commodity. For some reason people are willing to work for them. If I am building the back end I would laugh and walk off if you started going on about me touching the front end. It's like hiring a data scientist and then forcing them to do the data engineering work also. Ridiculous and hokey.

It’s a dilemma and not a new one. Job postings have been littered with unrealistic expectations for as long as I can remember. They’re often poorly written, and poorly understood by those who are writing them. So I take them with a grain of salt, first of all.

Now on the one hand I don't think I want to be typecast as frontend, or backend. But you need some sort of focus to what you're doing so the term fullstack still bothers me. The day is only so long. Put too many items on that tech requirements list and you are never keeping up with them.

I myself hate having to be labelled as either front-end, back-end or full-stack, but I feel that we don't really have a choice. Being a "unicorn" is both a blessing and a curse in that we are forced to pick a side when moving into new positions.

To your point around having only so many hours in the day - I find myself having this problem too. I WANT to learn about GraphQL right now but I can't seem to find an hour to sit down and get stuck in. Another problem with this is that I tend to learn in fragments and I never get really good at a single thing and this poses a problem for me when I look at some of the job postings for front-end developers.

How do we make the time to stay relevant?

"How do we make the time to stay relevant?"

Ha! You're asking the father of The World's Most Precocious Two-year Old Girl. I spend most of my "time" trying to figure out how to pack a week's worth of work into her two hour nap on Sunday. Mostly I just stare wistfully at blogposts that are discussing the things I'd like to be doing. Or taking a nap myself.

I say just do what you love and don't worry about what other people want you to be. When someone hires you it's just that. They did not re-hire the last person who had the job, they didn't hire some mythical know-it-all who doesn't exist. They hired YOU. So give them the best you can be because that's an experience they can't get from anyone else. There's your relevance.

Please, please, please for the love of all things do not use that god-forsaken term ‘front end designer’. If your job involves you using code, you are a developer. End of story. Yes, your role may require some design knowledge, but that doesn’t make you a designer. A designer – as I’m sure you appreciate – is someone who designs solutions to problems; usually in a tool like Sketch, XD or if they hate their developer colleagues, Photoshop.

You’re absolutely spot on that we need to reconsider the job title of front end developer. But I would strongly suggest that the title UI developer fits better with the semantics of what people do when we’re talking about specialists in HTML, CSS and interactional JS.

In addition, using the word designer only gives ammunition to the gatekeepers in this industry who proclaim people who only know HTML, CSS and interactional JS ‘aren’t real developers’. We have to be really careful with the language we use as it can – and does – have unintended consequences.

Excellent comment. I think this is really, really important to note.

I'm currently employed as a "Full-Stack Developer", which basically includes (but is not limited to) replicating mocks using HTML and CSS in an Angular application; optimizing the Angular application with caching strategies, bundling, etc.; maintaining and adding features to a monolithic Spring application; and refactoring the Spring application into a micro-services based architecture. All of this includes TDD, coverage and benchmarking regularly. There is no CI/CD setup, so I'm basically the lead on that endeavor for the refactoring project, and did I mention this is my first job as a developer? The job description for this position was basically "Java developer with experience in micro-services and JavaScript". Don't get me wrong, I'm really glad I found this job. It's got great pay and benefits and I live in NYC, which has always been a dream location for me, but this is way more than I expected for my first developer job. I was bartending just last year!

I'm a front-end developer specialised in scalable and modular HTML/CSS architectures, and my JS skills are not advanced enough to let me build a PWA from scratch. I think there are many specialisations and they should not be mixed. A lot of JS FEDs aren't able to build scalable CSS and HTML architectures (most of them don't know about 60% of CSS's possibilities), so that's why there are people focused on the UI (which doesn't mean building only JS components).

Thanks Mattia, I can very much relate to your situation. I have 12 years experience in CSS and I feel that’s often taken for granted.

I’ve recently started building scalable SASS architectures (design systems or UI libraries) and you can only do this if you have a really good understanding of SASS and CSS.

I think there is a speciality space in CSS and HTML especially when building large projects.

I'm in that dilemma. For most of my career I've been working on the Front End, but during this journey towards making things better and automating things that I don't like to do, I ended up with a solid understanding of NodeJS and data bases.

I still call myself a FED, but when looking for a job, it is hard to describe the kind of value I can bring to the table.

"I still call myself a FED, but when looking for a job, it is hard to describe the kind of value I can bring to the table."

This really resonates with me. Matching your skills with a job spec is hard because really there aren't a lot of roles that exist for people with comb-shaped skills.

As you rightfully noticed, some parts of what's done on the backend (e.g. with PHP) constitute the UI delivered to the browser, so these parts are relevant to frontend jobs. Some of that UI requires building network APIs, so that's relevant. UI puts requirements onto the application state persisted somewhere on the servers, so general API design and database structure are relevant, too.

Given the complexity of the modern UIs the browser-side is no longer enough.

Titles are hard. Some companies say "UI Engineer" which is closer to what the modern frontend has become, i.e. full-stack implementation of UI features. The client-server architecture is an implementation detail of a web UI so it's silly to split responsibilities based on technologies used. Technologies are chosen to implement products, not vice versa.

So I do agree with you, in some contexts some backend languages are very relevant.

Personally I started learning PHP because I had to build forms to send emails and later I needed to make changes to Wordpress templates.

But this creates a problem as I actually don’t understand how PHP works at its core. I would fail interview questions and I have no desire to deep dive into the language. I might be able to do the job but since I don’t understand a fundamental programming concept I might be deemed not qualified for the role.

Also I like UI engineer as a title!

Tech deep dives are off the table for me. I only do conversational style interviews. I try to feel them out as much as possible but if I get in an interview and it slips into deep-dive I just say "I don't know" to everything to end it ASAP.

Tech deep dives are a really bad sign. At the least you are being treated as a commodity.

Hopefully you will take your nicely defined scope for a FED and help HR out next time you need one wherever you work.

One thing I have noticed in doing this for a few years, getting hired and being involved in a hiring process, those in charge have no idea what is going on really from a team organization and skill standpoint.

The "I want a person who can do everything" approach makes their jobs easier. It does little, however, for overall effectiveness outside of small teams doing very complex work. It's a cop-out for not being able to build an actual team of people with expertise in differing technologies. HR also needs criteria to filter candidates on, so they throw every keyword in the business into the job description. Then, depending on how much they want to pay, they will throw junior, mid or senior on there with a smack of programmer, developer or engineer.

As others have said, this is primarily a disconnect between HR/Management and the teams that really know what the skills are that they need. The job title I have is “Director of Software Development”, but if anyone asks what I do for a living, I say “Software Engineer“. I’m what would probably be classified as a full-stack developer. Which title/description best describes me and my 25+ years of experience? Depends.

The model that has worked for us (IT division at a large research university) is to allow the TEAM to come up with the job descriptions. The only thing HR does is vet the language to make sure we are as inclusive to as wide a group of applicants as possible. Our technical leadership will occasionally adjust the postings based on initiatives we think we might need in 2-3 yrs time.

I have interviewed a lot of people over my career, and I have never missed on a person who in their interview said “I don’t know technology Z, but I know technologies W, X, and Y, which are similar, so there is no reason I can’t learn Z”.

Our industry moves too fast to get hung up on labels. Be a good co-worker, encourage those who are struggling, accept help when offered, learn from defeat and share success. And always learn. The rest will take care of itself.

I love this discussion! I am really struggling with where I currently identify as a developer. I primarily do custom-built WordPress sites and am most fluent in HTML, CSS, and JavaScript. However, my role has demanded that I learn more about the 'back end' technologies. It's frustrating because I'm not sure which to tackle first, if at all. I would love to expand my skill-set but am torn over which direction to take my dev skills, since there is no clear standard. It also feels extremely unrealistic to be knowledgeable in all of these different areas AND still devote enough time to being a great UI designer (since I am passionate about both design and dev).
