As with explaining any word, let’s start with its etymology and definition. According to Wikipedia, disinformation is borrowed from the Russian word dezinformatsiya (дезинформа́ция), derived from the title of a KGB black propaganda department.

Disinformation is false information spread deliberately to deceive.

To fully understand disinformation, especially in the modern age, we need to look at the key factors of any successful disinformation operation:

- creating disinformation (what)

- the motivation behind the op, or its end goal (why)

- the medium used to disperse the falsified information (how)

- the actor (who)

Finally, we’ll look at how you can use disinformation techniques to maintain OPSEC.

To break the monotony, I will also use the term “information operation” and the shortened forms “info op” and “disinfo”.

Creating disinformation

Crafting disinformation is by no means a trivial task. The quality of a disinformation sample is often a strong indicator of the actor’s sophistication, i.e., is it a 12-year-old troll or a nation state?

Well-crafted disinformation always has one primary characteristic: plausibility. The disinfo must sound reasonable; it must leave the target believing it’s likely true. To achieve this, the target, be it an individual, a specific demographic or an entire nation, must be well researched. A deep understanding of the target’s culture, history, geography and psychology is required, along with situational awareness of the target’s current circumstances.

There are many forms of disinformation. A few common ones are staged videos / photographs, recontextualized videos / photographs, blog posts, news articles and, most recently, deepfakes.

Here’s a tweet from the grugq, showing a case of recontextualized imagery:

Disinformation.

The content of the photo is not fake. The reality of what it captured is fake. The context it’s placed in is fake. The picture itself is 100% authentic. Everything, except the photo itself, is fake.

Recontextualisation as threat vector. pic.twitter.com/Pko3f0xkXC

— thaddeus e. grugq (@thegrugq) June 23, 2019

Motivations behind an information operation

I like to broadly categorize any info op as either proactive or reactive. Proactive disinformation is spread to influence the target before or during an event; this is especially common during elections.[1] In offensive information operations, the target’s psychological state can be manipulated by spreading fear, uncertainty and doubt, or FUD for short.

Reactive disinformation is when the actor, usually a nation state, screws up and wants to cover its tracks. A fitting example is Malaysia Airlines Flight 17 (MH17), which was shot down while flying over eastern Ukraine. This tragic incident has been attributed to Russian-backed separatists.[2] In response, Russian media disseminated a number of alternative, some even conspiratorial, theories.[3] The number grew as the investigation by the Dutch-led Joint Investigation Team (JIT) pointed towards the separatists. The idea was to muddle the information space with these theories so that correct information takes a credibility hit.

Another motive for an info op is to control the narrative. This is common in totalitarian regimes, where the government decides what the media portrays to the masses. The ongoing Hong Kong protests are a good example.[4] According to NPR:

Official state media pin the blame for protests on the “black hand” of foreign interference, namely from the United States, and what they have called criminal Hong Kong thugs. A popular conspiracy theory posits the CIA incited and funded the Hong Kong protesters, who are demanding an end to an extradition bill with China and the ability to elect their own leader. Fueling this theory, China Daily, a state newspaper geared toward a younger, more cosmopolitan audience, this week linked to a video purportedly showing Hong Kong protesters using American-made grenade launchers to combat police. …

Media used to disperse disinfo

As the totalitarian example above shows, national TV channels and newspapers play a key role in mass influence ops. Their popularity guarantees reach.

Twitter is another obvious example. Because accounts are easy to create and activity can be generated programmatically via the API, Twitter bots are the go-to choice for info ops today. Essentially, an actor creates “discussions” amongst “users” (read: bots) to push their narrative(s). Twitter also provides analytics for every tweet, giving actors real-time insight into what sticks and what doesn’t. Twitter was used this way in the MH17 case discussed above, where Russia employed its troll factory, the Internet Research Agency (IRA), to create discussions about alternative theories.

In India, disinformation is often spread via YouTube, WhatsApp and Facebook. Political parties actively invest in creating group chats to spread political messages and memes, and have volunteers whose sole job is to sit and forward messages. Beyond political propaganda, WhatsApp has also become a channel for fake news. In most cases, this disinformation has no apparent motive, or the motive is hard to determine simply because the source is impossible to trace, lost in forwards.[5] This is a difficult problem to combat, especially given the nature of the target audience.

The actors behind disinfo campaigns

I doubt this requires further elaboration, but in short:

- nation states and their intelligence agencies

- governments, political parties

- other non/quasi-governmental groups

- trolls

This essentially sums up the what, why, how and who of disinformation.

Personal OPSEC

This is a fun one. Now, it’s common knowledge that STFU is the best policy. But sometimes this might not be possible, because after all, inactivity leads to suspicion, and suspicion leads to scrutiny, which might compromise your OPSEC. So if you really have to, you can feign activity using disinformation. For example, pick a place and work subtle details about its weather, local events or regional politics into your disinfo. Assuming this is Twitter, you can tweet stuff like:

- “Ugh, when will this hot streak end?!”

- “Traffic wonky because of the Mardi Gras parade.”

- “Woah, XYZ place is nice! Especially the fountains by ABC street.”

Of course, if you’re a nobody on Twitter (like me), this is a non-issue for you.

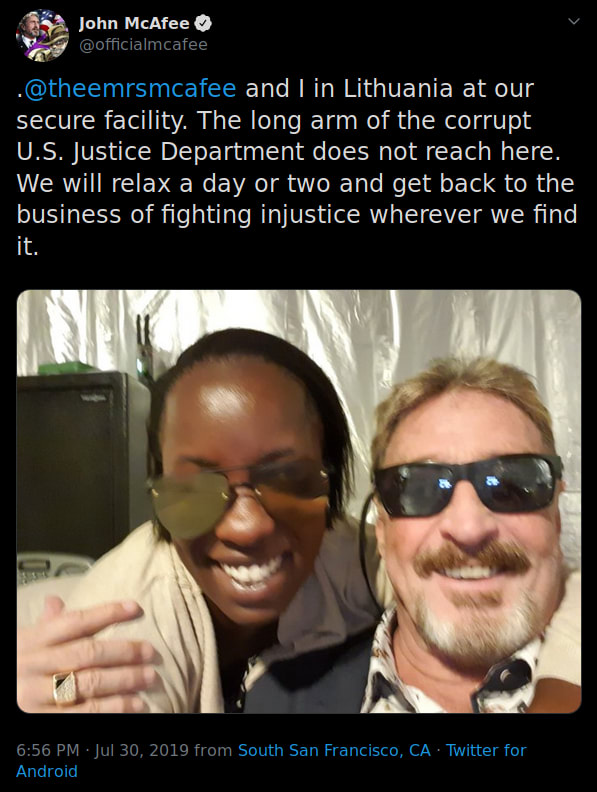

And please, don’t do this:

Conclusion

The ability to influence someone’s decisions/thought process in just one tweet is scary. There is no simple way to combat disinformation. Social media is hard to control. Just like anything else in cyber, this too is an endless battle between social media corps and motivated actors.

A huge shoutout to Bellingcat for their extensive research in this field, and for helping folks see the truth in a post-truth world.

[1] This episode of CYBER talks about election influence ops (features the grugq!).

[2] The Bellingcat Podcast’s season one covers the MH17 investigation in detail.

[3] Wikipedia section on MH17 conspiracy theories.

[4] Chinese newspaper spreading disinfo.

[5] Use an adblocker before clicking this.
