Docker is a DevOps platform for creating, deploying, and running applications in containers. With Docker, developers can package an application together with all of its dependencies and libraries and ship it as a single unit.

This helps development and operations teams avoid the environment mismatches that used to cause problems. Developers can focus on features and deliverables rather than on infrastructure compatibility and configuration. It also supports a microservices architecture, which helps teams build highly scalable applications.

Why Docker?

Docker is an open-source project that has changed how software is built and shipped by providing a practical way to containerize applications. Over the last few years, this has generated a lot of enthusiasm for containers at every stage of the software delivery lifecycle, from development to testing to production. Docker became a mainstream platform within a few years of its debut in 2013. Major vendors including Amazon, Cisco, Google, Microsoft, Red Hat, and VMware have created the Open Container Initiative to develop common standards for container formats and runtimes.

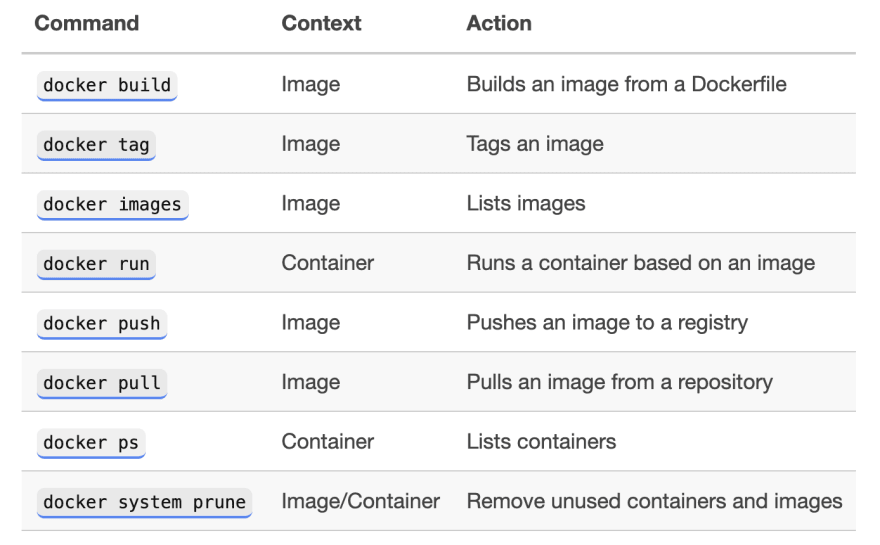

Here's an overview of a few commonly used commands.

Image source: Tania Rasciai

Here are some key benefits of using Docker:

- You get a high level of control over changes because they are made through Docker containers and images. You can return to a previous version whenever you want.

- With Docker, a feature that works in one environment can be expected to work in the others as well, because the same image runs everywhere.

- Used as part of a DevOps workflow, Docker simplifies defining an application topology made up of several interconnected components.

- Paired with an orchestrator such as Kubernetes, it makes load-balancing configuration easier through Ingress and built-in Service concepts.

- Combined with an orchestrator, it lets you run CI/CD workloads in containers, which can be more convenient than using Docker on its own.

For more detail, read our full article, 'The role of Docker in DevOps'.

Docker can serve as a common interface between developers and operations staff, in line with DevOps principles, removing a source of friction between the two teams. It also encourages using the same image and binaries at every step of the pipeline. The most significant advantage is being able to deploy a thoroughly tested container without environment differences, which greatly reduces the chance that errors creep in between build and deployment.

You can migrate applications into production with far less friction. Something that was once a tedious task can now be as simple as:

docker stop container-id; docker run new-image

And if something goes wrong when deploying a new version of the application, you can quickly roll back or switch to another container:

docker stop container-id; docker start other-container-id
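As a rough sketch, the swap and rollback described above can be wrapped in a small shell function. The container name `web`, the image tags `myapp:1.0` and `myapp:1.1`, and the `DRY_RUN` switch are illustrative assumptions, not part of Docker itself. With `DRY_RUN=1` (the default here) the script only prints the commands it would run.

```shell
#!/bin/sh
# Sketch only: "web" and "myapp:*" are hypothetical names.
# DRY_RUN=1 (default) prints each command instead of executing it.
DRY_RUN="${DRY_RUN:-1}"

run() {
    if [ "$DRY_RUN" = "1" ]; then
        echo "$*"
    else
        "$@"
    fi
}

# Stop and remove the named container, then start a new one from the given image.
deploy() {
    name="$1"
    image="$2"
    run docker stop "$name"
    run docker rm "$name"
    run docker run -d --name "$name" "$image"
}

deploy web myapp:1.1   # roll forward to the new version
deploy web myapp:1.0   # roll back by redeploying the previous tag
```

In this sketch a rollback is just a deploy pointed at an older tag, which is one reason to keep previously tagged images available.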

Let us now look at how Docker fits into a CI/CD pipeline. To begin with, Docker is supported by most build systems, such as Jenkins, Bamboo, and Travis CI. Typically, each project has a Dockerfile checked into its code repository along with the rest of the application code. The Dockerfile contains the instructions for building the Docker image. Once a change is checked in to GitHub, Jenkins pulls the code and uses the Dockerfile in the repository to build the Docker image.
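For illustration, the Dockerfile-in-the-repository step might look like the sketch below. The Python base image, the file names, and the `myapp` image name are assumptions chosen for the example; a real project could use any stack. The script writes a minimal Dockerfile into a scratch directory and prints the build command a CI server would run after checking out the code.

```shell
#!/bin/sh
# Sketch only: the app layout (requirements.txt, app.py) and the
# image name "myapp" are hypothetical.
set -e

# Stand-in for the checked-out repository.
repo_dir="$(mktemp -d)"

# A minimal Dockerfile, as it might be checked in next to the application code.
cat > "$repo_dir/Dockerfile" <<'EOF'
FROM python:3.12-slim
WORKDIR /app
COPY requirements.txt .
RUN pip install --no-cache-dir -r requirements.txt
COPY . .
CMD ["python", "app.py"]
EOF

# The build step the CI server would run against the checked-out repository.
build_cmd="docker build -t myapp $repo_dir"
echo "$build_cmd"
```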

You may use a supported Docker plugin for this purpose. After building the new Docker image, Jenkins tags it with a new build number (in this example, 1.0). If the build succeeds, the image can then be used to run tests.
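A minimal sketch of the build, tag, and test steps follows. It assumes the CI server exposes a build counter in `BUILD_NUMBER` (Jenkins sets this variable) and that the image can run its tests through a hypothetical `./run-tests.sh` script. The `myapp` name is illustrative, and commands are printed rather than executed unless `DRY_RUN=0`.

```shell
#!/bin/sh
# Sketch only: "myapp" and ./run-tests.sh are hypothetical.
# DRY_RUN=1 (default) prints each command instead of executing it.
DRY_RUN="${DRY_RUN:-1}"

run() {
    if [ "$DRY_RUN" = "1" ]; then
        echo "$*"
    else
        "$@"
    fi
}

# Build the image as name:tag, then run the test suite inside it.
# The test step only runs if the build succeeded.
build_and_test() {
    image="$1:$2"
    run docker build -t "$image" . &&
        run docker run --rm "$image" ./run-tests.sh
}

# Use the CI build number if present, else fall back to "1.0" as in the text.
build_and_test myapp "${BUILD_NUMBER:-1.0}"
```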

Once the tests pass, the image can be pushed to an image repository, known as a Docker registry, either one internal to the company or an external one such as Docker Hub. The registry can then be integrated with a container hosting platform like Amazon ECS to host the application. This entire cycle of automated actions, from making a change in the application to building, testing, releasing, and finally deploying to production, makes up a CI/CD pipeline.
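The gate between testing and publishing can be sketched as follows. The registry host `registry.example.com`, the image name, and the `TEST_CMD` placeholder are assumptions for illustration. The point is only that `docker push` runs when, and only when, the test step exits successfully.

```shell
#!/bin/sh
# Sketch only: registry.example.com and "myapp" are hypothetical.
# DRY_RUN=1 (default) prints each command instead of executing it.
DRY_RUN="${DRY_RUN:-1}"

run() {
    if [ "$DRY_RUN" = "1" ]; then
        echo "$*"
    else
        "$@"
    fi
}

# Placeholder for the real test step; set TEST_CMD=false to simulate a failure.
TEST_CMD="${TEST_CMD:-true}"

# Tag the local image for the registry and push it, but only if the tests pass.
publish() {
    local_image="$1"
    remote_image="$2/$1"
    if $TEST_CMD; then
        run docker tag "$local_image" "$remote_image"
        run docker push "$remote_image"
    else
        echo "tests failed, not pushing $local_image" >&2
        return 1
    fi
}

publish myapp:1.0 registry.example.com
```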

The final step is to deploy this image in production. Major cloud providers such as Amazon, Google, and Microsoft Azure all support containers. Google Kubernetes Engine (formerly Google Container Engine) runs containers in production on Kubernetes clusters.

Kubernetes is a container orchestration technology and an alternative to Docker Swarm. AWS offers ECS (Elastic Container Service, originally EC2 Container Service), which provides another mechanism for running containers in production.

On-premises solutions like Pivotal Cloud Foundry offer PKS (Pivotal Container Service), which again uses Kubernetes underneath. Finally, Docker’s own container hosting platform, Docker Cloud, uses Docker Swarm underneath to orchestrate containers. As you can see, containers and Docker are widely supported, and there are many options for hosting containers online.
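To give a feel for how rolling out a new image differs across these hosting options, here is a hedged sketch that prints one plausible rollout command per platform. The deployment, service, and cluster names (`myapp`, `prod`) and the registry host are hypothetical, and nothing is executed. A real setup would also need credentials and platform-specific configuration.

```shell
#!/bin/sh
# Sketch only: "myapp", "prod", and registry.example.com are hypothetical.
# Prints a rollout command for the chosen platform; nothing is executed.

rollout_cmd() {
    platform="$1"
    image="$2"
    case "$platform" in
        kubernetes)
            # Point the "myapp" container of the deployment at the new image.
            echo "kubectl set image deployment/myapp myapp=$image" ;;
        swarm)
            # Update the Swarm service to the new image.
            echo "docker service update --image $image myapp" ;;
        ecs)
            # ECS reads the image from the task definition, so the image
            # argument is unused here; this just triggers a fresh deployment.
            echo "aws ecs update-service --cluster prod --service myapp --force-new-deployment" ;;
        *)
            echo "unknown platform: $platform" >&2
            return 1 ;;
    esac
}

for p in kubernetes swarm ecs; do
    rollout_cmd "$p" registry.example.com/myapp:1.0
done
```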

Conclusion

Because containers virtualize the operating system rather than the hardware, containerization allows many more applications to run on the same virtualized infrastructure. In development and testing, applications can be built, tested, and deployed much more quickly. Container usage is growing rapidly and has already been adopted by many companies.

Once an application is containerized, developers can deploy the same container in different environments. Because the container stays the same, the application runs identically in each environment, without dependency conflicts.

Containers and Docker give developers the freedom they want, along with ways to build scalable apps that adapt quickly to changing business conditions. Given its adoption by companies ranging from small startups to large enterprises, Docker is likely to keep growing in importance in the DevOps space.
