In the world of programming write code as today is your last day on earth is not the main thing in the art of programming. Beyond understand what's the problem that our code will solve, we have other variables that we need to take care when we are writing code. For example:

- Complexity

- Maintainability

- Design Patterns

- Algorithms

- and beyond...

One of the most important things and the main topic of this article is the performance of our application when we are implementing an algorithm.

The performance of our algorithm helps us to make more user-friendly our application, letting the user invest only the necessary time to do the tasks and focus on other things before the go bored and close the window (browser or anything else). No matter how fast and proficient was the device from where the user is using our app. In today's world, the time is the most precious thing that we have.

Are many variables to take care when we talk about performance like the use of memory, disk space, execution time and another long beyond.

One of the most important tools to determinate the performance of our algorithm is the Big-O Notation.

Big O Notation

The Big-O Notation is a tool that let us determinate the complexity of an algorithm, letting us take a measure of the performance of a specific algorithm. This tool measures the worst case scenario where the algorithm goes to the highest point of demand.

The main Big-O terms are these:

- O(1) -> Constant

- O(n) -> Linear

- O(log n) -> Logarithmic

- O(n ^ 2) -> Quadratic

- O(2 ^ n) -> Exponential

O(1): Constant Complexity

The constant complexity is the easier, all the algorithms who, no matter the size of the input or output, the execution time and the resources used will be always the same, have this complexity. No matter how many times or where the algorithm is executed, this one will have exactly the same behavior all the time. For example:

As we see, no matter how big is the size of the array given as an argument, the behavior will always be the same. The only thing that might change will be the output, this because the array might not have the same data stored all the time.

O(n): Linear Complexity

We say that an algorithm has linear complexity when his execution time and/or resources used are directly proportional (grows up in a linear way) to the input size. For example:

To get an easier way to understand this complexity, we can compare this to an activity that we do every day (or mostly), for example, read a book or watch a movie. The time that we spend reading a book or watching a movie, depends on the number of pages of a book and the duration of the movie. For example, if a movie has a duration of two hours, you'll spend two hours watching a movie. If the book has one hundred pages and you read fifty pages in one hour, you'll spend two hours to read all the book.

O(log n): Logarithmic Complexity

This complexity is present where the impact of an algorithm is directly proportional to the logarithmic result of the input size. In other words, when we have an input size of 10 and we need 1 second to accomplish the task with our algorithm, we need to do exactly the same task with an input size of 100 in 2 seconds, with an input size of 1000 in 3 seconds and so on.

One interesting example is the binary search. In this algorithm, we divide the array (previously ordered) into two parts. We take the middle index as a reference to get the value at the middle of the array. If the number in that spot is equal to the number we are looking for, we return the middle index adding up the prefix that we use to find the spot where the number we are looking for is stored. Thanks to that, if the number is bigger than the number stored at the middle of the array, we look for the number in the right side of the array, instead, if is lower, we look in the left side of the array. After that, we use the prefix to use as the new prefix in the next iteration as a recursive way. We repeat this loop until get the given number.

O( n^2 ): Quadratic Complexity

The quadratic complexity is present when the impact of the algorithm is directly proportional to the square of the input size. This means, if we have an array as input with a length of 4 spots and we want to compare to look if the array has repeated items, we need to do 16 comparations to accomplish our task. This complexity is common in sorting algorithms like bubble sort, insertion sort, and the selection sort.

In this function, the complexity can grow up if we add more for loops. This could make us go to an O(n * n) complexity.

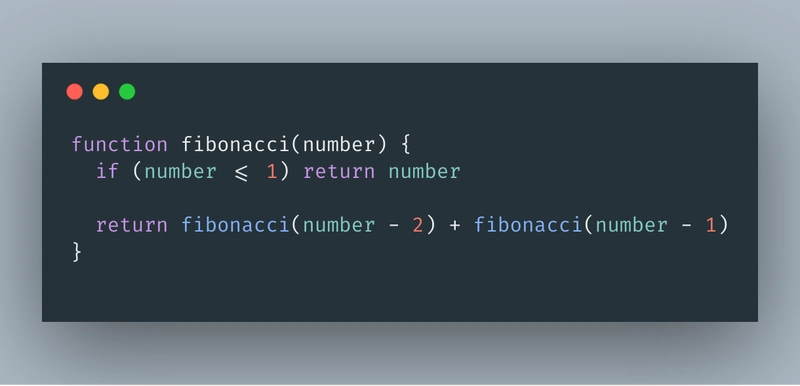

O( 2^n ): Exponential Complexity

When an algorithm has exponential complexity, this means that the complexity will double with each additional item in the input. If 10 items take 100 seconds, 11 would take 200 seconds, 13 would take 400 seconds and so on.

This doesn't mean that only exists O( 2^n ), this is just for explanation purpose. Can grow to O( 3^n ), O( 4^n ) and so on.

Wrapping Up

I hope this helps you to understand more the Big-O Notation, it's a great help to measure the complexity of an algorithm, exists many more tools to do this but this is one of the more common.

If you have questions or want to correct some mistakes, feel free to leave your comment below. I'm leaving below a little list of resources about Big-O Notation.

Top comments (24)

I love this post. Very useful. Thanks Carlos!

Thanks for your words. I love to help! Greetings :)!

Awesome Post! Really helped my general understanding of Big O. that said, I did have a question regarding the O(n2) definition.

If you have a function that has 3 nested for loops, as opposed to 2 (as you have in your example) does the complexity then become O(n3)? I can't seem to see anything online that would say so...

Hi! Thanks, I really appreciate it!

Yes, absolutely. If you make your for-loops grows with the same input, you can have something like an array of 4 spots and go through him three, four or five times growing the complexity in something like O(4 3, 4, 5 ...n ).

If you're going in a recursive way, the complexity it doesn't is O(n n ) and becomes exponential.

awesome!

That makes a tonne of sense! Thanks for the reply 😊

You're welcome. I'm glad to helped you 🙏

Wonderfully written!

One suggestion - Next time when you describe something which involves writing code codes, don't use the screenshots, written code is more useful than the screenshots.

Thank You!

True, sorry about that. Thanks for your suggestion, I'll do it in the next article! 😁

awesome !

Good post :)

Would the exponential example not be 100, 200, (...400), 800 though?

Yes, sure!

Sorry, my bad. I'll fix it, thanks! ;)

No problem...did want to post back though since I saw the article was updated.

In the example you laid out, I think an algorithm running in O(2^n) time would be more like this:

10 items - 100s

11 items - 200s

(12 items - not listed - 400s)

13 items - 800s

Just wanted to point it out, since the typo makes the algorithm sound more performant than it really is.

A good read. Thank you.

Very well put!

What a super clear description.

Thank's. I appreciate :)

Thanks, this post really helped me alot!

You're welcome. It's good to help!

Thanks, this post really helped me a lot!