Should I use Selenium or something else? Should I make a list of everything that needs to be automated? Should I ask for help?

Sound familiar?

I know the feeling. I felt the same way.

Whether you are a beginner or an expert, chances are some of these points will help you bring your automated testing skills to the next level.

Here are 10 unexpected ways to help you improve your automated tests.

1. Embrace the ever-changing technology trends

Coding your own automated testing framework can make your life a living hell. So, how about you avoid perdition and accept innovation?

The worst part is that you're not going to realize that…at least until it's too late.

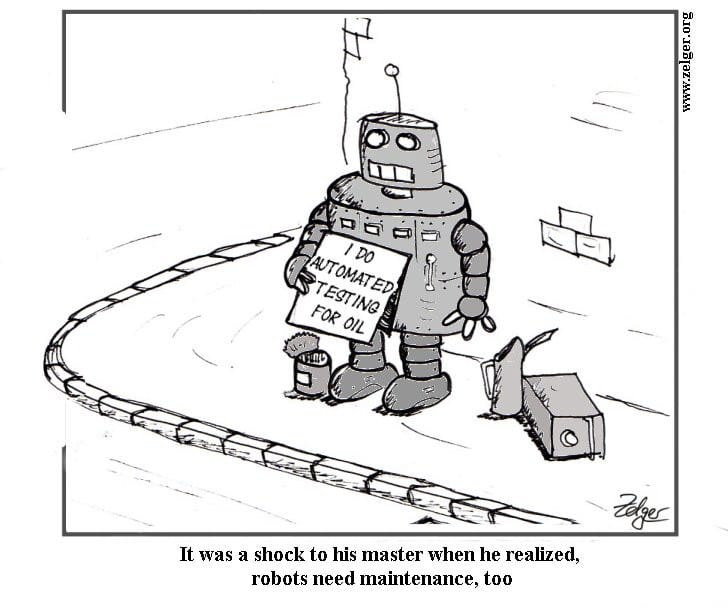

Creating a basic test suite is a piece of cake, but would you enjoy maintaining the entire codebase when 478 of your 626 tests are failing with different errors, right before the big release?

…

That's right.

Yep, your entire team will end up doing manual testing for the next couple of days. Bummer, huh?

If you're thinking "That's totally not me", ask yourself if you have the time to handle:

• Creating a stable cross-browser cloud infrastructure for your tests.

• Implementing image comparison algorithms for visual checks.

• Implementing video recording for your test runs.

• Implementing a schedule for your tests to run on a daily basis.

• Integrating your tests with your CI/CD system.

If the answer is "yes", you can stop reading from here on.

If the answer is "no", the next question should be "alright, what's the alternative?".

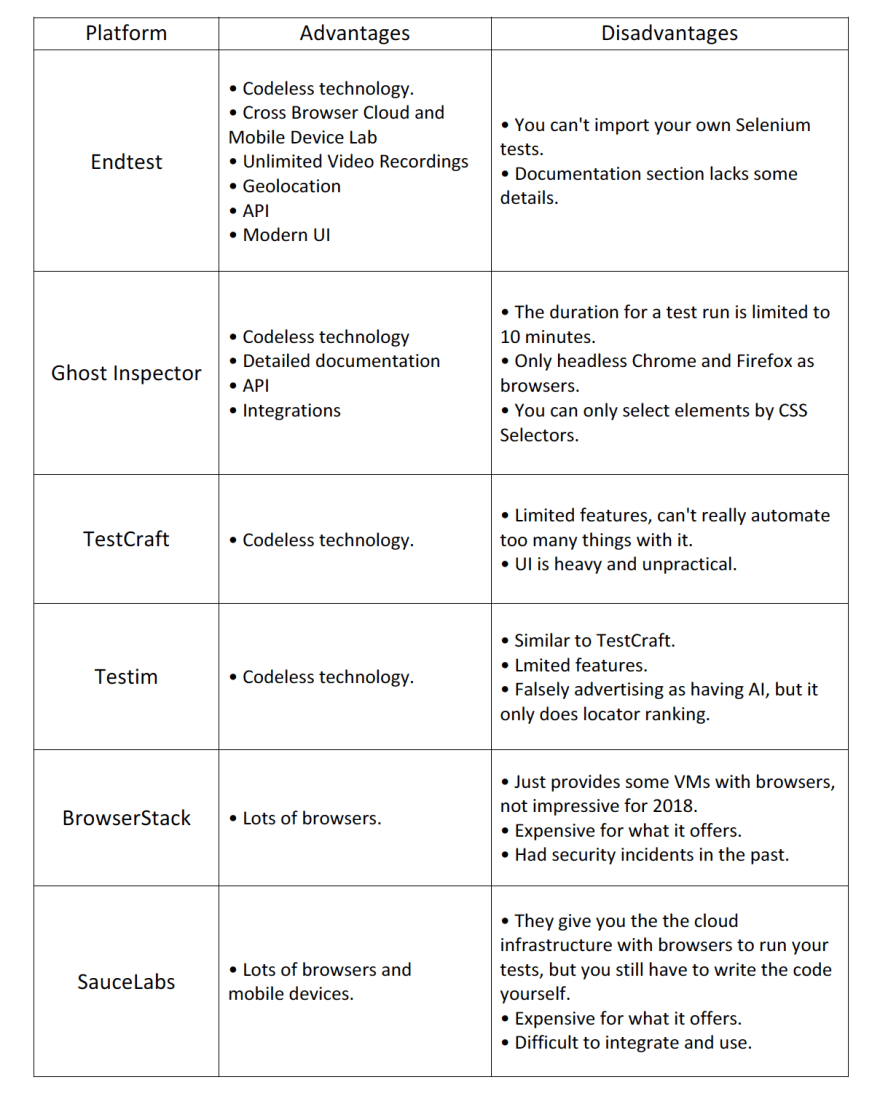

The good news is that companies have started migrating to cloud platforms that provide testing infrastructure (IaaS, PaaS and SaaS).

Here's what I could find about them, after doing some extensive research:

As for Cucumber or Behat, they are just libraries so I couldn't find a spot for them here.

2. Make stability a priority.

If your test passed 99 times and failed once, the bottom line is that your automated tests are unstable, as unpleasant as acknowledging that may be.

Having 2 stable tests instead of 5 unstable ones is always better. Those unstable tests will just test your patience and force you to manually check the functionality over and over again.

You already know it's unwise to move on to the next test case before the one you're working on is completely stable.

That sneaky "I'm going to come back and fix it anyway" lingering in your mind is a promise you won't keep, and you're just going to end up doing extra work to fix the test later.
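To put a number on "passed 99 times and failed once", here is a minimal sketch that reruns a test and reports its pass rate. The names `pass_rate`, `is_stable` and `flaky_test` are made up for illustration, and the flaky test is only a stand-in for a real UI test.

```python
from typing import Callable


def pass_rate(test: Callable[[], None], runs: int = 100) -> float:
    """Run `test` repeatedly and return the fraction of runs that passed."""
    passed = 0
    for _ in range(runs):
        try:
            test()
            passed += 1
        except Exception:
            pass  # count the run as failed and keep going
    return passed / runs


def is_stable(test: Callable[[], None], runs: int = 100) -> bool:
    """Treat a test as stable only if every single run passes."""
    return pass_rate(test, runs) == 1.0


# Hypothetical flaky test: fails on every 100th call.
calls = {"n": 0}


def flaky_test() -> None:
    calls["n"] += 1
    assert calls["n"] % 100 != 0, "intermittent failure"
```

Running `is_stable(flaky_test)` on a fresh counter returns `False`, because one run out of 100 fails. Rerunning a new test like this before moving on to the next one is a cheap way to catch flakiness early.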

3. It's OKAY to be OBSESSED.

Don't forget to focus on the negative testing scenarios. That's where the bugs crawl; they don't like the clean happy path.

Create a test for each bug you find while manually testing, so you know it will never cheap-shot you.
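As a rough illustration, here is what one happy path test next to a couple of negative tests could look like with Python's built-in `unittest`. The function `parse_quantity` and the bug it once had are hypothetical; swap in whatever you found while testing manually.

```python
import unittest


def parse_quantity(raw: str) -> int:
    """Hypothetical function under test: parse the quantity field of a cart."""
    value = int(raw.strip())
    if value < 1:
        raise ValueError("quantity must be at least 1")
    return value


class QuantityRegressionTests(unittest.TestCase):
    def test_happy_path(self):
        self.assertEqual(parse_quantity(" 3 "), 3)

    def test_rejects_zero_and_negative(self):
        # Regression test for a (hypothetical) bug found during manual testing.
        for raw in ("0", "-1"):
            with self.subTest(raw=raw):
                with self.assertRaises(ValueError):
                    parse_quantity(raw)

    def test_rejects_non_numeric(self):
        for raw in ("", "abc", "1.5"):
            with self.subTest(raw=raw):
                with self.assertRaises(ValueError):
                    parse_quantity(raw)
```

Saved in a file, these can be run with `python -m unittest`. Note that two of the three tests are negative ones.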

4. Visuals are important.

Even if your tests are interacting with the application via the UI and you're checking for the existence of some elements, that doesn't mean everything will be pixel perfect.

That's why it's important to add screenshot comparison steps that visually check elements, pixel by pixel, against already existing screenshots.

A few months ago, I wrote a short technical article about doing that, which you might find useful.
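For a feel of what a pixel by pixel check involves, here is a minimal sketch in plain Python. It assumes both screenshots have already been decoded into rows of `(R, G, B)` tuples (in practice an imaging library would do that), and `diff_ratio` and `tolerance` are names made up for this example.

```python
from typing import List, Tuple

Pixel = Tuple[int, int, int]  # (R, G, B), each 0-255
Image = List[List[Pixel]]     # rows of pixels


def diff_ratio(baseline: Image, current: Image, tolerance: int = 0) -> float:
    """Return the fraction of pixels that differ by more than `tolerance` in any channel."""
    same_shape = len(baseline) == len(current) and all(
        len(a) == len(b) for a, b in zip(baseline, current)
    )
    if not same_shape:
        raise ValueError("screenshots must have the same dimensions")

    total = 0
    changed = 0
    for row_a, row_b in zip(baseline, current):
        for pixel_a, pixel_b in zip(row_a, row_b):
            total += 1
            if any(abs(a - b) > tolerance for a, b in zip(pixel_a, pixel_b)):
                changed += 1
    return changed / total if total else 0.0


# Usage: a 2x2 white baseline versus a screenshot with one red pixel.
WHITE, RED = (255, 255, 255), (255, 0, 0)
baseline = [[WHITE, WHITE], [WHITE, WHITE]]
current = [[WHITE, RED], [WHITE, WHITE]]
ratio = diff_ratio(baseline, current)  # one of four pixels differs
```

A test step would then fail when the ratio goes above a threshold you pick. A small `tolerance` helps absorb anti-aliasing noise between runs.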

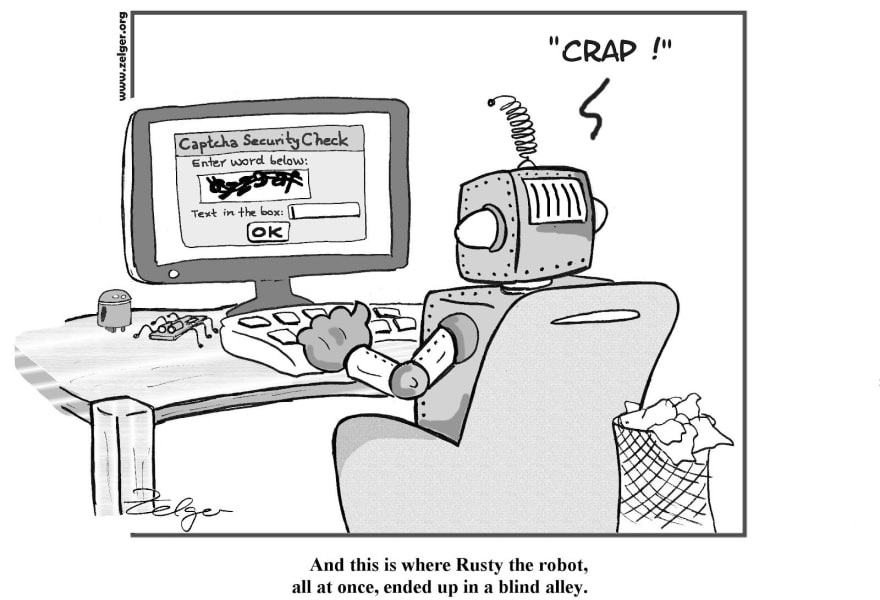

5. You. Can't. Automate. Everything.

Sadly, there are some things that can't be automated in a stable and efficient manner. The sooner we accept that, the better.

Take reCAPTCHA, for example. You'll probably need to disable it for requests coming from your test machines.

Dwelling on automating the impossible will just waste your time.

Luckily, there are smart workarounds out there. All you have to do is find them.
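One such workaround for the reCAPTCHA case is an allowlist on the server side. This is only a sketch: the network ranges and the `captcha_required` function are hypothetical, and how you hook it into your application depends on your stack.

```python
from ipaddress import ip_address, ip_network

# Hypothetical allowlist: replace with the addresses of your own test machines.
TEST_MACHINE_NETWORKS = [
    ip_network("10.20.0.0/24"),
    ip_network("192.0.2.15/32"),
]


def captcha_required(client_ip: str) -> bool:
    """Return False only when the request comes from an allowlisted test machine."""
    address = ip_address(client_ip)
    return not any(address in network for network in TEST_MACHINE_NETWORKS)
```

With this in place, `captcha_required("10.20.0.7")` returns `False` while a regular visitor such as `captcha_required("203.0.113.9")` still gets the challenge. Make sure the IP you check is the real client address and not a header the client can set.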

6. Cross Browser: You DO need it.

"Well, our web application is working great in Chrome…"

"And that's what most people are using anyway…"

"Hmm, it would take us some time to make the tests run on all the browsers…"

"Some users have been reporting some bugs, maybe they're using some old version of Chrome or … you know, users are just stupid sometimes."

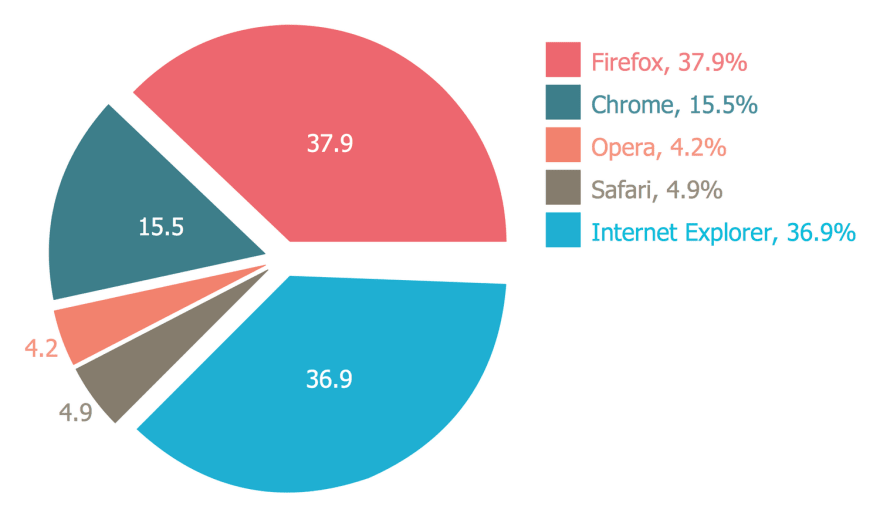

Curiosity drives you to go around and ask for statistics about what browsers people are using to access your web application.

That's how you get this lovely pie chart:

How f****d are you?

This is when you know you need to go Cross Browser. There is really no other way to go.

It may be complicated and, sometimes, expensive, but it's always worth it.

And if you're going to go, go all the way:

• Don't rely on headless browsers.

• Use Windows machines for testing in Chrome, Firefox and IE.

• Use Windows 10 machines for testing in Edge.

• Use Mac OS machines for testing in Safari, Chrome and Firefox.

If you're going to go codeless, the only platform that offers the above - while being…well, codeless - at the moment, is Endtest.
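If you do write code, the browser and operating system combinations listed in this section can be kept in one place and looped over. The sketch below is an assumption about how you might organize that; `run_everywhere` and `checkout_test` are hypothetical, and a real test would open a browser session on the matching machine.

```python
from typing import Callable, Dict, List, Tuple

# Browser/OS combinations taken from the list in this section.
MATRIX: Dict[str, List[str]] = {
    "Windows": ["Chrome", "Firefox", "IE"],
    "Windows 10": ["Edge"],
    "Mac OS": ["Safari", "Chrome", "Firefox"],
}


def targets(matrix: Dict[str, List[str]]) -> List[Tuple[str, str]]:
    """Flatten the matrix into (operating system, browser) pairs."""
    return [(os_name, browser) for os_name, browsers in matrix.items() for browser in browsers]


def run_everywhere(test: Callable[[str, str], None],
                   matrix: Dict[str, List[str]] = MATRIX) -> Dict[Tuple[str, str], str]:
    """Run `test` once per target and collect the failures by target."""
    failures = {}
    for os_name, browser in targets(matrix):
        try:
            test(os_name, browser)
        except Exception as error:
            failures[(os_name, browser)] = str(error)
    return failures


def checkout_test(os_name: str, browser: str) -> None:
    # Placeholder: a real test would drive the browser on that machine.
    assert browser != "IE", "layout broken"
```

Here `run_everywhere(checkout_test)` reports a single failure, for IE on Windows, which is exactly the kind of bug a Chrome-only suite never sees.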

7. Remember the little things.

Sometimes, I tend to forget those little things that do matter.

Here are some questions to ask yourself when you think you're done:

• When was the last time you tested the META tags from the Page Source?

• When was the last time you tested the cookies?

These things are surprisingly important for ranking, marketing and tracking purposes.
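Both checks can be sketched with nothing but the Python standard library. The example assumes you already have the page source and a `Set-Cookie` header as strings (from your browser driver or HTTP client); `meta_tags` and `cookie_attributes` are helper names invented for this example.

```python
from html.parser import HTMLParser
from http.cookies import SimpleCookie


class MetaTagCollector(HTMLParser):
    """Collect <meta name=...> and <meta property=...> tags into a dict."""

    def __init__(self):
        super().__init__()
        self.meta = {}

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attributes = dict(attrs)
        key = attributes.get("name") or attributes.get("property")
        if key:
            self.meta[key] = attributes.get("content") or ""


def meta_tags(page_source: str) -> dict:
    collector = MetaTagCollector()
    collector.feed(page_source)
    return collector.meta


def cookie_attributes(set_cookie_header: str) -> dict:
    """Parse a Set-Cookie header value into name -> value and security flags."""
    cookie = SimpleCookie()
    cookie.load(set_cookie_header)
    return {
        name: {
            "value": morsel.value,
            "secure": bool(morsel["secure"]),
            "httponly": bool(morsel["httponly"]),
        }
        for name, morsel in cookie.items()
    }
```

A test can then assert, for instance, that `meta_tags(source)["description"]` is not empty, or that the session cookie has both `secure` and `httponly` set.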

8. Sometimes, Mobile comes First.

Those who are working on B2C products already know that most users access their product through their mobile device.

Those who are working on B2B products need to start considering the same thing.

9. Not just the testers, but everyone should write tests.

Since the Product Owner and the Business Analyst are the ones who know best how the software and flows should work, they're the best people to take part in the quest that test-writing can be.

Their take will always be useful when writing the tests for happy paths.

This experience is a win-win, since it also gives them a little more insight into how their User Stories were implemented.

But they might not know how to write code….

For this kind of scenario, going codeless is the only way to go if you're not willing to spend months on teaching them how to do it. If you are, I must say I admire your patience.

10. Don't be afraid to ask for a little bit of help.

Since the entire company will benefit from those automated tests, everyone will gladly pitch in if you need a hand (even if you know better!).

Top comments (2)

...• Don't rely on headless browsers.... Now that browser vendors support headless mode themselves, rather than 3rd party stand-ins, I feel that not trusting headless is no longer valid, especially for the top 3.

Love the imagery and humor. :)

This is a duplicate of dev.to/endtest_io/10-unexpected-wa... - is that your company?