These days almost everyone has come across the buzz around Artificial Intelligence (AI). This domain of computer science has come a long way, and the pace of development is tremendous. Several problems once considered unsolved have been cracked by harnessing the power of AI.

So, is AI magic?

Well, it isn't. It's just science, the science of getting computers to act without being explicitly programmed.

The know-how and the basics

Before we get deep into this dazzling world of predictions and learning, we need to have our basics strong. Phrases like AI, machine learning, neural networks, and deep learning mean related but different things.

Artificial Intelligence

As the Venn diagram above depicts, AI is a broad field. It encompasses machine learning, neural networks, and deep learning, but it also includes many approaches distinct from machine learning. A crisp definition of the field would be: the effort to automate intellectual tasks normally performed by humans.

Machine Learning

Machine learning, as a subfield of AI distinct from symbolic AI, arises from a question: could a computer learn on its own, without being explicitly programmed for a specific task?

Machine learning seeks to avoid the hard-coding way of doing things. But how would a machine learn if it were not explicitly instructed on how to perform a task? The simple answer: from examples in the data.

This opened the doors to a new programming paradigm.

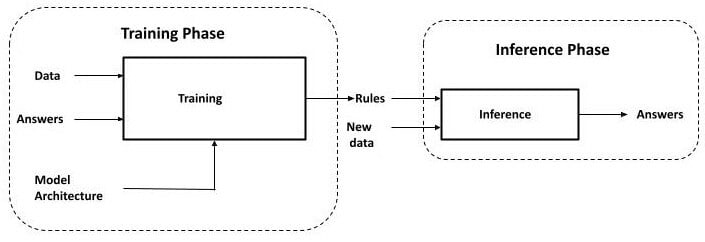

In the classical programming paradigm, we input the data and the rules to get the answers.

In the machine learning paradigm, by contrast, we put in the data and the answers and get out a set of rules, which can then be applied to other similar data to produce answers.
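To make the contrast concrete, here is a minimal sketch in plain JavaScript (no libraries). The temperature-conversion task, the sample readings, and all function names are assumptions chosen for illustration. In the first half a human writes the rule; in the second half, data and answers go in and the rule (a slope and an intercept) comes out, here via ordinary least squares, which is only one simple way of learning a rule from examples.

```javascript
// Classical programming: a human writes the rule, data goes in, answers come out.
function celsiusToFahrenheitRule(celsius) {
  return celsius * 1.8 + 32;
}

// Machine learning: data and answers go in, a rule comes out.
// Here the rule is a straight line y = slope * x + intercept,
// fitted with ordinary least squares (an illustrative choice).
function fitLine(inputs, answers) {
  const n = inputs.length;
  const meanX = inputs.reduce((sum, x) => sum + x, 0) / n;
  const meanY = answers.reduce((sum, y) => sum + y, 0) / n;

  let covariance = 0;
  let variance = 0;
  for (let i = 0; i < n; i++) {
    covariance += (inputs[i] - meanX) * (answers[i] - meanY);
    variance += (inputs[i] - meanX) ** 2;
  }

  const slope = covariance / variance;
  const intercept = meanY - slope * meanX;
  return { slope, intercept };
}

// Hypothetical sample data: Celsius readings and their known Fahrenheit answers.
const celsiusReadings = [-10, 0, 15, 30, 100];
const fahrenheitAnswers = [14, 32, 59, 86, 212];

// The learned rule can now be applied to data it has never seen.
const learnedRule = fitLine(celsiusReadings, fahrenheitAnswers);
const predictFahrenheit = (celsius) =>
  learnedRule.slope * celsius + learnedRule.intercept;

console.log(celsiusToFahrenheitRule(20)); // answer from the hand-written rule
console.log(predictFahrenheit(20));       // answer from the learned rule
```

Both calls should print values very close to each other, because the learned slope and intercept end up close to the 1.8 and 32 that a programmer would have typed in by hand.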

Let's take the example of identifying human faces in an image.

We, as humans, can classify objects very well based on their characteristics and features. But how do we train a machine to do so? It is hard for any programmer, no matter how smart and experienced, to write an explicit set of rules in a programming language that accurately decides whether an image contains a human face.

Without any constraint, the space of possible rules is infinite, and it is impossible to search it for explicit rules that define a task in a limited amount of time.

Any heuristic we produce is likely to fall short when facing the myriad variations that faces can present in real-life images, such as differences in size, shape, and details of the face; expression; hairstyle; color; the background of the image and many more.

There are two important phases in machine learning.

The first is the training phase.

This phase takes the data and answers, together referred to as the training data. Each pair of input data (instances) and the desired answer (labels) is called an example. With the help of the examples, the training process produces the automatically discovered rules.

Although the rules are discovered automatically, they are not discovered entirely from scratch. In other words, the machine is intelligent, but not intelligent enough to come up with the form of the rules on its own.

A human engineer provides a blueprint for the rules at the outset of training. Learning from labeled examples with this kind of human guidance is known as supervised learning.

It's just like a child learning to walk, who needs support in the early stages.

The blueprint is encapsulated in a model, which forms a hypothesis space for the rules the machine may possibly learn. Without this hypothesis space, there is a completely unconstrained and infinite space of rules to search in, which is not conducive to finding good rules in a limited amount of time.

The second is the inference phase. Here we use the learned rules to make predictions on new data.
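The two phases can be sketched in a few lines of plain JavaScript. Everything here is an assumption made for illustration: the made-up examples, the function names, the choice of a straight-line model as the blueprint, and gradient descent on mean squared error as the training procedure. Real libraries such as TensorFlow.js handle far richer models, but the shape of the workflow is the same.

```javascript
// Training data: each example pairs an input (instance) with its desired
// answer (label). These made-up values follow label = 2 * input + 1.
const trainingExamples = [
  { input: 0, label: 1 },
  { input: 1, label: 3 },
  { input: 2, label: 5 },
  { input: 3, label: 7 },
];

// The model is the human-provided blueprint. It limits the hypothesis space
// to rules of the form weight * input + bias, so training only has to find
// two numbers instead of searching every possible rule.
function createModel() {
  return { weight: 0, bias: 0 };
}

function predict(model, input) {
  return model.weight * input + model.bias;
}

// Phase 1, training: repeatedly nudge weight and bias in the direction that
// reduces the mean squared error over the examples (gradient descent).
function train(model, examples, { learningRate = 0.05, epochs = 2000 } = {}) {
  for (let epoch = 0; epoch < epochs; epoch++) {
    let weightGradient = 0;
    let biasGradient = 0;
    for (const { input, label } of examples) {
      const error = predict(model, input) - label;
      weightGradient += (2 * error * input) / examples.length;
      biasGradient += (2 * error) / examples.length;
    }
    model.weight -= learningRate * weightGradient;
    model.bias -= learningRate * biasGradient;
  }
  return model;
}

const trainedModel = train(createModel(), trainingExamples);

// Phase 2, inference: apply the learned rule to an input not seen in training.
const prediction = predict(trainedModel, 10);
console.log(trainedModel, prediction);
```

With these settings the weight and bias should settle near 2 and 1, so the prediction for an input of 10 lands near 21. The learning rate and epoch count are hand-picked for this tiny dataset and would need tuning for anything else.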

Neural Networks and Deep Learning

Neural networks are a subfield of machine learning inspired by the neurons found in human and animal brains. The idea is to loosely imitate the way a brain learns from what it perceives. We build a network of interconnected neurons, each responsible for capturing certain aspects of the task at hand.

The data is passed through multiple separable stages, known as layers. These layers are usually stacked on top of each other, and models built this way are known as sequential models. Stacking many such layers is what the "deep" in deep learning refers to.

Each layer applies a mathematical function to its input to produce an output. Layers are generally stateful, i.e. they hold internal memory.

Each layer's memory is captured in its weights.
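The idea of stacked layers whose memory lives in their weights can be sketched in plain JavaScript. This is a toy illustration, not how any particular library implements it: the helper names are hypothetical, and the weights are hand-picked numbers rather than values learned through training.

```javascript
// A dense layer keeps its "memory" in weights and biases.
// For each unit j: output[j] = activation(sum over i of input[i] * weights[i][j] + biases[j]).
function createDenseLayer(weights, biases, activation) {
  return {
    weights,
    biases,
    forward(input) {
      return biases.map((bias, j) => {
        let sum = bias;
        for (let i = 0; i < input.length; i++) {
          sum += input[i] * weights[i][j];
        }
        return activation(sum);
      });
    },
  };
}

const relu = (x) => Math.max(0, x);
const identity = (x) => x;

// A sequential model passes the data through the layers one after another:
// the output of each layer is the input of the next.
function runSequential(layers, input) {
  return layers.reduce((activations, layer) => layer.forward(activations), input);
}

// Hand-picked weights for illustration only; training would normally set these.
const layers = [
  createDenseLayer([[1, -1], [0.5, 2]], [0, 1], relu), // 2 inputs -> 2 units
  createDenseLayer([[2], [-1]], [0.5], identity),      // 2 inputs -> 1 unit
];

const output = runSequential(layers, [1, 2]);
console.log(output); // prints [ 0.5 ]
```

Changing any number in the weights changes what the network computes, which is exactly why training a neural network comes down to adjusting those weights.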

Why TensorFlow.js?

JavaScript is a scripting language traditionally devoted to creating web pages and back-end business logic.

Someone who primarily works with JavaScript may feel left out by the deep-learning revolution, which can seem to be the exclusive territory of languages such as Python, R, and C++. TensorFlow is a primary tool for building deep-learning models.

- TensorFlow.js is the product of cross-pollination between JavaScript and the world of deep learning. It suits people who are good at JavaScript and want to explore deep learning, as well as people who have a basic mathematical understanding of deep learning and are looking for a place to dive deeper into the field. With deep learning, JavaScript developers can make their web apps more intelligent.

- TensorFlow.js is created and maintained by Google, so it's worth noting that some of the best brains in the world have come together to make it happen.

- It provides a no-install experience in the world of machine learning. Generally, the AI in a website is locked behind an API, and its performance varies with the bandwidth of the connection. TensorFlow.js lets us run deep learning models directly in the browser without installing any other dependencies.

JavaScript-based applications can run almost anywhere. This code can be added to progressive web apps or React applications, which can then run without being connected to the internet.

It also provides a great deal of privacy as the data never leaves a user's system.

It can also be used on IoT devices such as the Raspberry Pi.

In conclusion, mastering TensorFlow.js can help us build cross-platform intelligent applications with great efficiency and security.

And a huge yes to the picture above XD

Hope you enjoyed reading the blog!

Thank you :)
