Software developers today are encouraged to focus on building, and that’s a great thing. We’re benefitting from maker culture, an attitude of “always be shipping,” open source collaboration, and a bevy of apps that help us prioritize and execute with maximum efficiency. We’re in an environment of constant creation, where both teams and solo entrepreneurs can be maximally productive.

Sometimes, this breakneck-speed productivity shows its downsides.

As I learn more about security best practices, I can’t help but see more and more applications that just don’t have a clue. A lack of awareness of security seems to lead to a lack of prioritization of those tasks that don’t directly support bringing the product to launch. The market seems to have made it more important to launch a usable product than a secure one, with the prevailing attitude being, “we can do the security stuff later.”

Cobbling together a foundation based more on expediency than longevity is a bad way to build applications and a great way to build security debt. Security debt, like technical debt, is amassed by making (usually hasty) decisions that can make it more difficult to secure the application later on. If you’re familiar with the concept of “pushing left” (or if you read my article about sensitive data exposure), you’ll know that when it comes to security, sometimes there isn’t a version of “later” that isn’t too late. It’s a shame, especially since following a few basic, high-yield security practices early in the development process doesn’t take significantly more time than skipping them. Often, it comes down to having some basic but important knowledge that enables making the more secure decision.

While application architecture varies greatly, a few basic principles can commonly be applied. This article will provide a high-level overview of areas that I hope will help point developers in the right direction.

There must be a reason we call it application “architecture,” and I like to think it’s because the architecture of software is similar in some basic ways to the architecture of a building. (Or at least, in my absolute zero building-creation expertise, how I imagine a pretty utilitarian building to be.) Here’s how I like to summarize three basic points of building secure application architecture:

- Separated storage

- Customized configuration

- Controlled access and user scope

This is only a jumping-off point meant to get us started on the right foot; a complete picture of a fully-realized application’s security posture includes areas outside the scope of this post, including authentication, logging and monitoring, integration, and sometimes compliance.

1. Separated storage

The concept of separation, from a security standpoint, refers to storing files that serve different purposes in different places. When we’re constructing our building and deciding where all the rooms go, we similarly create the lobby on the ground floor and place administrative offices on higher floors, perhaps off the main path. While both are rooms, we understand that they serve different purposes, have different functional needs, and possibly very different security requirements.

When it comes to our files, the benefit is perhaps easiest for us to understand if we consider a simple file structure:

application/
├───html/
│   └───index.html
├───assets/
│   ├───images/
│   │   ├───rainbows.jpg
│   │   └───unicorns.jpg
│   └───style.css
└───super-secret-configurations/
    └───master-keys.txt

In our simplified example, let’s say that all our application’s images are stored in the application/assets/images/ directory. When one of our users creates a profile and uploads their picture to it, this picture is also stored in this folder. Makes sense, right? It’s an image, and that’s where the images go. What’s the issue?

If you’re familiar with navigating a file structure in a terminal, you may have seen this syntax before: ../../. The two dots are a handy way of saying, “go up one directory.” If we execute the command cd ../../ in the images/ directory of our simple file structure above, we’d go up into assets/, then up again to the root directory, application/. This is a problem because of a wee little vulnerability dubbed path traversal.

While the dot syntax saves us some typing, it also hands an attacker an interesting advantage: there’s no need to know what a parent directory is called in order to go to it. Consider an attack payload script, delivered into the images/ folder of our insecure application, that goes up one directory using cd ../ and then sends everything it finds to the attacker, on repeat. Eventually, it would reach the root application directory and access the super-secret-configurations/ folder. Not good.
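To make the dot syntax concrete, here’s a small Python sketch of where a path full of ../ segments ends up when it’s resolved against our simple file structure. The `uploaded_name` value is a hypothetical attacker-supplied filename that I made up for illustration; it isn’t taken from a real application.

```python
import posixpath

# Directory where our simplified example stores images.
IMAGES_DIR = "application/assets/images"

# Hypothetical filename supplied by an attacker instead of something like "me.jpg".
uploaded_name = "../../super-secret-configurations/master-keys.txt"

# Joining the two naively, then normalizing, shows where the path really points.
resolved = posixpath.normpath(posixpath.join(IMAGES_DIR, uploaded_name))
print(resolved)
```

The normalized path no longer sits under assets/images/ at all, which is exactly the behavior a path traversal attack relies on.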

While other measures should certainly be in place to prevent path traversal and related user upload vulnerabilities, the simplest prevention by far is a separation of storage. Core application files and assets should not be combined with other data, and especially not with user input. It’s best to keep user-uploaded files as well as activity logs (which may contain juicy data and can be vulnerable to injection attacks) separate from the main application.
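As one of those “other measures,” here’s a minimal sketch of a check that refuses any upload path that would land outside a dedicated upload directory. It assumes Python 3.9 or later (for `Path.is_relative_to`), and both the `UPLOAD_ROOT` location and the `resolve_upload_path` helper are hypothetical names chosen for this example, not part of any framework.

```python
from pathlib import Path

# Hypothetical upload directory, kept apart from the application files.
UPLOAD_ROOT = Path("/srv/uploads")


def resolve_upload_path(filename: str, root: Path = UPLOAD_ROOT) -> Path:
    """Return the absolute path for an upload, or raise if it escapes root."""
    root = root.resolve()
    # resolve() collapses any ../ segments before we inspect the result.
    candidate = (root / filename).resolve()
    # is_relative_to() is True only when candidate is root or lives inside it.
    if not candidate.is_relative_to(root):
        raise ValueError(f"upload path escapes {root}: {filename!r}")
    return candidate
```

A call such as `resolve_upload_path("avatars/me.jpg")` returns a path inside the upload root, while `resolve_upload_path("../../etc/passwd")` raises `ValueError`. A check like this complements separated storage rather than replacing it.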

Separation can be achieved in a few ways, such as by using a different server, different instance, separate IP range, or separate domain.

2. Customized configuration

While spending time on customization may be frowned upon when we’re trying to develop quickly, one area that we definitely want to customize is configuration settings. Security misconfiguration is listed in the OWASP Top 10 because a significant number of security incidents occur when a server, firewall, or administrative account is running in production with default settings. Upon the opening of our new building, we’d hopefully be careful to ensure we haven’t left any keys in the locks.

Usually, the victims of attacks related to default settings aren’t specifically targeted. Rather, they are found by automated scanning tools that attackers run over many possible targets, effectively prodding at many different systems to see if any roll over and expose some useful exploit. The automated nature of this attack means that it’s important for us to review settings for every piece of our architecture. Even if an individual piece doesn’t seem significant, it may provide a vulnerability that allows an attacker to use it as a gateway to our larger application.

In particular, examine architecture components for unattended areas such as:

- Default accounts, especially with default passwords, left in service;

- Example web pages, tutorial applications, or sample data left in the application;

- Unnecessary ports left in service, or ports left open to the Internet;

- Unrestricted permitted HTTP methods;

- Sensitive information stored in automated logs;

- Default configured permissions in managed services; and,

- Directory listings, or sensitive file types, left accessible by default.

This list isn’t exhaustive, and specific architecture components, such as cloud storage or web servers, will have other features that can be configured and thus should be reviewed. In general, we can reduce our application’s attack surface by being minimalists when it comes to architecture components. If we use fewer components or don’t install modules we don’t really need, we’ll have fewer possible attack entry points to configure and safeguard.
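A few of the items in the checklist above lend themselves to an automated review. The sketch below assumes our settings are available as a plain dictionary; the key names (`admin_user`, `directory_listing`, and so on), the `DEFAULT_CREDENTIALS` pairs, and the `audit_config` function are all hypothetical and would need to be mapped onto whatever components an application actually uses. It assumes Python 3.9 or later.

```python
from typing import Any

# Illustrative values only; real defaults depend on the components in use.
DEFAULT_CREDENTIALS = {("admin", "admin"), ("admin", "password"), ("root", "root")}
EXPECTED_HTTP_METHODS = {"GET", "HEAD", "POST"}


def audit_config(config: dict[str, Any]) -> list[str]:
    """Return a list of findings for a hypothetical settings dictionary."""
    findings = []
    creds = (config.get("admin_user"), config.get("admin_password"))
    if creds in DEFAULT_CREDENTIALS:
        findings.append("default administrative credentials in use")
    if config.get("directory_listing", False):
        findings.append("directory listing is enabled")
    extra_methods = set(config.get("allowed_methods", [])) - EXPECTED_HTTP_METHODS
    if extra_methods:
        findings.append(f"unexpected HTTP methods allowed: {sorted(extra_methods)}")
    if config.get("sample_pages_installed", False):
        findings.append("example pages or sample data still installed")
    return findings


print(audit_config({
    "admin_user": "admin",
    "admin_password": "admin",
    "directory_listing": True,
    "allowed_methods": ["GET", "TRACE"],
}))
```

A script like this only catches what we thought to look for, so it’s a supplement to reviewing each component’s settings, not a substitute.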

3. Controlled access and user scope

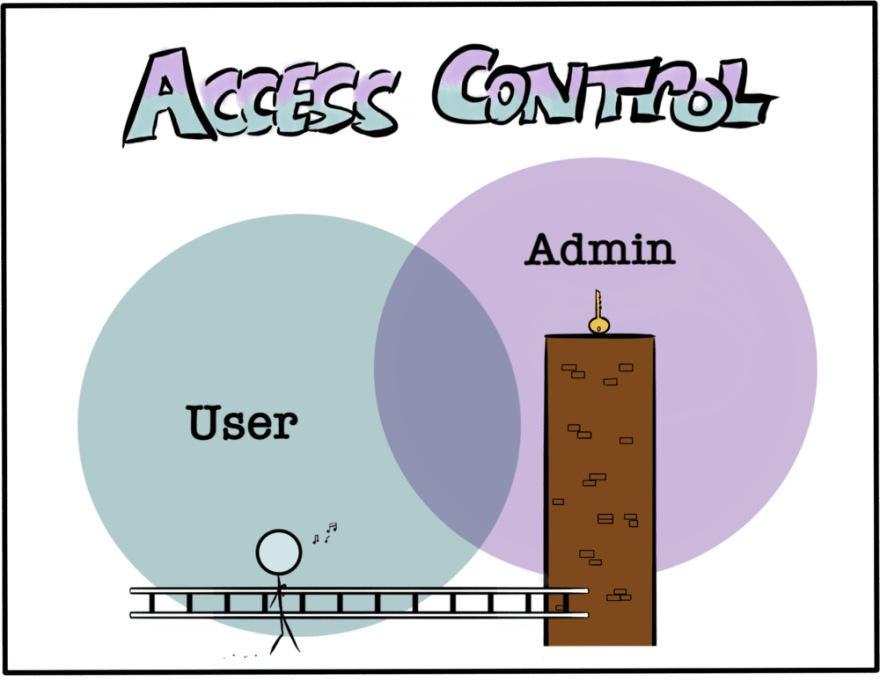

One of the more difficult security problems to test for in an application is improperly configured access control. Automated testing tools have limited capability to find areas of an application that a given user shouldn’t be able to access, so discovering them is often left to manual testing or source code review. By considering this vulnerability early on in the software development lifecycle, when architectural decisions are being made, we reduce the risk that it becomes a problem that’s harder to fix later. After all, we wouldn’t simply leave our master keys out of reach on a high ledge and hope no one comes along with a ladder.

Broken access control is listed in the OWASP Top 10, which goes into more detail on its various forms. As a simple example, consider an application that has two levels of access: administrators, and users. We may build a feature for our application, such as the ability to moderate or ban users, with the intention that only administrators would be allowed to use it. If we’re aware of the possibility of access control misconfigurations or exploits, we may decide to build the moderation feature in a completely separate area from the user-accessible space, such as on a different domain, or as part of a model that users don’t share. This greatly reduces the risk that an access control misconfiguration or elevation of privilege vulnerability might allow a user to improperly access the moderation feature later on.
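Wherever the moderation feature ends up living, it still needs an explicit authorization check. Here’s a minimal sketch of one way to express that for the administrator and user example, using a decorator that denies access unless the caller’s role matches. The `Role` enum, the `require_role` decorator, the `Forbidden` exception, and the shape of the `actor` dictionary are all assumptions made for illustration.

```python
from enum import Enum
from functools import wraps


class Role(Enum):
    USER = "user"
    ADMIN = "admin"


class Forbidden(Exception):
    """Raised when the caller lacks the required role."""


def require_role(required: Role):
    """Decorator that rejects any caller whose role is not `required`."""
    def decorator(func):
        @wraps(func)
        def wrapper(actor: dict, *args, **kwargs):
            # Deny by default: a missing or unrecognized role means no access.
            if actor.get("role") is not required:
                raise Forbidden(f"{func.__name__} requires {required.value}")
            return func(actor, *args, **kwargs)
        return wrapper
    return decorator


@require_role(Role.ADMIN)
def ban_user(actor: dict, username: str) -> str:
    # Placeholder for the real moderation logic.
    return f"{username} banned by {actor['name']}"
```

Calling `ban_user({"name": "ana", "role": Role.ADMIN}, "troll")` succeeds, while the same call with `Role.USER`, or with no role at all, raises `Forbidden`.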

Robust access control of course needs further support to be effective. We should also consider factors such as whether sensitive tokens or keys are passed as URL parameters, and whether a control fails securely or insecurely. Nevertheless, by considering authorization at the architectural stage, we can set ourselves up to make further reinforcements easier to implement.
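As a small illustration of the URL parameter concern, the sketch below flags query parameters whose names suggest they carry secrets. The names in `SENSITIVE_PARAMS` and the `sensitive_params_in_url` helper are hypothetical; an actual review would use the parameter names our own application defines.

```python
from urllib.parse import parse_qsl, urlsplit

# Hypothetical parameter names; adjust to whatever the application really uses.
SENSITIVE_PARAMS = {"token", "api_key", "password", "session"}


def sensitive_params_in_url(url: str) -> list[str]:
    """Return the sorted names of query parameters that look like secrets."""
    query = urlsplit(url).query
    names = {name.lower() for name, _ in parse_qsl(query, keep_blank_values=True)}
    return sorted(names & SENSITIVE_PARAMS)


print(sensitive_params_in_url("https://example.com/reset?user=ana&token=abc123"))
```

A check like this could run over route definitions or access logs during review. URLs tend to end up in browser history, logs, and referrer headers, which is why secrets are better kept out of them.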

Security basics for maximum benefit

Just as developers can avoid racking up technical debt by choosing a well-vetted framework, we can avoid security debt by becoming aware of common vulnerabilities and the simple architectural decisions that help mitigate them. For a much more detailed resource on how to bake security into our applications from the start, the OWASP Application Security Verification Standard is a robust guide.
