Follow me on Twitter, happy to take your suggestions on topics or improvements /Chris

This article is part of a series:

- Docker - from the beginning, Part I, this covers: why Docker, how it works and covers basic concepts like images, containers and the usage of a Dockerfile. It also introduces some basic Docker commands on how to manage the above concepts.

- Docker - from the beginning, Part II, this is about learning about Volumes, what they are, how they can work for you and primarily how they will create an amazing development environment

- Docker - from the beginning, Part III, this part is about understanding how to work with Databases in a containerized environment and in doing so we need to learn about linking and networks

- Docker - from the beginning, Part IV, we are here

- Docker - from the beginning, Part V, this part is the second and concluding part on Docker Compose where we cover Volumes, Environment Variables and working with Databases and Networks

This part is about dealing with more than two Docker containers. Eventually you will reach a point where you have so many containers that managing them by hand feels impossible. You can only keep typing docker run for so long; once you start spinning up multiple containers, it hurts both your head and your fingers. To solve this we have Docker Compose.

TLDR; Docker Compose is a huge topic, for that reason this article is split into two parts. In this part, we will describe why Docker Compose and show when it shines. In the second part on Docker Compose we will cover more advanced topics like Environment Variables, Volumes and Databases.

In this part we will cover:

- Why Docker Compose, it's important to understand, at least on a high level, that there are two major architectures, Monolith and Microservices, and that Docker Compose really helps with managing the latter

- Features, we will explain what features Docker Compose supports so we come to understand why it's such a good fit for our chosen Microservice architecture

- When Docker isn't enough, we will explain at which point using Docker commands becomes tedious and painful and when using Docker Compose is starting to look more and more enticing

- In action, lastly we will build a docker-compose.yaml file from scratch and learn how to manage our containers using Docker Compose and some core commands

Resources

Using Docker and containerization is about breaking apart a monolith into microservices. Throughout this series, we will learn to master Docker and all its commands. Sooner or later you will want to take your containers to a production environment. That environment is usually the Cloud. When you feel you've got enough Docker experience have a look at these links to see how Docker can be used in the Cloud as well:

- Containers in the Cloud Great overview page that shows what else there is to know about containers in the Cloud

- Deploying your containers in the Cloud Tutorial that shows how easy it is to leverage your existing Docker skill and get your services running in the Cloud

- Creating a container registry Your Docker images can be in Docker Hub but also in a Container Registry in the Cloud. Wouldn't it be great to store your images somewhere and actually be able to create a service from that Registry in a matter of minutes?

Why Docker Compose

Docker Compose is meant to be used when we need to manage many services independently. What we are describing is something called a microservice architecture.

Microservice architecture

Let's define some properties of such an architecture:

- Loosely coupled, this means they are not dependent on another service to function, all the data they need is just there. They can interact with other services though but that's by calling their external API with for example an HTTP call

- Independently deployable, this means we can start, stop and rebuild them without affecting other services directly.

- Highly maintainable and testable, services are small and thus there is less to understand and because there are no dependencies testing becomes simpler

- Organized around business capabilities, this means we should try to find different themes like booking, products management, billing and so on

We should maybe have started with the question of why we want this architecture. It's clear from the properties listed above that it offers a lot of flexibility, has few to no dependencies and so on. Those all sound like good things, so is this the new architecture that all apps should have?

As always it depends. There are some criteria where Microservices will shine as opposed to a Monolithic architecture such as:

- Different tech stacks/emerging techs, we have many development teams and they all want to use their own tech stack or want to try out a new tech without having to change the entire app. Let each team build their own service in their chosen tech as part of a Microservice architecture.

- Reuse, you really want to build a certain capability once, like for example billing, if that's being broken out in a separate service it makes it easier to reuse for other applications. Oh and in a microservices architecture you could easily combine different services and create many apps from it

- Minimal failure impact, when there is a failure in a monolithic architecture it might bring down the entire app, with microservices you might be able to shield yourself better from failure

There are a ton more arguments for microservices over a monolithic architecture. The interested reader is urged to have a look at the following link.

The case for Docker Compose

The description of a Microservice architecture tells us that we need a bunch of services organized around business capabilities. Furthermore, they need to be independently deployable and we need to be able to use different tech stacks and many more things. In my opinion, this sounds like Docker would be a great fit generally. The reason we are making a case for Docker Compose over plain Docker is simply the sheer number of commands involved. Every container needs its own build and run commands, so the amount of typing grows linearly with the number of services and becomes tedious as soon as we have more than two containers. Let's explain in the next section what features Docker Compose has that make it scale so well when the number of services increases.

Docker Compose features overview

Docker Compose enables us to scale really well in the sense that we can easily build several images at once, start several containers and much more. Its main features are as follows:

- Manages the whole application life cycle.

- Start, stop and rebuild services

- View the status of running services

- Stream the log output of running services

- Run a one-off command on a service

As we can see it takes care of everything we could possibly need when we need to manage a microservice architecture consisting of many services.
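As a rough sketch of how these features map onto commands, the function below walks one service through its life cycle. The function name compose_tour, the default service name my-service and the one-off command env are placeholders made up for this example. It also assumes you call it from a directory that contains a docker-compose.yaml file.

```shell
# Walk a single service through the Docker Compose life cycle.
# "my-service" is a placeholder default; pass your own service name as $1.
compose_tour() {
  service="${1:-my-service}"

  docker-compose build "$service"           # (re)build the image for the service
  docker-compose up -d "$service"           # create and start the service in the background
  docker-compose ps                         # view the status of the services
  docker-compose logs --tail=20 "$service"  # show recent log output (use -f to keep streaming)
  docker-compose run --rm "$service" env    # run a one-off command in a throwaway container
  docker-compose stop "$service"            # stop the service without removing its container
}
```

Nothing runs until you call the function, for example with compose_tour my-service.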

When plain Docker isn't enough anymore

Let's recap on how Docker operates and what commands we need and let's see where that takes us when we add a service or two.

To dockerize something, we know that we need to:

- define a Dockerfile that contains what OS image we need, what libraries we need to install, env variables we need to set, ports that need opening and lastly how to start up our service

- build an image or pull down an existing image from Docker Hub

- create and run a container

Now, using Docker Compose we still need to do the part with the Dockerfile but Docker Compose will take care of building the images and managing the containers. Let's illustrate what the commands might look like with plain Docker:

docker build -t some-image-name .

Followed by

docker run -d -p 8000:3000 --name some-container-name some-image-name

Now that's not a terrible amount to write, but imagine you have three different services you need to do this for. Then it suddenly becomes six commands, and then you have the teardown, which is two more commands (docker stop and docker rm) for each container. That doesn't really scale.
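To make the growth concrete, here is a small sketch that only prints the plain Docker commands for three services rather than running them. The service names, the host ports and the assumption that each service lives in a directory named after it are all hypothetical.

```shell
# Print (not run) the build and run commands that three services would need.
# Service names, directories and host ports are made up for illustration.
plain_docker_commands() {
  port=8000
  for service in product-service inventory-service billing-service; do
    echo "docker build -t $service ./$service"
    echo "docker run -d -p $port:3000 --name $service $service"
    port=$((port + 1))
  done
}

plain_docker_commands
```

Three services already produce six lines, and every extra service adds two more, before we even count the teardown.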

Enter docker-compose.yaml

This is where Docker Compose really shines. Instead of typing two commands for every service you want to build you can define all services in your project in one file, a file we call docker-compose.yaml. You can configure the following topics inside of a docker-compose.yaml file:

- Build, we can specify the building context and the name of the Dockerfile, should it not be called the standard name

- Environment, we can define and give value to as many environment variables as we need

- Image, instead of building images from scratch we can define ready-made images that we want to pull down from Docker Hub and use in our solution

- Networks, we can create networks and we can also for each service specify which network it should belong to, if any

- Ports, we can also define the port forwarding, that is which external port should match what internal port in the container

- Volumes, of course, we can also define volumes
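To see how these topics might look side by side, the sketch below writes a sample file that touches each of them. Everything in it is an assumption made for illustration: the file name docker-compose.example.yaml, the Dockerfile.dev name, the PORT variable, the cache service with its redis:alpine image, the backend network and the mounted paths.

```shell
# Write an illustrative compose file; all names and values below are placeholders.
cat > docker-compose.example.yaml <<'EOF'
version: '3'
services:
  product-service:
    build:                        # Build: context plus a non-standard Dockerfile name
      context: ./product-service
      dockerfile: Dockerfile.dev
    environment:                  # Environment: variables passed to the container
      - PORT=3000
    ports:                        # Ports: external port first, internal port second
      - "8000:3000"
    volumes:                      # Volumes: host path mapped to a container path
      - ./product-service/data:/app/data
    networks:                     # Networks: which network the service joins
      - backend
  cache:
    image: redis:alpine           # Image: a ready-made image pulled from Docker Hub
    networks:
      - backend
networks:
  backend:
EOF
```

If Docker Compose is installed, running docker-compose -f docker-compose.example.yaml config should validate the file and print the resolved configuration.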

Docker Compose in action

Ok so at this point we understand that Docker Compose can take care of pretty much anything we can do on the command line and that it also relies on a file docker-compose.yaml to know what actions to carry out.

Authoring a docker-compose.yaml file

Let's actually try to create such a file and let's give it some instructions. First, though, let's do a quick review of a typical project's file structure. Below we have a project consisting of two services, each having their own directory. Each directory has a Dockerfile that contains instructions on how to build a service.

It can look something like this:

    docker-compose.yaml
    /product-service
      app.js
      package.json
      Dockerfile
    /inventory-service
      app.js
      package.json
      Dockerfile

Worth noting above is how we create the docker-compose.yaml file at the root of our project. The reason for doing so is that all the services we aim to build, and how to build and start them, should be defined in one file, our docker-compose.yaml.

Ok, let's open docker-compose.yaml and enter our first line:

    # docker-compose.yaml
    version: '3'

Now, it actually matters what you specify here. At the time of writing, the Compose file format comes in three major versions and 3 is the latest. The versions support different functionality and the syntax might even differ between them, so read more about how they differ in the official Docker docs on Compose file versions.

Next up let's define our services:

    # docker-compose.yaml
    version: '3'
    services:
      product-service:
        build:
          context: ./product-service
        ports:
          - "8000:3000"

Ok, that was a lot at once, let's break it down:

- services:, there should only be one of these in the whole docker-compose.yaml file. Also, note how we end with :, we need that or it won't be valid syntax, and that is generally true for any key

- product-service, this is a name we choose ourselves for our service

- build:, this is instructing Docker Compose how to build the image. If we have a ready-made image already we don't need to specify this one

- context:, this is needed to tell Docker Compose where our Dockerfile is. In this case, we say that it needs to go down a level to the product-service directory

- ports:, this is the port forwarding in which we first specify the external port followed by the internal port

All this roughly corresponds to the following two commands:

docker build -t [default name]_product-service ./product-service

docker run -p 8000:3000 --name [default name]_product-service [default name]_product-service

Well, it's almost true, we haven't exactly told Docker Compose yet to carry out the building of the image or to create and run a container. Let's learn how to do that starting with how to build an image:

docker-compose build

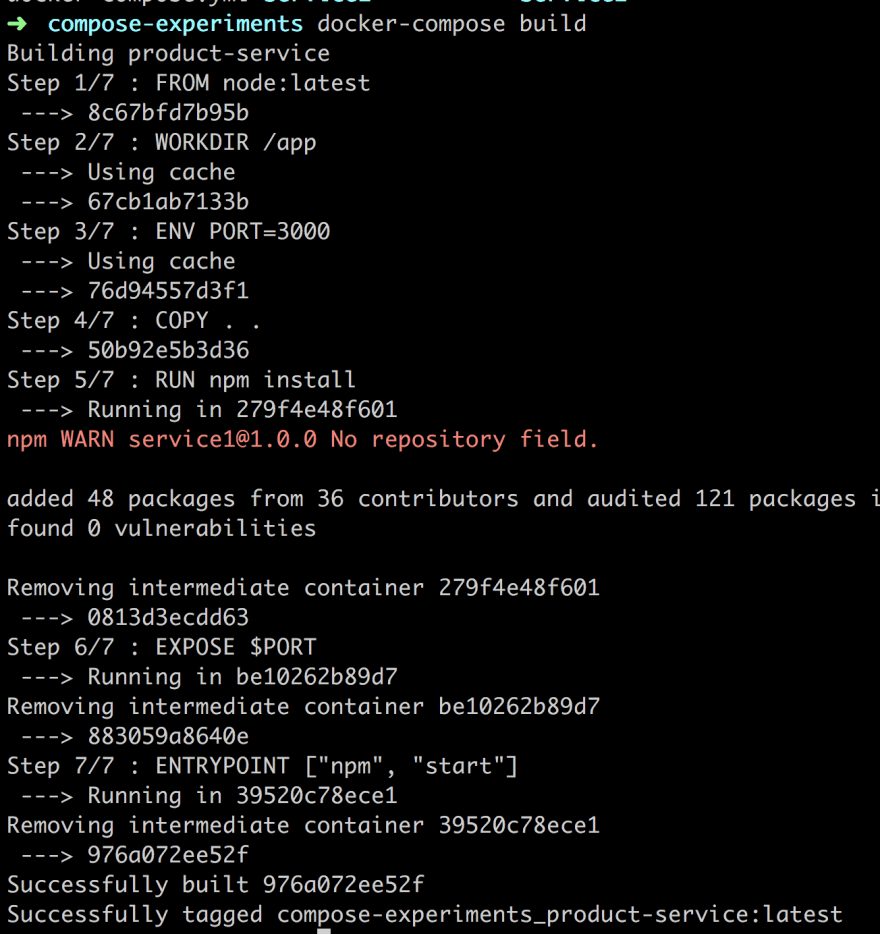

The above will build every single service you have specified in docker-compose.yaml. Let's look at the output of our command:

Above we can see that our image is being built and we also see it is given the full name compose-experiments_product-service:latest, as indicated by the last row. The name is derived from the directory we are in, that is compose-experiments and the other part is the name we give the service in the docker-compose.yaml file.

Ok as for spinning it up we type:

docker-compose up

This will again read our docker-compose.yaml file but this time it will create and run a container. Let's also make sure we run our container in the background so we add the flag -d, so full command is now:

docker-compose up -d

Ok, above we can see that our service is being created. Let's run docker ps to verify the status of our newly created container:

It seems to be up and running on port 8000. Let's verify:

Ok, so we went to the terminal and we can see we've got a container. We know we can bring it down with either docker stop or docker kill, but let's do it the docker-compose way:

docker-compose down

As we can see above, the logs say that it is stopping and removing the container. It seems to be doing both docker stop [id] and docker rm [id] for us, sweet :)

It should be said if all we want to do is stop the containers we can do so with:

docker-compose stop

I don't know about you but at this point, I'm ready to stop using docker build, docker run, docker stop and docker rm. Docker Compose seems to take care of the full life cycle :)

Docker Compose showing off

Let's do a small recap so far. Docker Compose takes care of the full life cycle of managing services for us. Let's list the most used Docker commands and what the corresponding command in Docker Compose would look like:

- docker build becomes docker-compose build, the Docker Compose version is able to build all the services specified in docker-compose.yaml but we can also tell it to build a single service, so we can have more granular control if we want to

- docker build + docker run becomes docker-compose up, this does a lot of things at once, if your images aren't built previously it will build them and it will also create containers from the images

- docker stop becomes docker-compose stop, this is again a command that in Docker Compose can be used to stop all the containers or a specific one if we give it a single service as an argument

- docker stop && docker rm becomes docker-compose down, this will bring down the containers by first stopping them and then removing them so we can start fresh

The above in itself is pretty great but what's even greater is how easy it is to keep on expanding our solution and add more and more services to it.

Building out our solution

Let's add another service, just to see how easy it is and how well it scales. We need to do the following:

- add a new service entry in our docker-compose.yaml

- build our image/s with docker-compose build

- run docker-compose up

Let's have a look at our docker-compose.yaml file and let's add the necessary info for our next service:

    # docker-compose.yaml
    version: '3'
    services:
      product-service:
        build:
          context: ./product-service
        ports:
          - "8000:3000"
      inventory-service:
        build:
          context: ./inventory-service
        ports:
          - "8001:3000"

Ok then, let's get these containers up and running, including our new service:

docker-compose up

Wait, aren't you supposed to run docker-compose build? Well, actually we don't need to. docker-compose up does it all for us: building images, creating and running containers.

CAVEAT, it's not so simple, that works fine for a first-time build + run, where no images exist previously. If you are doing a change to a service, however, that needs to be rebuilt, that would mean you need to run docker-compose build first and then you need to run docker-compose up.
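One way to make the rebuild step hard to forget is to wrap both commands in a small shell function. This is only a sketch: the function name rebuild_and_up is made up for this example, and it assumes docker-compose is on your PATH and that you run it from the directory containing docker-compose.yaml.

```shell
# Rebuild images, then recreate and start the containers in the background.
# With no arguments it covers every service; pass service names to limit it.
rebuild_and_up() {
  docker-compose build "$@" && docker-compose up -d "$@"
}
```

Calling rebuild_and_up product-service would rebuild and restart only that service. docker-compose up also accepts a --build flag that builds images before starting the containers, so docker-compose up -d --build should have a similar effect in a single step.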

Summary

Here is where we need to put a stop to the first half of covering Docker Compose, otherwise it would just be too much. We have been able to cover the motivation behind Docker Compose and we got a lightweight explanation of Microservice architecture. Furthermore, we talked about Docker versus Docker Compose and finally, we were able to compare and contrast the Docker Compose commands with plain Docker commands.

Thereby we hopefully were able to show how much easier it is to use Docker Compose and specify all your services in a docker-compose.yaml file.

We did say that there was much more to Docker Compose like Environment variables, Networks, and Databases but that will come in the next part.

Top comments (16)

The article link for Microservice over Monolithic Architecture argument is broken now. This is the working link.

Just pointing out 2 misstypings :)

Thank you for making this article better. It should be corrected now :)

Great article. Thanks for making it!

Thank you so much for writing this! I am using docker for first time at new job and this explains to me what I'm doing when I paste in my go-to commands.

hi Mic. Really appreciate you writing this comment. This is why I wrote this..

Where can I get the source code for the inventory service, or shall I just copy some part from product-service where I just get the hello world message?

Here it is

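```
const express = require('express')
const app = express()
const port = process.env.PORT || 3000;

app.get('/', (req, res) => res.send('Hello inventory service'))

// Start listening; without this the server never accepts requests
app.listen(port, () => console.log(`Inventory service listening on port ${port}`))
```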

Thank you Chris, you are quick😊 I did something similar. Both of my containers are up and running but don't know why I can not access the (localhost:external_port) from my host machine !!

are you on windows or mac?

I am using ubuntu.

I'd just like to point out that the article is inconsistent about the .yaml and .yml extension in the docker-compose file. Thanks a lot :)

Thanks I just finished part 1 and now reading this amazing one

I created this repo to summarize the series

github.com/MoatazAbdAlmageed/Learn...

I think there is some mismatch with numbering and parts (2 is part IV) 🤔

yea, it's how the series tag is created in dev.to. No idea how to change. Hopefully, you find your way based on title.