This article is part of #ServerlessSeptember. You'll find other helpful articles, detailed tutorials, and videos in this all-things-Serverless content collection. New articles are published every day — that's right, every day — from community members and cloud advocates in the month of September.

Find out more about how Microsoft Azure enables your Serverless functions at https://docs.microsoft.com/azure/azure-functions/.

I’m going to show you how to use Azure Functions to build an Action for Google Assistant.

More precisely, we will look at how to do webhook fulfillment in Dialogflow with an Azure Functions backend.

TL;DR

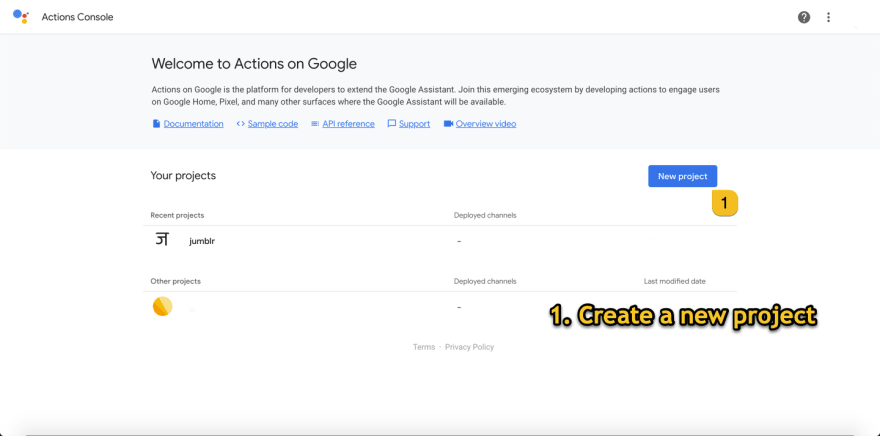

- Go to Google Actions Console and create a new project.

- Set up the invocation.

- Build Actions -> Integrate Actions from Dialogflow -> go to Dialogflow.

- Set the Default Welcome Intent -> enable webhook call.

- Set the Default Fallback Intent -> enable webhook call.

- For welcome: pick a word from the dictionary, store the session ID and the word, and send the jumbled word as the response.

- For fallback: look up the same session ID and return the word in the response.

This is a story about how I built my first Google Action. You might be asking yourself, “What is a Google Action?”


Actions on Google is a platform allowing developers to create software applications known as “Actions” that extend the functionality of the Google Assistant.

Google Assistant is an artificial intelligence-powered virtual assistant developed by Google that is primarily available on mobile and smart home devices. With the Actions from Zomato and Uber enabled, for example, Google Assistant can order food and book cabs.

Some individuals (like my grandparents, some doctors, and the differently abled) find that using their voice, rather than keyboards, makes it easier to get day-to-day tasks done. There have even been predictions that voice will replace keyboards on the workstations of the future! (But how will we code?!)

One of the benefits of building an app on a voice platform (like Google Assistant) is that it makes your product more inclusive, so that everyone gets the same benefits from the changes you believe in.

What’s word Jumblr?

My app word Jumblr is a game that gives you a jumbled word to unscramble.

For people with other devices — ex. Windows Phone


And if you have an Android or Apple device, you can install Google Assistant from your app store and you’re good to go.

Then you can say to Google Assistant,

“Hey Google, talk to word Jumblr”.

Let’s understand what happens when we invoke word Jumblr.

Whenever a user says the invocation phrase, it triggers the Action, which in turn triggers the Azure Functions backend to handle the user’s request.

For example, “Book me a cab from **Uber**” invokes the Uber Action listed in the Google Assistant directory, which then calls Uber’s backend service.

For us, it’s “Talk to **word Jumblr**” that triggers our Google Action, which checks with Dialogflow and then forwards the request to our Azure Functions backend.

Here’s what you’ll need to get started:

A Google account (You don’t need a Google Assistant device, you can test in the Actions portal)

Let’s get the party started!

Step I. Set up Google Actions

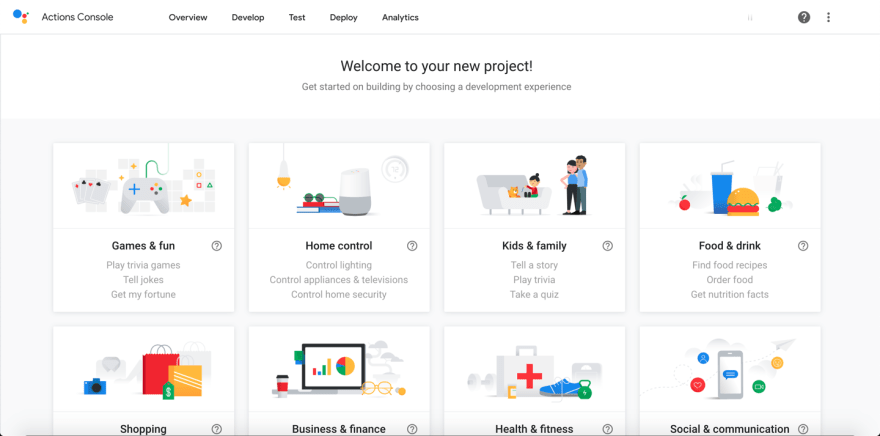

Go to Google Actions Console and create a new project.

Actions Portal will suggest some Templates — Choose Conversational


Choose Conversational, because I’ll be showing how to set up intents and webhooks, and a customized experience suits this project best.

Choose Conversational down below the Menu.


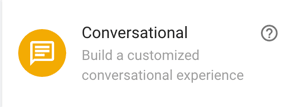

- Set up the invocation of the project. The invocation defines the phrases people will use to trigger our Google Action.

Hey Dr. Music, can you play some good vibes?


- Build actions -> Integrate actions from dialogflow -> go to dialogflow

Setup Actions and Intents — DIALOGFLOW


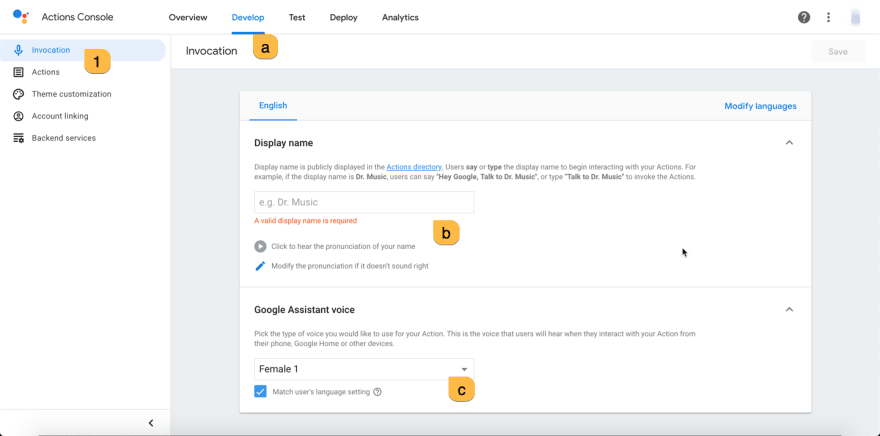

Step II. Dialogflow

Dialogflow is a Google-owned developer of human–computer interaction technologies based on natural language conversations.

We will be dealing with Intents here —

The Merriam-Webster dictionary gives the meaning as “the state of mind with which an act is done.” Tim Hallbom.

In other words, an intent captures what a particular activity, event, or set of messages is asking for. Here, in the **Welcome intent**, I want Dialogflow to send a request to my Azure Function, which will respond with a jumbled word.

**Set default welcome intent**


Set up events —

Welcome by Dialogflow, Google Assistant Welcome and Play game.

Sometimes a user makes an implicit invocation (instead of saying “Talk to word jumblr”, they say “play a game”), and the Google Action can automatically invoke word jumblr.

Setting Events which invoke Welcome intent


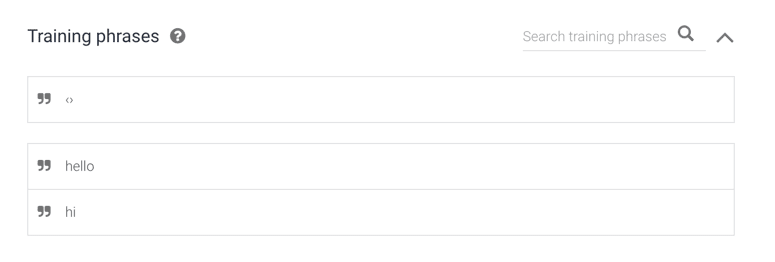

Now we need to train Dialogflow on which messages and phrases should all map to the same intent, the Welcome intent.

Here are some training phrases


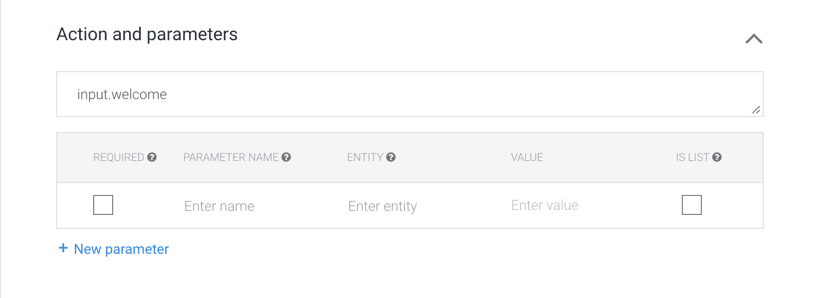

The Action and Parameters section tells the backend which intent’s action invoked our Azure Function. If this goes over your head, don’t worry; we will cover it later in Step III.

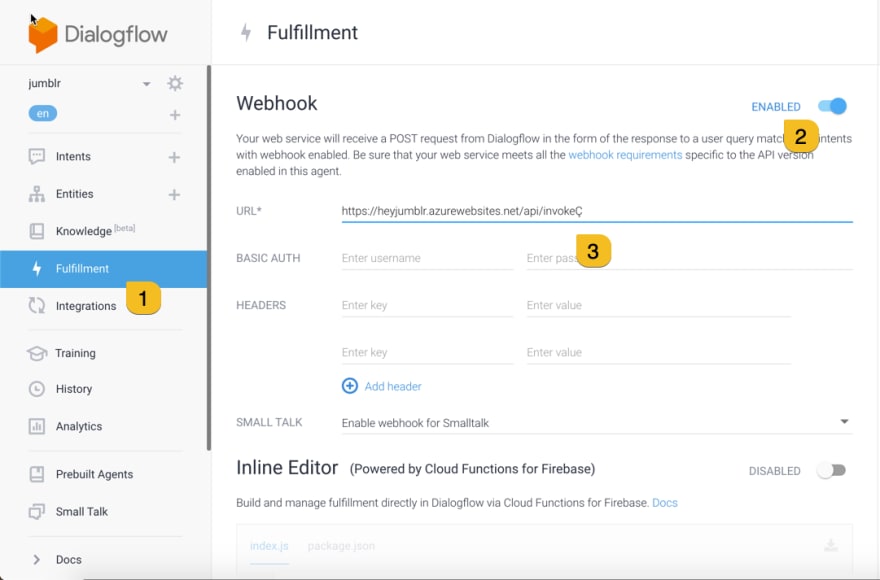

The last step is to turn on Fulfillment and enable the webhook call, so that whenever this intent is triggered Dialogflow sends the request to our Azure Function URL.

We need to do the same for the Default Fallback Intent.

Set action to unknown.

Next, go to Fulfillment and enable the webhook.

The webhook needs a URL, and we don’t have one yet.

Hold on to this tab and open a new one with portal.azure.com

Now I know you’re like, hey Ayush, stop this choo choo train and explain why we are setting up intents and fulfillments.

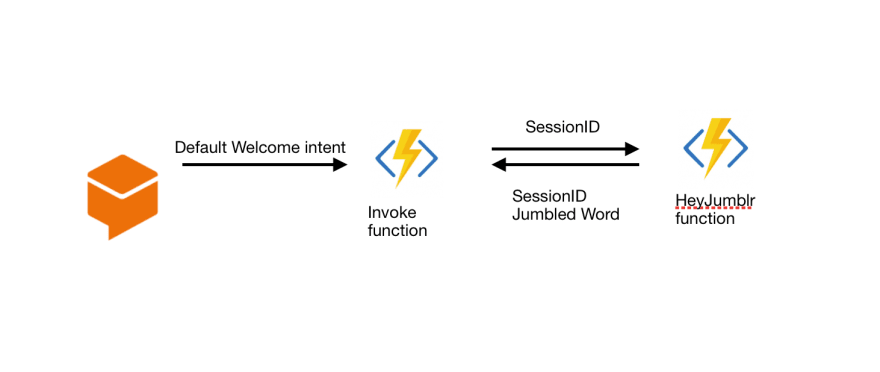

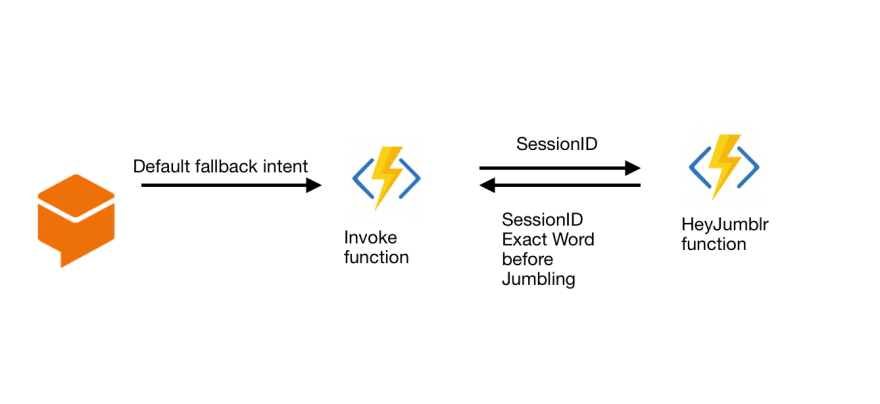

Here we go with another diagram —

Step after Invoking Welcome intent


When someone says “Talk to word jumblr”, “hi”, “hello”, etc., a request is sent to our app with a sessionID and the action of the welcome intent, which is ‘input.welcome’.

Have a look at the Dialogflow documentation to know what’s under the hood.

So what’s fallback, and why do we need it here?

Whenever a user attempts to solve a word, our app needs an intent for that. The attempt can be any word at all, even “quit” or “bye” or something ambiguous, so we define no dedicated intent for it and let it fall through to the fallback intent.

A request is sent to our app with a sessionID and the action of the fallback intent, which is ‘input.unknown’.

Step III. Preparing Azure Functions.

Hope you opened Azure Portal in new tab coz it’s gonna get schwifty here —

Create a function app

Choose an HTTP trigger function and name it invoke

Create another HTTP trigger function and name it HeyJumblr

But you’ll be like, hey, what’s Azure Functions?

Azure Functions is an event-driven, compute-on-demand experience that extends the existing Azure application platform with capabilities to implement code triggered by events occurring in virtually any Azure or third-party service as well as on-premises systems.

So what’s going on in Function I (invoke):

function I

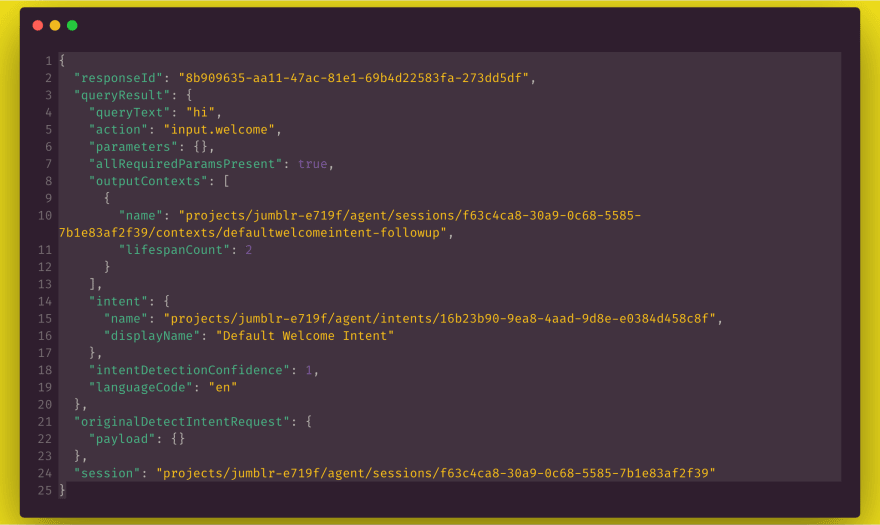

Dialogflow invokes our first Azure Function (invoke) with a JSON request.

In the first function we just split the full session string and replace it with only the session ID, mostly to reduce clutter.

Then we pass the request on to heyjumblr (the second Azure Function) with the same session ID.
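Here is a minimal sketch of that first step, not the original function. It assumes the Dialogflow v2 webhook format, where `session` has the form `projects/<project-id>/agent/sessions/<session-id>`. The helper names `extractSessionId` and `withShortSession` and the sample values are made up for illustration.

```javascript
// Hypothetical sample of the parts of a Dialogflow v2 webhook request we care about.
const sampleRequest = {
  session: "projects/my-project/agent/sessions/abc-123",
  queryResult: { queryText: "hi", action: "input.welcome" },
};

// Keep only the last path segment of the session string.
function extractSessionId(session) {
  const parts = String(session).split("/");
  return parts[parts.length - 1];
}

// Return a copy of the request body with the long session path
// replaced by the bare session ID, ready to forward to the second function.
function withShortSession(body) {
  return { ...body, session: extractSessionId(body.session) };
}

console.log(withShortSession(sampleRequest).session); // prints "abc-123"
```

Forwarding the shortened body to the second function would then be an ordinary HTTP POST to that function's URL.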

Notice what queryResult.action has for us: it’s *“input.welcome”*.

That’s how we recognise which intent called our function: whether someone said hi or tried to guess a word.
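As a rough sketch of that idea (not the code from the original function), branching on the action could look like the following. The branch names and the `routeAction` and `handler` names are hypothetical; the `context`/`req` shape assumes a Node.js HTTP-triggered Azure Function.

```javascript
// Map the action name sent by Dialogflow to the branch we want to run.
function routeAction(body) {
  const action = body && body.queryResult ? body.queryResult.action : undefined;
  if (action === "input.welcome") return "new-game"; // user said hi or started the game
  if (action === "input.unknown") return "guess";    // fallback: treat the text as a guess
  return "unsupported";
}

// Shape of a Node.js HTTP-triggered Azure Function: read req.body, set context.res.
// In the function's index.js this would be exported with `module.exports = handler`.
async function handler(context, req) {
  const branch = routeAction(req.body);
  context.res = {
    status: 200,
    body: { fulfillmentText: `Handled as: ${branch}` }, // placeholder reply
  };
}
```

Keeping the routing in a small pure function makes it easy to try out without deploying anything.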

But why do we need session ID?

Look at the next function, heyjumblr, which does the real work.

Its work involves:

- Getting a word from a dictionary (I used the “random-word” npm module)

- Jumbling the word

- Sending the word back to Dialogflow
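The first two steps can be sketched as below. This is an illustration, not the original shuffleword(): it uses a Fisher-Yates shuffle, and a small hard-coded word list stands in for the “random-word” module so the snippet runs without dependencies. The names `shuffleWord`, `jumble`, and `words` are hypothetical.

```javascript
// Fisher-Yates shuffle over the letters of a word.
function shuffleWord(word) {
  const letters = Array.from(word);
  for (let i = letters.length - 1; i > 0; i--) {
    const j = Math.floor(Math.random() * (i + 1));
    [letters[i], letters[j]] = [letters[j], letters[i]];
  }
  return letters.join("");
}

// Re-shuffle until the result differs from the answer,
// so the puzzle is never the solution itself.
function jumble(word) {
  // A word with fewer than two distinct letters cannot be rearranged.
  if (new Set(word).size < 2) return word;
  let result = word;
  while (result === word) result = shuffleWord(word);
  return result;
}

// Stand-in word list (assumption); the article picks words with "random-word".
const words = ["planet", "window", "garden"];
const word = words[Math.floor(Math.random() * words.length)];
console.log(jumble(word));
```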

But a more crucial step remains: when someone attempts to solve the word (when we get “input.unknown”), how do we know which word we gave them to solve?

The solution is pretty simple: store the sessionID along with the word in a database, so that we can recall which word was in context.

Let’s have an attempt at that:

function II - heyjumblr

Our main function starts at line no. 23, module.exports.

In line no. 26 we check whether the request contains the action “input.welcome”, which tells us someone said hi to our app. To handle it, we grab a word in line 27.

The function on line no. 14, shuffleword(), jumbles the word passed to it as a parameter.

In line no. 29 we format the data exactly the way Dialogflow can read it.

We need to pass our word in the fulfillment text of the JSON response so that Dialogflow can understand the text we send and read it aloud on the speaker.

You can read more about the Dialogflow fulfillment response in the Dialogflow documentation, because you can also send richer responses, like cards, which look good on devices with a screen.
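For reference, the simplest response body is just an object with a `fulfillmentText` field, assuming the Dialogflow v2 webhook format. The sketch below shows that shape; the function name and the wording of the message are made up.

```javascript
// Minimal Dialogflow v2 webhook response: the text for the Assistant to speak.
function buildWelcomeResponse(jumbledWord) {
  return {
    fulfillmentText: `Welcome to word Jumblr! Unscramble this word: ${jumbledWord}`,
  };
}

// This object is what the Azure Function would return as its JSON response body.
console.log(JSON.stringify(buildWelcomeResponse("tnapel")));
```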

So our first two steps in the Azure Function are done.

For the third step we need to store the sessionID and the word somewhere.

I chose Azure Table Storage, which serves us well as a simple tabular database.

We need a connection string to get access to it; our friends at Microsoft Docs can help with that.

In line no. 35–50 we stored a JSON object into the Table Storage.
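The storing and reading back can be sketched like this. It is an assumption-heavy illustration: the article does not say which SDK it uses, so the sketch follows the `upsertEntity` and `getEntity` methods of `TableClient` from the `@azure/data-tables` package, and swaps in an in-memory fake so it runs without an Azure account. The partition key `wordjumblr` and all function names are hypothetical.

```javascript
// Azure Table Storage rows need a partition key and a row key;
// here the session ID is the row key (the partition name is an assumption).
function toEntity(sessionId, word) {
  return { partitionKey: "wordjumblr", rowKey: sessionId, word };
}

// Save (or overwrite) the word for this session.
async function saveWord(tableClient, sessionId, word) {
  await tableClient.upsertEntity(toEntity(sessionId, word), "Replace");
}

// Read the word back when the user makes a guess.
async function loadWord(tableClient, sessionId) {
  const entity = await tableClient.getEntity("wordjumblr", sessionId);
  return entity.word;
}

// In-memory fake exposing the same two methods, for trying the flow locally.
// With the real SDK you would instead create the client from your connection
// string, e.g. TableClient.fromConnectionString(connectionString, tableName).
function createFakeTableClient() {
  const rows = new Map();
  return {
    async upsertEntity(entity) {
      rows.set(`${entity.partitionKey}|${entity.rowKey}`, { ...entity });
    },
    async getEntity(partitionKey, rowKey) {
      const row = rows.get(`${partitionKey}|${rowKey}`);
      if (!row) throw new Error("Entity not found");
      return row;
    },
  };
}
```

Passing the client in as a parameter keeps the storage logic testable and lets you swap the fake for the real table later.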

Now let’s handle fallback intent.

In line no. 53 we check whether the request has the action “input.unknown”.

We know what to do now: check in the table whether the same sessionID has a word stored with it, and compare the user’s word with ours.

“queryResult.queryText” has the text sent to us by the user.

We compare it with the word we stored, just like we did in line no. 54.

Whether the word is right or wrong, we send a response telling the user so.
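A minimal sketch of that comparison follows, assuming the guess arrives in `queryResult.queryText` and the answer has already been read from storage. Ignoring case and surrounding whitespace is my own choice, since speech-to-text may capitalise words, and the reply wording and function names are made up.

```javascript
// Compare the user's guess with the stored answer,
// ignoring case and surrounding whitespace.
function checkGuess(guess, answer) {
  const normalise = (s) => String(s).trim().toLowerCase();
  return normalise(guess) === normalise(answer);
}

// Build the Dialogflow response for either outcome.
function buildGuessResponse(guess, answer) {
  const text = checkGuess(guess, answer)
    ? `Correct! The word was ${answer}.`
    : `Not quite. The word was ${answer}.`;
  return { fulfillmentText: text };
}

console.log(buildGuessResponse(" Planet ", "planet").fulfillmentText); // prints "Correct! The word was planet."
```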

Awesome, we are finished with our third step.

Now we need to return to where we left off in Step II and fill in the webhook URL with the URL of the invoke function.

Voilà, now you can test your app in Dialogflow or in the Actions Console.

Points to be noted (Production app),

Please have a look at How to Design Voice User Interfaces when building an app like this for production.

Also note that cold start might get in your way, as Google Assistant only waits 10 seconds for a response from the webhook. Cold start is a term for the phenomenon that applications which haven’t been used for a while take longer to start up.

To get around cold start, host the Azure Function on a Premium plan or an App Service plan.

Serverless is the most suitable compute model for these types of projects, which need just a backend and a task to perform based on an event or an invocation.

Thanks for reading this blog.

Follow me for more awesome blogs.

The pics used in blogs were from Unsplash.

And I would like to thank the editors for refining some of the wording.

I would recommend you to stay hydrated.
