I thought our AI agent just stored who completed what - until it surfaced that Shiv delayed the rate-limiting task because of 'unclear specs,' not laziness, and that Neha had nailed OAuth in 5 hours. Suddenly it wasn't assigning tasks based on completion speed; it was assigning based on why people succeeded or failed.

What We Built

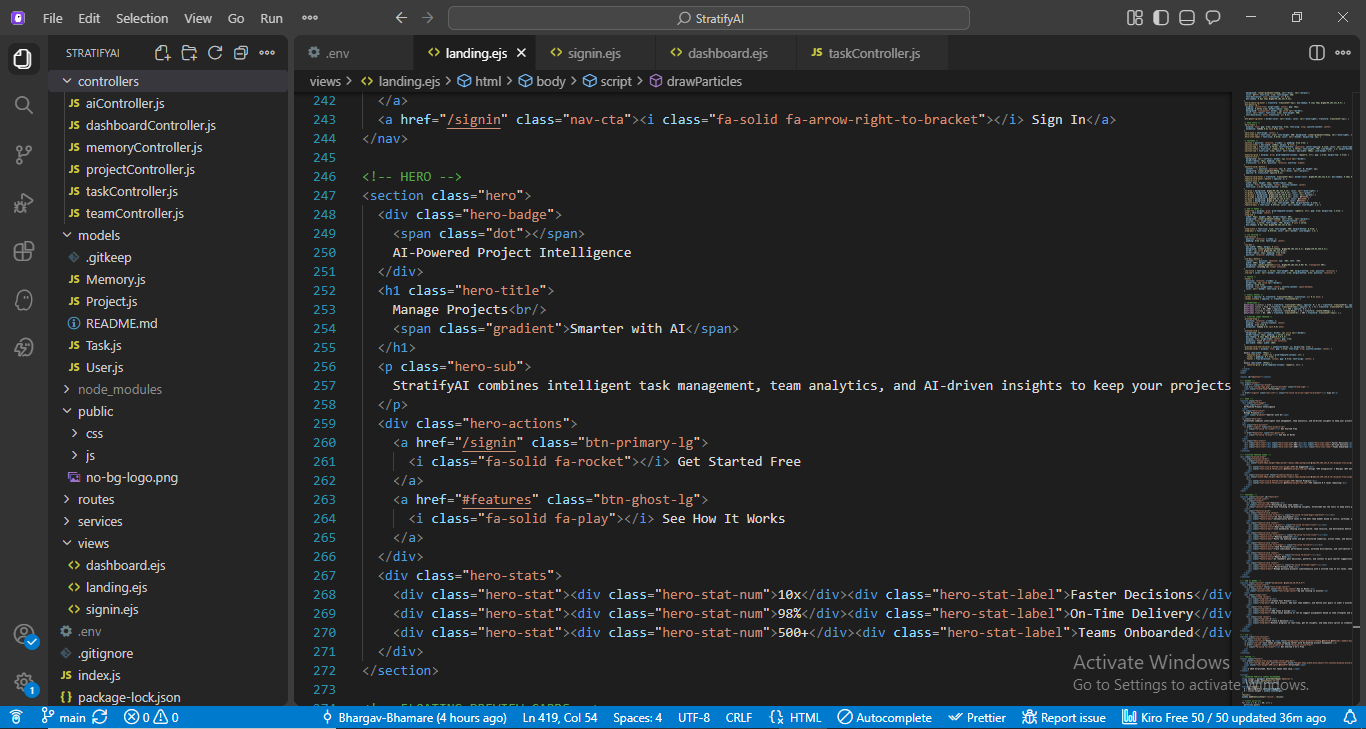

StratifyAI is an AI project manager for your cross-functional team. It watches your team work - who finishes tasks, when they get stuck, what decisions your team makes in meetings - and uses that history to suggest better task assignments. The repo is a Node.js backend. Routes for tasks, team members, projects, and AI suggestions. Controllers that orchestrate the logic. Models for tasks, projects, users, and memory. Nothing exotic on the surface, but the memory layer is where the interesting part lives.

The core idea: instead of assigning tasks based on a static skill list or random rotation, let the system remember what actually happened when a similar task hit your team before. Why did it drag? Who nailed it? What did the meeting notes say about priorities? Then, when a new task comes in, recall that history and reason about it.

The magic hook is Hindsight, a vector-backed memory system we integrated via the memoryService module. Hindsight lets us store discrete events (completed tasks, delays, meeting decisions) and retrieve them by semantic similarity. So when you ask "who should do API auth?" it doesn't just grep for "OAuth" - it fuzzy-matches on task intent and complexity, then reasons over the matches.
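
To make "retrieve by semantic similarity" concrete, here is a toy sketch of similarity-based recall. It is not how Hindsight is implemented internally. The three-number vectors are hand-written stand-ins for what an embedding model would produce, and `cosineSimilarity` and `recallTopK` are names made up for this illustration.

```javascript
// Toy illustration of similarity-based recall. The 3-dimensional "embeddings"
// below are hand-written stand-ins; a real system gets them from an embedding model.
function cosineSimilarity(a, b) {
  let dot = 0;
  let normA = 0;
  let normB = 0;
  for (let i = 0; i < a.length; i++) {
    dot += a[i] * b[i];
    normA += a[i] * a[i];
    normB += b[i] * b[i];
  }
  return dot / (Math.sqrt(normA) * Math.sqrt(normB));
}

// Return the k stored memories whose vectors are closest to the query vector.
function recallTopK(queryVector, memories, k) {
  return memories
    .map((m) => ({ text: m.text, score: cosineSimilarity(queryVector, m.vector) }))
    .sort((x, y) => y.score - x.score)
    .slice(0, k);
}

const memories = [
  { text: 'Neha completed "Implement OAuth" in 5 hours', vector: [0.9, 0.1, 0.0] },
  { text: 'Shiv delayed "Rate limiting" due to unclear API docs', vector: [0.2, 0.9, 0.1] },
  { text: 'Meeting decision: prioritize mobile-first', vector: [0.0, 0.1, 0.9] },
];

// Hypothetical query vector for "who should do API auth?"
const top = recallTopK([0.8, 0.3, 0.0], memories, 2);
console.log(top.map((m) => m.text));
```

The query shares no keyword with the rate-limiting memory, yet that memory still ranks second because its vector points in a nearby direction. That is the property a keyword search lacks.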

The Journey: From Naive to Learned

When we started, the mental model was simple: capture what happened, store it, replay it. I imagined a basic scoring system - Neha did 10 tasks, average 4 hours, so she's "fast at auth" - and hand those scores to an LLM to make suggestions.

But the moment I looked at actual task delays in the code, I realized that completion time tells you almost nothing without context. Shiv took 8 hours on the rate-limiting task. That sounds bad. But he took 8 hours because the API docs were ambiguous and he had to reverse-engineer the behavior. Neha took 2 hours on a similar task because the specs were written by someone who'd done it before and knew every edge case. If you assign the next ambiguous-spec task to Neha based on speed alone, you'll burn her out. If you assign it to someone new and expect it to be fast, you'll be surprised.

So we changed the architecture. Instead of storing just { user: Neha, task: "OAuth", hours: 5 }, we store structured events with reasons:

    // From taskController.js
    exports.delay = async (req, res) => {
      const { id, delayReason } = req.body;
      const task = await Task.findById(id);

      task.status = 'Delayed';
      task.delayReason = delayReason; // "Unclear specs", "API rate limits", etc.
      await task.save();

      const message = `${task.assignedTo} delayed task "${task.title}" due to: ${delayReason}`;
      await storeMemory(message, 'decision');
    };

The storeMemory call sends that event to Hindsight's vector database. The reason text ("due to: Unclear specs") becomes part of the semantic index. Later, when you ask for a suggestion on a new auth task, Hindsight can recall not just "Neha did auth fast" but also "Shiv hit spec problems on similar work before."
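
Because the reason text is what makes the memory useful, it may be worth normalizing it before it is stored. The sketch below is not from the repo: `buildDelayEvent` and its `metadata` fields are hypothetical, and it only shows one way the message and a structured copy of the same facts could be built together.

```javascript
// Hypothetical helper: build the text and metadata for a delay event.
// Field names (type, assignee, taskTitle, reason) are illustrative assumptions.
function buildDelayEvent(task, delayReason) {
  // Trim whitespace and fall back to an explicit marker when no reason was given.
  const reason = (delayReason || '').trim() || 'no reason given';
  return {
    type: 'decision',
    text: `${task.assignedTo} delayed task "${task.title}" due to: ${reason}`,
    metadata: {
      assignee: task.assignedTo,
      taskTitle: task.title,
      reason,
    },
  };
}

const event = buildDelayEvent(
  { assignedTo: 'Shiv', title: 'Implement API rate limiting' },
  '  Unclear rate limit docs, had to reverse-engineer '
);
console.log(event.text);
```

An empty reason would otherwise be stored as "due to: undefined", which is exactly the kind of context-free memory the design is trying to avoid.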

The task assignment suggestion flow is where the learned judgment happens:

    // From aiController.js - the suggestion endpoint
    exports.suggest = async (req, res) => {
      const { task } = req.body;

      // Step 1: Retrieve memories similar to this task
      const memories = await getMemory(task);
      // Returns things like:
      // - "Neha completed 'Implement OAuth' in 5 hours"
      // - "Shiv delayed 'Rate limiting' due to unclear API docs"
      // - "Meeting decision: prioritize auth security over speed"

      const memorySummary = memories.length
        ? memories.map((m) => `- ${m.text}`).join('\n')
        : 'No past performance data.';

      // Step 2: Hand that history to an LLM with explicit instructions
      const prompt = `You are an AI Project Manager. Based on past team performance:
    ${memorySummary}

    Suggest the best team member for this task: "${task}"
    Provide a short recommendation with reasoning.`;

      const suggestion = await callGroq(
        prompt,
        'You are an AI Project Manager who assigns tasks based on team performance history.'
      );

      res.json({ success: true, suggestion, memoriesUsed: memories.length });
    };

This is not a clever ranking algorithm. It's "fetch relevant history, ask an LLM to reason over it, return the reasoning." The bet is that an LLM, given concrete examples of what worked and what broke, will make better decisions than a human remembering rough patterns.

The Memory Bank Setup

We use Hindsight's bank feature to tune how the system reasons about team dynamics:

    // From memoryService.js
    const client = new HindsightClient({
      baseUrl: 'https://api.hindsight.vectorize.io',
      apiKey: process.env.HINDSIGHT_API_KEY
    });

    await client.createBank('stratifyai', {
      name: 'StratifyAI',
      background: 'AI project manager that tracks tasks, decisions, and team activity.',
      disposition: {
        skepticism: 2, // Don't assume correlation = causation
        literalism: 3, // Precise fact-tracking matters
        empathy: 3     // Account for team burnout and morale
      }
    });

The disposition settings are subtle but important. skepticism: 2 means Hindsight won't just assume "Neha is good at X because she did X twice." It holds context in reserve. literalism: 3 means it tracks facts precisely - if Shiv had "unclear specs," that specific context stays in the recall. If we'd set literalism: 1, it might generalize to "Shiv is slow," which is wrong.

We also feed meetings into the system. Meeting notes get parsed into structured decisions and action items, then stored as memories:

    // From aiController.js - meetingSummary endpoint
    exports.meetingSummary = async (req, res) => {
      const { notes } = req.body;

      // Note: the construction of `prompt` (presumably from `notes`) is not shown in this excerpt.
      const result = await callGroq(prompt,
        'Always respond with valid JSON only…');
      // Returns:
      // {
      //   summary: "Team discussed Q2 roadmap priorities",
      //   decisions: ["Prioritize mobile-first", "Use GraphQL for new endpoints"],
      //   actionItems: ["Complete API migration by March", "Review auth flow"]
      // }

      // Each decision becomes a memory
      for (const decision of result.decisions) {
        await storeMemory(`Meeting decision: ${decision}`, 'decision');
      }

      // Each action item becomes a memory too
      for (const item of result.actionItems) {
        await storeMemory(`Action item from meeting: ${item}`, 'task');
      }

      res.json({ success: true, summary: result.summary });
    };

So if your team decides "we're going mobile-first this quarter," that context shapes task assignment suggestions going forward. If you ask for a suggestion on a web-only refactor task, Hindsight will surface that decision and the LLM can reason: "This contradicts what we decided. Maybe suggest it to someone else or re-discuss."
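
The loops over `result.decisions` and `result.actionItems` assume the model really returned well-formed JSON with both arrays present. The excerpt doesn't show whether callGroq already parses and validates the reply, so the following is a defensive sketch under that uncertainty. `parseMeetingResult` is a hypothetical helper, not part of the repo.

```javascript
// Hypothetical helper: coerce the model's reply into the shape the loops expect.
// Accepts either a JSON string or an already-parsed object, and falls back to
// empty lists instead of throwing on malformed output.
function parseMeetingResult(raw) {
  const empty = { summary: '', decisions: [], actionItems: [] };

  let parsed = raw;
  if (typeof raw === 'string') {
    try {
      parsed = JSON.parse(raw);
    } catch (err) {
      return empty; // not valid JSON: store nothing rather than garbage
    }
  }
  if (parsed === null || typeof parsed !== 'object') return empty;

  // Keep only non-empty strings so each stored memory has real content.
  const toStrings = (value) =>
    Array.isArray(value)
      ? value.filter((v) => typeof v === 'string' && v.trim() !== '')
      : [];

  return {
    summary: typeof parsed.summary === 'string' ? parsed.summary : '',
    decisions: toStrings(parsed.decisions),
    actionItems: toStrings(parsed.actionItems),
  };
}
```

Returning empty lists means a bad reply stores no memories at all. For a memory bank that feeds later suggestions, that is probably safer than storing a half-parsed decision.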

Before vs. After: A Side-by-Side

To make the difference concrete, here's the same scenario two ways:

WITHOUT Hindsight (naive assignment):

New task comes in: "Implement API rate limiting"

Assignment logic: "Last person who did auth was Neha, so assign this to Neha"

Result: Neha gets overloaded. Three weeks later, it's still not done. Nobody knows why.

WITH Hindsight (learned assignment):

New task: "Implement API rate limiting"

Hindsight recalls:

"Shiv delayed 'Rate limiting' due to: unclear API docs, had to reverse-engineer"

"Vishal successfully completed 'Cache layer with rate limits' in 6 hours"

"Meeting decision: we're investing in API stability this quarter"

LLM reasoning: "Vishal has direct experience. Shiv struggled with unclear docs before. Recommend Vishal, or if assigning to Shiv, provide crystal-clear specs first."

Result: Vishal gets the task with full context. It ships in 5 hours. Shiv learns what 'clear specs' looks like for future work.

The difference: from "who was fast last time?" to "who succeeded under similar constraints, and why did others fail?"

How It Behaves

Let's walk through a realistic scenario over five days:

Day 1: Neha gets assigned "Implement OAuth flow." She finishes in 5 hours. The system stores: "Neha completed task 'Implement OAuth' in 5 hours" and "Task type: authentication, difficulty: medium estimated, actual: 5h".

Day 2: A new task comes in: "Set up JWT authentication." You hit /ai/suggest with that task. Hindsight recalls the OAuth memory, which goes into the prompt sent to Groq. The model reasons: "Neha just nailed a similar auth task in 5 hours. She has proven velocity and a clear understanding. Recommend Neha." You get back: { suggestion: "Neha - proven auth expertise and speed", memoriesUsed: 1 }.

Day 3: You assign "Implement API rate limiting" to Shiv. Turns out, the provider's API docs are cryptic. Shiv delays it, logs: delayReason: "Unclear rate limit docs, had to reverse-engineer". The system stores that.

Day 5: New task: "Build rate-limiting layer for cache endpoints." You ask for a suggestion. Hindsight recalls: "Shiv delayed 'Implement API rate limiting' due to: Unclear rate limit docs, had to reverse-engineer". The LLM reasons: "Shiv struggled with this pattern before because of unclear docs. Choose someone else, or pair him with someone who's done it, or get better docs first." The suggestion might be "Vishal (if they've done this before) or loop back to Shiv with clear specs drafted first."

That's the pivot from "Neha is fast" to "Neha is fast at well-specified work. Shiv hits walls on underspecified work and needs clarity before velocity." It's richer reasoning because it's rooted in why things happened, not just how long they took.

What We'd Do Differently

Several things are visible in the code that point to tradeoffs and limits:

The Groq model is a bottleneck. We're calling it for every suggestion (temperature: 0.7, max_tokens: 1024). In production, you'd want to batch suggestions, cache common patterns, or use a smaller model for routine decisions. Right now, suggesting a task assignment costs an LLM call. For a single group project, that's fine. For 50 concurrent groups, it gets expensive.
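
As a rough idea of what "cache common patterns" could look like, here is a minimal in-memory cache with a time-to-live, keyed on normalized task text. None of this is in the codebase: the class name, the 10-minute default, and the injectable clock are all assumptions made for the sketch.

```javascript
// Minimal in-memory TTL cache for suggestions, keyed on normalized task text.
// The class name, the 10-minute default, and the injected clock are illustrative assumptions.
class SuggestionCache {
  constructor(ttlMs = 10 * 60 * 1000, now = () => Date.now()) {
    this.ttlMs = ttlMs;
    this.now = now;
    this.entries = new Map();
  }

  // Treat "Set up JWT  Auth" and "set up jwt auth" as the same task.
  static keyFor(task) {
    return task.trim().toLowerCase().replace(/\s+/g, ' ');
  }

  // Return the cached suggestion, or undefined if it is missing or expired.
  get(task) {
    const key = SuggestionCache.keyFor(task);
    const entry = this.entries.get(key);
    if (!entry) return undefined;
    if (this.now() - entry.storedAt > this.ttlMs) {
      this.entries.delete(key);
      return undefined;
    }
    return entry.suggestion;
  }

  set(task, suggestion) {
    this.entries.set(SuggestionCache.keyFor(task), { suggestion, storedAt: this.now() });
  }
}
```

In the suggest handler, the idea would be to check `cache.get(task)` before calling Groq and to call `cache.set(task, suggestion)` afterwards. The catch is staleness: a cached answer ignores any memory stored since it was computed, so the TTL should stay short, or the cache should be cleared whenever a new memory is written.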

Hindsight recall is simple and effective, but not filtered. We call client.recall(MEMORY_BANK, query) with a default budget. If your team has 500 tasks logged, we might get back 10 similar memories. That's usually good - recent, relevant context. But there's no real-time feedback loop saying "that suggestion was wrong, here's why." Over months, stale or wrong patterns sit in the bank. You'd need explicit memory pruning or versioning.
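
Short of real pruning inside the bank, one cheap mitigation is to filter what comes back from recall before it reaches the prompt. The sketch below assumes each recalled memory looks like `{ text, createdAt }` with an ISO date string. That shape is a guess, and the shape Hindsight really returns may differ. `pruneMemories` and its defaults are hypothetical.

```javascript
// Hypothetical post-recall filter: drop stale and duplicate memories before prompting.
// Assumes each memory looks like { text, createdAt }, where createdAt is an ISO date
// string; the actual shape returned by recall may differ.
function pruneMemories(memories, { maxAgeDays = 90, limit = 10, now = new Date() } = {}) {
  const cutoff = now.getTime() - maxAgeDays * 24 * 60 * 60 * 1000;
  const timeOf = (m) => new Date(m.createdAt).getTime();
  const seen = new Set();

  return memories
    .filter((m) => timeOf(m) >= cutoff) // keep only recent memories
    .sort((a, b) => timeOf(b) - timeOf(a)) // newest first
    .filter((m) => {
      // After sorting, the first copy seen is the newest one, so keep that.
      const key = m.text.trim().toLowerCase();
      if (seen.has(key)) return false;
      seen.add(key);
      return true;
    })
    .slice(0, limit);
}
```

This only hides old entries from the prompt. They still sit in the bank, so it is a stopgap rather than the pruning or versioning described above.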

We don't train on feedback. If a suggestion bombs (you assign a task, it fails spectacularly), we log it to Hindsight, but we don't update the disposition or learn a stronger signal. The system is reactive, not adaptive. You could imagine a version that says "when I make a bad suggestion, adjust skepticism higher" or "if Neha has been overloaded, deprioritize assigning to her." That's not in this codebase yet.
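
The overload case is the easiest of these to approximate without any learning: count each person's open tasks and put that into the prompt next to the recalled memories. The helper below is a sketch, not repo code. It assumes tasks look like `{ assignedTo, status }` and that a status of 'Completed' marks finished work. Only 'Delayed' appears in the excerpts above, so the 'Completed' value and the threshold of 3 are assumptions.

```javascript
// Hypothetical helper: count open tasks per assignee and render a prompt section.
// Assumes tasks look like { assignedTo, status } and that 'Completed' marks finished work.
function workloadSummary(tasks, overloadThreshold = 3) {
  const open = new Map();
  for (const task of tasks) {
    if (task.status === 'Completed') continue; // finished work doesn't count as load
    open.set(task.assignedTo, (open.get(task.assignedTo) || 0) + 1);
  }

  return [...open.entries()]
    .sort((a, b) => b[1] - a[1] || a[0].localeCompare(b[0])) // busiest first, then by name
    .map(([name, count]) => {
      const flag = count >= overloadThreshold ? ' (overloaded, avoid if possible)' : '';
      return `- ${name}: ${count} open task${count === 1 ? '' : 's'}${flag}`;
    })
    .join('\n');
}
```

Appending this under a "Current workload:" line in the prompt would give the LLM a reason to pass over someone who is fast but already buried. It is still not feedback-driven learning, only one more piece of context.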

Storage is straightforward but unoptimized. Tasks live in a basic MongoDB schema (Task.js). There are no compound indexes on assignedTo + status + createdAt, so queries that filter on those fields fall back to scans whose cost grows linearly with the collection, which starts to hurt once you hit thousands of tasks. For a single cross-functional team project, that's future-you's problem. For a platform, you'd want an explicit index strategy early.
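
For reference, declaring such an index in Mongoose is a one-liner on the schema. The sketch below wraps it in a small function so it can be read in isolation. `addTaskIndexes` is a made-up name, and it assumes the schema behind Task.js has assignedTo, status, and createdAt fields (createdAt usually comes from the `timestamps` schema option).

```javascript
// Sketch: declare the compound index on a Mongoose schema.
// `taskSchema` is assumed to be the schema behind Task.js, with assignedTo,
// status, and createdAt fields (createdAt typically comes from { timestamps: true }).
function addTaskIndexes(taskSchema) {
  // Equality-matched fields first, then the sort field, newest first.
  taskSchema.index({ assignedTo: 1, status: 1, createdAt: -1 });
  return taskSchema;
}
```

It would be called once, before the model is compiled from the schema. The field order matters: this index serves "Shiv's delayed tasks, newest first" well, but it does little for a query that filters on status alone.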

What's Next

The codebase is stable for its current use case: a small team, a few dozen to low hundreds of tasks, human-in-the-loop review of suggestions. If this scales to more teams or longer project history, the obvious bottlenecks are Groq call latency, Hindsight recall precision, and memory churn.

The more interesting question is whether this approach generalizes. Most task assignment systems still use skill matrices ("Neha knows JavaScript, Python, and CSS"). What if they used learned feedback instead? "Neha was fast at JavaScript when the specs were clear, but slow when requirements drifted mid-sprint." That's richer, harder to maintain, but way more useful for real-world projects where clarity and context matter as much as raw skill.

If you're interested in building memory systems for agents, check out Hindsight on GitHub and the Hindsight documentation. If you want to understand the broader landscape of agent memory systems and how they integrate with AI task management, that's worth a read too.

The repo is messy in places, unoptimized in others, and missing features that look obvious in retrospect. But it ships, it works, and it teaches a specific lesson: memory becomes useful the moment you stop treating it as a filing system and start treating it as context for reasoning. That shift changes what becomes possible.
