Last Hacktoberfest I contributed to the GatsbyJS documentation, where the maintainers were encouraging refactors for tone, content and accessibility. One of the tweaks they wanted was to replace words like "easy" and "simply". I hadn't thought about it before, but it seemed like a great idea!

How frustrating is it when you're bashing your head against a problem, only for the docs to tell you how easy it is!

I raised an issue in the repo for my own site, Up Your A11y, and when I finally got around to tackling it, looking through all my static MDX files felt a little overwhelming. Luckily, there's a package for that!

Enter alex

Alex is an npm package that acts as a linter for insensitive writing in your project. In their own words:

> Whether your own or someone else’s writing, alex helps you find gender favoring, polarizing, race related, religion inconsiderate, or other unequal phrasing in text

Getting started

You can install alex globally with the command `npm install alex --global`. Once it's installed, you can run the linter from your terminal with the `alex` command.

My main content files are stored in separate folders, and running alex on its own only checks the current directory. Happily, I was able to pass alex a pattern telling it where to look: `alex content/topics/**/*.mdx`

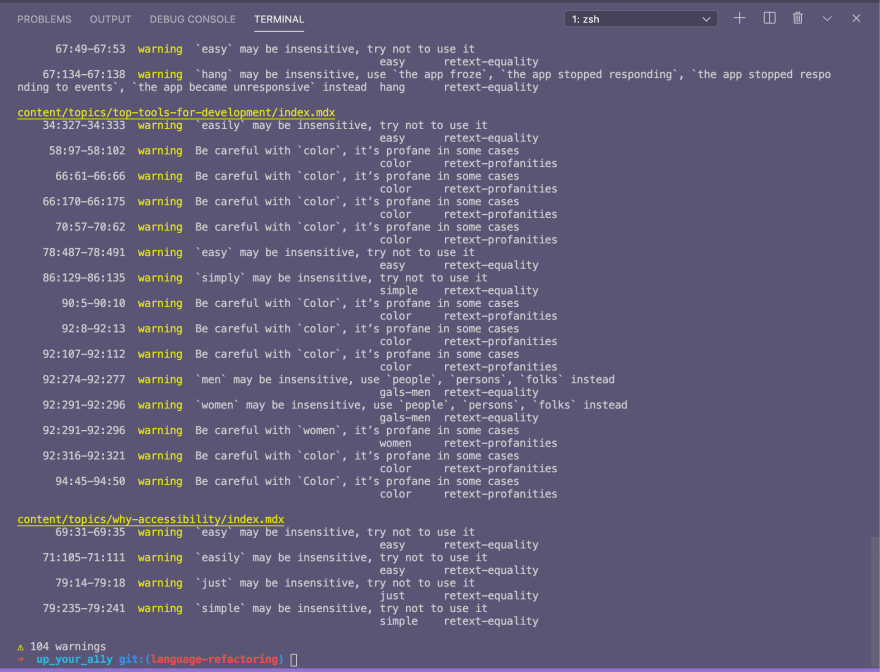

The initial run picked up 104 warnings(!!) Here's a snapshot of what the output looked like:

Common themes

My 104 warnings had a tonne in common, and they fall broadly into three groups:

- Usage of "easy"/"easily", "simple"/"simply" and "just" in my tutorials

- False positives for words like "color" and "special" (which, in my context, don't relate to people)

- A few sundry warnings about "men" and "women" (in statistics I've quoted) and "disabled"/"disability"

What I changed

In the end, I made changes to 12 articles, with 60 additions/deletions. You can see the full pull request here:

The vast majority of my changes replaced some really unfortunate instances of "easily", "simply" and so on. I still get 39 warnings when I run alex on my project, but for now I'm happy that I've considered each of them and, given their context (as described above), don't believe they need to be changed.

What I learned

My main takeaway is that I'm inevitably going to write things like "this simple tweak" or "this can be easily solved by..." in my content. Honestly, when I'm in the flow of writing, these feel like nice, softening, approachable words to use, almost as if I'm reassuring the reader: "Yes, you can do this! Don't worry".

I'm way more aware now that, even if those words have the desired effect for some people, there's a whole other group I may be alienating and frustrating, accidentally making them feel the opposite of what I intended.

I'm unlikely to change my default way of writing overnight (I wish!), so in the meantime I'm committing to running alex each time I add a new article to my site.

I'd be really interested in hearing from anyone else who's used this tool, or something similar, and how you got on!

Did you find this post useful? Please consider buying me a coffee so I can keep making content 🙂

Top comments

This is very cool!! It would be neat to see Alex in a format accessible to folks outside of the dev community. (My first thought was something similar to the Grammarly browser extension.)

I think something that acts as a linter for everyday writing like emails, Slack messages, etc. would help accessible and inclusive language become more common, by helping us recognize problematic phrasing and learn alternatives (explanations of why would be cool too).

P.S. I believe Grammarly has some features around tone and inclusive language, but they're behind their Premium paywall :)

Just noticed there's a browser extension based on alexjs which could definitely be helpful - github.com/skn0tt/alex-browser-ext...

Oh I would love to see that too! It could really help workplace comms in particular be more inclusive and welcoming ♥️

Thanks for sharing this tool. I didn't know about it before.

I try to think about the tone and language I use when writing articles. Words like "easy", "obviously" and "just" in particular can easily (🤔) slip through, because prior experience with a technology makes us think "everyone knows it". It's also hard to remember that not everyone is equally familiar with a topic. Tools like this help by making us question some assumptions. It starts with awareness.

I ran the tool on my blog and got 90 warnings. I quickly went through them and brought that down to 67. The rest look OK in context. I'll remember to use this tool in the future.

Totally agree when you say it helps us question our assumptions!

Glad you found it useful for your blog!

I looooooove alex.js so super happy to see you spreading the word! I try to integrate the linter for any documentation or technical blogs I work on 😍

Also your terminal is really pretty ✨💜

Thanks! It's fairyfloss-ified 😁

I like the idea of integrating the linter, but I had a good number of what I think are "false positives", and I'm not sure about having that noise in the linter output. Do you ignore any words in your config?

Yeah, I definitely have it configured a bit. For my company's docs, we have `profanitySureness` set to `1` instead of the default `0`, and that eliminated a lot of the false positives for us (like `execute` or `failed`, because we're a testing platform, so we use those terms a lot). Then we add `allow`s as they pop up. For example, I think we have the `hostesses-hosts` rule in there because we talk about network hosts.

That makes sense, thanks for sharing!
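If you want to try the settings described in the config comment above, here is a minimal sketch that writes them to an `.alexrc` file in the project root using a shell heredoc. It assumes alex picks up a JSON `.alexrc` when you run it from that folder; `profanitySureness` and `allow` are the options the comment mentions, and `hostesses-hosts` is the rule ID it quotes. Any further IDs you add to `allow` are best copied from alex's own warning output rather than guessed.

```shell
#!/bin/sh
# Sketch: write an .alexrc config (JSON) to the current directory.
# profanitySureness: 1 -> skip words rated only "unlikely" to be profane
#   (the default of 0 reports those too).
# allow -> rule IDs to silence, e.g. "hostesses-hosts" for network hosts.
cat > .alexrc <<'EOF'
{
  "profanitySureness": 1,
  "allow": ["hostesses-hosts"]
}
EOF
```

Running alex again afterwards is a quick way to see which warnings the config has silenced.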