Introduction

Docker is one of the most popular software tools of recent years and has revolutionised the way software is installed and deployed. Even so, explaining what Docker is, or what problem it solves, has always been a struggle.

In this post, I answer the following questions: why use Docker, what Docker is, what a Docker container is, and what a Docker image is.

Quick Note

This entire article is based on concepts that I learnt from Stephen Grider's Udemy course.

Why use Docker?

Before we go into what Docker is, let us try to understand the problem Docker is trying to solve.

The image above details the steps involved when a user tries to install a piece of software on a computer. The user hits an error during installation, works around it, and tries again, repeating the steps in the workflow diagram until the installation succeeds.

Often, when users reach out to support groups, they realize that the installation process and the errors encountered vary with the underlying operating system and the set of libraries the software depends on. This is precisely the problem Docker solves.

Docker provides an environment (a container) that allows the user to install and run the software without worrying about setup or dependencies. In other words, a user installing the software on Windows, macOS, or Linux will experience the same behavior, so troubleshooting and debugging no longer depend on the underlying operating system.

What is Docker?

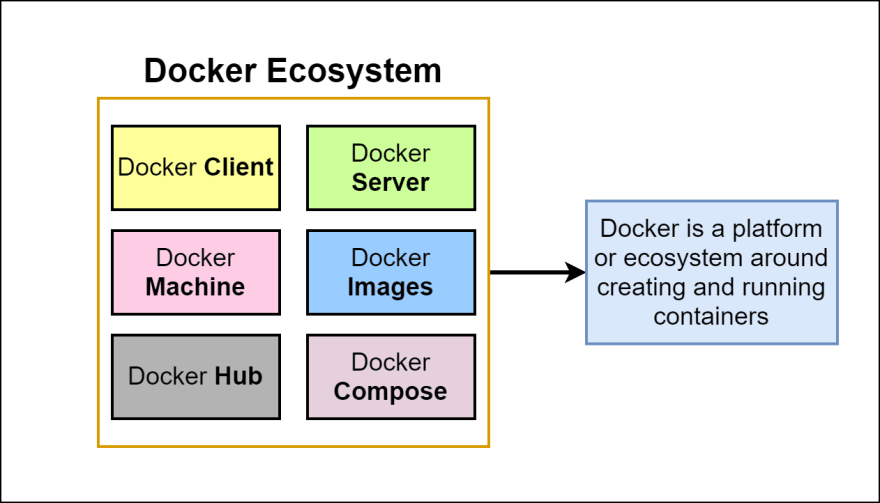

Docker is a platform, or ecosystem, of different projects, tools, and pieces of software built around creating and running containers.

So, what is a Docker container? To answer this, we first have to revisit the fundamentals of what an operating system is.

Fundamentals of an Operating System

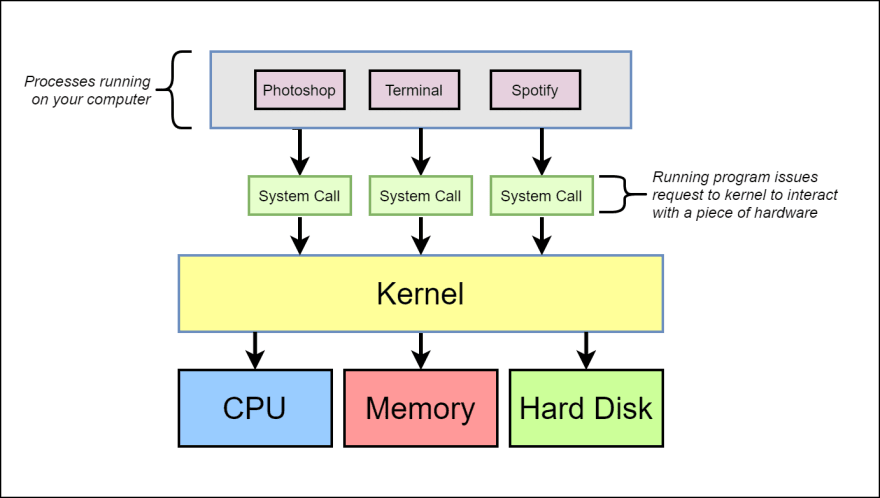

The following diagram provides a quick overview of the Linux operating system.

As you can see from this diagram, the Kernel is the running software that governs the access between the programs that are running on the computer and the physical hardware that is connected to the computer.

When a user issues a command through a program, e.g. playing a song in Spotify, the program conveys the request to the Kernel through a system call. A system call works much like a function call: the Kernel exposes a set of them, each handling one specific kind of request, such as reading a file from the hard disk.

The kernel receives the command and communicates with the hard disk/memory to find the song that has to be played by the software.
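
As a minimal sketch of this flow, the functions in Python's standard `os` module are thin wrappers over the operating system's file-related system calls. The file name and its contents below are made up for illustration; the point is only that the program asks the kernel to touch the disk on its behalf.

```python
import os
import tempfile

# Hypothetical file standing in for a song stored on the hard disk.
path = os.path.join(tempfile.mkdtemp(), "song.txt")

# os.open / os.write / os.close ask the kernel to create the file and store bytes.
fd = os.open(path, os.O_WRONLY | os.O_CREAT)
os.write(fd, b"la la la")
os.close(fd)

# Reading the file back is again a request to the kernel, not direct disk access.
fd = os.open(path, os.O_RDONLY)
data = os.read(fd, 100)
os.close(fd)

print(data)
```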

Namespacing & Control Groups

Building on the above example, let us assume a hypothetical scenario where two programs, say Photoshop and Spotify, require different versions of Python.

Giving each program the Python version it needs can be achieved through a concept called namespacing.

Namespacing

Namespacing is the process of isolating resources per process or set of processes, so that each process sees only the segment of a resource, such as the hard disk, that has been set aside for it.

In the above example, namespacing can be used to segment the hard disk so that each segment has a specific version of Python installed.

When a program makes a system call to interact with the hard disk, the Kernel determines which process is making the call and redirects it to the segment that holds the version that program needs. The following illustration explains the same.

The diagram below describes how namespacing can be used to isolate resources such as network, users, hostnames, etc.
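
The redirection described above can be imitated with a toy model. This is only a sketch: real namespaces are implemented inside the Linux kernel, and the segment names, the process-to-segment assignment, and the Python versions are assumptions chosen for illustration.

```python
# Toy model only: each "segment" of the disk holds its own Python version.
disk_segments = {
    "segment_a": {"python": "Python 2"},
    "segment_b": {"python": "Python 3"},
}

# Which segment each process is namespaced to (hypothetical assignment).
namespace_of = {
    "Photoshop": "segment_a",
    "Spotify": "segment_b",
}

def kernel_read(process_name, item):
    """Pretend system call: look up `item` in the caller's own segment."""
    segment = namespace_of[process_name]
    return disk_segments[segment][item]

print(kernel_read("Photoshop", "python"))  # Python 2
print(kernel_read("Spotify", "python"))    # Python 3
```

Both programs ask for "python" in the same way, and neither can see the other's segment.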

Control Groups

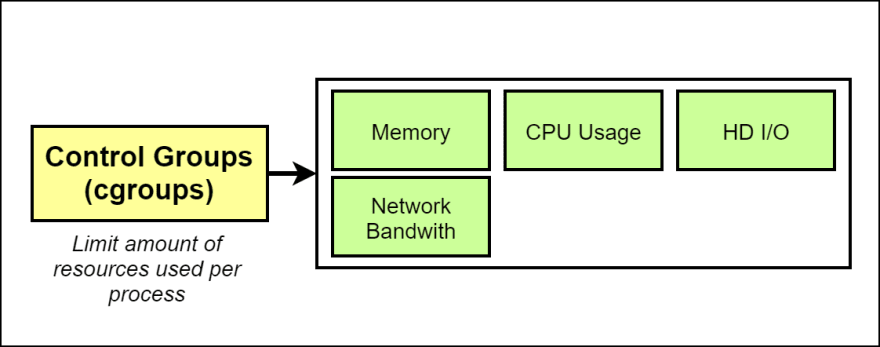

Control Groups (cgroups) are used to limit or control the amount of resources available to a particular process or a set of processes.

In the diagram shown above, control groups limit the memory, CPU, hard disk I/O, and network bandwidth available to a process.
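
Docker exposes limits of this kind as options on its `docker run` command. The following is a minimal sketch that assumes the `--memory` and `--cpus` options; the image name `busybox` and the limit values are placeholders. The snippet only builds and prints the command, it does not run Docker.

```python
import shlex

def limited_run_command(image, memory, cpus):
    """Build a `docker run` command that caps memory and CPU for a container."""
    return [
        "docker", "run",
        "--memory", memory,   # upper bound on RAM, e.g. "256m"
        "--cpus", str(cpus),  # how much CPU the container may use, e.g. 0.5
        image,
    ]

command = limited_run_command("busybox", "256m", 0.5)
print(shlex.join(command))
```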

Note: The features of namespacing and control groups are specific to the Linux operating system.

Docker Container

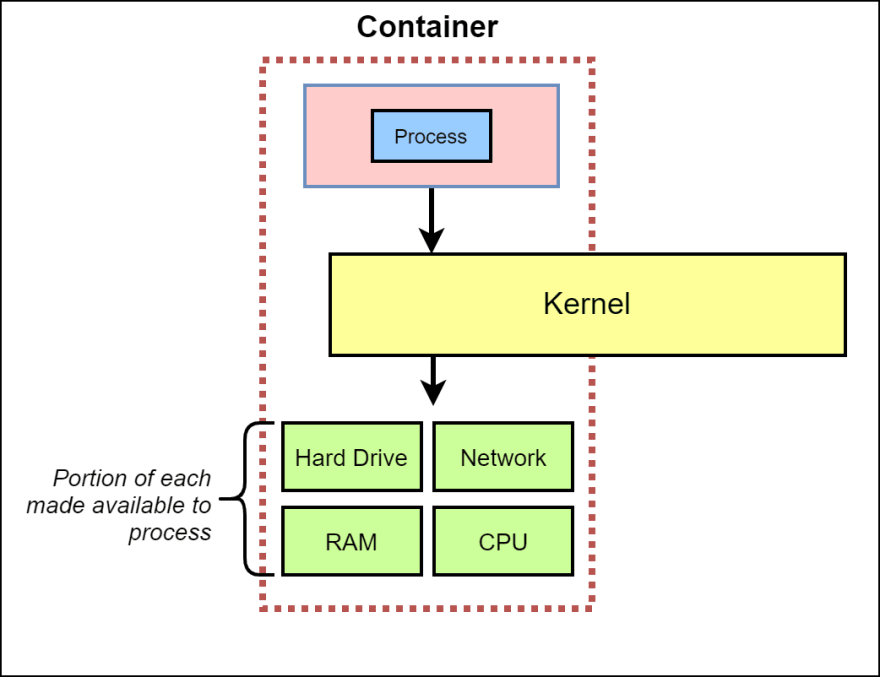

Now that we have established the concepts of namespacing and control groups, a container can simply be explained as a process or a set of processes that has a grouping of resources specifically assigned to it.

Going back to the same example as above, a container can be depicted as a logical grouping of processes. The resources allocated to the container are shown below.

So, in essence, a container is a logical construct: a process or a set of processes with dedicated resources allocated to it. The following visual summarizes the same. Note that a portion of the hard drive, memory, CPU, and network is dedicated to the processes inside a container.

Now that we have understood what a Docker container is, let us find out what a Docker image is and how a Docker image relates to a Docker container.

Docker Image

A Docker image is a file system snapshot, together with a startup command, that is used to create a container and run the processes defined within it.

A Docker image can be visualized as a blueprint and the instance created out of the blueprint becomes the container.

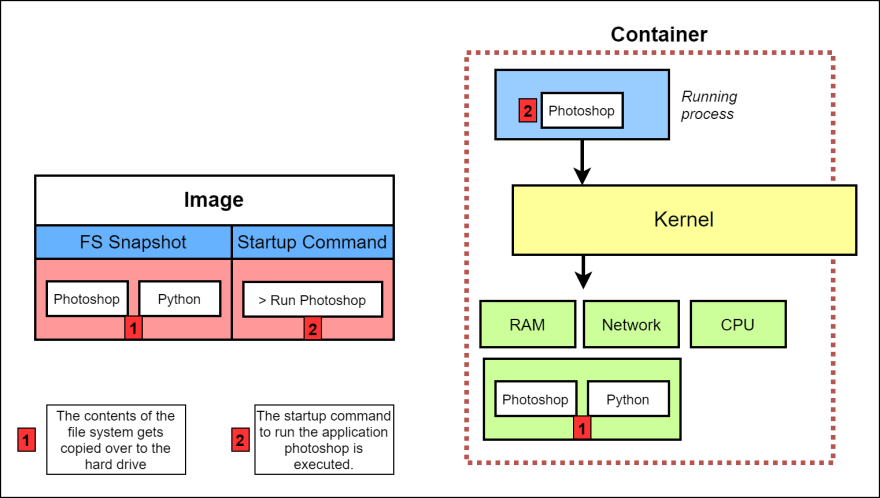

The following visual explains the step-by-step process of how exactly an image is turned into a container.

Step 1 - The contents of the file system snapshot, in this case, Photoshop and Python-related files, are copied over to the hard disk.

Step 2 - The run Photoshop startup command specified in the image is executed. This runs the application from the container's portion of the hard drive, and you now have Photoshop as a running process within the container.

Note that the Photoshop application communicates via the Kernel (that resides outside the container) to the hardware resources allocated to the application.
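
The two steps above can be sketched as a toy model. This is not how Docker is implemented; the class names, the snapshot contents, and the `create_container` helper are hypothetical and exist only to show the blueprint-to-instance relationship.

```python
from dataclasses import dataclass, field

@dataclass
class Image:
    """Toy image: a file system snapshot plus a startup command."""
    fs_snapshot: dict
    startup_command: str

@dataclass
class Container:
    """Toy container: its own copy of the files and its running processes."""
    disk: dict = field(default_factory=dict)
    running: list = field(default_factory=list)

def create_container(image):
    container = Container()
    # Step 1: copy the snapshot into the container's segment of the disk.
    container.disk = dict(image.fs_snapshot)
    # Step 2: execute the startup command; here we only record it as running.
    container.running.append(image.startup_command)
    return container

image = Image(
    fs_snapshot={"photoshop": "app files", "python": "runtime files"},
    startup_command="run photoshop",
)
first = create_container(image)
second = create_container(image)

print(first.running)
print(first.disk is not second.disk)
```

One image can produce many containers, and each gets its own copy of the files, which is why the blueprint analogy fits.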

Conclusion

I hope this article has helped you understand the fundamentals of Docker, Docker images, and Docker containers. There are other components within the Docker ecosystem, such as the Docker client, the Docker server, and Docker Hub, that also help in creating, managing, and deploying Docker containers.

Thanks for reading the article and do not forget to connect with me on Twitter @skaytech.
