Hey there,

For the past month, the Uilicious team has been going around one-north (our country's startup hub) and a few other business hubs, knocking on over 200 doors to survey folks and find out which front-end frameworks are the most popular in production, and what the state of testing is in Singapore 🇸🇬

This is something Uilicious as a company did on a much smaller scale 2 years ago to get a general sense of things, and which we hope to repeat over the next few years.

🤔 Sounds good? Let's get to the results.

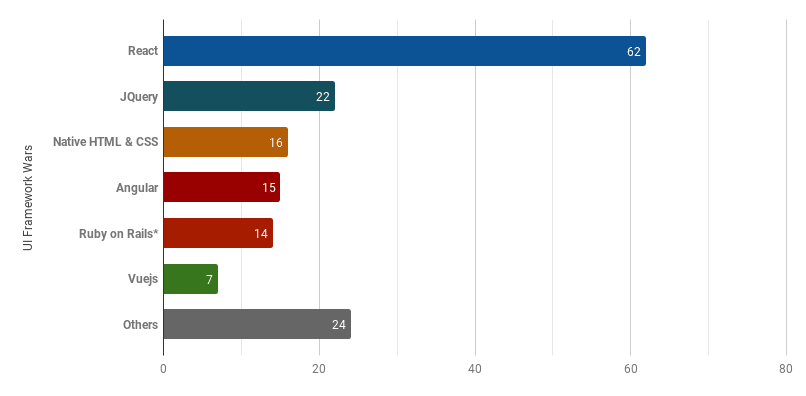

UI Framework Wars - in production?

The endgame answer everyone wants to know in the ongoing UI "standard" war:

React was hands down the winner 🔥 when it comes to applications developed in production.

The emphasis on production is intentional. Amid the recent changes and hype around newer frameworks, it is important to remember that production codebases under daily use and active development cannot constantly ride the hype train: migrating from one framework to another is slow and tedious, and takes a long time. Case in point: jQuery came in a close 2nd place, a spot it has held (or bettered) for easily the past 10 years 😴

Another surprising contender was Ruby on Rails. Originally not an option on the list, it received so many write-ins in the "Others" free-text field that it was added to the list.

A sad result for me personally: Vue.js is not as popular as I had hoped (disclosure: we are Vue.js supporters and help organize its local meetups).

Perhaps an even more surprising result: 0 responses for Polymer web components, which was an option in the survey. Probably because it's still a little too bleeding edge for production 🚅

Development Team Size?

Of the teams surveyed, a good half have a development team of 5-10 people. This is a good indicator that most companies have outgrown their initial startup days.

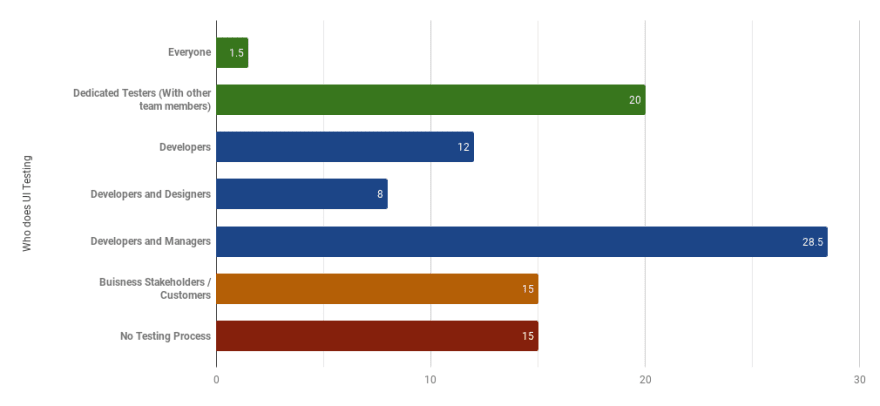

Who does testing?

Interestingly, despite the programmer labor crunch, nearly half of companies (48.5%) rely heavily on their developers for testing.

This is worrying in two ways. First, developers are typically not good testers of their own code due to tunnel vision. Second, they are also the most costly option, with the average developer salary easily double that of a dedicated manual QA tester 😶

However, what was even more shocking 😱 was that a significant 15% of respondents indicated that they do not test at all, and this included large dev teams of more than 10.

Another 15% rely on their customers themselves, or on their business stakeholders, for testing.

Although some respondents, especially solution vendors, indicated that this is not a problem, it leads to an unhealthy adversarial relationship between the development team and the business team.

Tensions worsen when previously fixed bugs reappear due to a lack of regression testing, and it is the business team who, in most cases, faces the wrath of the clients and the impact of any lost client business.

This is something a good QA team, or even a single dedicated QA tester, can ideally help manage, and thankfully 20% of respondents had one. So, a small win here 👍

Side note: the results below exclude teams without a testing process.

"Unknown" represents respondents who are not directly involved in the full testing process, and hence could not give an accurate answer.

How much time does your team spend on testing?

While not shown in the chart, having a dedicated QA brings down the overall time the development team spends on testing, with most such teams in the 0-10% and 10-25% categories 😃

For teams that spend more than 25% of their time testing, insufficient test coverage tends to be the main issue, along with the sheer time it takes to manually test on various devices and screen sizes ⌛

This is further compounded when the developers are the testers, as that is not an effective use of their time.

How often do you test your application?

A large 40% of teams seem to be running on a weekly or bi-weekly release cycle of development and testing.

This is followed by teams that test as and when deployments are done, on an unknown schedule, which is typically once a month or less often.

Finally, a round of applause for the rare 3% who automate their tests to run either every single workday or on every commit 😎

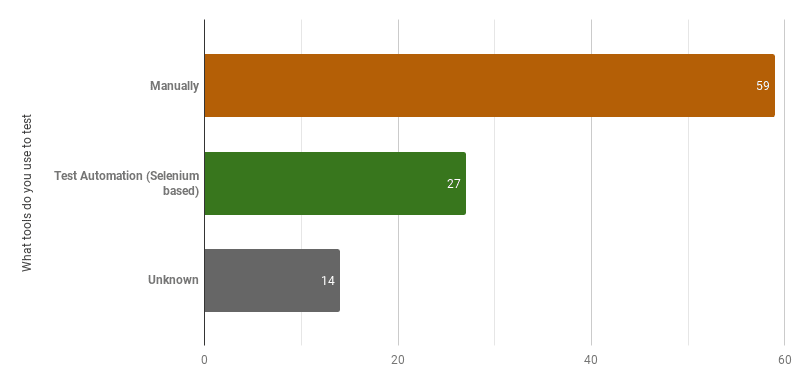

What tools do you use to test?

Despite the growth of high-tech web apps built on the latest buzz, from AI to the blockchain, testing for all these apps is still happening in rather low-tech, manual ways 🤐

One heartening thing to see is the rise of test automation to 27%, significantly higher than the ~5% we saw two years ago. Perhaps this is indicative of a slowly maturing industry.

How often do you get a bug report from customers/business team?

A particularly painful question for most. It seems that daily and weekly bug reports are the grind faced by 75% of teams.

Something almost every dev dreads getting from a customer 😭

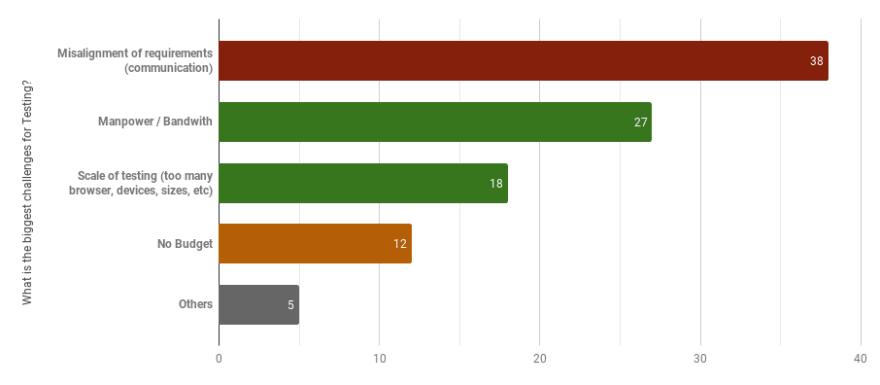

What is the biggest challenge for testing?

Finally, there is the open-ended question on the biggest challenge teams face in testing.

Unsurprisingly, communication is still the number one challenge at 38%. A great shout-out to The Mythical Man-Month for those who have read it.

Miscommunication of requirements can happen between any of the following: Customers, Business Team, Developers, Designers, Managers and Testers.

This is followed by the lack of manpower in the current talent crunch (27%) and the sheer scale of web testing across various screen sizes, pages, and browsers (18%). All of these are things Uilicious as a company would be glad to help with.

And finally, the startup classic: no budget. At 12%, this never changes 😫

The following are personal observations and opinions regarding the survey, AKA not backed by hard data.

Personal observations on testing

What we found interesting was that most companies surveyed have either their entire development team, or the majority of it, outsourced overseas: an indicator of the ongoing programmer manpower crunch in Singapore 🤔

A worrying observation from the various interviews was that most teams who test manually tend to do so only for new features, without any regression checks. Even scarier are the teams that do no testing at all.

This is understandable for extremely early-stage startups that have just started. But several were well past this stage, including larger "Series A/B" level startups.

This worries me personally as a consumer of the modern web, as it would explain the many times extremely obvious bugs appear in an application (such as in login and checkout) that simple regression testing would have caught.

It also raises a question for me: if a company is not willing to invest any resources into testing functionality, what resources are in place to ensure the security of its system? Especially considering my own personal data on some of these applications.

Something I hope will be improved on in time 😧

Personal observations on UI framework

On the UI framework war side of things, many respondents mentioned during the interviews that they plan to experiment with, and move from React to, other frameworks for new projects or as part of their migration plan. So perhaps React will slowly topple next year?

Go Vue, go 🏎️

So how can Uilicious help make this better?

Uilicious.com testing platform

First, we are a test automation platform that aims to remove all the pain points in website testing (hence the survey), and we are constantly iterating based on feedback.

To get users up and running quickly, our platform allows testers to easily write test scripts like this one:

```javascript
// Let's go to dev.to
I.goTo("https://dev.to")

// Fill up the search box and submit
I.fill("Search", "uilicious")
I.pressEnter()

// I should see both myself and my co-founder
I.see("Shi Ling")
I.see("Eugene Cheah")
```

And churn out shareable test results like these ...

Uilicious QA-as-a-service

Second, we have since soft-launched Uilicious Services.

As the survey results make obvious, most teams lack the resources required to form their own dedicated Quality Assurance team. That is something we can help with, from gathering testing requirements to writing and maintaining tests.

If the testing challenges above apply to you, let us know and we will be glad to help get your full QA process up and running with our Uilicious testing service and experienced QA engineers.

And once your team grows large enough to have its own dedicated QA team, that team can take over writing and maintaining the test scripts on the platform.

Through the testing service, we hope to help teams convince their project managers to improve the overall quality of their website and product 😉, and to get dev teams up and running faster on their projects, alongside our test platform.

What should we improve for next year's survey?

One notable thing is that, due to the current survey size (~200) and the way the data was collected (almost entirely at one-north), there is a heavy bias towards smaller startups. So take the results as vague indicators at best. Something we hope to improve on in the future 😵

Another thing to note: due to the way this survey was conducted, the results greatly underrepresent larger development teams.

Many of the much larger startups (which probably have larger development teams) have an office manager at the entrance who turns away any survey requests 😥

When we repeat the survey next year, we will ask for the local and overseas team sizes separately, which should give some insight into the rate of developer outsourcing in Singapore.

Similarly, there are clear differences in testing requirements between a solutions vendor and a web company, which we will try to capture next year, along with the question of "how old is the company?"

So if you have any ideas on how we should improve the survey, do let me know.

Till then, as a startup founder myself, I gotta get back to investors and fundraising to grow Uilicious, so gotta go now...
