Before talking about Docker, let’s first talk about Virtualization in general.

Virtualization

Virtualization is a way to utilize the underlying hardware more efficiently.

Do we really need virtualization?

In general, yes. If every single application requires a separate machine, chances are that the machine will sit underutilized most of the time, and we will be wasting hardware resources such as CPU and memory.

With virtualization, many applications can share the same physical hardware.

A virtualization solution acts as a layer on top of the actual hardware.

How does a system achieve virtualization?

It does so via a Hypervisor.

A hypervisor is the software that creates and runs virtual machines and shares the physical hardware among them.

There are two types of hypervisors:

- Type-1, also known as bare-metal, runs directly on the hardware.

- Type-2 runs as an application on the host operating system.

Virtual Machines (VMs)

A virtual machine, as the name suggests, is a machine with its own OS. A VM emulates physical hardware.

An application running inside a VM doesn’t know whether it’s running on a real machine or not, hence the name Virtual Machine.

Many VMs can run on the same machine without knowing about each other.

A virtual machine runs on the Hypervisor and accesses the underlying hardware through the Hypervisor.

In this diagram, we see two different VMs running on the same machine using a Hypervisor.

- VM1 has Linux and is running an application named App1.

- VM2 has Windows and is running two applications — App2 and App3.

Containerization

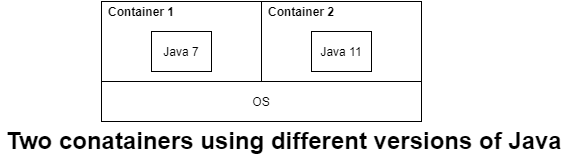

Containerization, or OS-level virtualization, is an OS feature in which the kernel allows multiple isolated user-space instances to run side by side. These instances are called containers.

A Container is like a box in which an application runs. The container provides virtualization to that application.

The application doesn’t know which host OS it is actually running on. For that application, the container is everything.

It is the container’s job to provide libraries, dependencies, and everything else that an application needs for a successful execution.

The application runs within the boundaries of the container, so it is less dependent on the host OS.

For the same reason, it is isolated from other containers running on the same machine, even though all of them share the host’s kernel.

What problems does a container solve?

Portability

Let’s suppose we’re building an application from scratch.

So,

- We find a machine.

- We download all the required software and its dependencies.

- Then we install and configure everything.

Configuring a piece of software generally involves changing a config file on the OS, or maybe a network port, and so on.

Finally, everything is up & running on your dev machine. Cool stuff!

Now, how would you move this application along with its configurations to another machine?

Without any containerization tool or technology, we would need to repeat everything that we did on our dev machine.

And if everything is scattered and not properly documented and managed, we can easily miss an important config on the new machine.

In that case, either the machine itself or some other application on it may break.

With containers or VMs, we can just copy the VM or the container to the new machine.

Because apps are configured to run inside a container and nothing is installed directly on the host machine, you move them as a package.

Packaging and Deployment

Extending the previous point, as soon as you containerize your application, it is ready to be moved to any machine.

To create a container for your app, you describe everything about your app: configs, ports, file system dependencies, other software dependencies, etc.

The container becomes the runnable and deployable package.

Any machine that has the right container infrastructure will be able to run your container.

It is similar to Java classes: once compiled, you can run the same class file on any machine as long as it has the right JVM.
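
With Docker, the description of an app mentioned above is usually written as a Dockerfile. The following is a minimal sketch in Python that renders one from a small spec. The `AppSpec` class, its field names, and the example values (`python:3.12-slim`, port 8000, `app.py`) are assumptions made for illustration; only the Dockerfile instructions themselves (`FROM`, `WORKDIR`, `COPY`, `ENV`, `EXPOSE`, `CMD`) are standard.

```python
import json
from dataclasses import dataclass, field


@dataclass
class AppSpec:
    """Hypothetical description of an app; all field names are illustrative."""
    base_image: str                                # supplies OS libraries and the runtime
    port: int                                      # port the app listens on in the container
    env: dict = field(default_factory=dict)        # configuration as environment variables
    command: list = field(default_factory=list)    # process to start in the container


def render_dockerfile(spec: AppSpec) -> str:
    """Render the spec as Dockerfile text using standard instructions."""
    lines = [f"FROM {spec.base_image}", "WORKDIR /app", "COPY . /app"]
    lines += [f"ENV {key}={value}" for key, value in sorted(spec.env.items())]
    lines.append(f"EXPOSE {spec.port}")
    # The exec form of CMD is a JSON array, so json.dumps produces valid syntax.
    lines.append(f"CMD {json.dumps(spec.command)}")
    return "\n".join(lines)


spec = AppSpec(
    base_image="python:3.12-slim",
    port=8000,
    env={"APP_ENV": "production"},
    command=["python", "app.py"],
)
dockerfile = render_dockerfile(spec)
print(dockerfile)
```

Everything the app needs is declared in one place, which is what makes the resulting package portable.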

Scaling

Containers encourage the microservices pattern, with one service per container.

Under heavy load, if you need another instance to share the load, you just add more containers of the same service, and it scales out quickly.
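
As a rough illustration of sharing the load, here is a toy Python model that spreads requests over a list of container instances in round-robin order. It assumes something sits in front of the containers and distributes requests, which this article does not cover; the service name `orders` and the request IDs are made up.

```python
from collections import Counter
from itertools import cycle


def dispatch(requests, containers):
    """Assign each request to a container in round-robin order."""
    targets = cycle(containers)
    return {request: next(targets) for request in requests}


requests = [f"req-{i}" for i in range(12)]

# A single instance handles every request.
before = Counter(dispatch(requests, ["orders-1"]).values())

# Scaling out: two more identical containers of the same service.
after = Counter(dispatch(requests, ["orders-1", "orders-2", "orders-3"]).values())

print(before)
print(after)
```

With three instances, each container ends up with a third of the requests. Because every instance is started from the same image, adding one more is a matter of starting another container.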

Dependency conflicts and clean removal of applications

Without containers, dependency conflicts may arise because multiple apps may require different versions of the same dependency.

- Let’s suppose A needs version x of Sybase and B needs version y of Sybase.

- Let’s consider another scenario: if you are uninstalling an app from your system, chances are that you will forget to remove its dependencies, which remain there and eat up the system’s space.

Dependencies like these not only make work difficult but also pollute the machine when you don’t need them anymore.

With containers, an app and its dependencies are packaged as a bundle, so apps do not conflict over dependencies.

Also, instead of removing an app, you remove the container. Everything is removed as a package, which keeps the machine clean.
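
The Sybase scenario above can be sketched as a toy model in Python. Both classes are hypothetical simplifications: `SharedHost` pretends a machine can hold only one version of a library, and `ContainerHost` keeps a private dependency set per app.

```python
class SharedHost:
    """Toy model of a machine where every app installs into one global location."""

    def __init__(self):
        self.installed = {}  # library name -> the single version present on the host

    def install(self, app, library, version):
        current = self.installed.get(library)
        if current is not None and current != version:
            raise RuntimeError(
                f"{app} needs {library} {version}, but {current} is already installed"
            )
        self.installed[library] = version


class ContainerHost:
    """Toy model where each app keeps its own private set of dependencies."""

    def __init__(self):
        self.containers = {}  # app name -> {library: version}

    def install(self, app, library, version):
        self.containers.setdefault(app, {})[library] = version

    def remove(self, app):
        # Dropping the container drops its dependencies with it.
        self.containers.pop(app, None)


shared = SharedHost()
shared.install("A", "sybase", "x")
try:
    shared.install("B", "sybase", "y")
    conflict = None
except RuntimeError as error:
    conflict = str(error)
print(conflict)

isolated = ContainerHost()
isolated.install("A", "sybase", "x")
isolated.install("B", "sybase", "y")   # no conflict: each app has its own copy
isolated.remove("A")                   # A and its dependencies are gone together
print(isolated.containers)
```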

Docker

Let’s finally talk about Docker.

The Docker website defines Docker as an open platform for developing, shipping, and running applications.

Docker is a container platform that lets us bundle an application and all its dependencies as a package, so that it can be shipped and run on any machine that has Docker installed.

It is written in Go.

Under the hood, a Docker container is simply a Linux process that is isolated and constrained using Linux kernel features such as namespaces, cgroups, and seccomp.
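
To make the namespace part a little more concrete, the sketch below lists the namespaces the current process belongs to by reading the symlinks under `/proc/self/ns`. This only works on Linux; the function name `current_namespaces` is made up for this example, and it returns an empty dict on systems that lack the directory.

```python
import os


def current_namespaces(ns_dir="/proc/self/ns"):
    """Return {namespace type: identifier} for the calling process.

    Each entry in /proc/self/ns is a symlink whose target looks like
    'pid:[4026531836]'. Two processes are in the same namespace when
    their targets match. Returns {} if the directory does not exist.
    """
    if not os.path.isdir(ns_dir):
        return {}
    namespaces = {}
    for name in sorted(os.listdir(ns_dir)):
        try:
            namespaces[name] = os.readlink(os.path.join(ns_dir, name))
        except OSError:
            # Skip entries that cannot be read with the current permissions.
            continue
    return namespaces


for name, ident in current_namespaces().items():
    print(f"{name:20} {ident}")
```

Running this on the host and again inside a container should typically show different identifiers for entries such as `pid`, `net`, and `mnt`, which is how the same kernel presents each container with its own view of the system.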

Docker terminology

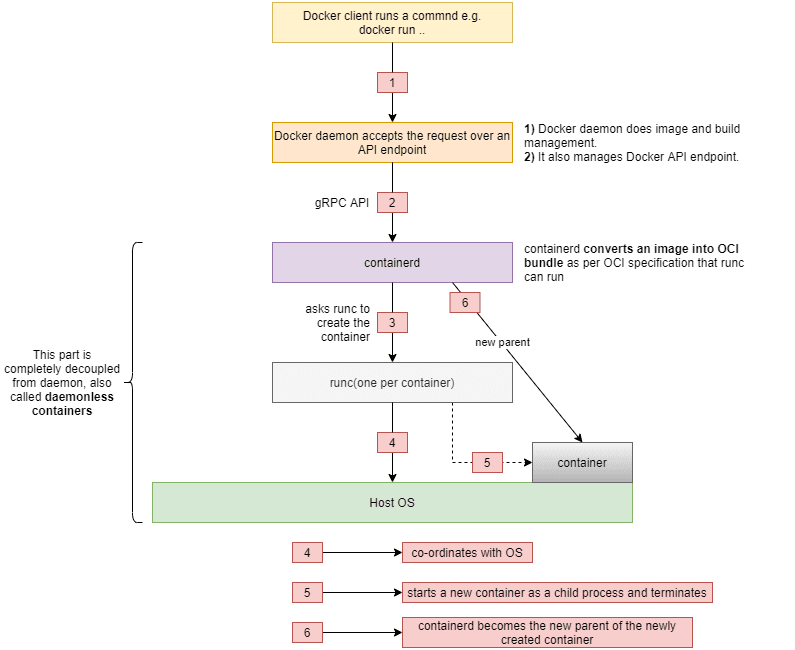

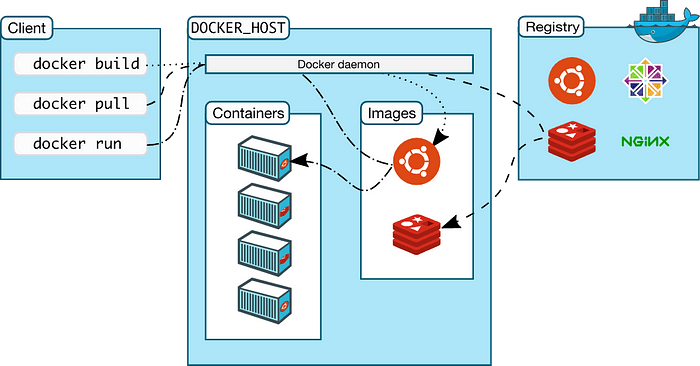

- Docker client: The Docker client is installed on the client machine. We run docker commands using the Docker client.

- Docker daemon: Generally installed on the same machine as the client. It listens for Docker API requests and does everything required for a Docker container to run.

- Docker registry: A registry is a place where Docker images are stored. For instance, GitHub is a place where people manage their codebases. In the same way, Docker images are stored in a Docker registry. A registry can be public, like Docker Hub, or private.

- Docker image: An image is a read-only template with instructions for creating a Docker container.

- Docker container: A container is a runnable instance of an image.

Difference between a Docker image and Docker container

Let’s take the example of a Java class. A class has some code, which could be stored as a .java file in a local directory or as a GitHub project. A class doesn’t do anything on its own.

But when we compile the class and run the resulting .class file, the same read-only class becomes a runtime object, an executable piece of code that does what it was coded for.

A Docker image is just like a .java file: it has instructions on what to do when you want to run a container.

And when we run a Docker image via the Docker client, Docker creates a running container.

Just as we can create multiple objects of a single class, we can create multiple running containers from a single Docker image.
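
The same analogy can be written down in a few lines of Python. This is only a model of the relationship, not how Docker stores anything; the `Image` and `Container` classes and their fields are invented for the illustration.

```python
from dataclasses import dataclass
from itertools import count


@dataclass(frozen=True)
class Image:
    """Read-only template: frozen, so it cannot be modified after creation."""
    name: str
    command: tuple


class Container:
    """Runnable instance created from an image, with its own identity and state."""
    _ids = count(1)

    def __init__(self, image: Image):
        self.image = image
        self.id = next(Container._ids)
        self.state = "created"

    def start(self):
        self.state = "running"
        return self


image = Image(name="web", command=("python", "app.py"))

# Two independent containers started from one image.
c1 = Container(image).start()
c2 = Container(image).start()

print(c1.id, c1.state, c1.image.name)
print(c2.id, c2.state, c2.image.name)
```

Both containers point at the same unchanged image, while each has its own ID and its own state.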

High Level Working

There’s a nice diagram on the Docker site that depicts the high-level flow.

When we run a command to run a container from an image:

- Docker checks if the image already exists on the system.

- If yes, it uses the image to run a container.

- If no, it goes to a registry to find and pull the image.

- Docker downloads the image to the system.

- Docker runs the image and starts the container.

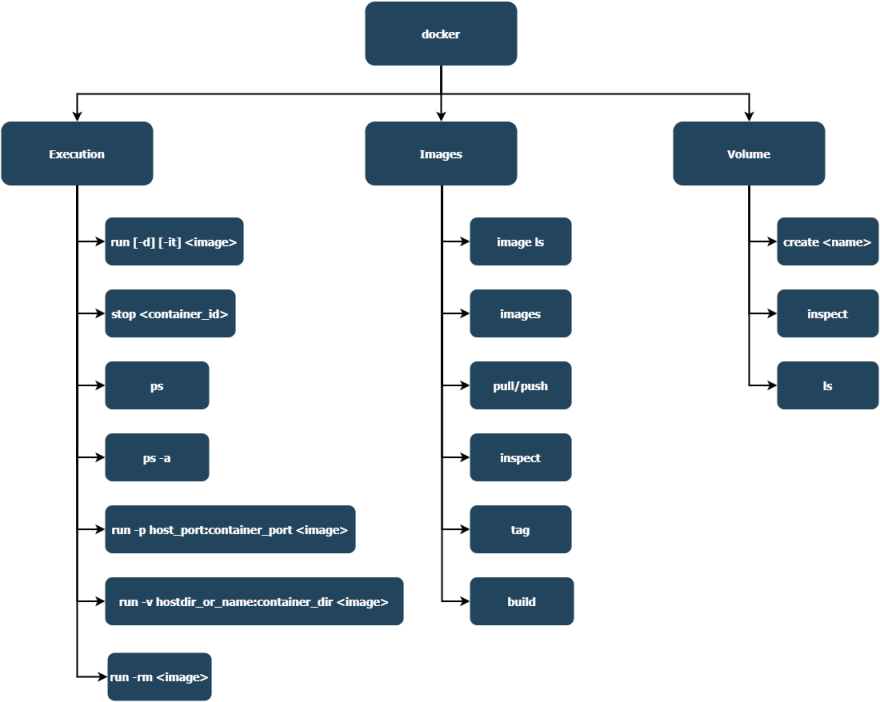
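
The lookup order described in the steps above can be sketched as follows. Plain dicts stand in for the local image store and the remote registry, so the function `run_container` and its arguments are stand-ins for illustration rather than anything from Docker’s API.

```python
def run_container(image_name, local_images, registry):
    """Follow the lookup order described above and return the steps taken.

    local_images and registry are plain dicts standing in for the local
    image store and a remote registry.
    """
    steps = []
    if image_name in local_images:
        steps.append("found locally")
    else:
        steps.append("not found locally")
        if image_name not in registry:
            raise LookupError(f"image {image_name!r} not found in registry")
        local_images[image_name] = registry[image_name]  # the "pull"
        steps.append("pulled from registry")
    steps.append("container started")
    return steps


registry = {"hello-world:latest": "<image data>"}
local_images = {}

first = run_container("hello-world:latest", local_images, registry)
second = run_container("hello-world:latest", local_images, registry)

print(first)
print(second)
```

The first run has to pull the image; the second finds it locally and skips the download.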

Useful Docker commands
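
A few commonly used Docker CLI commands are collected below as argument lists, with a small hypothetical helper that runs one through `subprocess` when the `docker` binary is available. The image name `nginx`, the container name `web`, and the port mapping are example values, not something this article prescribes.

```python
import shutil
import subprocess

# Common Docker CLI commands as argument lists for subprocess.
# "nginx" and "web" are example image and container names.
COMMANDS = {
    "pull an image": ["docker", "pull", "nginx"],
    "list local images": ["docker", "images"],
    "run a container in the background": [
        "docker", "run", "-d", "--name", "web", "-p", "8080:80", "nginx",
    ],
    "list running containers": ["docker", "ps"],
    "show container logs": ["docker", "logs", "web"],
    "stop the container": ["docker", "stop", "web"],
    "remove the container": ["docker", "rm", "web"],
    "remove the image": ["docker", "rmi", "nginx"],
}


def run(description):
    """Run one command if the docker CLI is installed; otherwise return None."""
    if shutil.which("docker") is None:
        return None
    return subprocess.run(COMMANDS[description], capture_output=True, text=True)


# Print the commands without executing anything.
for description, command in COMMANDS.items():
    print(f"{description:36} {' '.join(command)}")
```

Calling `run("list running containers")` would execute `docker ps` through the Docker client, which in turn sends the request to the daemon.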

Docker architecture and key components

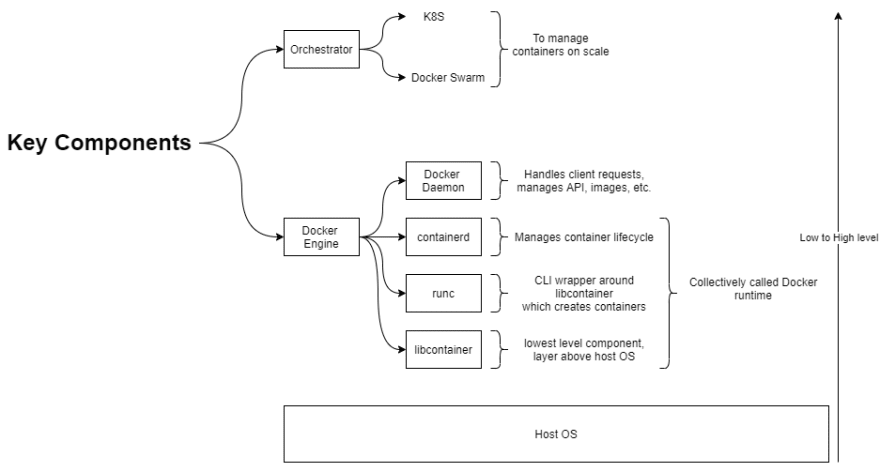

Docker has a layered architecture. Some of the key components, ordered from low level to high level, are listed below:

- runc / libcontainer — https://github.com/opencontainers/runc/tree/master/libcontainer

- containerd — https://github.com/containerd/containerd

- Docker daemon — https://docs.docker.com/engine/reference/commandline/dockerd/

- Orchestration — https://docs.docker.com/get-started/orchestration/

Here is a diagram which shows these components with a small description — what it is and what a component does.
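
As a rough mental model of the layering, the toy Python classes below show a run request being handed down from the daemon to containerd and then to runc. The class and method names are invented for this sketch. In the real system the client talks to the daemon over a REST API and the daemon talks to containerd over gRPC, none of which is modeled here.

```python
class Runc:
    """Lowest layer: creates the container process from a prepared bundle."""

    def create(self, bundle):
        return f"process for {bundle}"


class Containerd:
    """Supervises container lifecycle; delegates process creation to runc."""

    def __init__(self, runtime):
        self.runtime = runtime

    def start(self, image):
        bundle = f"bundle({image})"  # stands for a root filesystem plus runtime config
        return self.runtime.create(bundle)


class DockerDaemon:
    """Receives API requests from the client and hands work to containerd."""

    def __init__(self, containerd):
        self.containerd = containerd

    def handle_run(self, image):
        return self.containerd.start(image)


daemon = DockerDaemon(Containerd(Runc()))
result = daemon.handle_run("hello-world")
print(result)
```

Each layer only knows about the one directly below it, which is why the lower components can also be used on their own, without Docker.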

What happens when we run a container?

Feel free to check out my blog: https://www.vmtechblog.com/search/label/docker
