At my current company we work on several different projects. My job is to deploy and maintain the dev and staging environments for each project, some of which were already deployed. They used one AKS cluster per environment, which is more expensive than sharing a single cluster across all the environments. I found it a little tricky to find out how to do this on Google, so I'm going to explain it in this post, and I hope someone finds it useful.

First, create a new namespace for Ingress:

kubectl create ns ingress-nginx

We are going to use Helm to install the NGINX Ingress controller in our cluster, the Azure CLI (az) to interact with our Azure resources and, of course, kubectl.

Let's start by adding the ingress-nginx repo to Helm:

helm repo add ingress-nginx https://kubernetes.github.io/ingress-nginx

Then install the Ingress controller through Helm once for each environment you need:

helm install blog-dev ingress-nginx/ingress-nginx --set controller.ingressClass=blog-dev --namespace ingress-nginx --set controller.replicaCount=1 --set rbac.create=true
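Since the same command is repeated for every environment, it can be wrapped in a small loop. The following is a minimal sketch, assuming two hypothetical environment names (blog-dev and blog-staging); it only prints the commands so you can review them before running anything:

```shell
#!/bin/sh
# Hypothetical environment names; replace them with your own.
ENVIRONMENTS="blog-dev blog-staging"

# Print the helm command for one environment. The release name and the
# ingress class are both set to the environment name, as in the command above.
helm_install_cmd() {
  echo "helm install $1 ingress-nginx/ingress-nginx" \
    "--namespace ingress-nginx" \
    "--set controller.ingressClass=$1" \
    "--set controller.replicaCount=1" \
    "--set rbac.create=true"
}

for env_name in $ENVIRONMENTS; do
  helm_install_cmd "$env_name"
done
```

Once the printed commands look right, piping the script's output to sh runs them.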

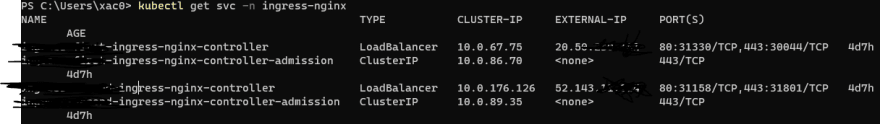

Now we can check the services to see whether all our controllers have their public IPs assigned.
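One way to spot controllers that are still waiting for an address is to filter the service listing. This is a small sketch, assuming the default column layout of kubectl get svc (NAME, TYPE, CLUSTER-IP, EXTERNAL-IP, ...), where an unassigned address shows up as <pending>:

```shell
#!/bin/sh
# Read `kubectl get svc` output on stdin and print the names of
# LoadBalancer services whose EXTERNAL-IP column is still <pending>.
pending_load_balancers() {
  awk 'NR > 1 && $2 == "LoadBalancer" && $4 == "<pending>" { print $1 }'
}

# Usage (requires access to the cluster):
#   kubectl get svc -n ingress-nginx | pending_load_balancers
```

An empty result means every LoadBalancer service in the namespace already has an address.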

Next, create a new namespace for each environment and deploy everything there as you normally would. If you are just testing, you could deploy a plain nginx. Now take a look at the Ingress routing file; let's assume something like this:

apiVersion: extensions/v1beta1
kind: Ingress
metadata:
  name: blog-dev-ingress
  annotations:
    nginx.ingress.kubernetes.io/rewrite-target: /
    kubernetes.io/ingress.class: blog-dev
spec:
  rules:
  - http:
      paths:
      - path: /
        backend:
          serviceName: nginx-service
          servicePort: 80

Check line 7 of the manifest: the kubernetes.io/ingress.class annotation points our Ingress at one of our controllers. If you remember, when we installed the controllers with Helm, --set controller.ingressClass=blog-dev set the class of that Ingress controller. Just point each deployment's Ingress at a different controller, and if everything went well you can access all your environments through the LoadBalancer IPs listed by kubectl get svc -n ingress-nginx
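Because the manifests differ only in the class and the names, they can be generated from one template. This is a minimal sketch, assuming each environment's Ingress is named <environment>-ingress and fronts a service called nginx-service, as in the example above (both are placeholders for your own names):

```shell
#!/bin/sh
# Print an Ingress manifest bound to the controller whose class is $1.
# Uses the same API version and annotation as the manifest in this post.
render_ingress() {
  cat <<EOF
apiVersion: extensions/v1beta1
kind: Ingress
metadata:
  name: $1-ingress
  annotations:
    nginx.ingress.kubernetes.io/rewrite-target: /
    kubernetes.io/ingress.class: $1
spec:
  rules:
  - http:
      paths:
      - path: /
        backend:
          serviceName: nginx-service
          servicePort: 80
EOF
}

# Usage (requires access to the cluster; the namespace name is an assumption):
#   render_ingress blog-dev | kubectl apply -n blog-dev -f -
```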

We now have all our environments accessible through IP, but usually you will need SSL, or you just want a URL to allow your team to access the environments easily.

Run the following command, replacing YOUR_IP_HERE with your LoadBalancer's IP (keep the single quotes around it):

az network public-ip list --query "[?ipAddress!=null]|[?contains(ipAddress, 'YOUR_IP_HERE')].[id]" --output tsv

Then pass the output of that command as OUTPUT to this one:

az network public-ip update --ids OUTPUT --dns-name blog-dev

This will associate the IP of your LoadBalancer with a hostname of the following form: blog-dev.westeurope.cloudapp.azure.com (with westeurope replaced by whichever region you are using).

Now edit your Ingress so that it only accepts requests for your new hostname:
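The two commands can be chained so the resource ID never has to be copied by hand. This is a sketch under the assumption that exactly one public IP matches the query; 20.0.0.1 is a made-up address and westeurope is only an example region:

```shell
#!/bin/sh
# Build the JMESPath query that selects the resource ID of a given public IP.
public_ip_query() {
  printf "[?ipAddress!=null]|[?contains(ipAddress, '%s')].[id]" "$1"
}

# Build the hostname Azure assigns to a DNS label ($1) in a region ($2).
azure_fqdn() {
  printf '%s.%s.cloudapp.azure.com' "$1" "$2"
}

# Usage (requires a logged-in Azure CLI):
#   ip_id="$(az network public-ip list --query "$(public_ip_query 20.0.0.1)" --output tsv)"
#   az network public-ip update --ids "$ip_id" --dns-name blog-dev
azure_fqdn blog-dev westeurope
echo
```

Note that contains() does a substring match, so an address such as 20.0.0.1 would also match 20.0.0.10; check that the list command returns a single ID before running the update.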

spec:
  rules:
  - host: blog-dev.westeurope.cloudapp.azure.com
    http:
      paths:
      - path: /
        backend:
          serviceName: nginx-service
          servicePort: 80

And we are done! In the same AKS cluster we have n environments, each with its own namespace, its own IP address and its own URL. The environments are separated at the namespace level, and it would be nice to add some RBAC roles on top, to keep the blog's developers from accessing, e.g., the camunda environments.

I hope this was useful to you; if so, share and like it!
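As a rough idea of what such an RBAC restriction could look like, the sketch below generates a RoleBinding that grants a group the built-in edit ClusterRole in one namespace only. The group name blog-developers is hypothetical, and how group names map to real users depends on how authentication is set up for your cluster:

```shell
#!/bin/sh
# Print a RoleBinding that grants group $2 the built-in "edit" ClusterRole
# inside namespace $1 only. The group gets no access to other namespaces
# unless another binding grants it.
render_rolebinding() {
  cat <<EOF
apiVersion: rbac.authorization.k8s.io/v1
kind: RoleBinding
metadata:
  name: $2-edit
  namespace: $1
subjects:
- kind: Group
  name: $2
  apiGroup: rbac.authorization.k8s.io
roleRef:
  kind: ClusterRole
  name: edit
  apiGroup: rbac.authorization.k8s.io
EOF
}

# Usage (requires access to the cluster):
#   render_rolebinding blog-dev blog-developers | kubectl apply -f -
```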

Top comments (2)

That was great, and it actually saved my day. I believe you can improve the process even further by setting up the static IPs beforehand (with proper names, etc.) instead of relying on the auto-generated ones, and then adding --set controller.service.loadBalancerIP="YOUR_IP" to the helm install command. Thanks!

Amazing work @xac0! I worked with @aboutroots, and it helped us a lot at the time.

It's worth noting that a few things have changed, so you might want to update this piece of art.

The kubernetes.io/ingress.class: blog-dev annotation is being deprecated. For an example of an ingress declaration compatible with the new API, have a look below (or in the docs).

The ingress controller has been installed as follows (here are the docs for additional settings).

Note that --set controller.ingressClass=blog-dev is not valid anymore, at least for my fresh installation.

The rest is pretty much fine. Many thanks for the good work!
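A rough sketch of the newer form described in this comment, assuming the networking.k8s.io/v1 Ingress API, where the controller is selected with spec.ingressClassName and each path needs a pathType. The Ingress and service names are carried over from the post, and the Helm values in the trailing comment are assumptions to check against the chart documentation for your version:

```shell
#!/bin/sh
# Print an Ingress in the networking.k8s.io/v1 form, selecting the controller
# through spec.ingressClassName instead of the annotation.
render_ingress_v1() {
  cat <<EOF
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: $1-ingress
  annotations:
    nginx.ingress.kubernetes.io/rewrite-target: /
spec:
  ingressClassName: $1
  rules:
  - http:
      paths:
      - path: /
        pathType: Prefix
        backend:
          service:
            name: nginx-service
            port:
              number: 80
EOF
}

# Assumed chart values for giving each controller its own IngressClass:
#   helm install blog-dev ingress-nginx/ingress-nginx --namespace ingress-nginx \
#     --set controller.ingressClassResource.name=blog-dev \
#     --set controller.ingressClassResource.controllerValue=k8s.io/blog-dev
```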