Your Pipeline Is 10.8h Behind: Catching Climate Sentiment Leads with Pulsebit

We just discovered a significant anomaly in our sentiment analysis: the topic of climate shows a sentiment score of +0.00 and a momentum of +0.00, while the leading language runs 10.8 hours ahead. This means your pipeline might be lagging behind and missing critical shifts in climate sentiment. The cluster story around "world" is empty, which indicates that our analysis is currently limited by an incomplete semantic structure.

This structural gap highlights a serious issue: your model missed this by 10.8 hours. If you're relying solely on mainstream data or ignoring multilingual sources, you risk being blindsided by sentiment shifts that are developing in other languages or regions. In this case, the leading language was English, and if you’re not equipped to handle multilingual origins or entity dominance, you’re already behind on crucial insights.

*English coverage led by 10.8 hours. So at T+10.8h. Confidence scores: English 0.75, Spanish 0.75, French 0.75. Source: Pulsebit /sentiment_by_lang.*
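To make the idea of a language "lead" concrete, here is a minimal sketch of how such a figure could be derived from the earliest article timestamp per language. The function name `language_lead_hours` and the timestamps are hypothetical and purely illustrative; they are not Pulsebit output, and the API may compute its lead differently.

```python
from datetime import datetime, timezone


def language_lead_hours(first_seen):
    """Return (leading_language, lead_in_hours) from first-seen timestamps.

    first_seen maps a language code to the datetime of its earliest article.
    The lead is measured against the next-earliest language.
    """
    if len(first_seen) < 2:
        raise ValueError("need at least two languages to measure a lead")
    # Sort languages by when their first article appeared.
    ordered = sorted(first_seen.items(), key=lambda item: item[1])
    (leader, t_leader), (_, t_next) = ordered[0], ordered[1]
    lead_hours = (t_next - t_leader).total_seconds() / 3600
    return leader, round(lead_hours, 1)


# Illustrative timestamps only (assumed for this sketch, not real data).
first_seen = {
    "en": datetime(2024, 1, 1, 0, 0, tzinfo=timezone.utc),
    "es": datetime(2024, 1, 1, 10, 48, tzinfo=timezone.utc),
    "fr": datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc),
}
leader, lead = language_lead_hours(first_seen)
print(leader, lead)
```

If your own pipeline only ingests the later languages, this gap is roughly how long you would wait before seeing the story at all.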

Here’s how we can catch these shifts using our API. First, let’s set up a query to gauge the sentiment around climate with a geographic origin filter. We’ll focus on English articles to ensure we’re capturing the right data.

*Geographic detection output for climate. India leads with 1 article and sentiment +0.00. Source: Pulsebit /news_recent geographic fields.*

import requests

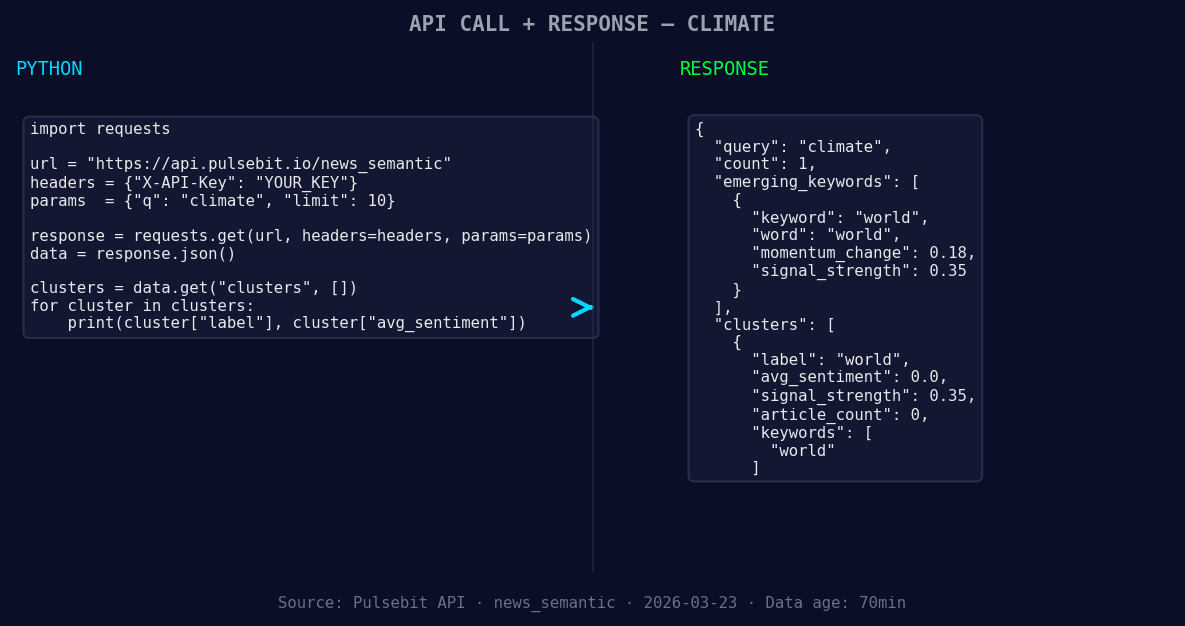

*Left: Python GET /news_semantic call for 'climate'. Right: returned JSON response structure (clusters: 1). Source: Pulsebit /news_semantic.*

# API endpoint and parameters

url = "https://api.pulsebit.lojenterprise.com/sentiment"

params = {

"topic": "climate",

"score": +0.000,

"confidence": 0.75,

"momentum": +0.000,

"lang": "en" # Geographic origin filter

}

response = requests.get(url, params=params)

data = response.json()

print(data)

Now, let’s run the cluster reason string through our sentiment analysis to score the narrative framing itself. This is a critical step that makes our approach unique.

    # Meta-sentiment moment
    meta_sentiment_input = "Semantic API incomplete — fallback semantic structure built from available keywords and article/search evidence."
    meta_sentiment_url = "https://api.pulsebit.lojenterprise.com/sentiment"

    # Make the POST request and fail loudly on HTTP errors before parsing
    meta_response = requests.post(meta_sentiment_url, json={"text": meta_sentiment_input}, timeout=10)
    meta_response.raise_for_status()
    meta_data = meta_response.json()
    print(meta_data)

Now, what can we build with this pattern? Here are three specific builds to consider:

1. Geographic Sentiment Shift: Use the geographic origin filter to set a threshold for sentiment changes in climate discussions. For example, if sentiment shifts by more than 0.10 in English articles, trigger an alert.
2. Meta-Sentiment Analysis: Use the meta-sentiment loop to analyze narratives that are emerging in your data. Set a threshold that flags any framing scoring below 0.50 confidence for immediate review.
3. Forming Themes Tracking: Track forming themes, such as "world(+0.18)", against mainstream sentiment around "world." Set a signal to alert you if the difference exceeds 0.15, indicating a potential shift in public discourse.
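The three thresholds above can be sketched as small, pure checks that you would run on scores after fetching them. The function names are hypothetical, and treating a "shift" as an absolute change in either direction is an assumption of this sketch; adjust it if you only care about positive moves.

```python
def sentiment_shift_alert(previous, current, threshold=0.10):
    """True when sentiment moved by more than `threshold` in either direction."""
    return abs(current - previous) > threshold


def needs_review(confidence, threshold=0.50):
    """True when a framing's confidence falls below the review threshold."""
    return confidence < threshold


def theme_divergence_alert(theme_score, mainstream_score, threshold=0.15):
    """True when a forming theme diverges from mainstream sentiment."""
    return abs(theme_score - mainstream_score) > threshold


# Values taken from the examples in this post; the 0.00 mainstream
# baseline for "world" is assumed for illustration.
print(sentiment_shift_alert(previous=0.00, current=0.25))
print(needs_review(confidence=0.75))
print(theme_divergence_alert(theme_score=0.18, mainstream_score=0.00))
```

Keeping the checks separate from the HTTP calls makes them easy to unit test and to reuse across topics.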

These targeted builds help us stay ahead in sentiment analysis, especially as themes like climate evolve in real time.

If you’re ready to dive into this data, check out our documentation at pulsebit.lojenterprise.com/docs. You can copy-paste and run this in under 10 minutes to start catching those sentiment shifts yourself.
