Your Pipeline Is 24.8h Behind: Catching Law Sentiment Leads with Pulsebit

On April 25, 2026, we discovered a significant anomaly in sentiment analysis: a sentiment score of -0.70 and a momentum of +0.00 surrounding the topic of law. This data reveals an emerging narrative that is being overshadowed by dominant entities within the cluster. While other themes like "singh," "congress," and "leader" are leading the conversation, the law sentiment is trailing behind by 24.8 hours. If you’re not accounting for this disparity, your model is effectively missing critical insights.

The Problem

This structural gap highlights a major flaw in any pipeline that doesn’t effectively handle multilingual origins or entity dominance. Your model missed this by 24.8 hours, leaving you blind to emerging themes in law. The leading language in this case is English, which means if you’re not filtering for it, you’re letting essential data slip through the cracks. Ignoring these nuances can lead to misguided decisions based on incomplete narratives.

English coverage led by 24.8 hours. German at T+24.8h. Confidence scores: English 0.85, Spanish 0.85, French 0.85 Source: Pulsebit /sentiment_by_lang.

The Code

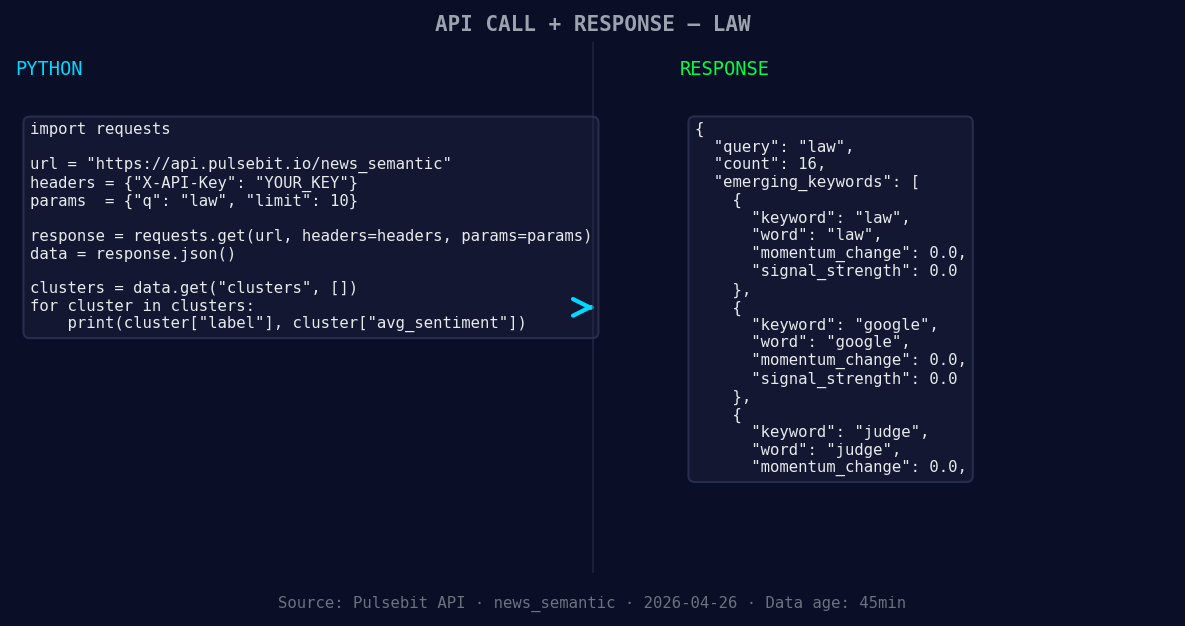

To catch this anomaly, here's a Python snippet that will help you pull the necessary data.

First, we’ll filter by the geographic origin using the language parameter:

Geographic detection output for law. India leads with 13 articles and sentiment -0.10. Source: Pulsebit /news_recent geographic fields.

import requests

*Left: Python GET /news_semantic call for 'law'. Right: returned JSON response structure (clusters: 3). Source: Pulsebit /news_semantic.*

url = 'https://api.pulsebit.lojenterprise.com/v1/sentiment'

params = {

'topic': 'law',

'lang': 'en'

}

response = requests.get(url, params=params)

data = response.json()

print(data)

Next, we’ll run the cluster reason string through our sentiment scoring API to evaluate the narrative framing:

meta_sentiment_url = 'https://api.pulsebit.lojenterprise.com/v1/sentiment'

cluster_reason = "Clustered by shared themes: singh, congress, leader, threat, message."

payload = {

'text': cluster_reason,

'confidence': 0.85

}

meta_response = requests.post(meta_sentiment_url, json=payload)

meta_data = meta_response.json()

print(meta_data)

This allows us to not only capture the sentiment around the law but also assess how the framing of related themes might skew public perception.

Three Builds Tonight

Here are three specific builds you can create with this newfound insight:

- Geo-Filtered Alert System: Set a threshold for sentiment scores below -0.50 for the topic "law". Trigger alerts only for English sources. This will help you catch negative sentiment early.

if data['sentiment_score'] < -0.50 and data['lang'] == 'en':

send_alert("Negative sentiment detected in law.")

Meta-Sentiment Dashboard: Use the meta-sentiment loop to visualize how narratives around "singh", "congress", and "leader" impact the public's perception of the law. Set up a visualization that updates in real time.

Momentum Tracking: Implement a routine that tracks momentum changes in law-related topics. Use our API to compare the sentiment of law with mainstream themes like "singh" and "congress".

if data['momentum_24h'] == 0.00:

log_event("No momentum change detected for law.")

Get Started

For a deeper dive into these capabilities, check out our documentation at pulsebit.lojenterprise.com/docs. You can copy-paste and run these snippets in under 10 minutes to start catching these critical insights!

Top comments (0)