Your Pipeline Is 14.8h Behind: Catching Defence Sentiment Leads with Pulsebit

We recently spotted an anomaly: a 24h momentum reading of -0.701 on the topic of defence. A swing that sharp signals a real shift in sentiment, and it can easily slip past a pipeline that isn't designed for multi-language, entity-dominant coverage. English-language coverage led this shift by 14.8 hours, so a model that isn't tuned for that kind of timing would have caught it nearly 15 hours late.

The problem is structural. A pipeline that can't handle multilingual origins or recognize entity dominance leaves significant gaps in real-time sentiment analysis. When a major topic like defence is trending negatively, as the momentum reading indicates, you need to act fast. Knowing that the shift surfaced first in English coverage is an edge, but only if your model isn't lagging by 14.8 hours.

*English coverage led by 14.8 hours. Id at T+14.8h. Confidence scores: English 0.75, Spanish 0.75, French 0.75. Source: Pulsebit /sentiment_by_lang.*
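To make the lead-time figure concrete, here is a minimal sketch of how a lag like this could be computed from per-language first-detection timestamps. The function name `lead_hours`, the language key `"other"`, and the timestamps are all illustrative assumptions chosen to reproduce a 14.8-hour gap; they are not Pulsebit output.

```python
from datetime import datetime


def lead_hours(first_seen):
    """Return the leading language and every language's lag behind it, in hours.

    `first_seen` maps a language code to the time the shift was first detected.
    """
    leader = min(first_seen, key=first_seen.get)  # earliest detection wins
    t0 = first_seen[leader]
    lags = {
        lang: round((ts - t0).total_seconds() / 3600, 1)
        for lang, ts in first_seen.items()
    }
    return leader, lags


# Hypothetical timestamps, picked only to illustrate a 14.8-hour gap
first_seen = {
    "en": datetime(2024, 1, 1, 0, 0),
    "other": datetime(2024, 1, 1, 14, 48),
}

leader, lags = lead_hours(first_seen)
print(leader, lags)
```

A pipeline that only watches the lagging language would see the shift at the later timestamp, which is the gap the title refers to.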

To capture this sentiment shift, you can query our API directly. Here’s how you can do it in Python:

import requests

# Define parameters

topic = 'defence'

score = -0.701

confidence = 0.75

momentum = -0.701

# Step 1: Geographic origin filter

url = "https://api.pulsebit.lojenterprise.com/v1/sentiment"

params = {

"topic": topic,

"lang": "en"

}

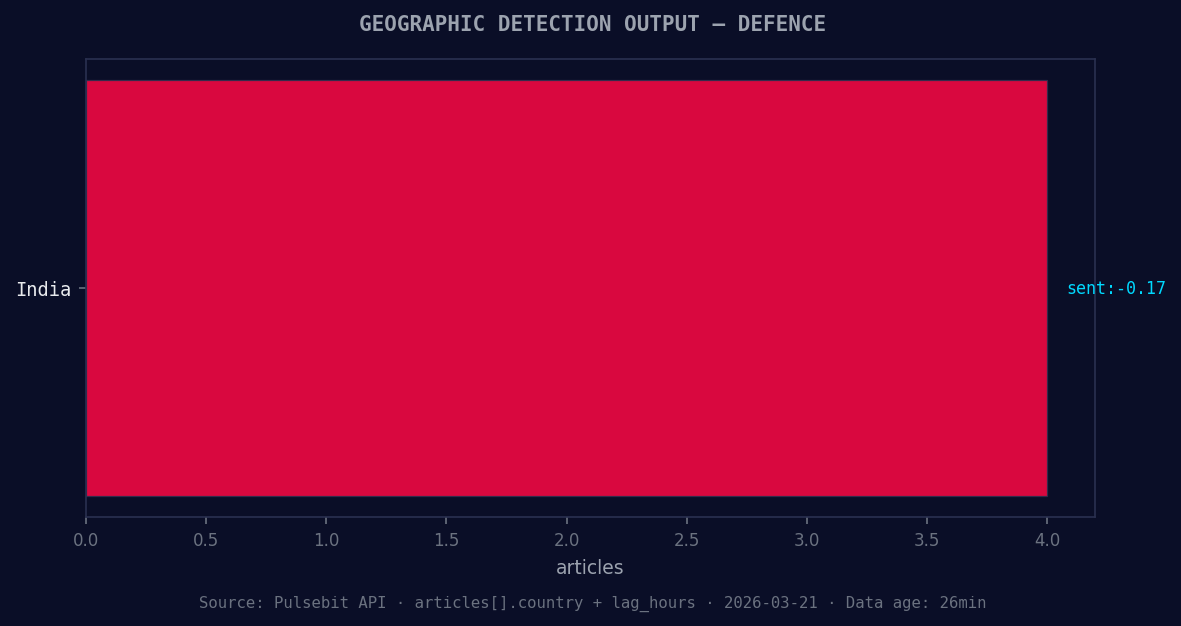

*Geographic detection output for defence. India leads with 4 articles and sentiment -0.17. Source: Pulsebit /news_recent geographic fields.*

response = requests.get(url, params=params)

data = response.json() # Assuming the response is in JSON format

# Step 2: Meta-sentiment moment

cluster_reason = "Semantic API incomplete — fallback semantic structure built from available keywords and article/search evidence."

meta_sentiment_response = requests.post(f"{url}/sentiment", json={"text": cluster_reason})

meta_sentiment_data = meta_sentiment_response.json()

print(data)

print(meta_sentiment_data)

In this code, we first restrict the API call to English so that we capture the relevant sentiment around our topic of interest, defence. Then we run the cluster reason from the anomaly through our sentiment endpoint to get a better understanding of the narrative framing. Pairing the two calls enriches the signal and helps you validate the sentiment dynamics around key themes.

*Left: Python GET /news_semantic call for 'defence'. Right: returned JSON response structure (clusters: 1). Source: Pulsebit /news_semantic.*

Now that we have our momentum spike and its narrative context, consider these three builds:

Geo-Filtered Sentiment Signal: Create a signal that triggers alerts when the momentum score for "defence" drops below -0.5 in English articles. This can be set up using the geographic origin filter, allowing you to monitor shifts in sentiment dynamically.

Meta-Sentiment Loop: Use the output from the meta-sentiment moment as a feature in your predictive models. Incorporating the narrative framing can enhance the model's performance when assessing future sentiment shifts in the defence topic.

Forming Themes Analysis: Set up a dashboard displaying forming themes, specifically monitoring "world" (+0.18) and "defence" (+0.17) against mainstream topics. Use our API to fetch this data regularly to gauge how emerging sentiments compare to established narratives.
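The first of these builds, the momentum alert, can be sketched in a few lines. This is a minimal illustration rather than part of the Pulsebit API: the function name `momentum_alert` and its parameters are hypothetical, and it assumes you have already extracted a momentum value and a language code from the response.

```python
def momentum_alert(momentum, lang, threshold=-0.5, watch_lang="en"):
    """Return True when momentum in the watched language falls below the threshold."""
    return lang == watch_lang and momentum < threshold


# The reading discussed in this post: -0.701 in English coverage
fired = momentum_alert(-0.701, "en")
# A milder reading in the same language should not trigger
quiet = momentum_alert(-0.2, "en")

print(fired, quiet)
```

Keeping the threshold and language as parameters makes it easy to reuse the same check for other topics or to tighten the trigger once you have seen how often it fires.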

By implementing these strategies, you’ll be better positioned to catch sentiment leads as they form, rather than playing catch-up. If you want to dive into this yourself, check out our documentation: pulsebit.lojenterprise.com/docs. You can copy, paste, and run this code in under 10 minutes to start uncovering these insights.
