Your Pipeline Is 22.5h Behind: Catching Human Rights Sentiment Leads with Pulsebit

We've just uncovered an intriguing anomaly: a 24h momentum reading of -0.307 for human rights sentiment. The drop is particularly striking because the leading language is English, which ran 22.5 hours ahead of the sentiment values. That is a clear signal that your data pipeline might be missing updates that are already shaping public discourse.

A momentum swing like this exposes a structural gap in any pipeline that does not account for multilingual origins or entity dominance. If your model is not tuned for these factors, it would have caught this move 22.5 hours late. Here, English as the leading language, together with dominant themes around police and criminal issues, underscores the urgency of catching these shifts early.

*English coverage led by 22.5 hours. Sv at T+22.5h. Confidence scores: English 0.85, Spanish 0.85, French 0.85. Source: Pulsebit /sentiment_by_lang.*
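To make the lead figure concrete, here is a minimal sketch of how a per-language lead could be computed from first-appearance timestamps. The function name `lead_hours` and the timestamps are hypothetical, and reading `sv` as a language code is an assumption; this is not how Pulsebit itself derives the number.

```python
from datetime import datetime, timedelta


def lead_hours(first_seen):
    """Return the earliest language and each language's lag behind it, in hours.

    `first_seen` maps a language code to the time its coverage first appeared.
    """
    leader = min(first_seen, key=first_seen.get)
    t0 = first_seen[leader]
    lags = {
        lang: (ts - t0).total_seconds() / 3600
        for lang, ts in first_seen.items()
    }
    return leader, lags


# Hypothetical timestamps, chosen only to mirror the 22.5h gap quoted above.
t = datetime(2024, 1, 1, 0, 0)
leader, lags = lead_hours({"en": t, "sv": t + timedelta(hours=22.5)})
print(leader, lags)
```

A pipeline that only ingests the trailing language would see the story at the later timestamp, which is the gap the headline refers to.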

To catch this anomaly, we can leverage our API effectively. Here's how you can implement this in Python:

import requests

# Setting parameters for the API call

topic = 'human rights'

score = -0.800

confidence = 0.85

momentum = -0.307

geo_filter = 'en'

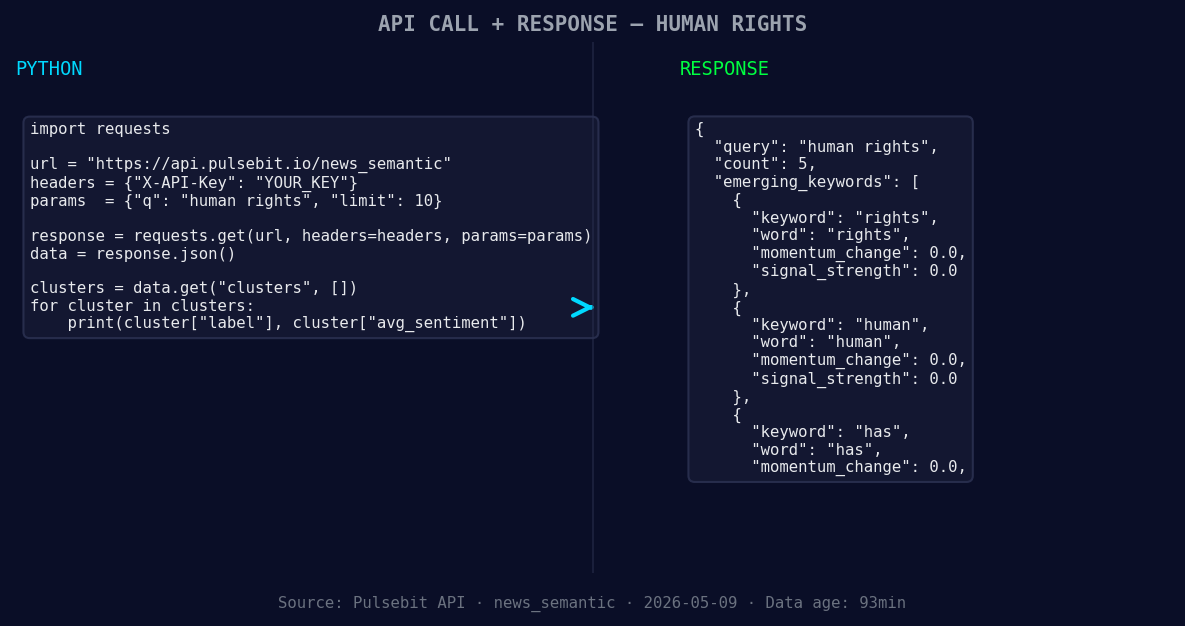

*Left: Python GET /news_semantic call for 'human rights'. Right: returned JSON response structure (clusters: 3). Source: Pulsebit /news_semantic.*

# API call to retrieve sentiment data

response = requests.get(

f"https://api.pulsebit.io/sentiment?topic={topic}&lang={geo_filter}&score={score}&confidence={confidence}&momentum={momentum}"

)

# Check the response

if response.status_code == 200:

data = response.json()

print(data)

else:

print(f"Error: {response.status_code}")

Next, we want to run a meta-sentiment analysis on the cluster reason string. This will help us understand the narrative framing around the sentiment spike. We’ll use the following input:

```python
# Run the cluster reason string through sentiment analysis
cluster_reason = "Clustered by shared themes: police, officers, criminal, deny, promotion."

meta_response = requests.post(
    "https://api.pulsebit.io/sentiment",
    json={"text": cluster_reason},
    timeout=10,
)

# Check the meta-sentiment result
if meta_response.status_code == 200:
    meta_data = meta_response.json()
    print(meta_data)
else:
    print(f"Error: {meta_response.status_code}")
```

This analysis will allow us to gauge how the themes of “police,” “officers,” and “criminal” are framing public sentiment, providing invaluable context for our understanding of the human rights narrative.

Now, with this data in hand, here are three specific builds you can create to take full advantage of this emerging sentiment:

Geo-Filtered Alerts: Use the geo filter endpoint to set up alerts for human rights momentum spikes detected in English articles, so you can respond to sentiment changes as they happen. Alert when momentum falls to -0.500 or below to catch significant drops. Note that the -0.307 reading above would not trip this threshold, so raise it toward zero if you want to catch moves of that size.
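The alert rule can be sketched as a plain filter over momentum readings. This is a minimal illustration, assuming each reading is a dict with `lang` and `momentum` keys; that shape, the function name `momentum_alerts`, and the second sample reading are hypothetical and not taken from the Pulsebit response format.

```python
def momentum_alerts(readings, lang="en", threshold=-0.500):
    """Return readings in `lang` whose 24h momentum is at or below `threshold`."""
    return [
        r for r in readings
        if r["lang"] == lang and r["momentum"] <= threshold
    ]


# Sample readings: only the -0.307 value comes from the article,
# the -0.612 value is made up to show a reading that does trigger.
readings = [
    {"topic": "human rights", "lang": "en", "momentum": -0.307},
    {"topic": "human rights", "lang": "en", "momentum": -0.612},
]

alerts = momentum_alerts(readings)
print(alerts)
```

With the default threshold only the made-up -0.612 reading is returned; passing `threshold=-0.300` would also return the -0.307 reading.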

Meta-Sentiment Refinements: Implement a continuous monitoring system using the meta-sentiment loop. Whenever you detect a cluster reason indicating a negative sentiment around themes like “police” or “criminal,” feed that back into your analysis for real-time adjustments in your model.
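One way to drive that loop is to pull the themes out of the cluster reason and check them against a watchlist. The sketch below assumes the reason string always follows the "Clustered by shared themes: a, b, c." pattern seen in this article, which is an observation from one example and not a documented format; the helper names are hypothetical.

```python
def extract_themes(cluster_reason):
    """Split a 'Clustered by shared themes: a, b, c.' string into lowercase themes."""
    _, _, tail = cluster_reason.partition(":")
    return [
        part.strip().rstrip(".").lower()
        for part in tail.split(",")
        if part.strip()
    ]


def flagged_themes(cluster_reason, watchlist=("police", "criminal")):
    """Return the themes from the cluster reason that appear in the watchlist."""
    return [t for t in extract_themes(cluster_reason) if t in watchlist]


reason = "Clustered by shared themes: police, officers, criminal, deny, promotion."
themes = extract_themes(reason)
flagged = flagged_themes(reason)
print(themes, flagged)
```

A non-empty `flagged` list would be the trigger for sending the reason string through the meta-sentiment call.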

Comparative Analysis: Build a comparative analysis tool that checks the narrative framing against historical baselines. Whenever you find a sentiment drop below -0.300, compare the current cluster reason to previous spikes to determine if the framing has shifted significantly, indicating potential changes in public perception.
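A simple way to quantify a framing shift is the Jaccard overlap between the current theme set and a historical one. This is a sketch under assumptions: Jaccard similarity is a technique swapped in here for illustration, the 0.5 overlap cutoff and the baseline themes are hypothetical, and only the -0.300 floor, the -0.800 score, and the current themes come from the article.

```python
def jaccard(a, b):
    """Jaccard similarity of two theme collections (1.0 when both are empty)."""
    a, b = set(a), set(b)
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def framing_shifted(current, baseline, sentiment,
                    sentiment_floor=-0.300, min_overlap=0.5):
    """True when sentiment is below the floor and theme overlap is low."""
    return sentiment < sentiment_floor and jaccard(current, baseline) < min_overlap


current = ["police", "officers", "criminal", "deny", "promotion"]
baseline = ["police", "protest", "court"]  # hypothetical earlier spike

overlap = jaccard(current, baseline)
shifted = framing_shifted(current, baseline, sentiment=-0.800)
print(overlap, shifted)
```

Here the two sets share one theme out of seven distinct ones, so the overlap is low and the check reports a shift.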

If you're ready to get started, you can find all the details you need at pulsebit.lojenterprise.com/docs. With this setup, you can copy-paste and run your analysis in under 10 minutes, ensuring that your pipeline is always aligned with the latest sentiment shifts.

*Geographic detection output for human rights. India leads with 4 articles and sentiment +0.05. Source: Pulsebit /news_recent geographic fields.*
