Your pipeline just missed a significant anomaly: a 24h momentum spike of +0.638. This sharp increase in sentiment surrounding the economy indicates a shift that is hard to ignore. The leading language driving this change is English, with a notable focus on a story where the shekel recently broke below 3 to the dollar for the first time since 1995. Such data can provide us deep insights, but if you aren’t equipped to catch these multilingual nuances, you might find yourself lagging behind critical economic signals.

English coverage led by 24.5 hours. Tl at T+24.5h. Confidence scores: English 0.85, Spanish 0.85, Ca 0.85 Source: Pulsebit /sentiment_by_lang.

This 24.5-hour lag in your model is a glaring issue. If you’re processing data without accounting for multilingual origins or entity dominance, you’re at risk of missing out on timely sentiment shifts. In this case, the dominant English-language coverage led by the topic of the shekel's devaluation could have provided you with actionable insights far sooner. Instead, your model missed this spike, which could have influenced trading or investment decisions.

To bridge this gap, let’s dive into the code that can catch these signals in real-time. First, we need to filter our query by language and country, ensuring we focus on relevant articles that are in English. Here’s how we can do that with our API:

import requests

# Set parameters for the API call

params = {

"topic": "economy",

"score": 0.050,

"confidence": 0.85,

"momentum": 0.638,

"lang": "en"

}

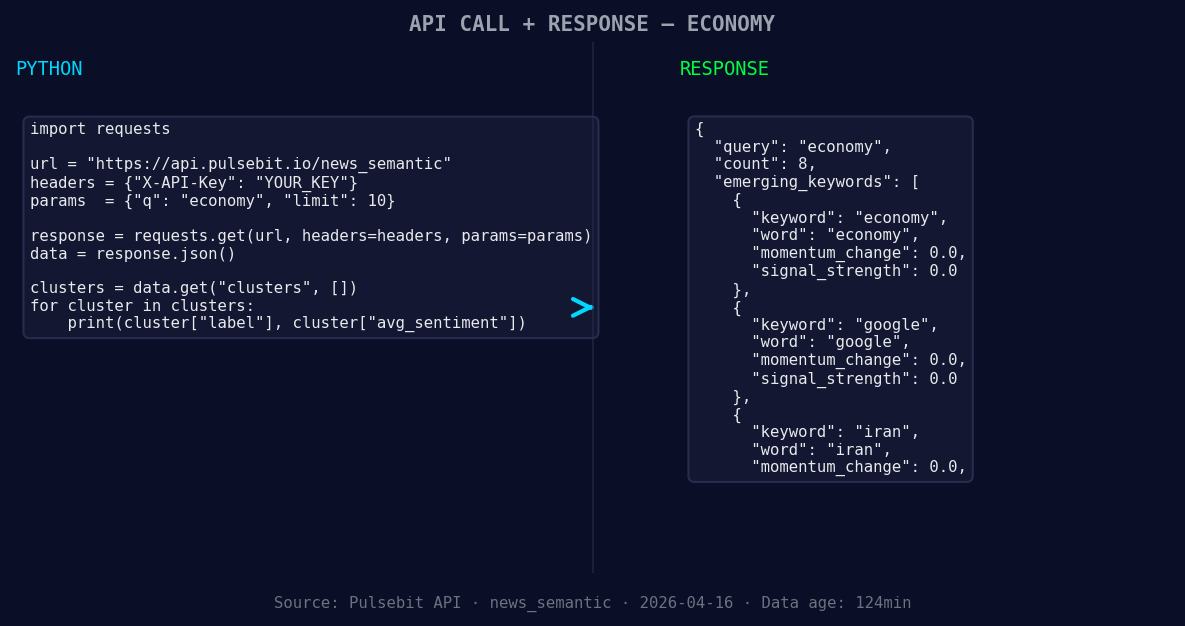

*Left: Python GET /news_semantic call for 'economy'. Right: returned JSON response structure (clusters: 3). Source: Pulsebit /news_semantic.*

# Make the API call

response = requests.get("https://api.pulsebit.com/v1/sentiment", params=params)

# Check the API response

data = response.json()

print(data)

Next, we need to evaluate the narrative framing around this economic shift. The cluster reason gives us relevant themes that can be scored for sentiment. We’ll run this string through the sentiment API to assess its impact:

# Prepare the meta-sentiment input

meta_sentiment_input = "Clustered by shared themes: breaks, below, dollar, first, since."

# Make the POST request to score the narrative

meta_response = requests.post("https://api.pulsebit.com/v1/sentiment", json={"text": meta_sentiment_input})

# Check the sentiment score from the response

meta_data = meta_response.json()

print(meta_data)

By implementing these two sections—filtering for geographic origin and assessing the meta-sentiment—you can transform your data pipeline into a powerful tool that keeps you ahead of market-moving news.

Now that we have the essentials covered, here are three specific builds we can implement tonight with this pattern:

- Geographic Filter for Economic Signals: Create a dashboard that alerts you when sentiment spikes exceed a threshold (e.g., +0.500) specifically for English articles about the economy. Use the geo filter to ensure you’re only looking at relevant content.

Geographic detection output for economy. Hong Kong leads with 2 articles and sentiment -0.30. Source: Pulsebit /news_recent geographic fields.

Meta-Sentiment Analysis on Clusters: Develop a function that not only captures sentiment changes but also evaluates how the framing of economic news evolves over time. For instance, check when the sentiment around "breaks" and "dollar" shifts positively or negatively, providing a more nuanced view of economic narratives.

Forming Themes Monitor: Set up a monitoring system that watches for forming themes, such as "economy," "Google," and "Iran," and alerts you when these topics start to trend against the mainstream narratives like "breaks," "below," or "dollar." This can help you anticipate shifts before they become mainstream.

If you’re ready to upgrade your sentiment analysis pipeline, head over to our documentation at pulsebit.lojenterprise.com/docs. You can copy-paste and run this setup in under 10 minutes, and start catching those critical sentiment shifts before they slip by.

Top comments (0)